From workflows to pipelines

Designing Machine Learning Workflows in Python

Dr. Chris Anagnostopoulos

Honorary Associate Professor

Revisiting our workflow

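from sklearn.ensemble import RandomForestClassifier as rf
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.metrics import accuracy_score
from sklearn.model_selection import GridSearchCV, train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y)

# Tune max_depth with a cross-validated grid search
grid_search = GridSearchCV(rf(), param_grid={'max_depth': [2, 5, 10]})
grid_search.fit(X_train, y_train)
depth = grid_search.best_params_['max_depth']

# Keep the 3 highest-scoring features, then fit the tuned classifier on them
vt = SelectKBest(f_classif, k=3).fit(X_train, y_train)
clf = rf(max_depth=depth).fit(vt.transform(X_train), y_train)

accuracy_score(y_test, clf.predict(vt.transform(X_test)))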

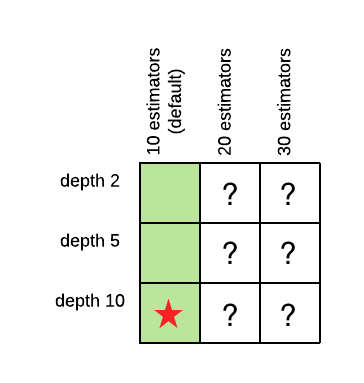

The power of grid search

Optimize max_depth:

pg = {'max_depth': [2, 5, 10]}
gs = GridSearchCV(rf(), param_grid=pg)
gs.fit(X_train, y_train)
depth = gs.best_params_['max_depth']

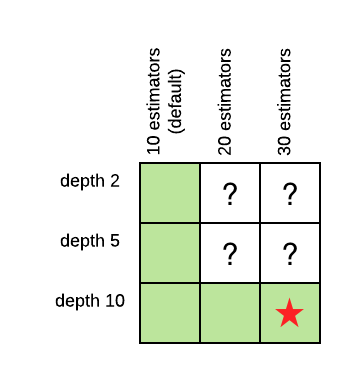

The power of grid search

Then optimize n_estimators:

pg = {'n_estimators': [10, 20, 30]}
gs = GridSearchCV(rf(max_depth=depth), param_grid=pg)
gs.fit(X_train, y_train)
n_est = gs.best_params_['n_estimators']

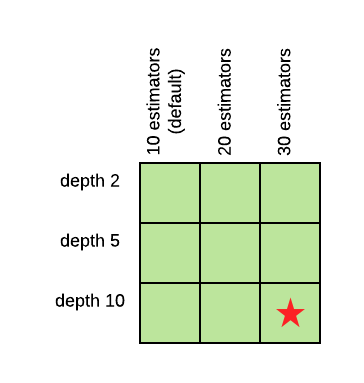

The power of grid search

Jointly optimize max_depth and n_estimators:

pg = {
    'max_depth': [2, 5, 10],
    'n_estimators': [10, 20, 30]
}
gs = GridSearchCV(rf(), param_grid=pg)
gs.fit(X_train, y_train)
print(gs.best_params_)

{'max_depth': 10, 'n_estimators': 20}

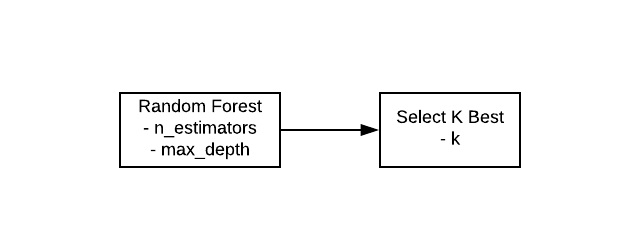

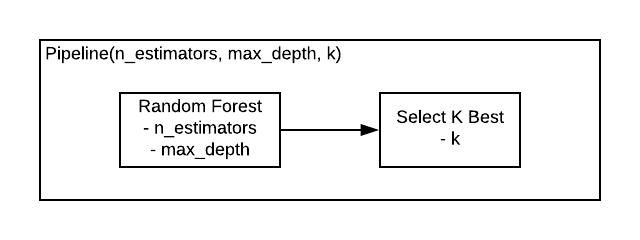

Pipelines

from sklearn.ensemble import RandomForestClassifier
from sklearn.pipeline import Pipeline

# Each step is a (name, estimator) pair; the names prefix the grid parameters
pipe = Pipeline([
    ('feature_selection', SelectKBest(f_classif)),
    ('classifier', RandomForestClassifier())
])
params = dict(
    feature_selection__k=[2, 3, 4],
    classifier__max_depth=[5, 10, 20]
)
grid_search = GridSearchCV(pipe, param_grid=params)
gs = grid_search.fit(X_train, y_train).best_params_

{'classifier__max_depth': 20, 'feature_selection__k': 4}
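As a rough end-to-end sketch of the pipeline search above: the synthetic data from `make_classification`, the fixed `random_state` values, `n_estimators=20` and `cv=3` are assumptions chosen to keep the run small and repeatable, not settings from the course. The winning parameters will depend on the data, so no expected output is shown.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline

# Assumption: synthetic data stands in for the course dataset.
X, y = make_classification(n_samples=300, n_features=8,
                           n_informative=4, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# Feature selection and classifier are tuned together as one estimator.
pipe = Pipeline([
    ('feature_selection', SelectKBest(f_classif)),
    ('classifier', RandomForestClassifier(n_estimators=20, random_state=0)),
])

# Parameter names follow the pattern <step name>__<parameter name>.
params = {
    'feature_selection__k': [2, 3, 4],
    'classifier__max_depth': [5, 10, 20],
}

grid_search = GridSearchCV(pipe, param_grid=params, cv=3)
grid_search.fit(X_train, y_train)

best_params = grid_search.best_params_
# score() applies the refitted best pipeline, including feature selection.
test_accuracy = grid_search.score(X_test, y_test)
print(best_params, test_accuracy)
```

Because the selector sits inside the pipeline, it is refit on each training fold, so the held-out fold never influences which features are kept.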

Customizing your pipeline

from sklearn.metrics import roc_auc_score, make_scorer

auc_scorer = make_scorer(roc_auc_score)
grid_search = GridSearchCV(pipe, param_grid=params, scoring=auc_scorer)
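To see what such a scorer computes, it can be called directly as `scorer(estimator, X, y)`. The sketch below assumes synthetic binary data and a small random forest, neither of which comes from the slides. With its default settings, `make_scorer` feeds the estimator's hard class predictions into the metric, so this AUC is based on labels rather than predicted probabilities.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import make_scorer, roc_auc_score
from sklearn.model_selection import train_test_split

# Assumption: synthetic binary data stands in for the course dataset.
X, y = make_classification(n_samples=300, n_features=8,
                           n_informative=4, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

clf = RandomForestClassifier(n_estimators=20, max_depth=5, random_state=0)
clf.fit(X_train, y_train)

# A scorer wraps a metric so it can be called as scorer(estimator, X, y).
auc_scorer = make_scorer(roc_auc_score)
auc_from_scorer = auc_scorer(clf, X_test, y_test)

# Equivalent manual computation: metric(y_true, estimator.predict(X)).
auc_by_hand = roc_auc_score(y_test, clf.predict(X_test))
print(auc_from_scorer, auc_by_hand)
```

If a probability-based AUC is wanted instead, passing the built-in string `scoring='roc_auc'` to `GridSearchCV` is one option.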

Don't overdo it

# Prefixes must match the pipeline step names ('feature_selection', 'classifier')
params = dict(
    feature_selection__k=[2, 3, 4],
    classifier__max_depth=[5, 10, 20],
    classifier__n_estimators=[10, 20, 30]
)

grid_search = GridSearchCV(pipe, params, cv=10)

3 x 3 x 3 x 10 = 270 classifier fits!
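The arithmetic can be checked before launching a search. This is a small sketch using only the standard library; the variable names `n_candidates` and `n_fits` are illustrative, not part of scikit-learn.

```python
from math import prod

params = {
    'feature_selection__k': [2, 3, 4],
    'classifier__max_depth': [5, 10, 20],
    'classifier__n_estimators': [10, 20, 30],
}
cv = 10

# Every combination of values is one candidate pipeline.
n_candidates = prod(len(values) for values in params.values())

# Each candidate is fitted once per cross-validation fold.
n_fits = n_candidates * cv
print(n_candidates, n_fits)  # 27 270

# With GridSearchCV's default refit=True, the best candidate is then
# fitted one more time on the full training set.
```

Adding a fourth parameter with three values would triple the count, which is why the grid should stay small.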

Supercharged workflows