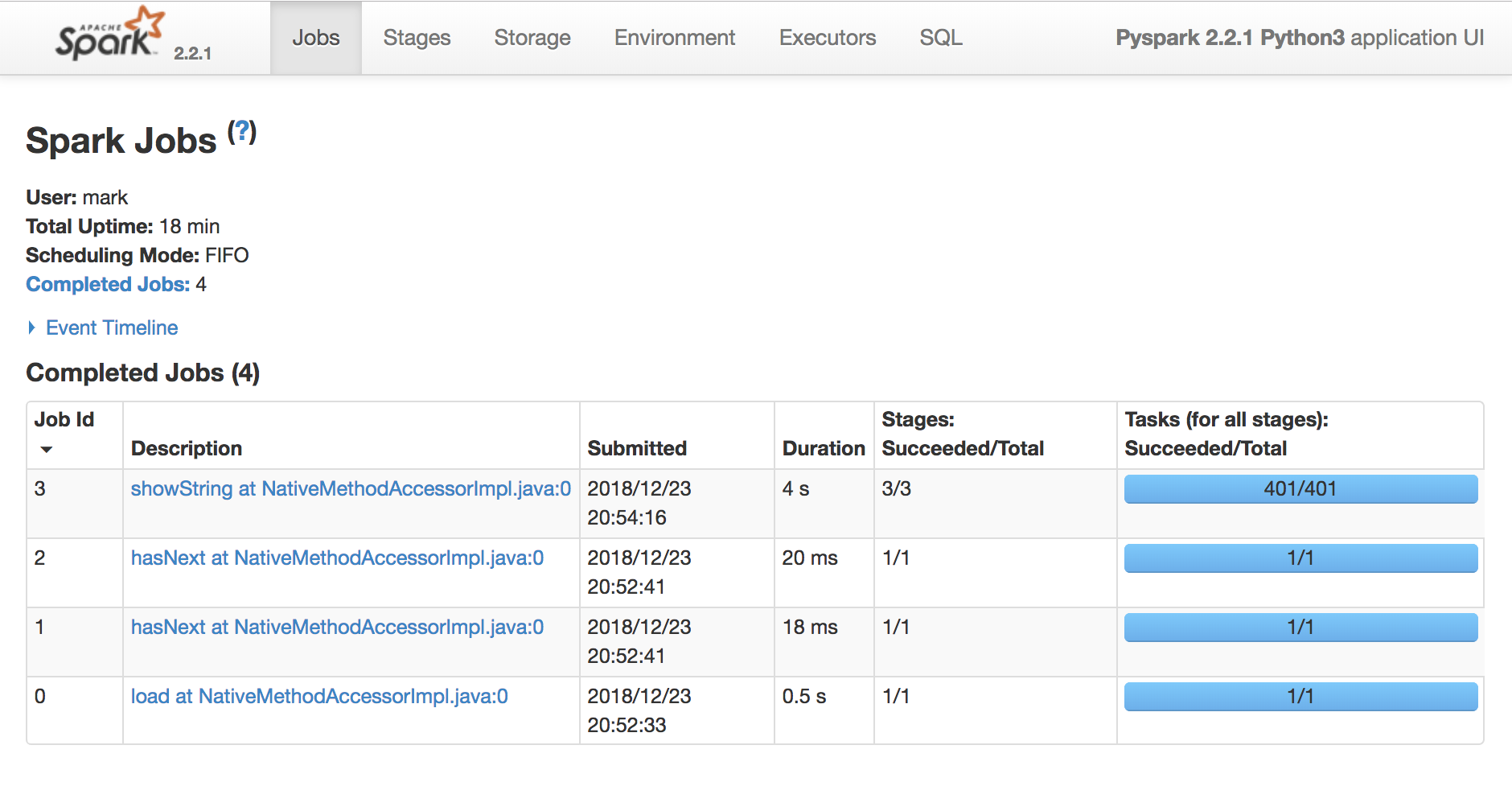

The Spark UI

Introduction to Spark SQL in Python

Mark Plutowski

Data Scientist

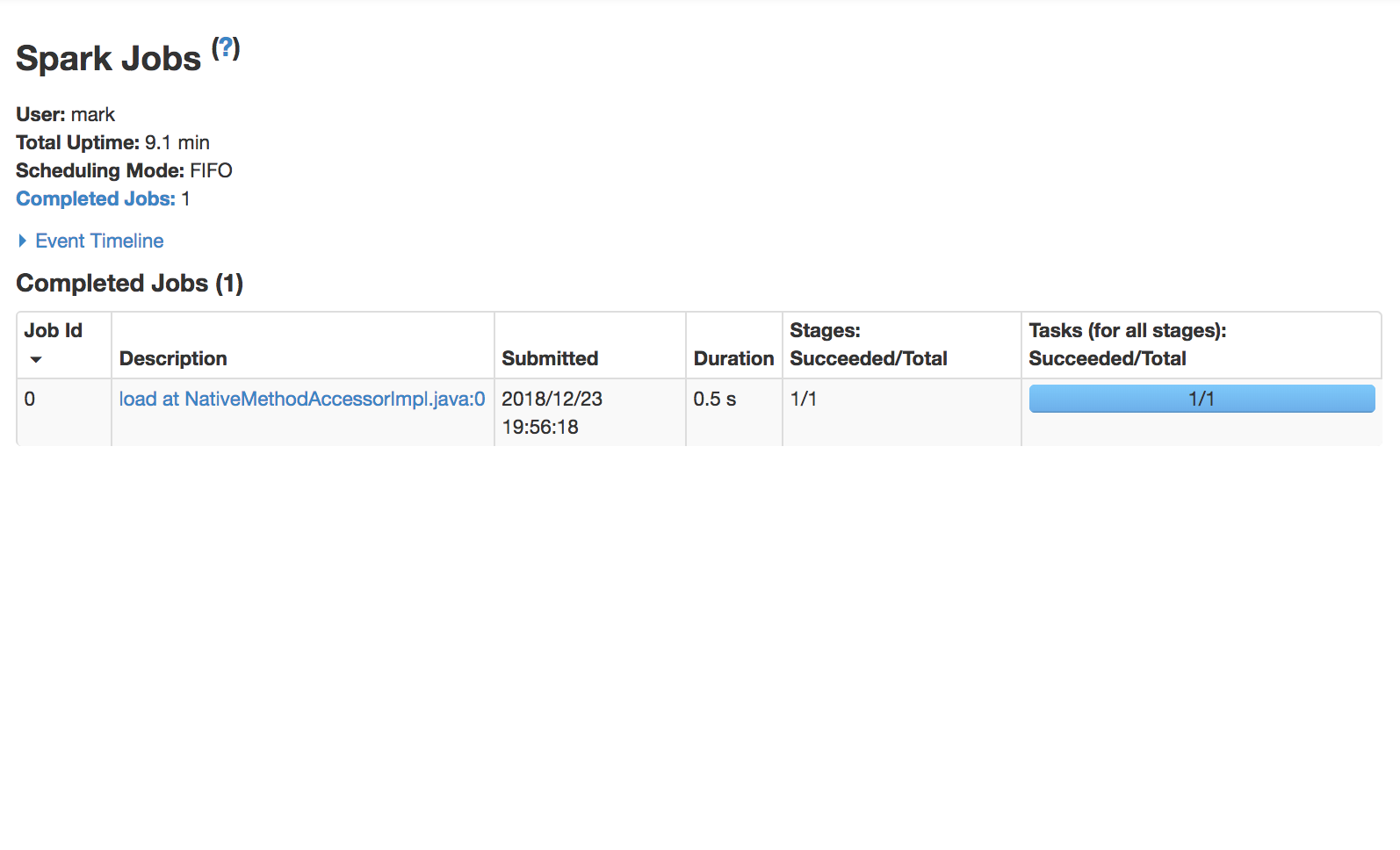

Use the Spark UI to inspect execution

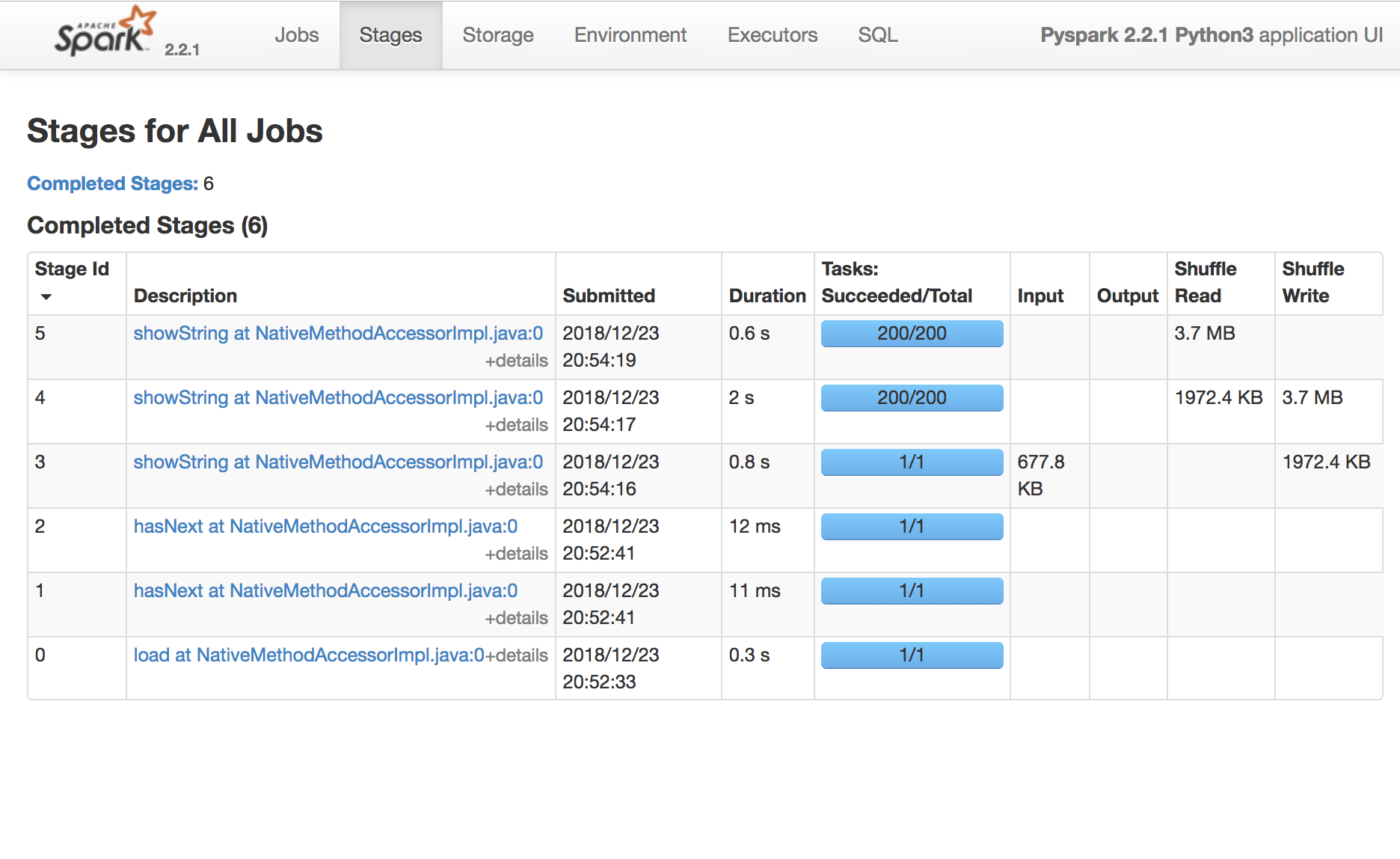

Spark Task: a unit of execution that runs on a single CPU core.

Spark Stage: a group of tasks that perform the same computation in parallel, each task typically running on a different subset (partition) of the data.

Spark Job: a computation triggered by an action and divided into one or more stages.

Finding the Spark UI

- http://[DRIVER_HOST]:4040

- http://[DRIVER_HOST]:4041

- http://[DRIVER_HOST]:4042

- http://[DRIVER_HOST]:4043

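...

The UI listens on port 4040 of the driver by default. If that port is already taken (for example, by another running Spark application), Spark binds the next free port: 4041, 4042, and so on.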

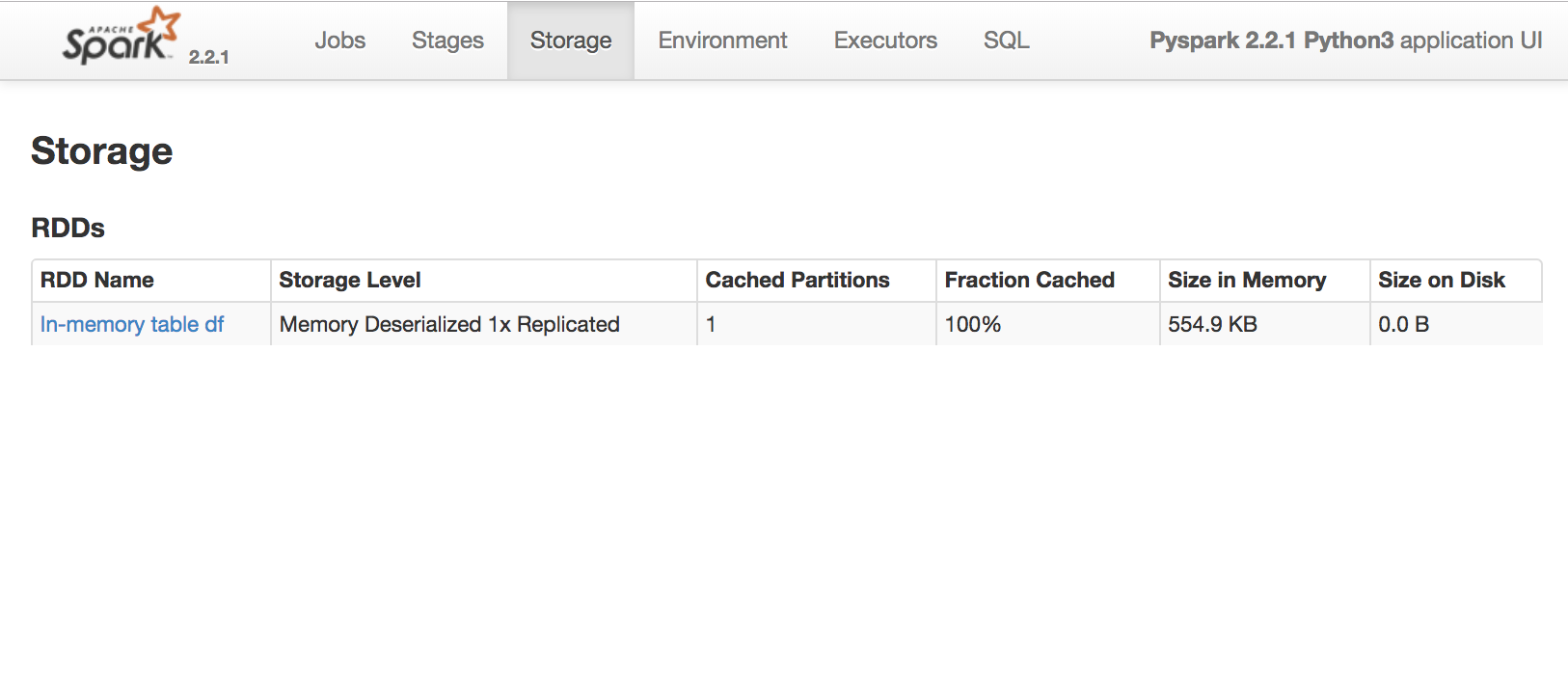

Spark catalog operations

```python
spark.catalog.cacheTable('table1')
spark.catalog.uncacheTable('table1')
spark.catalog.isCached('table1')
spark.catalog.dropTempView('table1')
```

Spark Catalog

```python
spark.catalog.listTables()
```

```
[Table(name='text', database=None, description=None, tableType='TEMPORARY', isTemporary=True)]
```

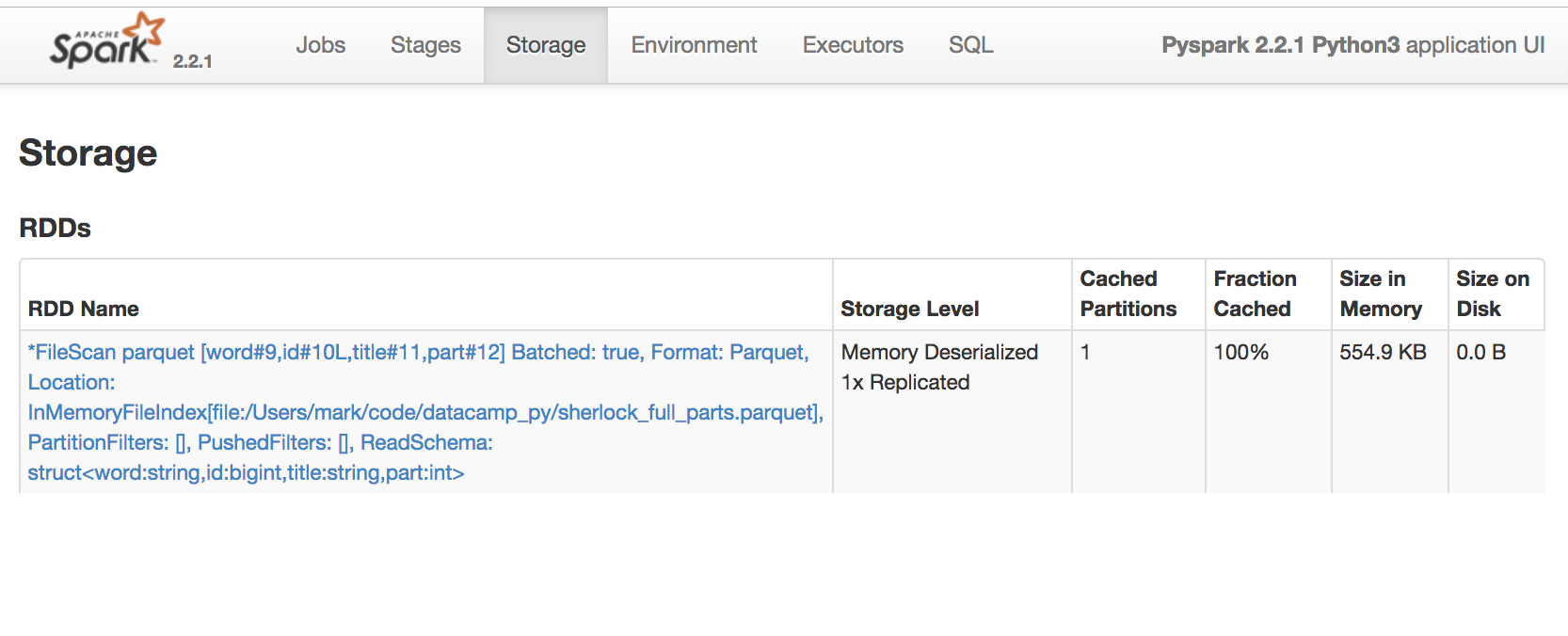

Spark UI Storage Tab

Shows where cached data partitions exist:

- in memory,

- or on disk,

- across the cluster,

- at a snapshot in time.

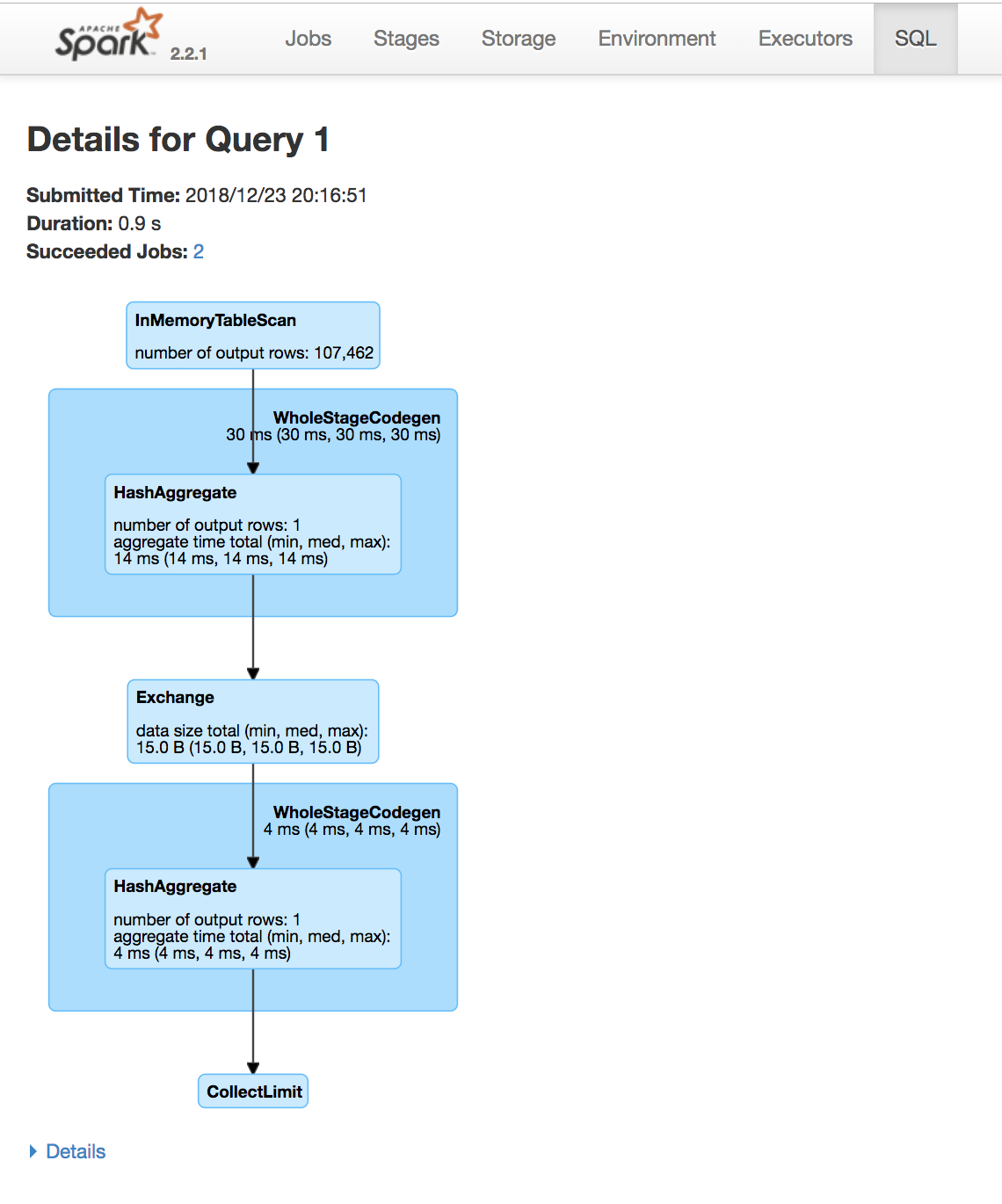

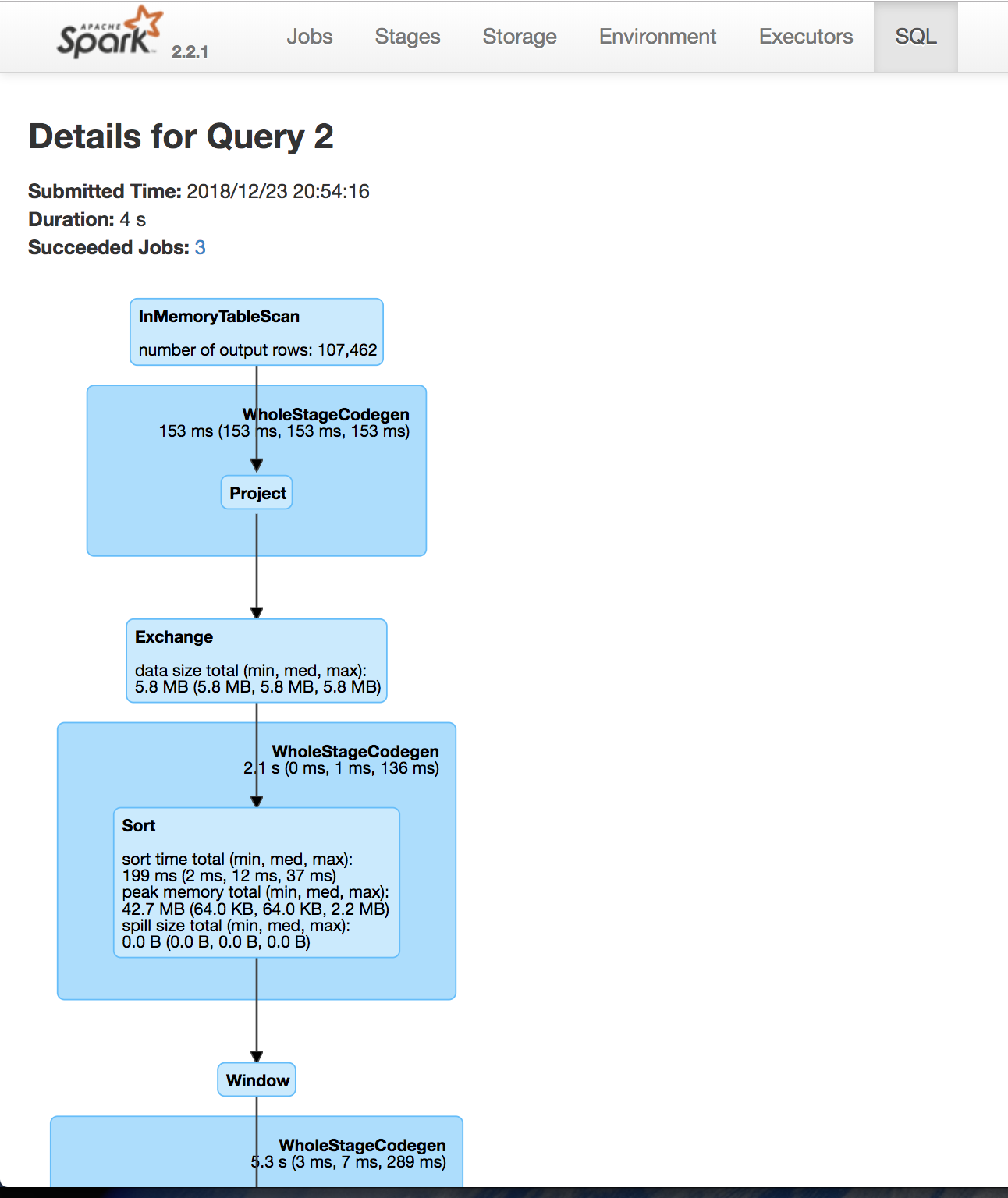

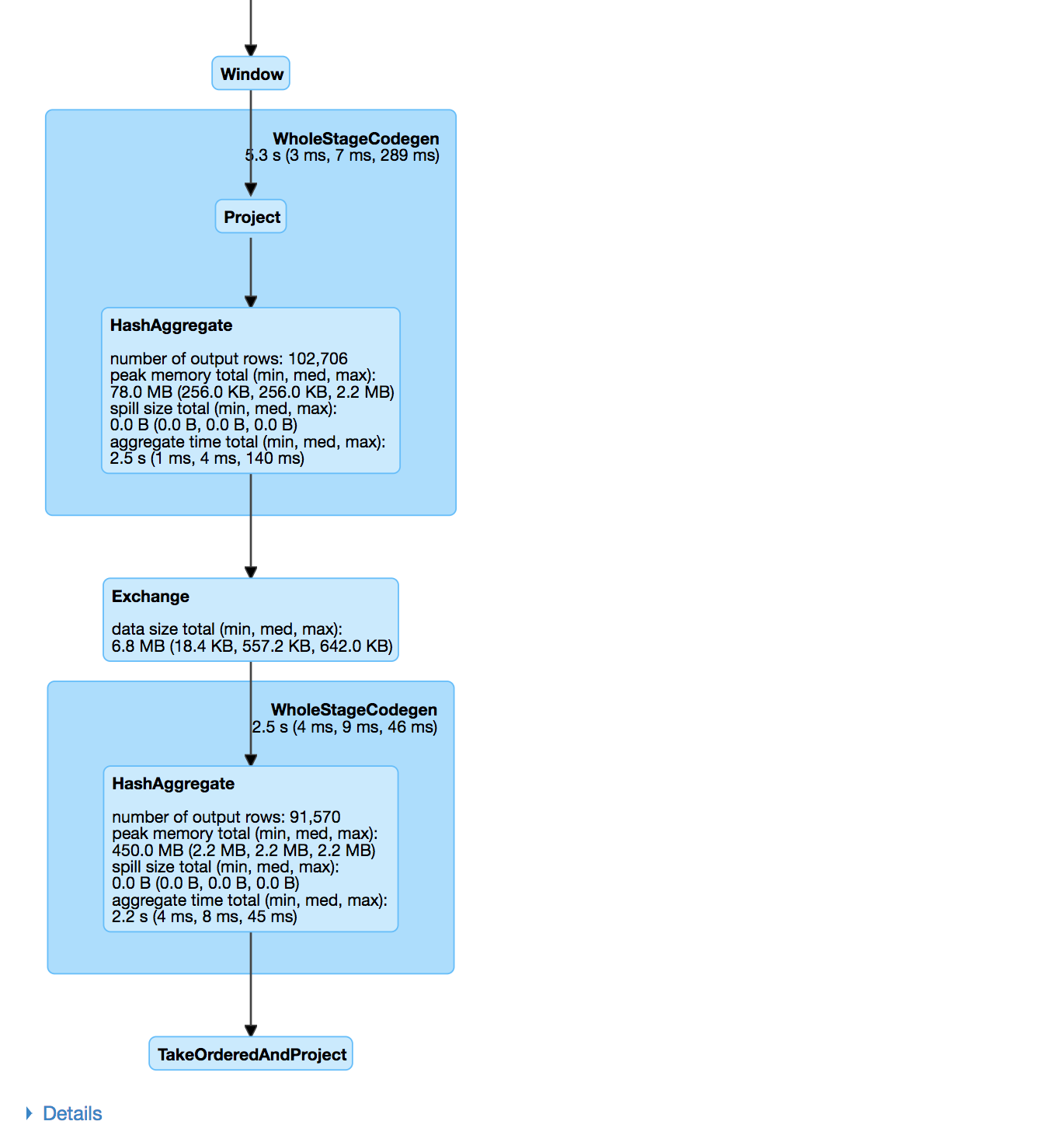

Spark UI SQL tab

```python
# Assumes an active SparkSession `spark` and a temporary view `df`
# with columns `word`, `id`, and `part`.
query3agg = """
SELECT w1, w2, w3, COUNT(*) AS count
FROM (
    SELECT word AS w1,
           LEAD(word, 1) OVER (PARTITION BY part ORDER BY id) AS w2,
           LEAD(word, 2) OVER (PARTITION BY part ORDER BY id) AS w3
    FROM df
)
GROUP BY w1, w2, w3
ORDER BY count DESC
"""
spark.sql(query3agg).show()
```

Let's practice
