Training the NMT model

Machine Translation with Keras

Thushan Ganegedara

Data Scientist and Author

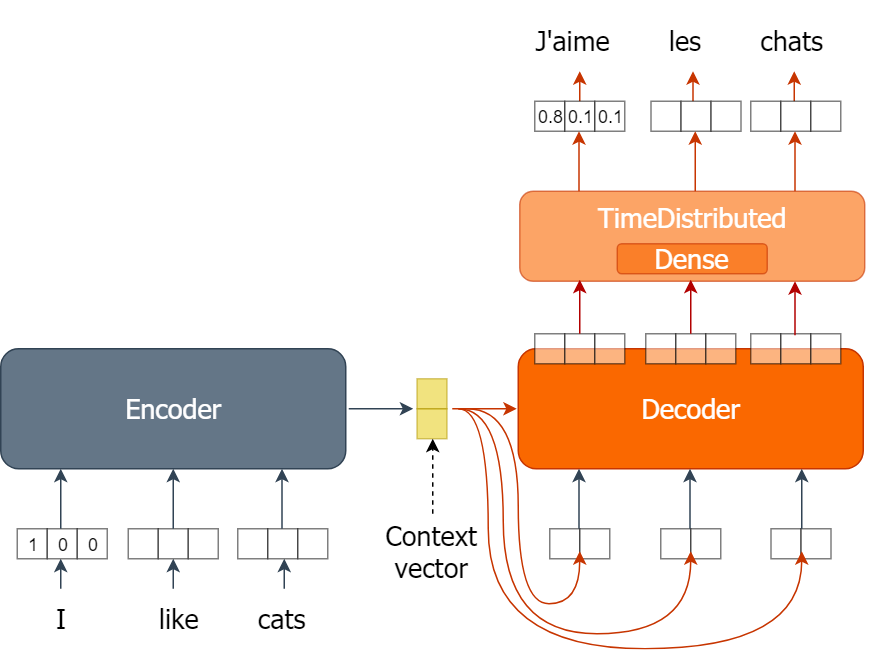

Revisiting the model

- Encoder GRU

  - Consumes English words

  - Outputs a context vector

- Decoder GRU

  - Consumes the context vector

  - Outputs a sequence of GRU outputs

- Decoder prediction layer

  - Consumes the sequence of GRU outputs

  - Outputs prediction probabilities for French words
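To make the data flow concrete, the sketch below traces array shapes through the three components. It assumes one-hot encoded inputs and that the context vector is the encoder's final state. The function name `trace_shapes` and all sizes are hypothetical values chosen for illustration, not the course's actual settings.

```python
def trace_shapes(batch, en_len, en_vocab, fr_len, fr_vocab, hsize):
    """Return (stage name, shape) pairs for the encoder-decoder pipeline."""
    return [
        ("encoder input (one-hot English words)", (batch, en_len, en_vocab)),
        ("context vector (encoder output)", (batch, hsize)),
        ("decoder GRU outputs (one per French position)", (batch, fr_len, hsize)),
        ("prediction probabilities over French words", (batch, fr_len, fr_vocab)),
    ]

# Hypothetical sizes, for illustration only.
shapes = trace_shapes(batch=250, en_len=15, en_vocab=150,
                      fr_len=20, fr_vocab=200, hsize=48)
for name, shape in shapes:
    print("{}: {}".format(name, shape))
```

The point to notice is that the encoder collapses the whole English sentence into a single vector per sentence, while the decoder expands it back into one output per French word position.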

Optimizing the parameters

- The GRU layers and the Dense layer have parameters

- Often represented by W (weights) and b (bias), initialized with random values

- Responsible for transforming a given input into a useful output

- Changed over time by an optimizer to minimize a given loss

- Loss: computed as the difference between:

  - The predictions (i.e. French words generated by the model)

  - The actual outputs (i.e. actual French words)

- Passed to the model during model compilation, together with the optimizer and the metrics

nmt.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['acc'])
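As a rough illustration of what `'categorical_crossentropy'` measures for a single word, the sketch below computes it by hand in plain Python. The 4-word vocabulary, the probability values, and the function itself are hypothetical. Keras's own implementation works on batched tensors and reports an average of such per-word values.

```python
import math

def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    """Cross-entropy between a one-hot target and predicted probabilities."""
    # eps guards against log(0) when a predicted probability is exactly zero.
    return -sum(t * math.log(max(p, eps)) for t, p in zip(y_true, y_pred))

# Hypothetical 4-word French vocabulary; the actual word is at index 2.
actual = [0.0, 0.0, 1.0, 0.0]
confident = [0.05, 0.05, 0.85, 0.05]  # most probability on the correct word
uncertain = [0.25, 0.25, 0.25, 0.25]  # uniform guess

loss_confident = categorical_crossentropy(actual, confident)
loss_uncertain = categorical_crossentropy(actual, uncertain)
print(loss_confident, loss_uncertain)
```

The loss is small when the model puts most of its probability on the actual word and grows as that probability shrinks, which is the quantity the optimizer tries to reduce.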

Training the model

- Training iterations

    for ei in range(n_epochs):  # a single pass through the dataset
        for i in range(0, data_size, bsize):  # processing a single batch
            # Obtaining a batch of training data
            en_x = sents2seqs('source', en_text[i:i+bsize], onehot=True, reverse=True)
            de_y = sents2seqs('target', fr_text[i:i+bsize], onehot=True)
            # Training on a single batch of data
            nmt.train_on_batch(en_x, de_y)
            # Evaluating the model on the same batch
            res = nmt.evaluate(en_x, de_y, batch_size=bsize, verbose=0)
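To see which slices of the data the inner loop visits, the following sketch lists the batch boundaries produced by `range(0, data_size, bsize)`. The helper name `batch_slices` and the sizes are hypothetical.

```python
def batch_slices(data_size, bsize):
    """Return the (start, end) index pairs visited during one epoch."""
    return [(i, min(i + bsize, data_size)) for i in range(0, data_size, bsize)]

even = batch_slices(1000, 250)    # batch size divides the data size
ragged = batch_slices(1000, 300)  # last batch is smaller
print(even)
print(ragged)
```

Because Python slicing stops at the end of a list, a slice such as `en_text[i:i+bsize]` simply yields a shorter final batch when `bsize` does not divide the dataset size evenly.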

Training the model

- Getting the training loss and the accuracy

    res = nmt.evaluate(en_x, de_y, batch_size=bsize, verbose=0)
    print("Epoch {} => Train Loss:{}, Train Acc: {}".format(ei+1, res[0], res[1]*100.0))

    Epoch 1 => Train Loss:4.8036723136901855, Train Acc: 5.215999856591225
    ...
    Epoch 1 => Train Loss:4.718592643737793, Train Acc: 47.0880001783371
    ...
    Epoch 5 => Train Loss:2.8161656856536865, Train Acc: 56.40000104904175
    Epoch 5 => Train Loss:2.527724266052246, Train Acc: 54.368001222610474
    Epoch 5 => Train Loss:2.2689621448516846, Train Acc: 54.57599759101868
    Epoch 5 => Train Loss:1.9934935569763184, Train Acc: 56.51199817657471
    Epoch 5 => Train Loss:1.7581449747085571, Train Acc: 55.184000730514526
    Epoch 5 => Train Loss:1.5613118410110474, Train Acc: 55.11999726295471
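The accuracy in the log above is a fraction multiplied by 100. A minimal sketch of how such a word-level accuracy can be computed, assuming one-hot targets, is shown here. The function name and the toy data are hypothetical, and Keras's own metric operates on batched tensors rather than Python lists.

```python
def word_accuracy(y_true, y_pred):
    """Fraction of positions where the most probable predicted word is the actual word.

    y_true: list of one-hot vectors; y_pred: list of probability vectors.
    """
    def argmax(vec):
        return max(range(len(vec)), key=lambda j: vec[j])

    hits = sum(argmax(t) == argmax(p) for t, p in zip(y_true, y_pred))
    return hits / len(y_true)

# Hypothetical 3-word vocabulary and 4 word positions.
y_true = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [0, 1, 0]]
y_pred = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.6, 0.3, 0.1], [0.2, 0.5, 0.3]]
acc = word_accuracy(y_true, y_pred)
print("Acc: {}".format(acc * 100.0))
```

Here three of the four positions are predicted correctly, so the fraction is 0.75.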

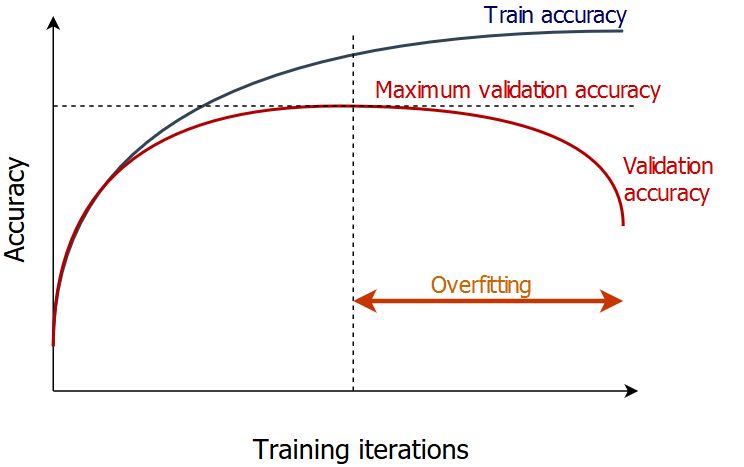

Avoiding overfitting

- Split the dataset into two parts

  - Training set: the data the model is trained on

  - Validation set: the data on which the model's accuracy is monitored

- When the validation accuracy stops increasing, stop the training.
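One simple way to turn "stops increasing" into a rule is to stop once the best validation accuracy is a fixed number of epochs old. The sketch below assumes that rule. The `patience` parameter, the function name, and the accuracy values are hypothetical and are not results from the model.

```python
def should_stop(val_accs, patience=2):
    """Return True when validation accuracy has not improved for `patience` epochs."""
    if len(val_accs) <= patience:
        return False
    # Index of the first epoch that reached the best accuracy so far.
    best_epoch = max(range(len(val_accs)), key=lambda e: val_accs[e])
    return len(val_accs) - 1 - best_epoch >= patience

history = []
stopped_at = None
# Made-up validation accuracies, one per epoch.
for epoch, acc in enumerate([0.31, 0.45, 0.52, 0.51, 0.52, 0.50], start=1):
    history.append(acc)
    if should_stop(history, patience=2):
        stopped_at = epoch
        break
print(stopped_at)
```

A tie with the earlier best value does not count as an improvement in this sketch, so training stops two epochs after the accuracy first reached its peak.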

Splitting the dataset

Define a train dataset size and validation dataset size

    train_size, valid_size = 800, 200

Shuffle the data indices randomly

    import numpy as np

    inds = np.arange(len(en_text))
    np.random.shuffle(inds)

Get the train and valid indices

    train_inds = inds[:train_size]
    valid_inds = inds[train_size:train_size+valid_size]

Splitting the dataset

- Split the dataset by separating:

  - Data with train indices into a train set

  - Data with valid indices into a valid set

    tr_en = [en_text[ti] for ti in train_inds]
    tr_fr = [fr_text[ti] for ti in train_inds]
    v_en = [en_text[ti] for ti in valid_inds]
    v_fr = [fr_text[ti] for ti in valid_inds]
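The important property of this split is that the same shuffled indices are applied to both languages, so each English sentence stays paired with its French translation and the two sets do not overlap. The self-contained sketch below checks this on a toy corpus. It uses the standard library's `random` module in place of NumPy so that it runs without dependencies, and `split_pairs`, `seed`, and the placeholder sentences are hypothetical.

```python
import random

def split_pairs(en_text, fr_text, train_size, valid_size, seed=0):
    """Shuffle indices once, then slice disjoint train and validation sets."""
    inds = list(range(len(en_text)))
    random.Random(seed).shuffle(inds)
    train_inds = inds[:train_size]
    valid_inds = inds[train_size:train_size + valid_size]
    # The same index selects both the English and the French sentence.
    train = [(en_text[i], fr_text[i]) for i in train_inds]
    valid = [(en_text[i], fr_text[i]) for i in valid_inds]
    return train, valid

# Toy parallel corpus made of placeholder strings, not real data.
en = ["en sentence {}".format(i) for i in range(10)]
fr = ["fr sentence {}".format(i) for i in range(10)]
train, valid = split_pairs(en, fr, train_size=8, valid_size=2)
print(len(train), len(valid))
```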

Training the model with validation

    n_epochs, bsize = 5, 250
    for ei in range(n_epochs):
        # Train on each batch of the training set
        for i in range(0, train_size, bsize):
            en_x = sents2seqs('source', tr_en[i:i+bsize], onehot=True, pad_type='pre')
            de_y = sents2seqs('target', tr_fr[i:i+bsize], onehot=True)
            nmt.train_on_batch(en_x, de_y)
        # Evaluate on the validation set once per epoch
        v_en_x = sents2seqs('source', v_en, onehot=True, pad_type='pre')
        v_de_y = sents2seqs('target', v_fr, onehot=True)
        res = nmt.evaluate(v_en_x, v_de_y, batch_size=valid_size, verbose=0)
        print("Epoch: {} => Loss:{}, Val Acc: {}".format(ei+1, res[0], res[1]*100.0))

Epoch 1 => Train Loss:4.8036723136901855, Train Acc: 5.215999856591225

Let's practice!
