ReLU activation functions

Introduction to Deep Learning with PyTorch

Jasmin Ludolf

Senior Data Science Content Developer, DataCamp

Sigmoid and softmax functions

- Sigmoid for binary classification

- Softmax for multi-class classification

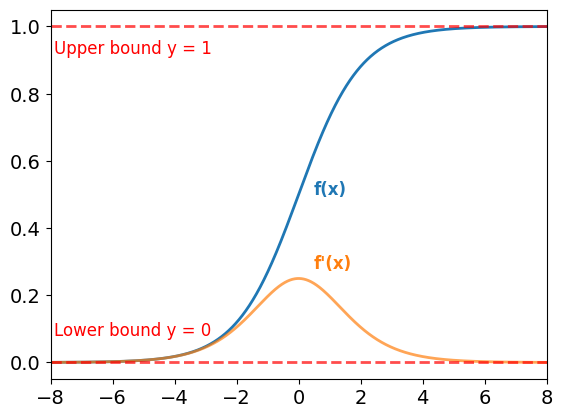
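As a quick recap of the two functions above, the following is a minimal sketch of how they might be applied in PyTorch. The input scores are made-up values chosen only for illustration, and `dim=-1` assumes the class scores sit in the last dimension.

```python
import torch
import torch.nn as nn

# Sigmoid maps one score to a value in (0, 1): suited to binary classification
sigmoid = nn.Sigmoid()
binary_score = torch.tensor([[0.8]])  # hypothetical pre-activation value
binary_prob = sigmoid(binary_score)

# Softmax maps a vector of scores to probabilities that sum to 1: multi-class
softmax = nn.Softmax(dim=-1)
class_scores = torch.tensor([[4.3, 6.1, 2.3]])  # hypothetical scores, 3 classes
class_probs = softmax(class_scores)

print(binary_prob)
print(class_probs, class_probs.sum(dim=-1))
```

The class with the largest score (index 1 here) receives the largest probability.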

Limitations of the sigmoid and softmax functions

Sigmoid function:

- Outputs bounded between 0 and 1

- Usable anywhere in a network

Gradients:

- Very small for large positive and large negative values of x

- The function saturates in these regions, leading to the vanishing gradients problem


The softmax function also suffers from saturation

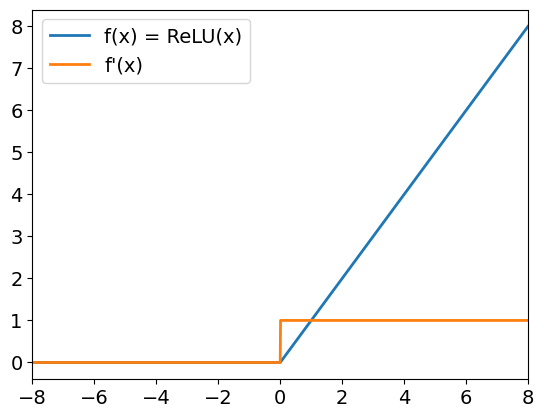
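To make the saturation concrete, here is a small sketch that uses autograd to measure the sigmoid gradient at three illustrative inputs (the values -10, 0, and 10 are assumptions picked for the demonstration). It relies on the standard identity that the derivative of the sigmoid is sigmoid(x) * (1 - sigmoid(x)), which peaks at 0.25 when x = 0.

```python
import torch

# Hypothetical inputs: two extreme values and zero
x = torch.tensor([-10.0, 0.0, 10.0], requires_grad=True)

y = torch.sigmoid(x)
# Summing gives a scalar, so backward() fills x.grad with d(sigmoid)/dx per element
y.sum().backward()

sigmoid_grads = x.grad
print(sigmoid_grads)
```

The gradient at 0 is 0.25, while the gradients at -10 and 10 are several orders of magnitude smaller. Multiplying many such small factors during backpropagation is what makes gradients vanish.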

ReLU

Rectified Linear Unit (ReLU):

f(x) = max(x, 0)

- For positive inputs: output equals input

- For negative inputs: output is 0

- Helps overcome vanishing gradients


In PyTorch:

relu = nn.ReLU()

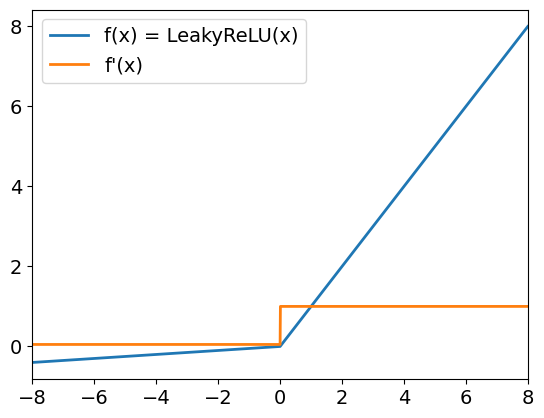
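The sketch below applies `nn.ReLU` to one negative and one positive input and inspects the gradients. The input values are hypothetical and chosen only to show both branches of the function.

```python
import torch
import torch.nn as nn

relu = nn.ReLU()

# Hypothetical inputs: one negative, one positive
x = torch.tensor([-2.0, 3.0], requires_grad=True)
y = relu(x)
y.sum().backward()

relu_out = y.detach()
relu_grads = x.grad
print(relu_out)    # negative input is clipped to 0, positive input passes through
print(relu_grads)  # gradient is 0 for the negative input and 1 for the positive one
```

Because the gradient is exactly 1 for positive inputs, it does not shrink as it flows backward through the activation, which is how ReLU helps with vanishing gradients.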

Leaky ReLU

Leaky ReLU:

- Positive inputs behave like ReLU

- Negative inputs are scaled by a small coefficient (default 0.01)

- Gradients for negative inputs are non-zero


In PyTorch:

leaky_relu = nn.LeakyReLU(negative_slope=0.05)
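As an illustration, the sketch below runs the same kind of check with `nn.LeakyReLU` and a `negative_slope` of 0.05. The input values are again hypothetical.

```python
import torch
import torch.nn as nn

leaky_relu = nn.LeakyReLU(negative_slope=0.05)

# Hypothetical inputs: one negative, one positive
x = torch.tensor([-2.0, 3.0], requires_grad=True)
y = leaky_relu(x)
y.sum().backward()

leaky_out = y.detach()
leaky_grads = x.grad
print(leaky_out)    # negative input is scaled by 0.05, positive input is unchanged
print(leaky_grads)  # gradient is 0.05 for the negative input and 1 for the positive one
```

Unlike ReLU, the negative input still receives a non-zero gradient, so the corresponding weights can keep updating during training.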

Let's practice!
