Evaluating model performance

Introduction to Deep Learning with PyTorch

Jasmin Ludolf

Senior Data Science Content Developer, DataCamp

Training, validation and testing

- A dataset is typically split into three subsets:

| Subset | Percentage of data | Role |
|---|---|---|
| Training | 80-90% | Adjusts the model's parameters |
| Validation | 10-20% | Tunes hyperparameters |
| Test | 5-10% | Evaluates final model performance |
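As a rough illustration of such a split, the sketch below shuffles sample indices and cuts them into three disjoint groups using only the Python standard library. The `split_indices` helper, the 80/10/10 fractions, and the fixed seed are assumptions chosen for the example; in PyTorch, `torch.utils.data.random_split` serves the same purpose.

```python
import random


def split_indices(n_samples, train_frac=0.8, val_frac=0.1, seed=0):
    """Shuffle indices 0..n_samples-1 and cut them into three disjoint lists."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded so the split is reproducible
    n_train = int(n_samples * train_frac)
    n_val = int(n_samples * val_frac)
    train_idx = indices[:n_train]
    val_idx = indices[n_train:n_train + n_val]
    test_idx = indices[n_train + n_val:]  # whatever remains goes to the test set
    return train_idx, val_idx, test_idx


train_idx, val_idx, test_idx = split_indices(1000)
print(len(train_idx), len(val_idx), len(test_idx))
```

Keeping the three index lists disjoint is the point of the exercise: the test samples must never influence parameter or hyperparameter choices.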

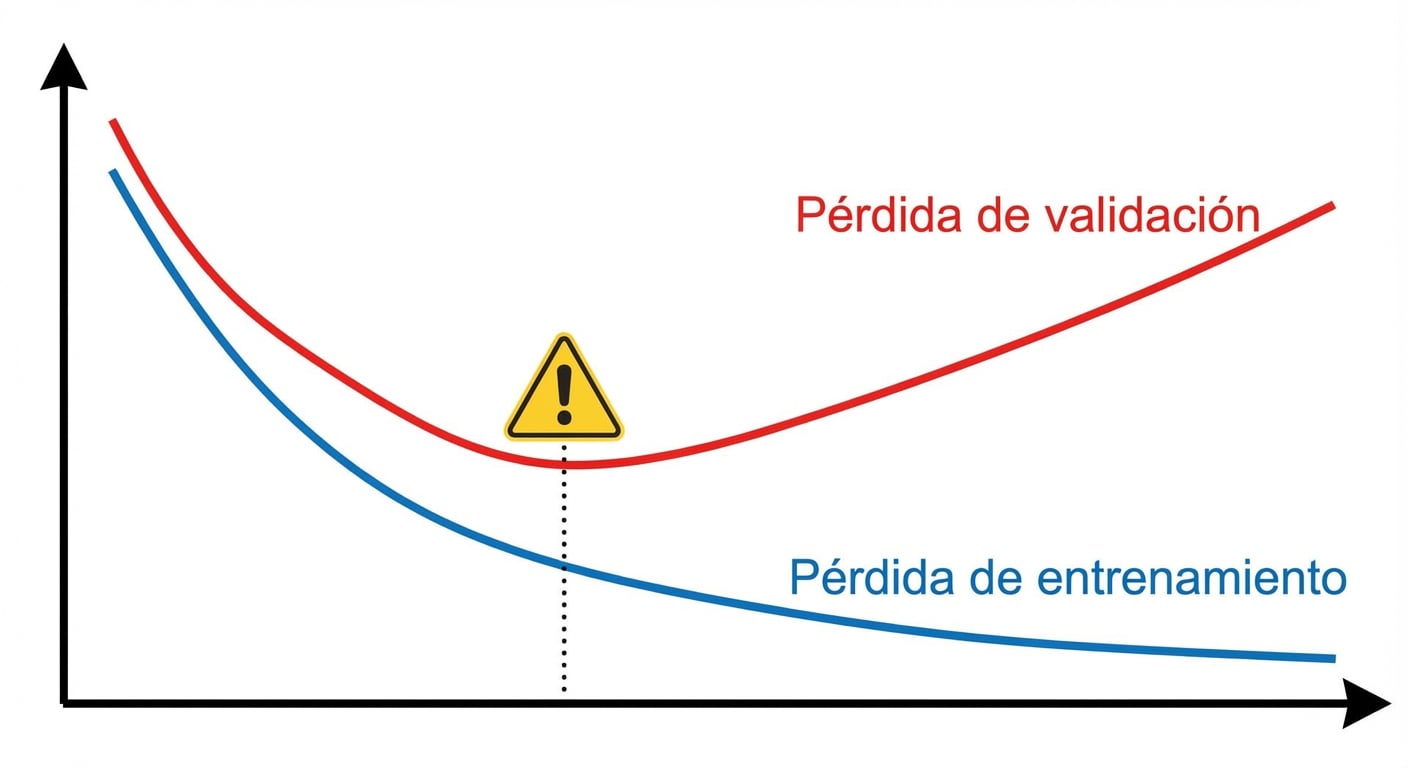

- Track loss and accuracy during training and validation

Calculating training loss


For each epoch:

- Sum the loss across all batches in the dataloader

- Compute the mean training loss at the end of the epoch

    training_loss = 0.0
    for inputs, labels in trainloader:
        # Run the forward pass
        outputs = model(inputs)
        # Compute the loss
        loss = criterion(outputs, labels)
        # Backpropagation
        loss.backward()        # Compute gradients
        optimizer.step()       # Update weights
        optimizer.zero_grad()  # Reset gradients
        # Calculate and sum the loss
        training_loss += loss.item()
    epoch_loss = training_loss / len(trainloader)
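Note that `len(trainloader)` is the number of batches, so `epoch_loss` is the mean of the per-batch losses. When the last batch is smaller than the others, this differs slightly from the mean over individual samples. The numbers below are invented purely for illustration, and the sketch assumes the criterion returns the mean loss over each batch (the default reduction for PyTorch loss functions such as `nn.CrossEntropyLoss`).

```python
# Hypothetical per-batch mean losses and batch sizes (the last batch is smaller)
batch_losses = [0.9, 0.7, 0.5]
batch_sizes = [4, 4, 2]

# Mean over batches: what dividing the summed loss by the batch count computes
mean_over_batches = sum(batch_losses) / len(batch_losses)

# Mean over samples: weight each batch loss by its batch size
total_loss = sum(loss * size for loss, size in zip(batch_losses, batch_sizes))
mean_over_samples = total_loss / sum(batch_sizes)

print(f"{mean_over_batches:.2f}")  # 0.70
print(f"{mean_over_samples:.2f}")  # 0.74
```

For tracking trends across epochs the difference is usually negligible, which is why the simpler per-batch mean is common.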

Calculating validation loss

    import torch

    validation_loss = 0.0
    model.eval()  # Put model in evaluation mode
    with torch.no_grad():  # Disable gradients for efficiency
        for inputs, labels in validationloader:
            # Run the forward pass
            outputs = model(inputs)
            # Calculate the loss
            loss = criterion(outputs, labels)
            validation_loss += loss.item()
    epoch_loss = validation_loss / len(validationloader)  # Compute mean loss
    model.train()  # Switch back to training mode

Overfitting
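Overfitting typically shows up as training loss that keeps falling while validation loss stops improving or starts to rise. As a minimal sketch, assuming the per-epoch losses have been collected into two lists, the snippet below finds the epoch with the lowest validation loss and flags the later epochs where the two curves diverge. The loss values and the `best_epoch` helper are made up for the example.

```python
def best_epoch(val_losses):
    """Return the index of the epoch with the lowest validation loss."""
    return min(range(len(val_losses)), key=lambda i: val_losses[i])


# Hypothetical loss curves: training keeps improving, validation turns upward
train_losses = [1.20, 0.80, 0.55, 0.40, 0.30, 0.22]
val_losses = [1.25, 0.90, 0.70, 0.68, 0.75, 0.85]

best = best_epoch(val_losses)

# Later epochs where validation loss is above its minimum
# while training loss is still decreasing
overfit_epochs = [
    e for e in range(best + 1, len(val_losses))
    if val_losses[e] > val_losses[best] and train_losses[e] < train_losses[e - 1]
]
print(best, overfit_epochs)
```

With these toy numbers the validation loss bottoms out at epoch index 3, and epochs 4 and 5 are flagged.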

Calculating accuracy with torchmetrics

    import torchmetrics

    # Create accuracy metric
    metric = torchmetrics.Accuracy(task="multiclass", num_classes=3)
    for features, labels in dataloader:
        outputs = model(features)  # Forward pass
        # Compute batch accuracy (keeping argmax for one-hot labels)
        metric.update(outputs, labels.argmax(dim=-1))
    # Compute accuracy over the whole epoch
    accuracy = metric.compute()
    # Reset metric for the next epoch
    metric.reset()
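To make the bookkeeping behind an epoch-level accuracy explicit, here is a plain-Python sketch of the same idea: predictions are the argmax of the model outputs, and correct and total counts are accumulated across batches before dividing once at the end. The `argmax` helper and the toy batches are assumptions for illustration and are not part of torchmetrics; labels here are integer class indices rather than one-hot vectors.

```python
def argmax(scores):
    """Index of the largest score in a list."""
    return max(range(len(scores)), key=lambda i: scores[i])


# Two hypothetical batches of (model outputs, integer class labels), 3 classes
batches = [
    ([[2.0, 0.1, 0.3], [0.2, 1.5, 0.1]], [0, 1]),
    ([[0.1, 0.2, 3.0], [1.0, 0.9, 0.2], [0.3, 0.8, 0.1]], [2, 1, 1]),
]

correct, total = 0, 0
for outputs, labels in batches:
    preds = [argmax(row) for row in outputs]  # predicted class per sample
    correct += sum(p == y for p, y in zip(preds, labels))
    total += len(labels)

accuracy = correct / total  # accuracy over all samples seen this epoch
print(accuracy)
```

Resetting `correct` and `total` to zero before the next epoch plays the same role as the reset step of a metric object.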

Let's practice!
