Training with PPO

Reinforcement Learning from Human Feedback (RLHF)

Mina Parham

AI Engineer

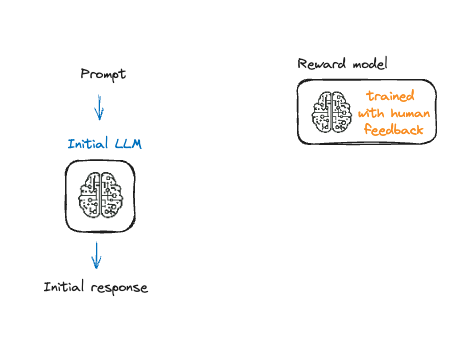

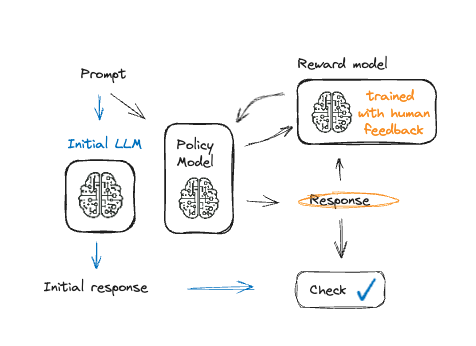

Fine-Tuning with reinforcement learning

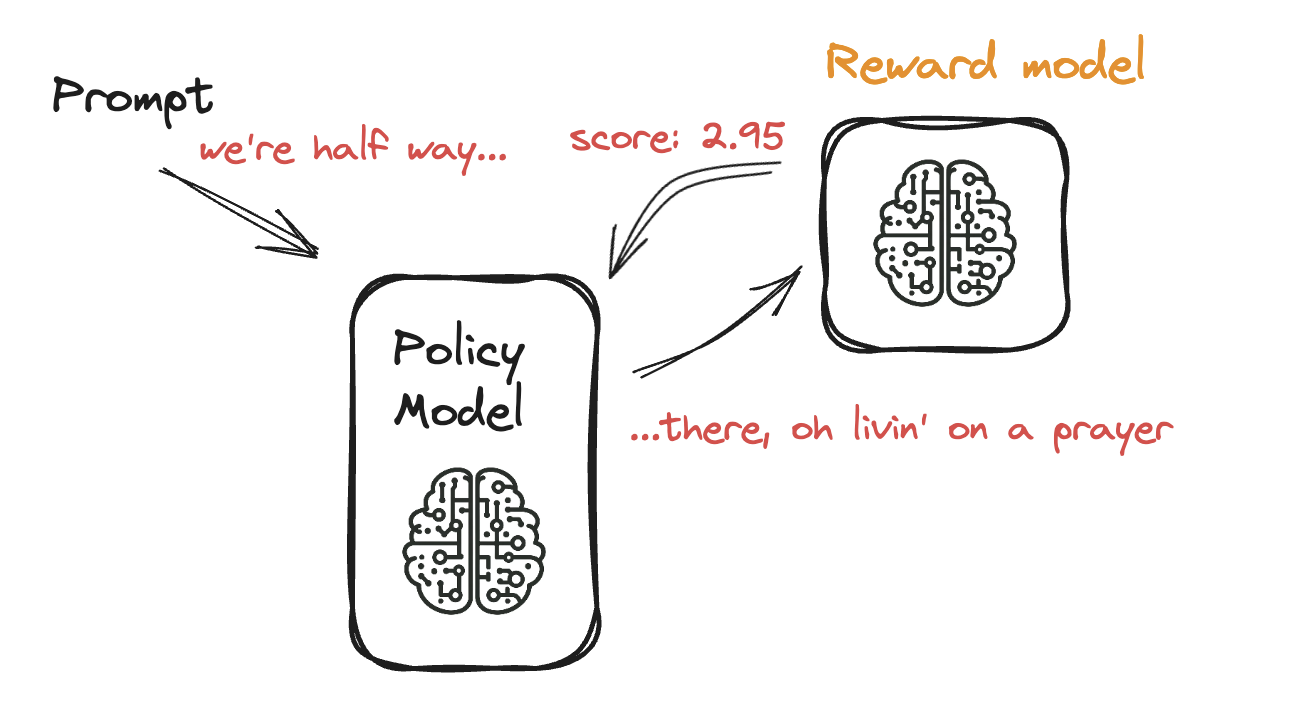

Fine-Tuning a Language Model with PPO

- PPO (Proximal Policy Optimization): adjusts the model gradually, in small steps

- Limiting each update helps avoid overfitting to the feedback
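
The "gradual adjustment" idea can be illustrated with the clipped surrogate objective commonly associated with PPO. The slides do not give a formula, so this is a minimal sketch under stated assumptions: the function name `clipped_surrogate` and the default `clip_eps=0.2` are illustrative choices, not taken from the course or from TRL.

```python
import math

def clipped_surrogate(logp_new, logp_old, advantage, clip_eps=0.2):
    """Clipped PPO objective for a single action (a value to maximize).

    Illustrative only: the name and the default clip_eps are assumptions.
    """
    # Probability ratio between the updated policy and the old policy
    ratio = math.exp(logp_new - logp_old)
    # Keep the ratio inside [1 - clip_eps, 1 + clip_eps]
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    # Take the more pessimistic of the unclipped and clipped terms
    return min(ratio * advantage, clipped_ratio * advantage)

# A small policy change is credited in full
small_step = clipped_surrogate(logp_new=-1.0, logp_old=-1.05, advantage=2.0)
# A large policy change earns no more credit than the clip range allows
large_step = clipped_surrogate(logp_new=-0.2, logp_old=-1.05, advantage=2.0)
```

Because the objective stops growing once the ratio leaves the clip range, a single update has little incentive to push the policy far from where it started.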

Implementing PPOTrainer with TRL

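from trl import PPOConfig

# PPO hyperparameters and the name of the base model to fine-tune
config = PPOConfig(model_name="gpt2", learning_rate=1.4e-5)

from transformers import AutoTokenizer
from trl import AutoModelForCausalLMWithValueHead

# Policy model with a value head, plus the matching tokenizer
model = AutoModelForCausalLMWithValueHead.from_pretrained(config.model_name)
tokenizer = AutoTokenizer.from_pretrained(config.model_name)

from trl import PPOTrainer

# dataset is assumed to have been prepared beforehand
ppo_trainer = PPOTrainer(model=model, config=config, dataset=dataset, tokenizer=tokenizer)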

Starting the training loop

from tqdm import tqdm

for epoch in tqdm(range(10), "epoch: "):
    for batch in tqdm(ppo_trainer.dataloader):
        query_tensors = batch["input_ids"]
        # Get responses
        response_tensors = ppo_trainer.generate(query_tensors)
        batch["response"] = [tokenizer.decode(r.squeeze()) for r in response_tensors]
        # Compute reward score
        texts = [q + r for q, r in zip(batch["query"], batch["response"])]
        rewards = reward_model(texts)
        # Run one PPO optimization step, then log the statistics
        stats = ppo_trainer.step(query_tensors, response_tensors, rewards)
        ppo_trainer.log_stats(stats, batch, rewards)
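
The loop calls `reward_model(texts)` without defining it. To make the expected shape of its output concrete, here is a hypothetical stand-in that returns one score per query-plus-response text. The function `toy_reward_model` and its word list are invented for illustration. A real setup would use a trained reward model, and the scores would most likely need converting to tensors before being passed to `ppo_trainer.step`.

```python
def toy_reward_model(texts, positive_words=("great", "helpful", "thanks")):
    """Hypothetical stand-in for a reward model.

    Scores each text by the fraction of its words that appear in
    positive_words. Both the function and the word list are assumptions.
    """
    scores = []
    for text in texts:
        words = text.lower().split()
        # Strip trailing punctuation before matching against the word list
        hits = sum(word.strip(".,!?") in positive_words for word in words)
        scores.append(hits / len(words) if words else 0.0)
    return scores

# One score per text, in the same order as the inputs
scores = toy_reward_model(["Thanks, that was helpful!", "No idea."])
```

The key property is the one-to-one mapping: each generated response receives exactly one scalar reward, which the PPO step then uses to update the policy.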

Let's practice!
