Preparing data for RLHF

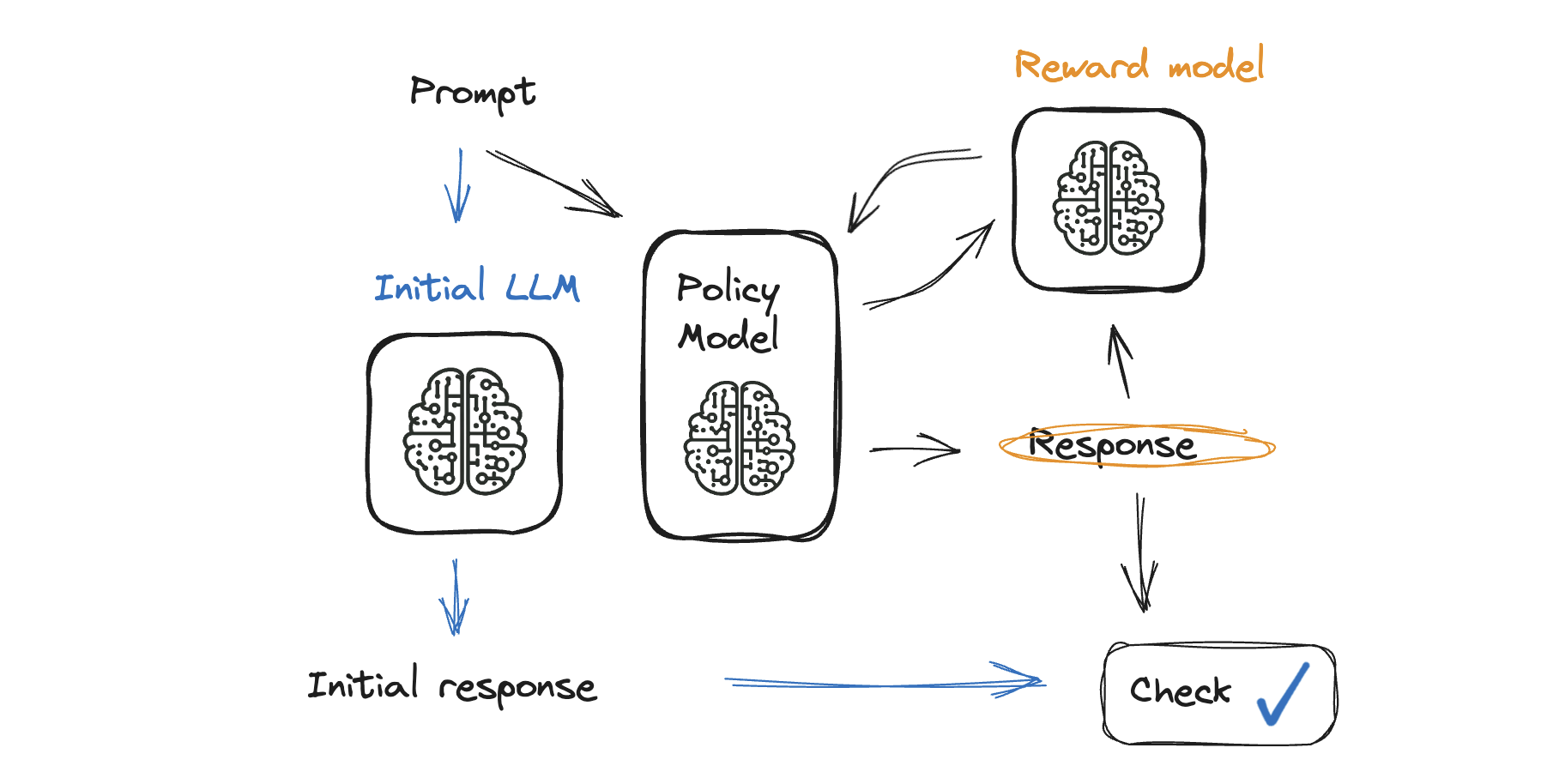

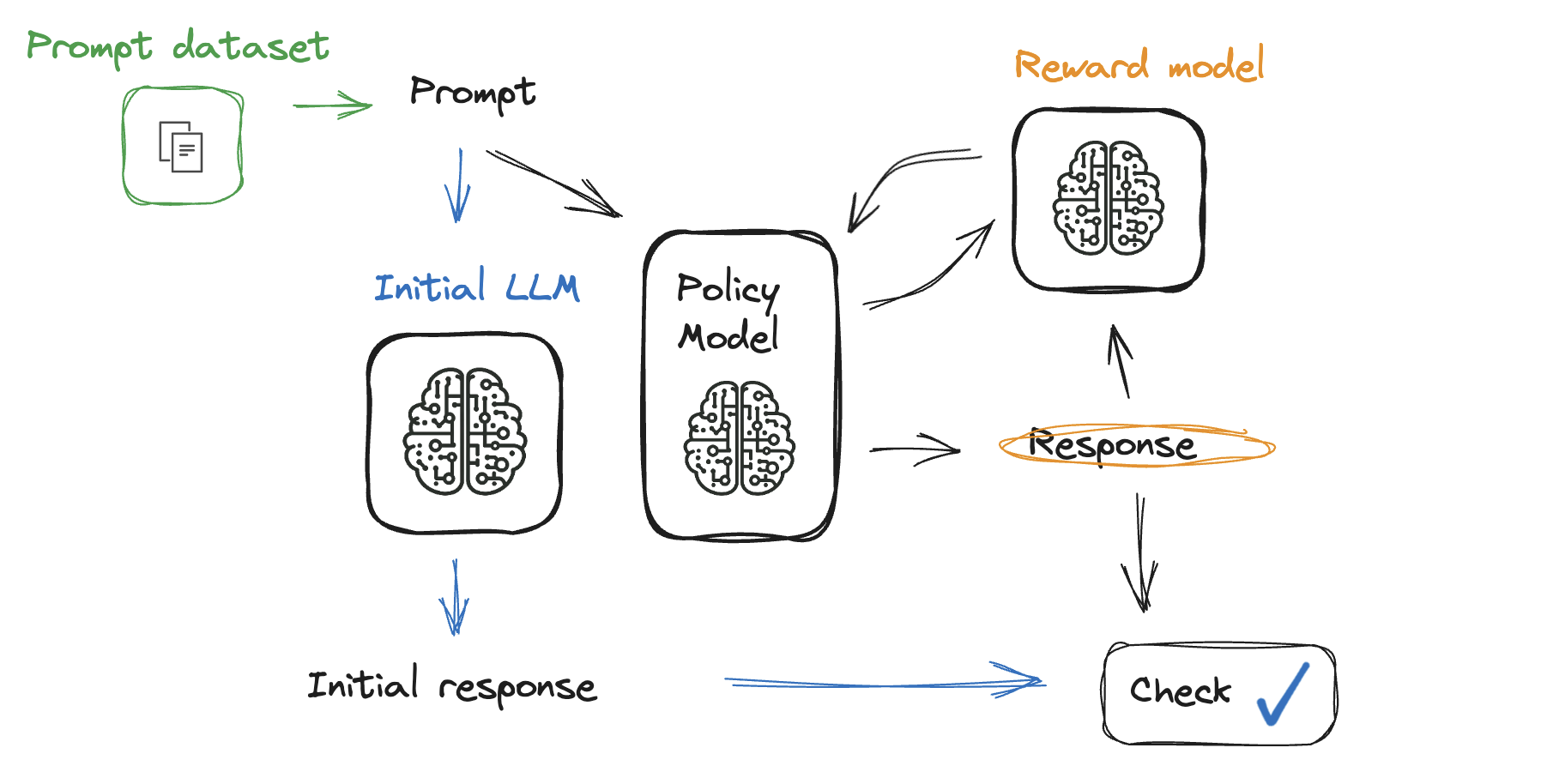

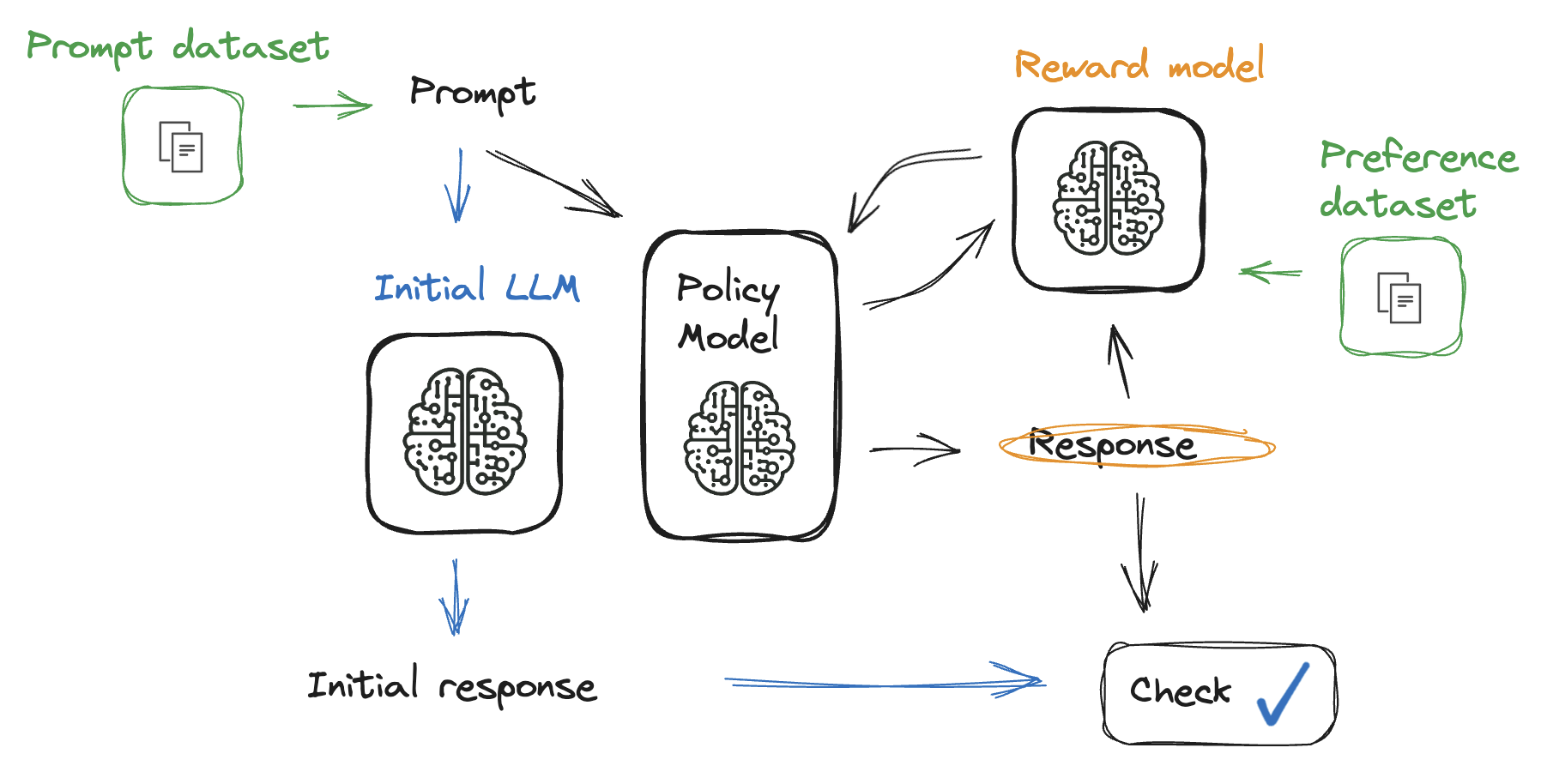

Reinforcement Learning from Human Feedback (RLHF)

Mina Parham

AI Engineer

Preference vs. Prompt datasets

Prompt dataset

- Questions for the model

- Can be found on Hugging Face datasets

from datasets import load_dataset

prompt_data = load_dataset("center-for-humans-and-machines/rlhf-hackathon-prompts", split="train")

prompt_data['prompt'][0]

'How important is climate change?'

- Might need to extract the prompt

- Look for markers such as: Input=, {{Text}}:, ###Human:
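
As a minimal sketch of marker-based extraction: the helper below returns the text that follows the first listed marker found in a raw record. The function name `extract_prompt_from_text` and the stop marker `###Assistant:` are assumptions made for illustration; real datasets may use different delimiters.

```python
# Hypothetical helper, not part of any library.
MARKERS = ("Input=", "{{Text}}:", "###Human:")


def extract_prompt_from_text(raw, markers=MARKERS, stop="###Assistant:"):
    """Return the text after the first marker in `markers` that occurs in `raw`."""
    for marker in markers:
        start = raw.find(marker)
        if start != -1:
            prompt = raw[start + len(marker):]
            # Cut at the response marker (an assumed delimiter), if present
            end = prompt.find(stop)
            if end != -1:
                prompt = prompt[:end]
            return prompt.strip()
    # No marker found: treat the whole record as the prompt
    return raw.strip()


example = "###Human: How important is climate change? ###Assistant: Very."
print(extract_prompt_from_text(example))
```

Checking the markers in a fixed order keeps the helper predictable; if a dataset mixes several markers in one record, the order of `MARKERS` would need to reflect which one should win.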

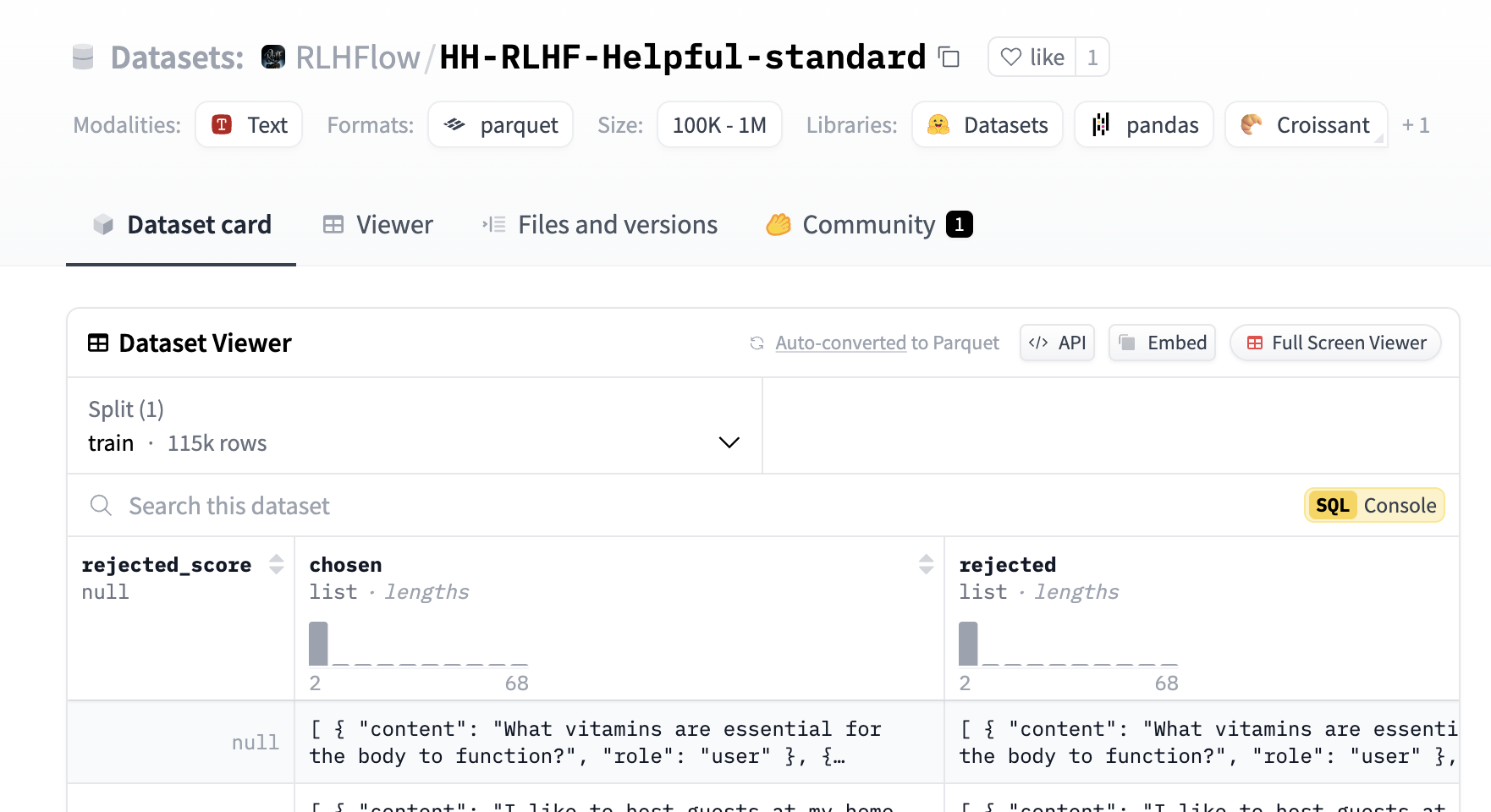

Exploring the preference dataset

from datasets import load_dataset

preference_data = load_dataset("trl-internal-testing/hh-rlhf-helpful-base-trl-style", split="train")

Processing the preference dataset

def extract_prompt(text):
    # Extract the prompt as the first element in the list
    prompt = text[0]["content"]
    return prompt

# Apply the extraction function to the dataset
preference_data_with_prompt = preference_data.map(
    lambda example: {**example, 'prompt': extract_prompt(example['chosen'])}
)

- Prompt extraction differs from dataset to dataset, so inspect a few records first
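
To illustrate how extraction can vary, here is a hedged sketch that handles two layouts: a list of role/content messages, as in the dataset above, and a plain-text transcript using `Human:` / `Assistant:` tags. The transcript layout and the function name `extract_prompt_any` are assumptions for illustration, not something the dataset above requires.

```python
def extract_prompt_any(chosen):
    """Return the first user turn from a message list or a transcript string."""
    if isinstance(chosen, list):
        # Conversational format: list of {"role": ..., "content": ...} dicts
        for message in chosen:
            if message.get("role") == "user":
                return message["content"]
        raise ValueError("no user message found")

    # Plain-text format (assumed): "\n\nHuman: ...\n\nAssistant: ..."
    human_tag, assistant_tag = "Human:", "Assistant:"
    start = chosen.find(human_tag)
    if start == -1:
        raise ValueError("no 'Human:' marker found")
    start += len(human_tag)
    end = chosen.find(assistant_tag, start)
    # If there is no assistant tag, keep everything after the human tag
    return chosen[start:end if end != -1 else None].strip()


chat = [
    {"role": "user", "content": "What vitamins are essential for the body to function?"},
    {"role": "assistant", "content": "..."},
]
print(extract_prompt_any(chat))
print(extract_prompt_any("\n\nHuman: How important is climate change?\n\nAssistant: Very."))
```

Searching for the first message with role "user" rather than taking index 0 is a small safeguard in case a record starts with a system message.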

Final preference dataset

sample = preference_data_with_prompt.select(range(1))

sample['prompt'][0]

'What vitamins are essential for the body to function?'

sample['chosen'][0]

[{"content": "What vitamins are essential for the body to function?", "role": "user"},
 {"content": "There are some very important vitamins that ensure the proper functioning of the body, including Vitamins A, C, D, E, and K along ...}]
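
Before training, it can help to sanity-check the processed records. The sketch below assumes each record also carries a `rejected` column with the same message-list layout as `chosen`; that column and the helper name `is_valid_preference_record` are assumptions for illustration.

```python
def is_valid_preference_record(record):
    """Check that the record has a non-empty prompt and that both
    responses start with that prompt as their first message."""
    prompt = record.get("prompt")
    if not isinstance(prompt, str) or not prompt.strip():
        return False
    for key in ("chosen", "rejected"):  # "rejected" is an assumed column
        messages = record.get(key)
        if not messages or messages[0].get("content") != prompt:
            return False
    return True


record = {
    "prompt": "What vitamins are essential for the body to function?",
    "chosen": [
        {"role": "user", "content": "What vitamins are essential for the body to function?"},
        {"role": "assistant", "content": "..."},
    ],
    "rejected": [
        {"role": "user", "content": "What vitamins are essential for the body to function?"},
        {"role": "assistant", "content": "..."},
    ],
}
print(is_valid_preference_record(record))
```

A predicate like this could be passed to the dataset's `filter` method to drop malformed rows, provided the column names match.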

Let's practice!
