Breaking down the Transformer

Transformer Models with PyTorch

James Chapman

Curriculum Manager, DataCamp

The paper that changed everything...

- Attention Is All You Need by Ashish Vaswani et al. (arXiv:1706.03762)

- Attention mechanisms

- Optimized for text modeling

- Used in Large Language Models (LLMs)

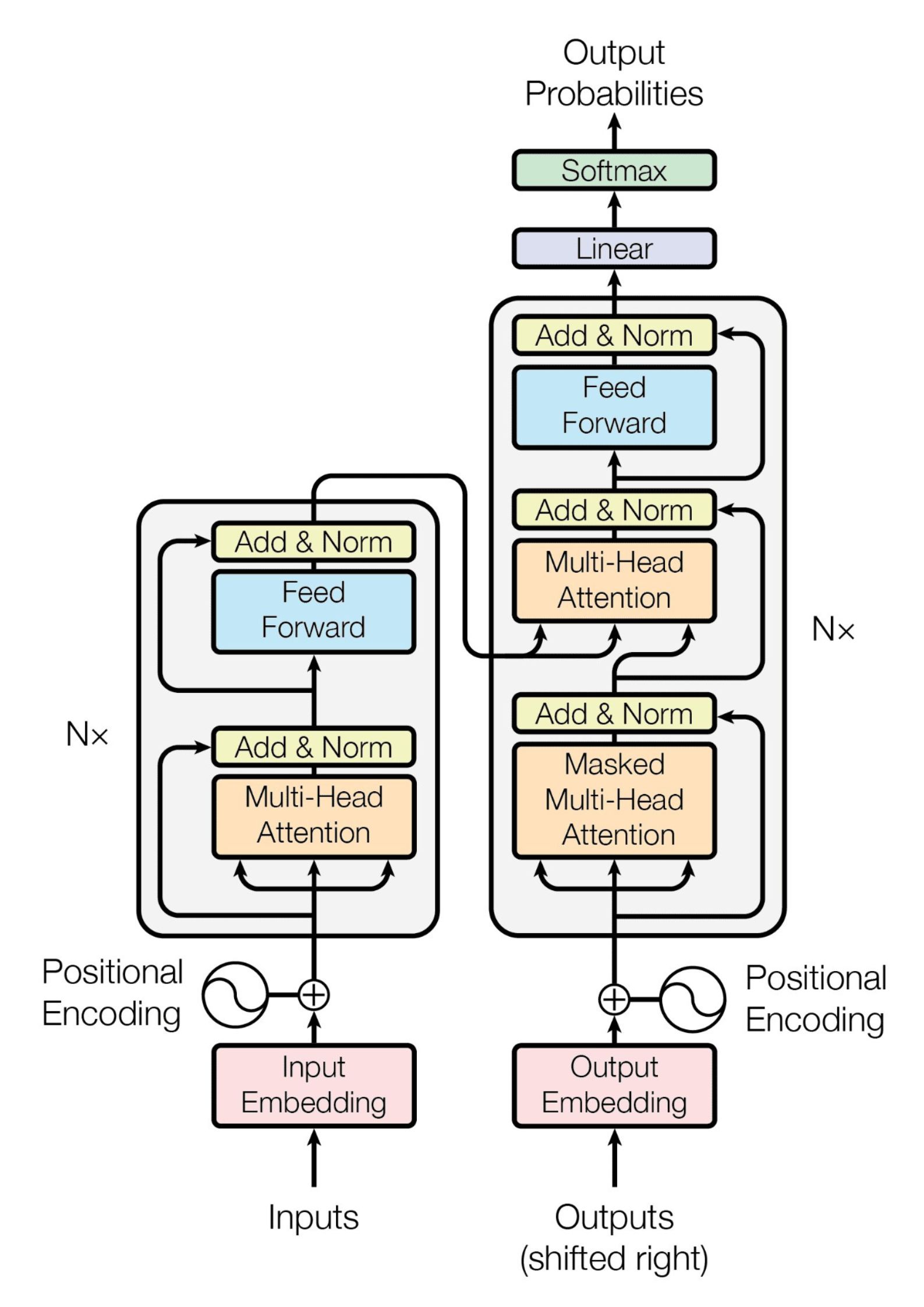

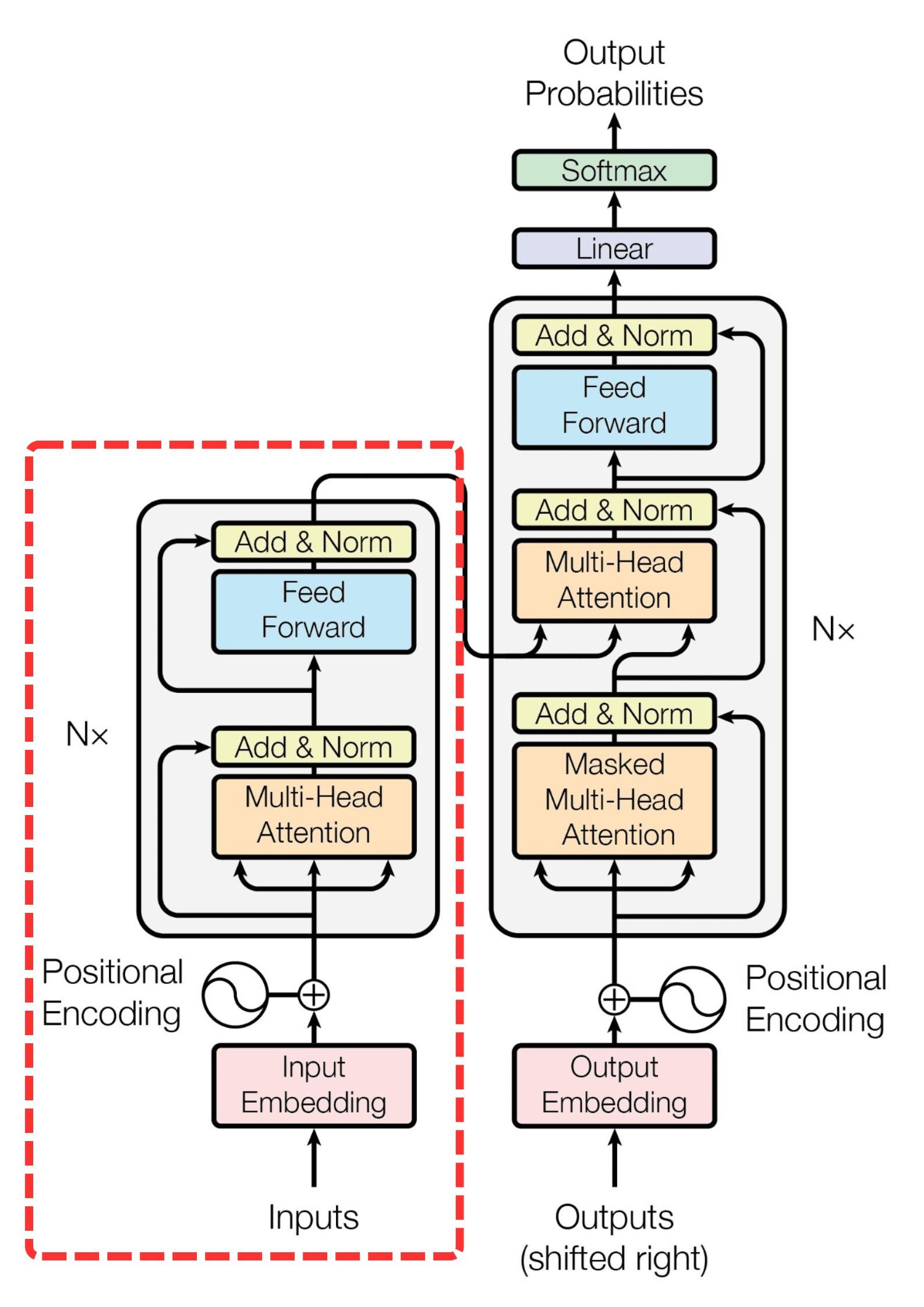

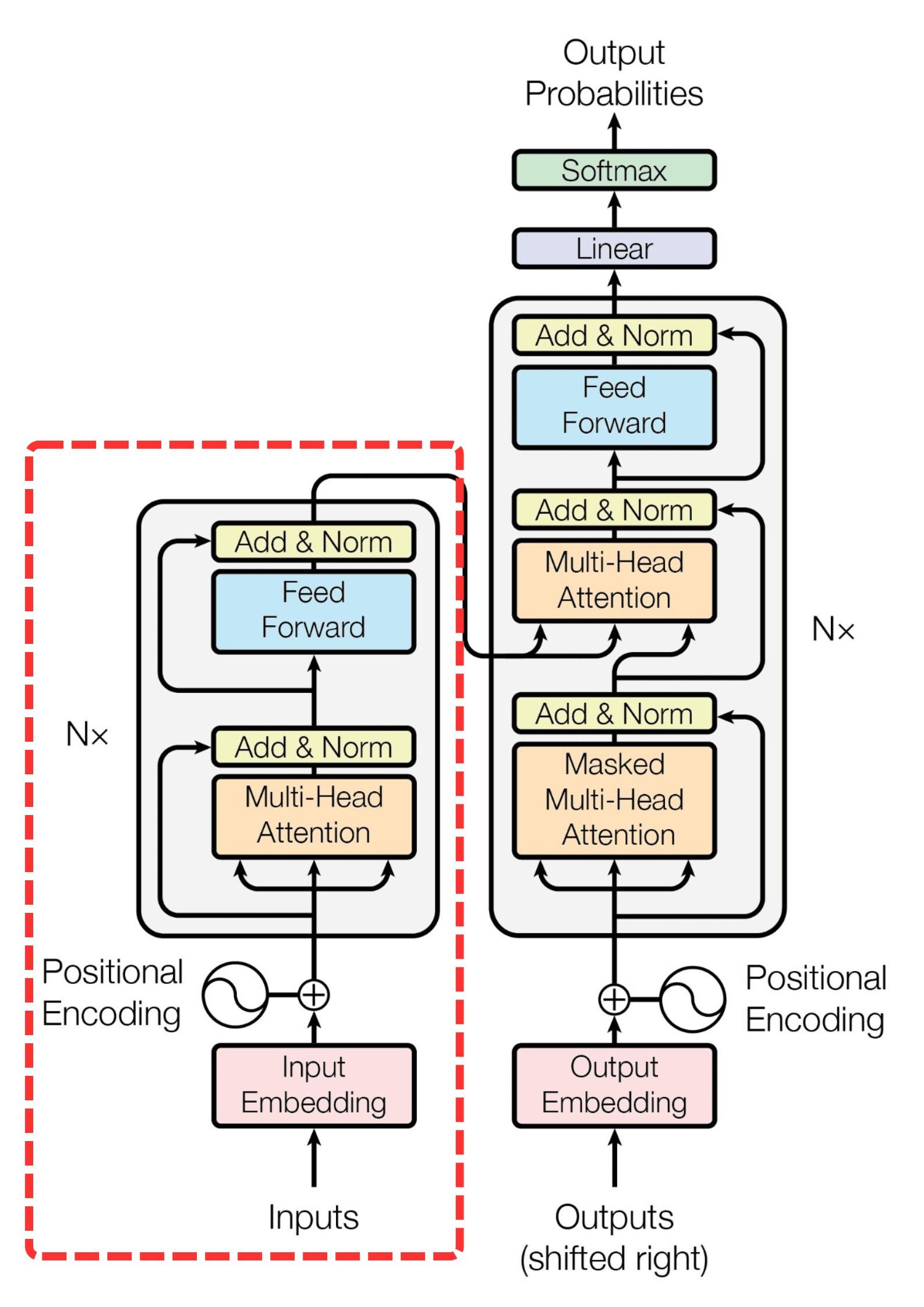

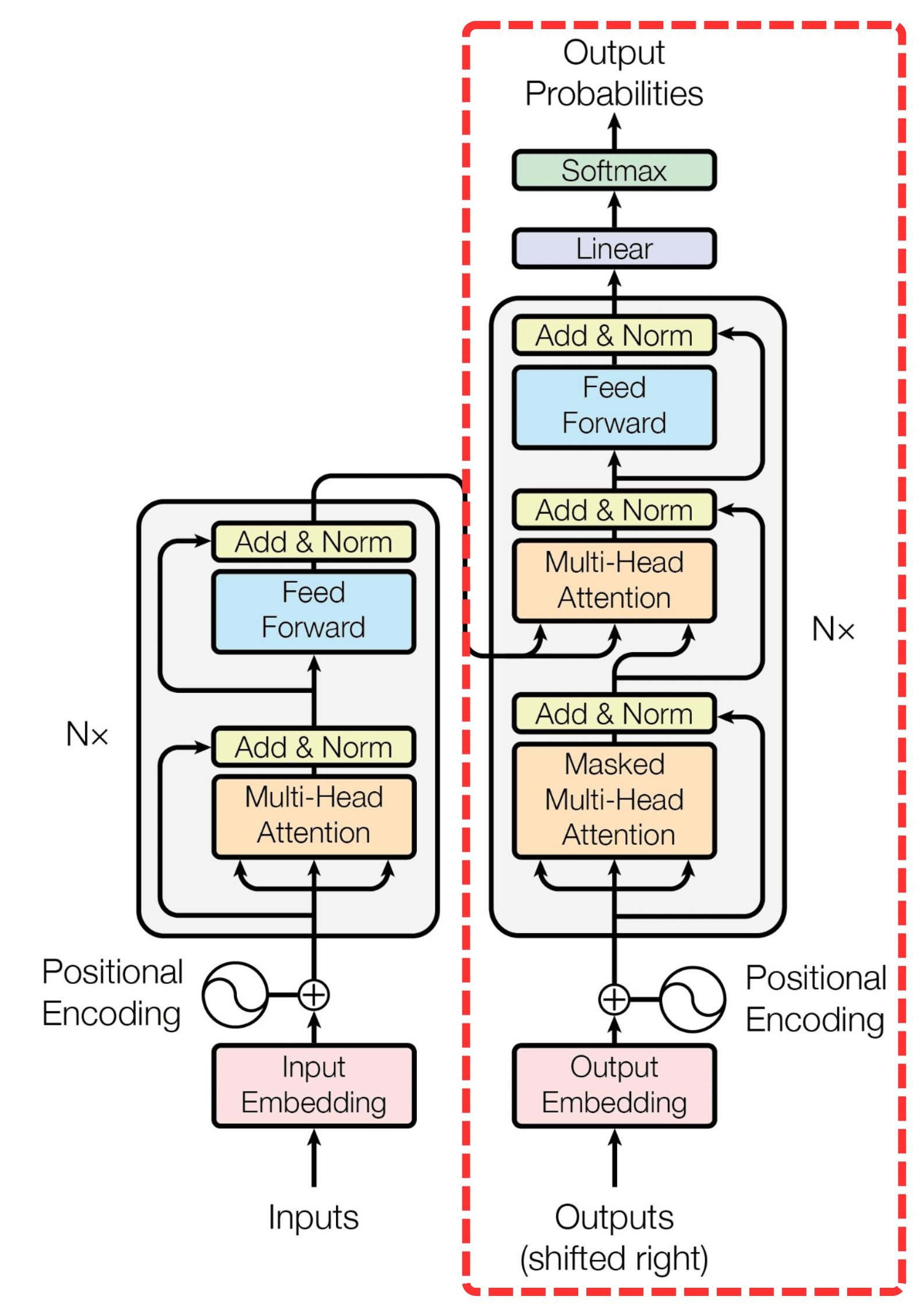

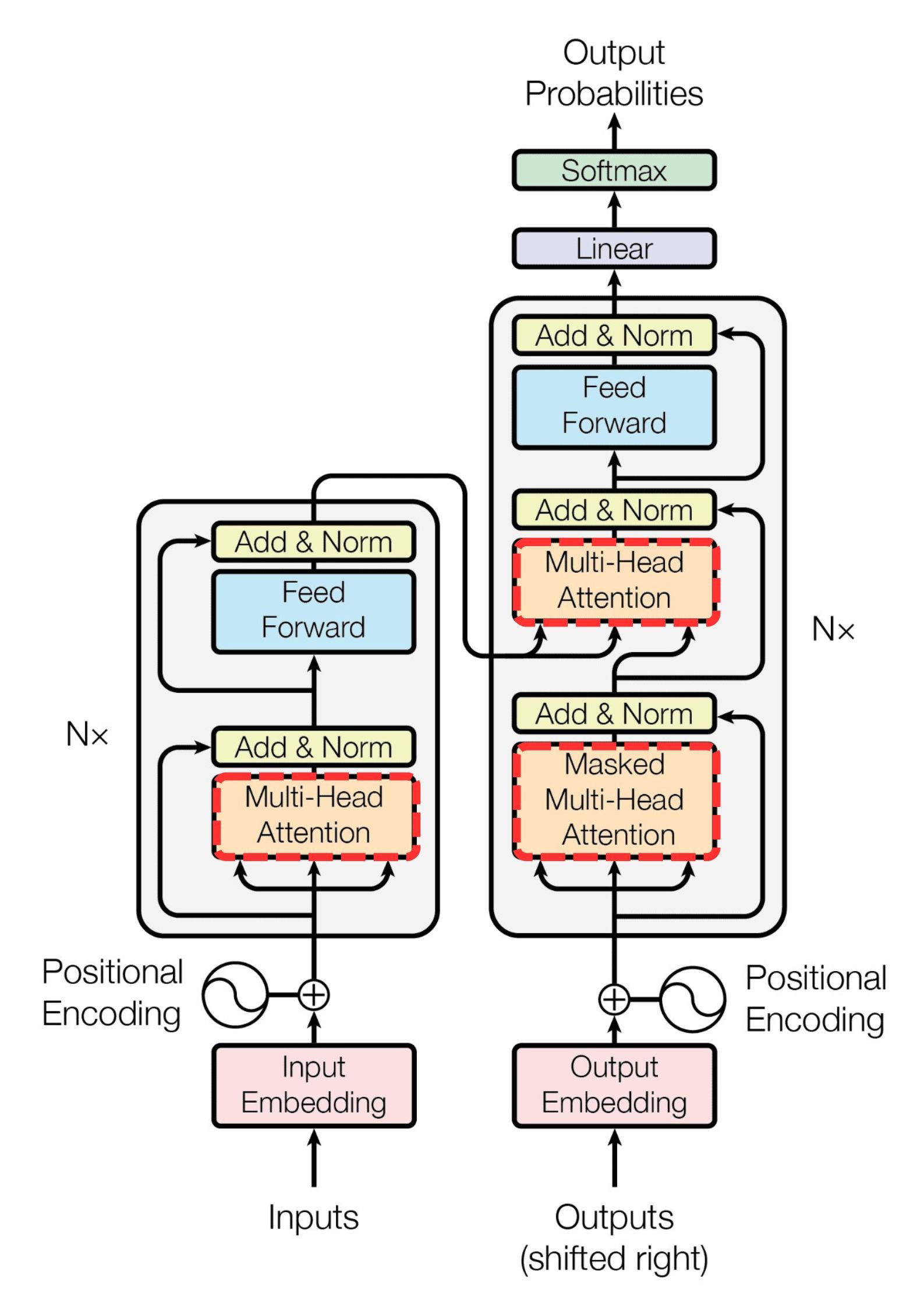

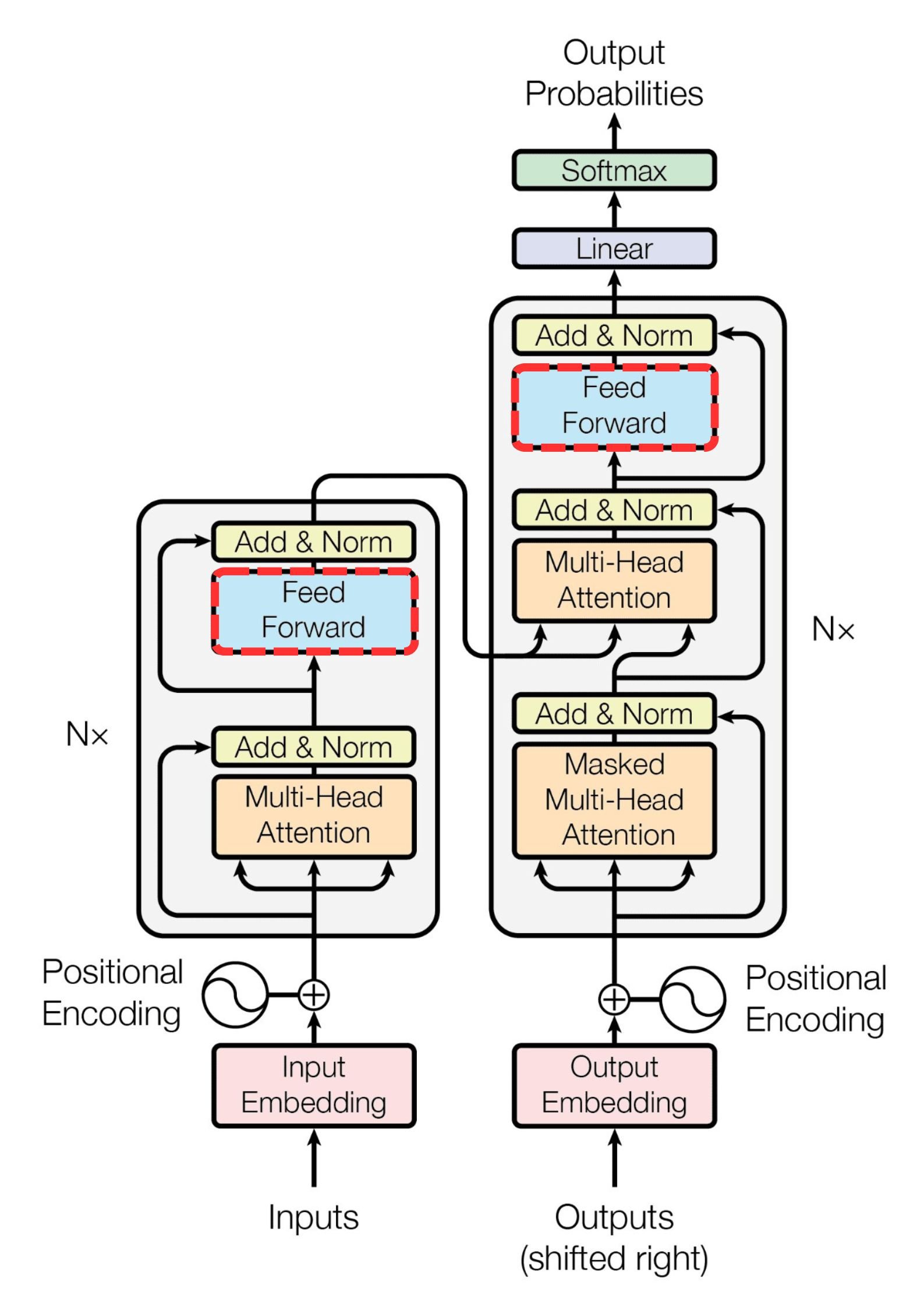

Unpacking the Transformer...

Encoder block

- Multiple identical layers

- Reads and processes the input sequence

- Generates context-rich numerical representations

- Uses self-attention and feed-forward networks

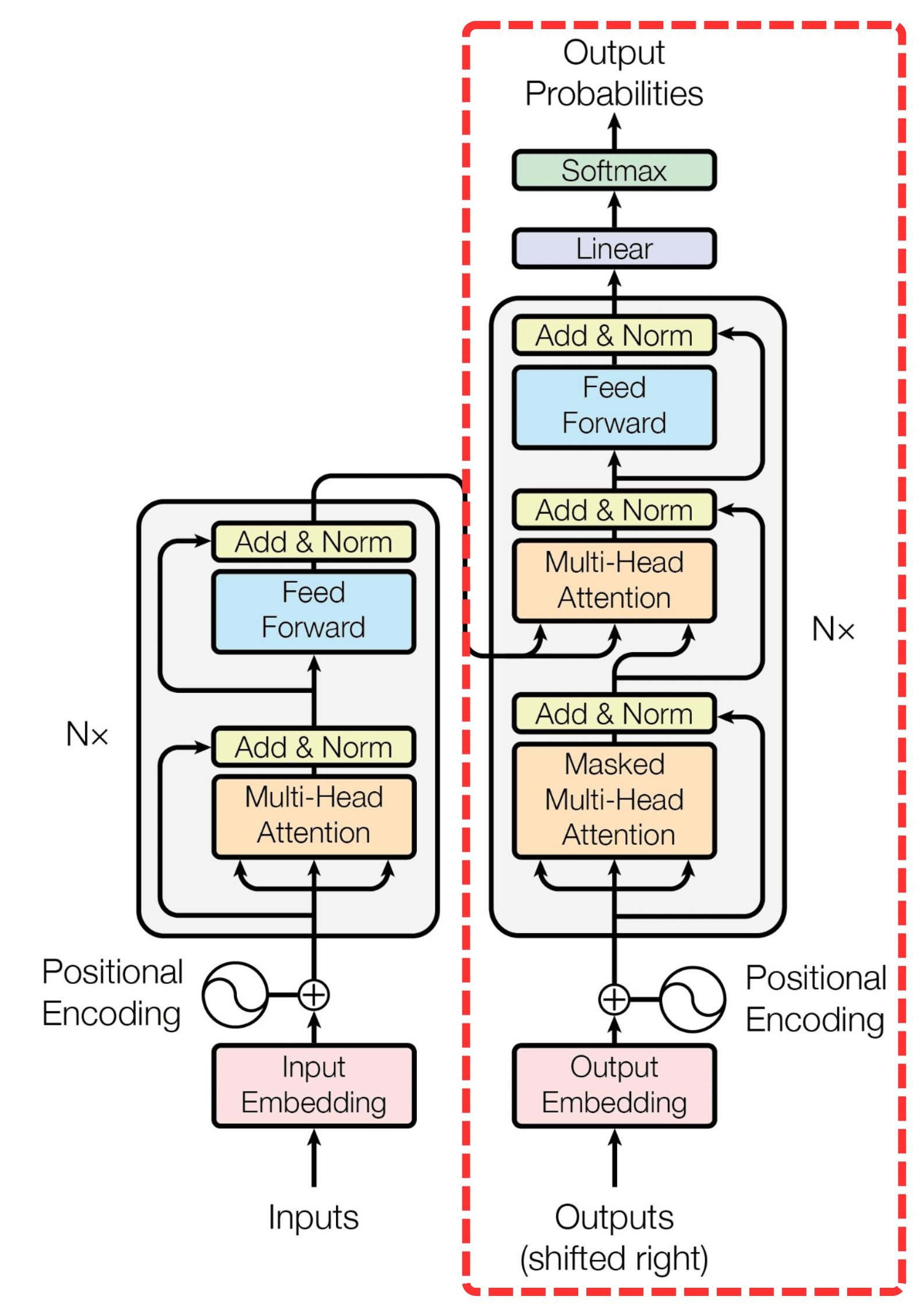
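
A stack of identical encoder layers can be sketched with PyTorch's built-in `nn.TransformerEncoderLayer` and `nn.TransformerEncoder`. The sizes below (`d_model=64`, `nhead=4`, two layers) and the random input are illustrative assumptions, not values from the slides.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# One encoder layer: self-attention followed by a feed-forward network
encoder_layer = nn.TransformerEncoderLayer(
    d_model=64, nhead=4, dim_feedforward=128, batch_first=True
)

# Stack identical layers to form the encoder (2 here, chosen for illustration)
encoder = nn.TransformerEncoder(encoder_layer, num_layers=2)
encoder.eval()  # disable dropout so the output is deterministic

# Dummy batch: 2 sequences of 10 tokens, already embedded to 64 dimensions
x = torch.randn(2, 10, 64)
with torch.no_grad():
    encoded = encoder(x)

# One context-aware vector per input token, same shape as the input
print(encoded.shape)  # torch.Size([2, 10, 64])
```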

Unpacking the Transformer...

Decoder block

- Maps the encoded input sequence to an output sequence

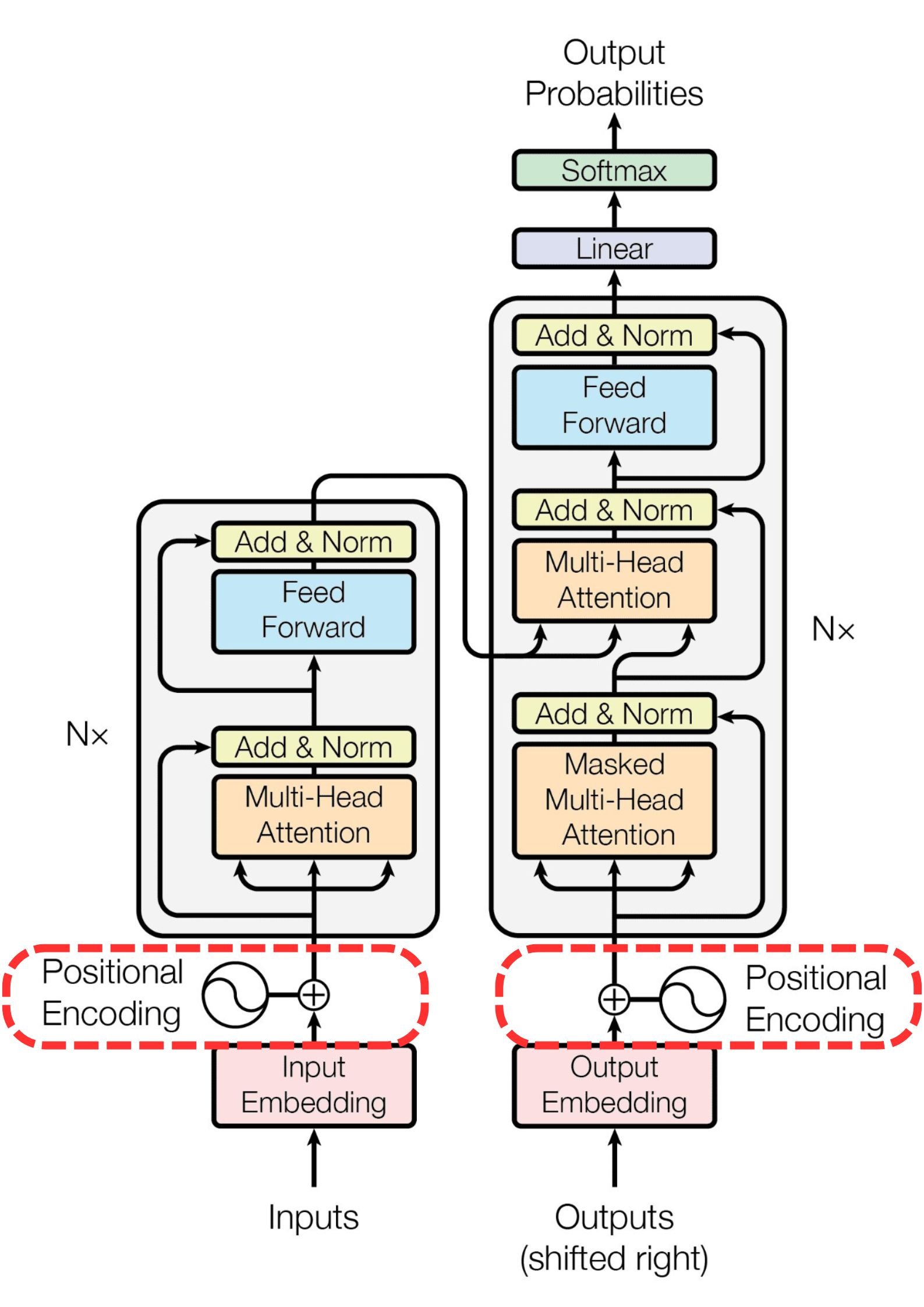
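
As a rough sketch of how a decoder consumes the encoder's output, the example below uses `nn.TransformerDecoder`. The tensor `memory` stands in for real encoder output, and the sizes and the hand-built causal mask are assumptions made for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

decoder_layer = nn.TransformerDecoderLayer(
    d_model=64, nhead=4, dim_feedforward=128, batch_first=True
)
decoder = nn.TransformerDecoder(decoder_layer, num_layers=2)
decoder.eval()  # disable dropout

memory = torch.randn(2, 10, 64)  # stand-in for the encoded input sequence
tgt = torch.randn(2, 7, 64)      # embedded output tokens generated so far

# Causal mask: -inf above the diagonal blocks attention to later positions
tgt_mask = torch.triu(torch.full((7, 7), float("-inf")), diagonal=1)

with torch.no_grad():
    decoded = decoder(tgt, memory, tgt_mask=tgt_mask)

# One vector per output position
print(decoded.shape)  # torch.Size([2, 7, 64])
```

In a full model, a final linear layer would typically map each of these vectors to scores over the vocabulary.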

Unpacking the Transformer...

Positional encoding

- Encode each token's position in the sequence

- Order is crucial for modeling sequences

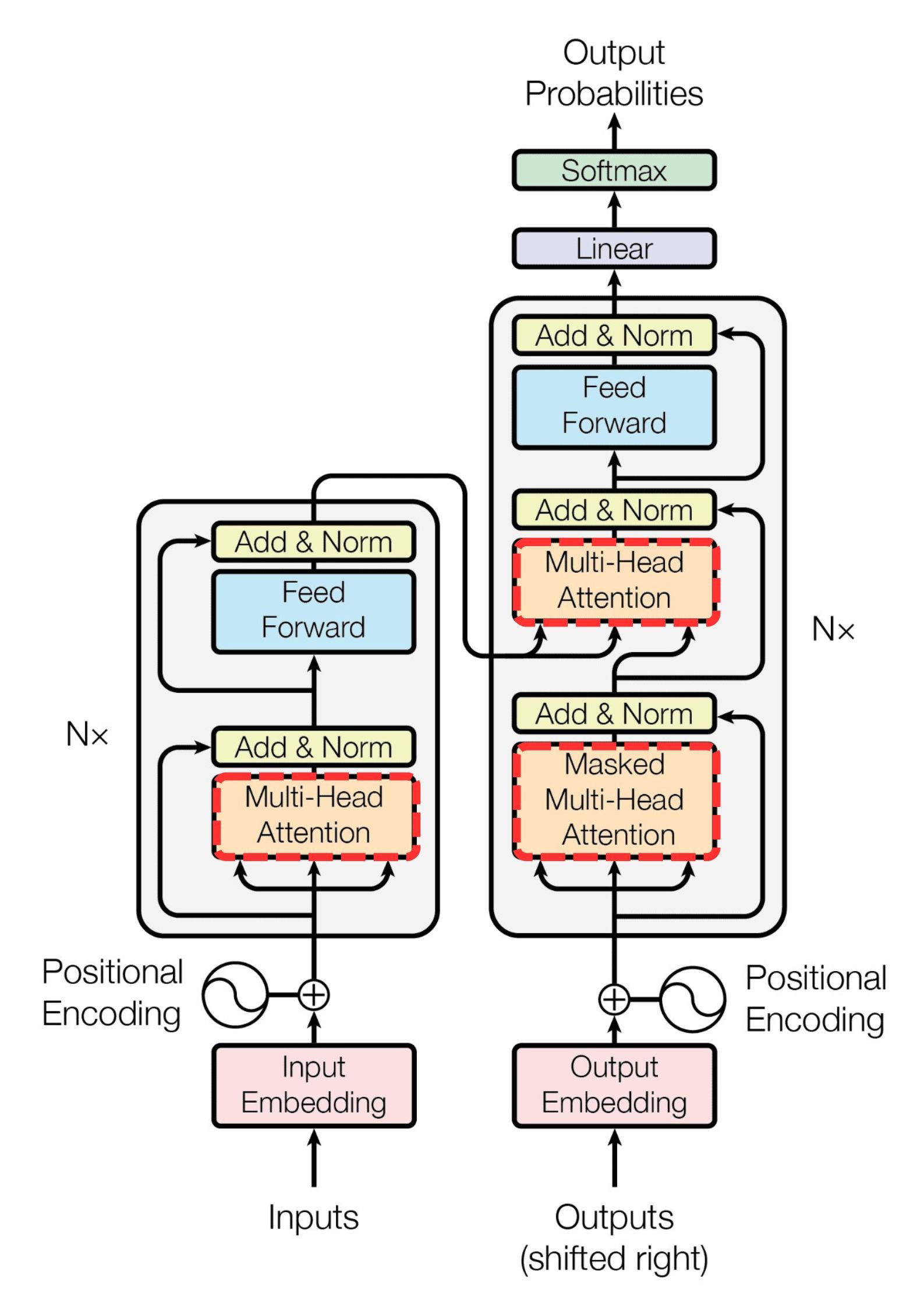
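
One common way to encode positions is the sinusoidal scheme from the original paper. The slides do not say which scheme is used, so treat this as a minimal sketch under that assumption. The function name and sizes are hypothetical.

```python
import math
import torch

def sinusoidal_positional_encoding(max_len: int, d_model: int) -> torch.Tensor:
    """Return a (max_len, d_model) tensor of sinusoidal encodings (d_model must be even)."""
    position = torch.arange(max_len, dtype=torch.float32).unsqueeze(1)  # (max_len, 1)
    # Frequencies decrease geometrically across the embedding dimensions
    div_term = torch.exp(
        torch.arange(0, d_model, 2, dtype=torch.float32) * (-math.log(10000.0) / d_model)
    )
    pe = torch.zeros(max_len, d_model)
    pe[:, 0::2] = torch.sin(position * div_term)  # even dimensions
    pe[:, 1::2] = torch.cos(position * div_term)  # odd dimensions
    return pe

pe = sinusoidal_positional_encoding(max_len=50, d_model=16)

# Add position information to a batch of (randomly generated) token embeddings
embeddings = torch.randn(2, 10, 16)
x = embeddings + pe[:10]  # broadcasts over the batch dimension
print(x.shape)  # torch.Size([2, 10, 16])
```

Because each position gets a distinct pattern, the same token embedding yields a different vector at different positions in the sequence.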

Unpacking the Transformer...

Attention mechanisms

- Focus on important tokens and their relationships

- Improve text generation

Self-attention

- Weights each token's importance relative to the other tokens in the sequence

- Captures long-range dependencies
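
The weighting idea can be sketched as single-head scaled dot-product self-attention. The function name, the random projection matrices, and the sizes are assumptions for illustration; in a trained model the projections are learned.

```python
import math
import torch

torch.manual_seed(0)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over one sequence."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    # Similarity of every token with every other token, scaled by sqrt(d_k)
    scores = q @ k.transpose(-2, -1) / math.sqrt(q.size(-1))
    # Softmax turns each row of scores into weights that sum to 1
    weights = torch.softmax(scores, dim=-1)
    # Each output is a weighted mix of all value vectors
    return weights @ v, weights

x = torch.randn(5, 8)  # 5 tokens, 8-dimensional embeddings
w_q, w_k, w_v = (torch.randn(8, 8) for _ in range(3))

output, weights = self_attention(x, w_q, w_k, w_v)
print(output.shape)   # torch.Size([5, 8])
print(weights.shape)  # torch.Size([5, 5])
```

Every token attends to every other token in a single step, regardless of distance, which is what lets attention capture long-range dependencies.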

Multi-head attention

- Splits the embedding dimensions across multiple attention heads

- Heads capture distinct patterns, leading to richer representations

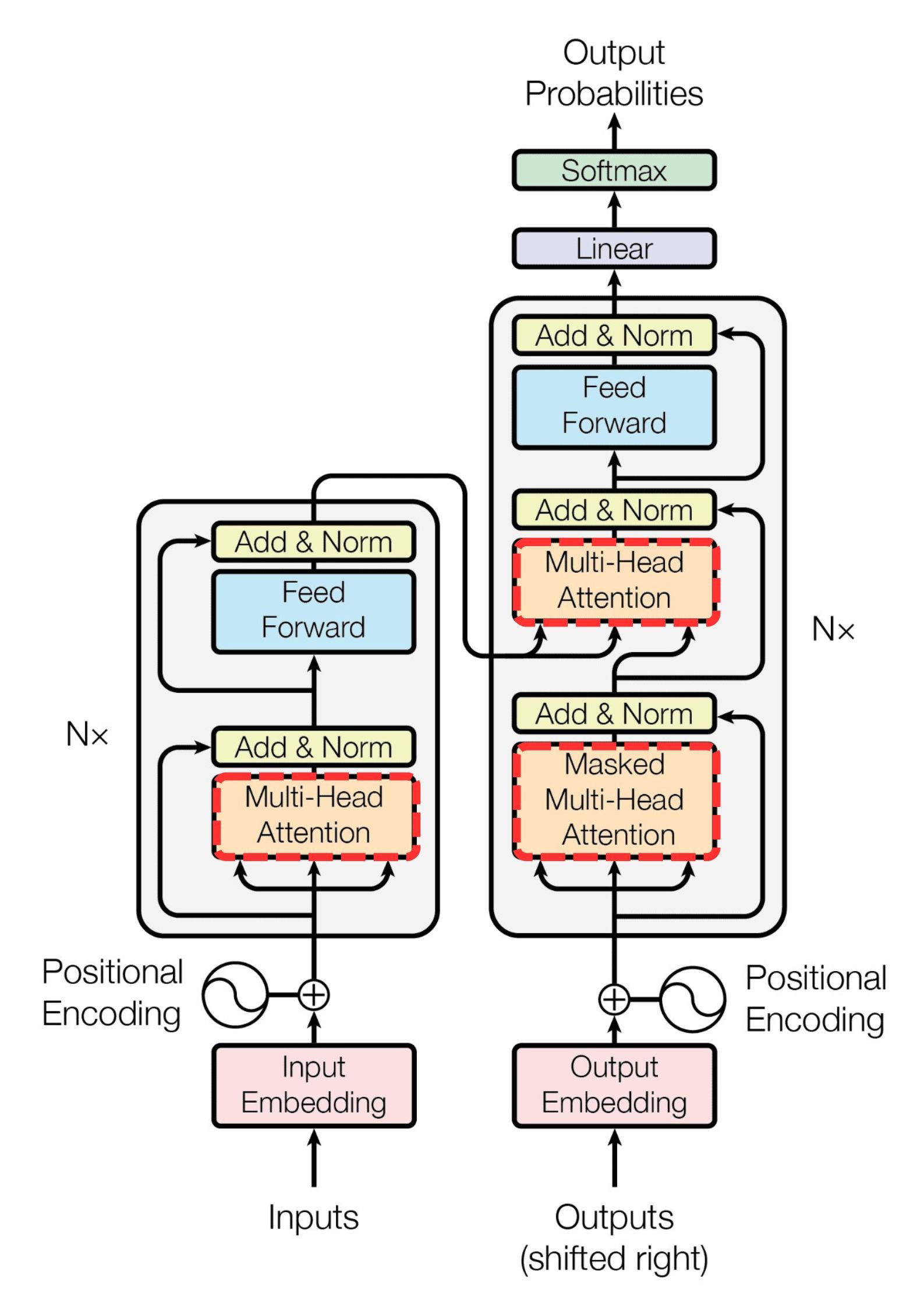
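
PyTorch's `nn.MultiheadAttention` gives a quick way to see the heads in action. This is a small sketch with assumed sizes (`embed_dim=64`, `num_heads=4`) and random input.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

embed_dim, num_heads = 64, 4
head_dim = embed_dim // num_heads  # each head works on a 16-dimensional slice

mha = nn.MultiheadAttention(embed_dim, num_heads, batch_first=True)
mha.eval()

x = torch.randn(2, 10, embed_dim)  # 2 sequences of 10 tokens
with torch.no_grad():
    # Self-attention: query, key, and value are all the same tensor.
    # average_attn_weights=False keeps a separate attention map per head.
    out, attn = mha(x, x, x, need_weights=True, average_attn_weights=False)

print(out.shape)   # torch.Size([2, 10, 64])
print(attn.shape)  # torch.Size([2, 4, 10, 10])
```

The second tensor holds one 10 x 10 attention map per head, so each head can weight the tokens differently before the results are combined.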

Unpacking the Transformer...

Position-wise feed-forward networks

- Small fully connected networks: two linear layers with a nonlinearity in between

- Each token is transformed independently

- The same weights are applied at every position, hence "position-wise"
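
To make "position-wise" concrete, the sketch below defines a small feed-forward block and checks that a token's output is the same whether it is processed alone or with the rest of the sequence. The class name `FeedForward`, the ReLU activation, and the sizes are illustrative assumptions.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

class FeedForward(nn.Module):
    """Two linear layers with a ReLU in between, applied to each token vector."""

    def __init__(self, d_model: int, d_ff: int):
        super().__init__()
        self.linear1 = nn.Linear(d_model, d_ff)
        self.linear2 = nn.Linear(d_ff, d_model)
        self.relu = nn.ReLU()

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # nn.Linear acts on the last dimension, so every position is handled separately
        return self.linear2(self.relu(self.linear1(x)))

ffn = FeedForward(d_model=16, d_ff=64)
x = torch.randn(2, 5, 16)  # 2 sequences of 5 tokens

full = ffn(x)          # transform the whole sequence at once
single = ffn(x[:, 2])  # transform only the third token

# The third token's output does not depend on its neighbours
same = torch.allclose(full[:, 2], single, atol=1e-5)
print(same)  # True
```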

Transformers in PyTorch

- d_model: Dimensionality of model inputs

- nhead: Number of attention heads

- num_encoder_layers: Number of encoder layers

- num_decoder_layers: Number of decoder layers

    import torch.nn as nn

    model = nn.Transformer(
        d_model=512,
        nhead=8,
        num_encoder_layers=6,
        num_decoder_layers=6
    )
    print(model)

    Transformer(
      (encoder): TransformerEncoder(
        (layers): ModuleList(
          (0-5): 6 x TransformerEncoderLayer(
            (self_attn): MultiheadAttention(
              (out_proj): NonDynamicallyQuantizableLinear(in_features=512, out_features=512, bias=True)
            )
            (linear1): Linear(in_features=512, out_features=2048, bias=True)
            (dropout): Dropout(p=0.1, inplace=False)
            (linear2): Linear(in_features=2048, out_features=512, bias=True)
            (norm1): LayerNorm((512,), eps=1e-05, elementwise_affine=True)
            (norm2): LayerNorm((512,), eps=1e-05, elementwise_affine=True)
            (dropout1): Dropout(p=0.1, inplace=False)
            (dropout2): Dropout(p=0.1, inplace=False)
          )
        )
        (norm): LayerNorm((512,), eps=1e-05, elementwise_affine=True)
      )
      ...
      (decoder): TransformerDecoder(
        (layers): ModuleList(
          (0-5): 6 x TransformerDecoderLayer(
            (self_attn): MultiheadAttention(
              (out_proj): NonDynamicallyQuantizableLinear(in_features=512, out_features=512, bias=True)
            )
            (multihead_attn): MultiheadAttention(
              (out_proj): NonDynamicallyQuantizableLinear(in_features=512, out_features=512, bias=True)
            )
            (linear1): Linear(in_features=512, out_features=2048, bias=True)
            (dropout): Dropout(p=0.1, inplace=False)
            (linear2): Linear(in_features=2048, out_features=512, bias=True)
            (norm1): LayerNorm((512,), eps=1e-05, elementwise_affine=True)
            (norm2): LayerNorm((512,), eps=1e-05, elementwise_affine=True)
            (norm3): LayerNorm((512,), eps=1e-05, elementwise_affine=True)
            (dropout1): Dropout(p=0.1, inplace=False)
            (dropout2): Dropout(p=0.1, inplace=False)
            (dropout3): Dropout(p=0.1, inplace=False)
          )
        )
        (norm): LayerNorm((512,), eps=1e-05, elementwise_affine=True)
      )
    )
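
To go one step beyond printing the architecture, the sketch below runs a forward pass through the same configuration. The random tensors stand in for embedded source and target sequences, and the sequence lengths and batch size are assumptions. Note that `nn.Transformer` expects inputs shaped `(sequence length, batch, d_model)` unless `batch_first=True` is passed.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

model = nn.Transformer(
    d_model=512,
    nhead=8,
    num_encoder_layers=6,
    num_decoder_layers=6,
)
model.eval()  # disable dropout

# Default layout is (sequence length, batch size, d_model)
src = torch.randn(10, 2, 512)  # stand-in for embedded source tokens
tgt = torch.randn(7, 2, 512)   # stand-in for embedded target tokens

with torch.no_grad():
    out = model(src, tgt)

# One d_model-sized vector per target position
print(out.shape)  # torch.Size([7, 2, 512])

# Total number of learnable parameters in the model
n_params = sum(p.numel() for p in model.parameters())
print(n_params)
```

`nn.Transformer` contains only the encoder and decoder stacks, so token embeddings, positional encodings, and the final output projection need to be added separately.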

Let's practice!
