Decoder transformers

Transformer Models with PyTorch

James Chapman

Curriculum Manager, DataCamp

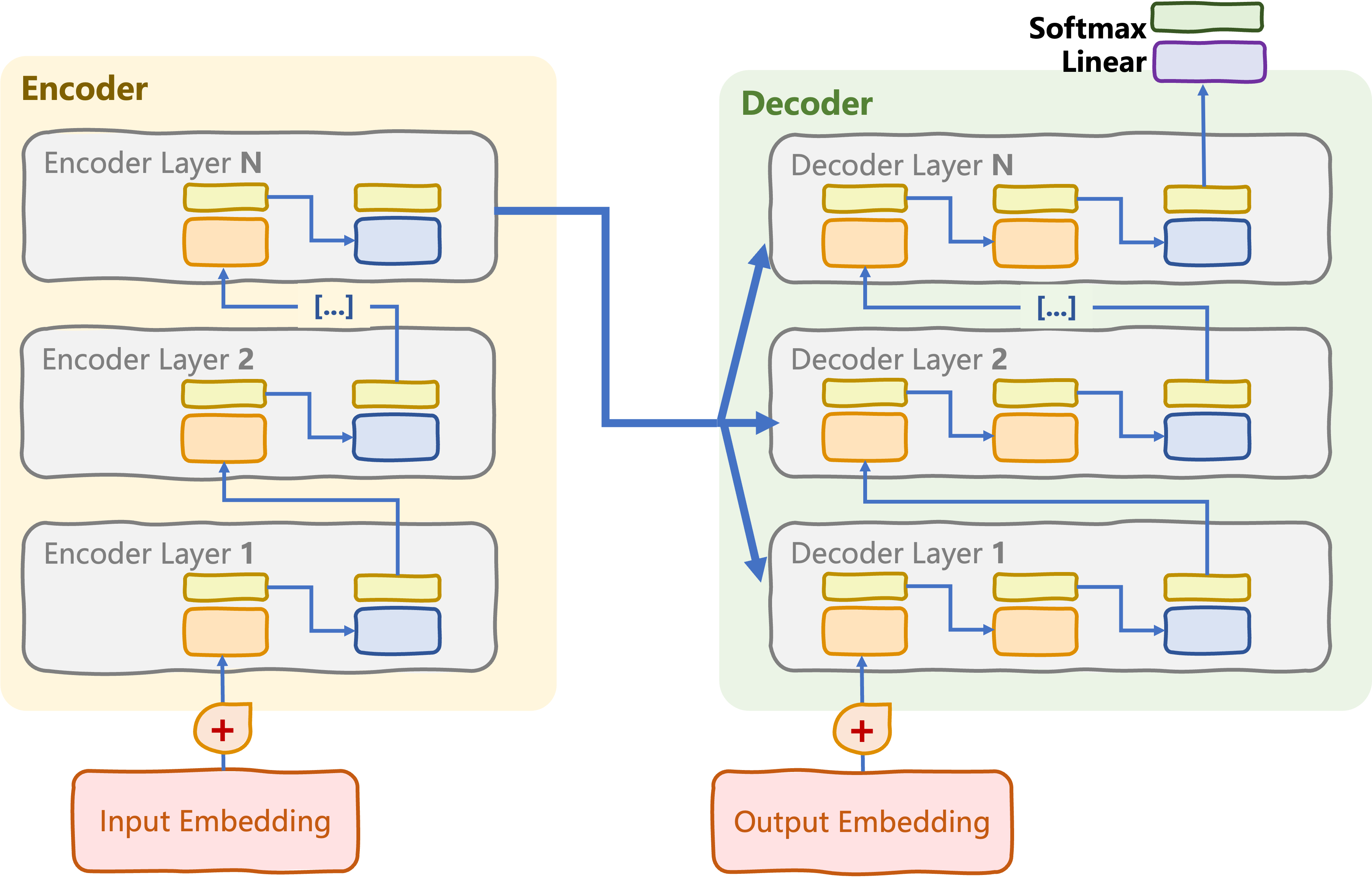

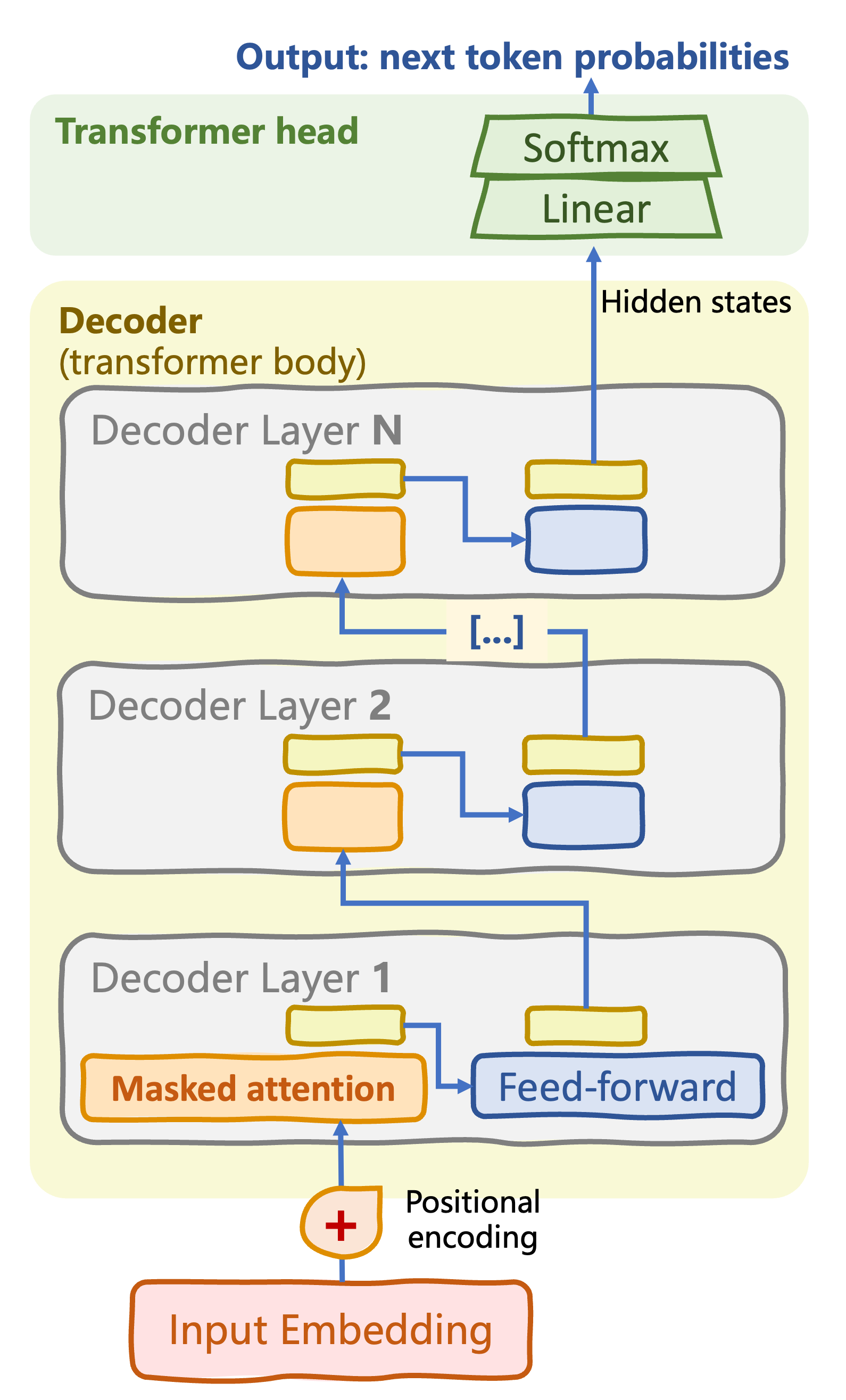

From original to decoder-only transformer


Autoregressive sequence generation: text generation and completion


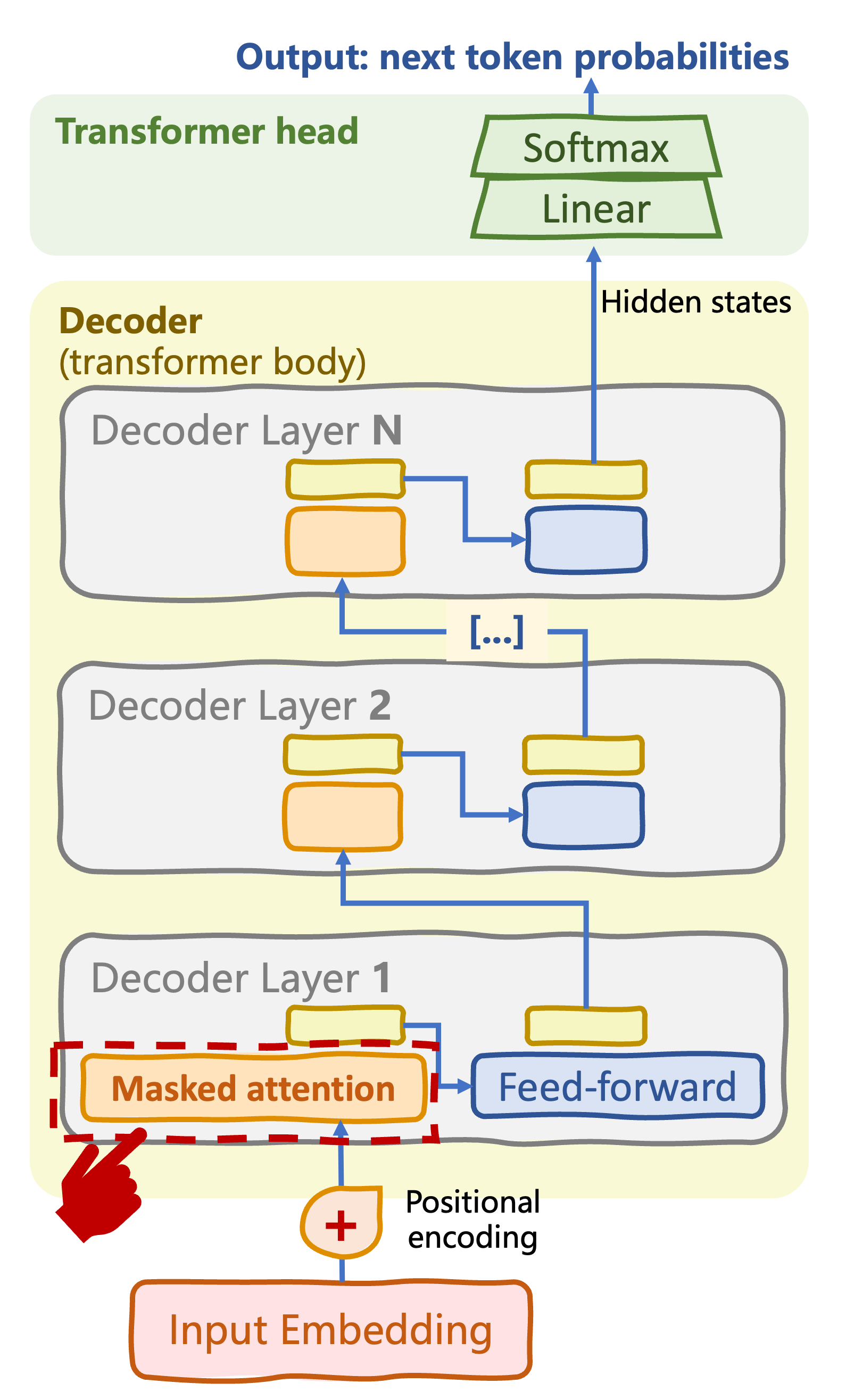

Masked multi-head self-attention

- Hides later tokens in the sequence from each position


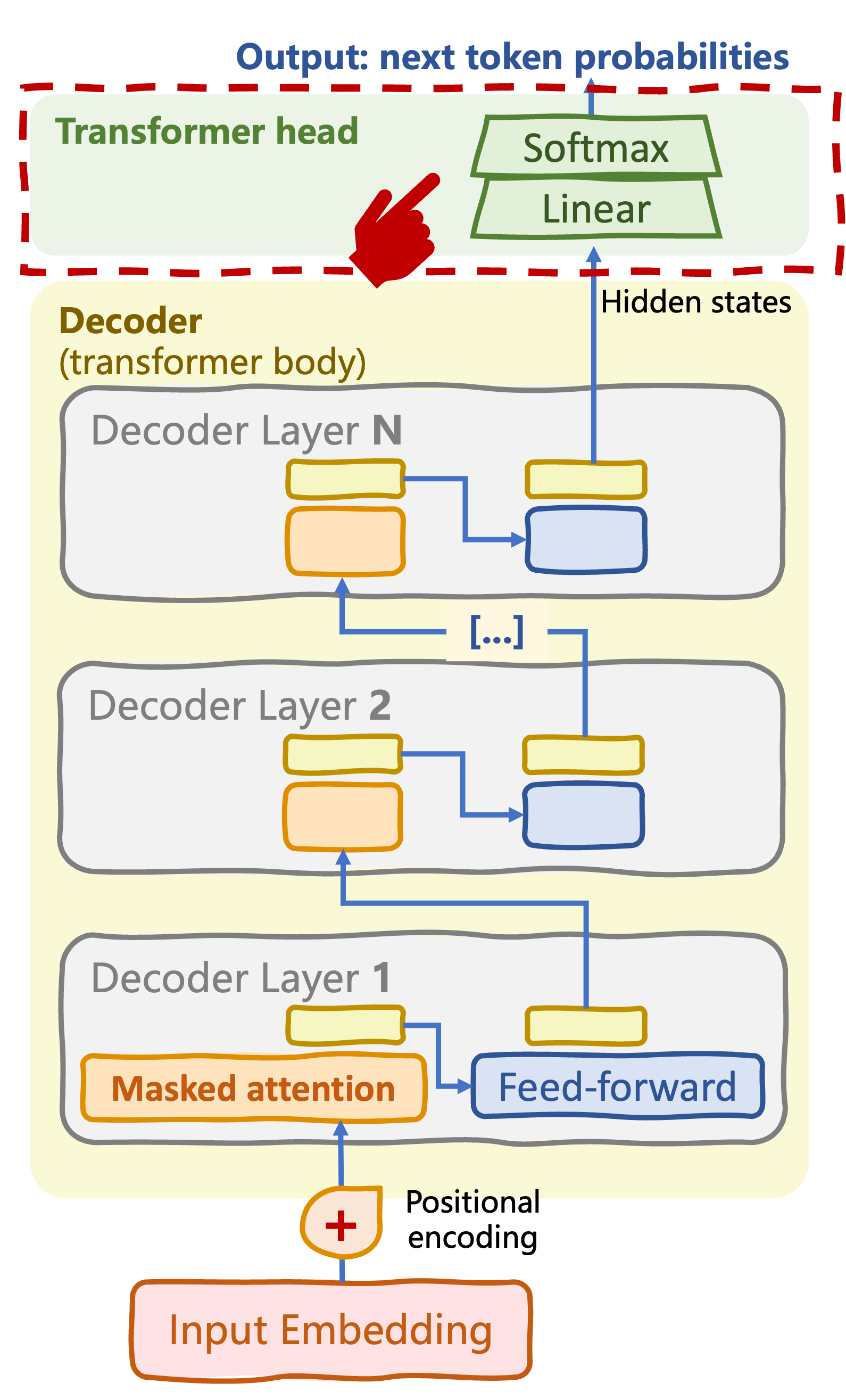

Decoder-only transformer head

- Linear layer + softmax over the vocabulary

- Predicts the most likely next token
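A minimal sketch of the head, assuming hypothetical sizes and a random tensor standing in for the decoder body's output: a linear layer maps each position's `d_model` vector to `vocab_size` scores, softmax turns those scores into a probability distribution, and the distribution at the last position ranks candidates for the next token.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes, chosen only for illustration
batch_size, seq_len, d_model, vocab_size = 2, 4, 8, 10

# Stand-in for the decoder body's output: one d_model vector per position
hidden = torch.randn(batch_size, seq_len, d_model)

# Head: project each position onto the vocabulary, then normalize with softmax
head = nn.Linear(d_model, vocab_size)
probs = torch.softmax(head(hidden), dim=-1)  # (batch_size, seq_len, vocab_size)

# The distribution at the last position scores candidates for the next token
next_token = probs[:, -1, :].argmax(dim=-1)  # (batch_size,)
print(probs.shape, next_token)
```

Taking the argmax is the simplest way to pick "the most likely" token; sampling from `probs` is a common alternative when more varied text is wanted.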

Masked self-attention/causal attention

- Key to autoregressive or causal behavior

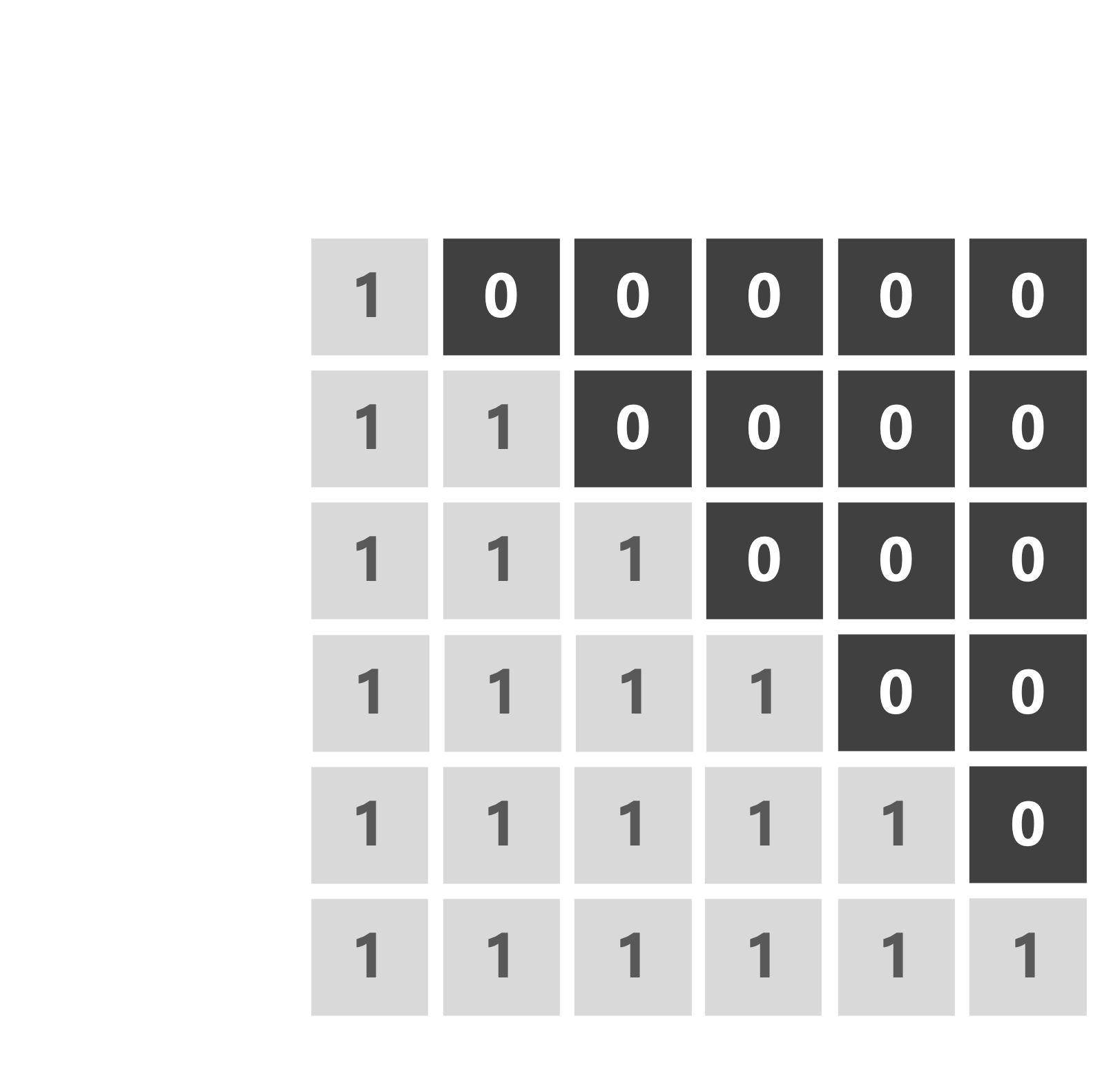

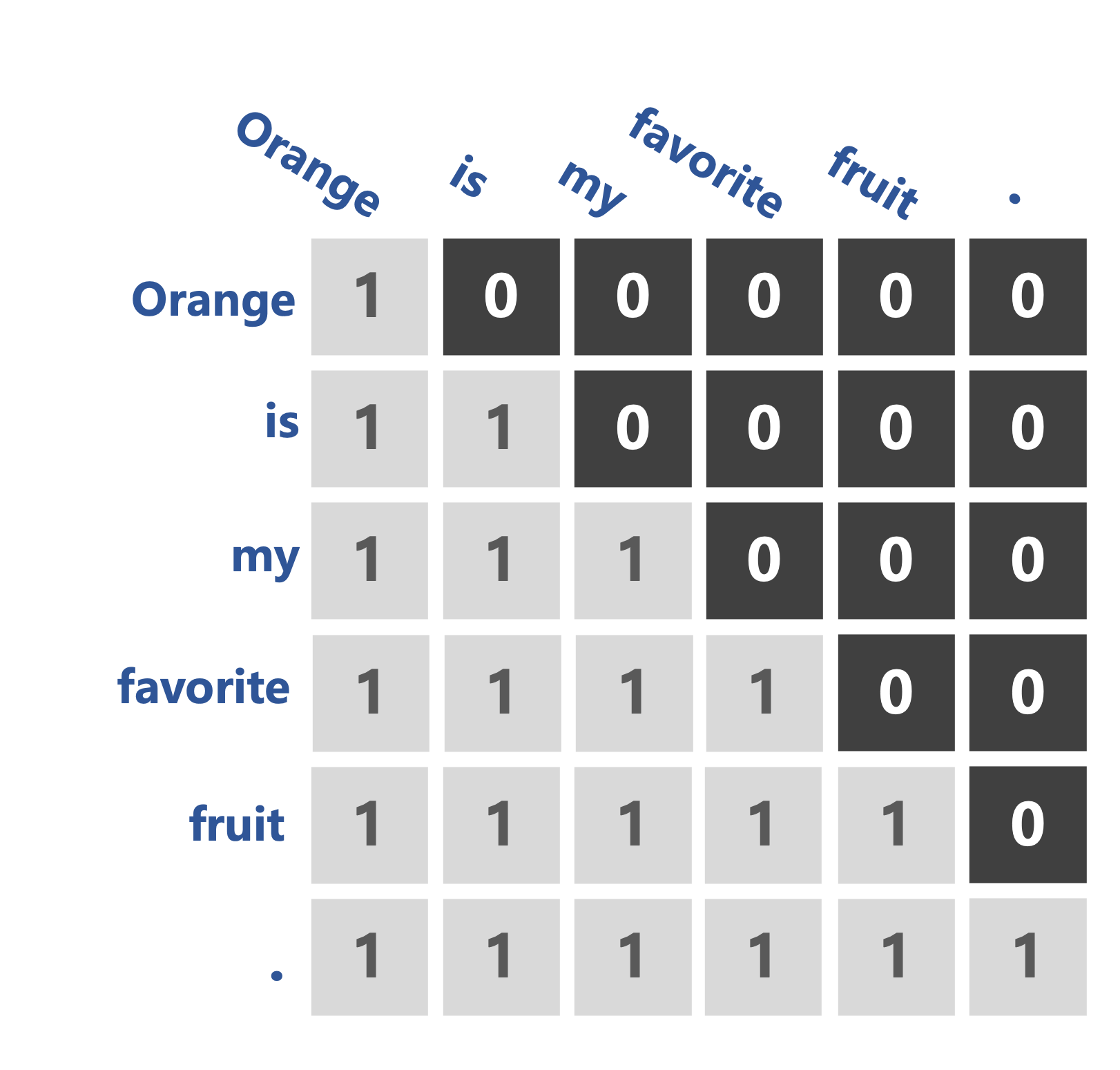

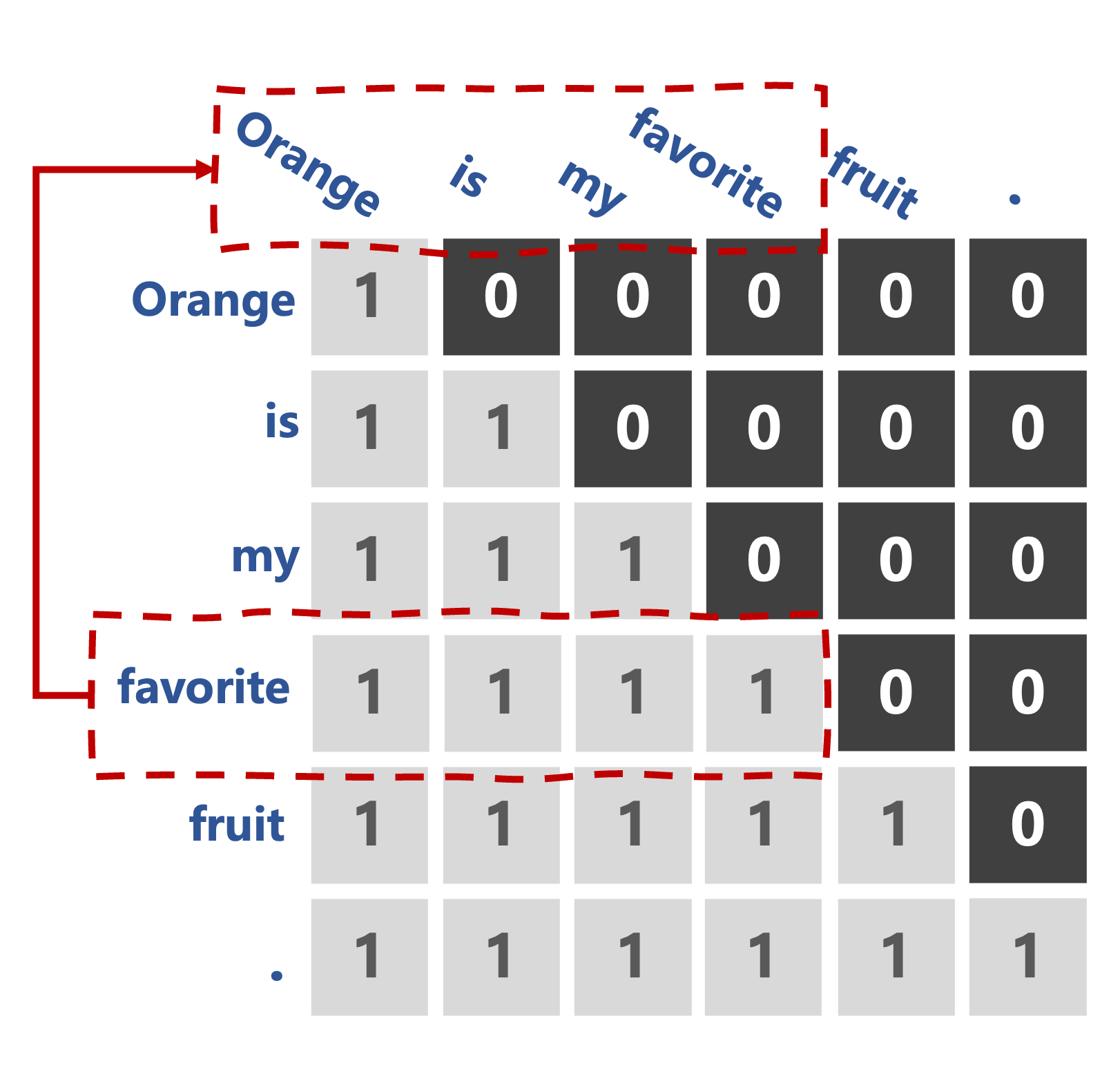

- Triangular (causal) attention mask


- Each token attends only to itself and earlier tokens in the sequence


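```python
import torch

# True on and below the diagonal (positions a token may attend to), False above it
tgt_mask = (1 - torch.triu(
    torch.ones(1, seq_len, seq_len), diagonal=1)
).bool()
```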


- Example: in "orange is my favorite", the token "favorite" attends to "orange", "is", "my", and "favorite" itself

- Enforced causal attention: the model predicts the next word to generate, e.g., "fruit"
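To see what the triangular mask does inside attention, this sketch applies it to random stand-in scores for the four tokens of "orange is my favorite" before the softmax. Filling blocked positions with `-inf` is an assumption here (an attention implementation may use a large negative constant instead); either way, future positions receive zero or near-zero weight.

```python
import torch

torch.manual_seed(0)

tokens = ["orange", "is", "my", "favorite"]
seq_len = len(tokens)

# Same construction as the tgt_mask: True on and below the diagonal, False above it
tgt_mask = (1 - torch.triu(torch.ones(1, seq_len, seq_len), diagonal=1)).bool()

# Random stand-in for scaled query-key scores, shape (1, seq_len, seq_len)
scores = torch.randn(1, seq_len, seq_len)

# Block future positions before the softmax so they receive exactly zero weight
scores = scores.masked_fill(~tgt_mask, float("-inf"))
weights = torch.softmax(scores, dim=-1)

# List which tokens each query position actually attends to
for i, tok in enumerate(tokens):
    visible = [tokens[j] for j in range(seq_len) if weights[0, i, j] > 0]
    print(f"{tok!r} attends to {visible}")
```

The first row has a single allowed position, so "orange" puts all of its weight on itself, while the "favorite" row spreads its weight over all four tokens.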

Decoder layer

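```python
import torch.nn as nn

class DecoderLayer(nn.Module):
    def __init__(self, d_model, num_heads, d_ff, dropout):
        super().__init__()
        # MultiHeadAttention and FeedForwardSubLayer are assumed to be defined elsewhere (not shown here)
        self.self_attn = MultiHeadAttention(d_model, num_heads)
        self.ff_sublayer = FeedForwardSubLayer(d_model, d_ff)
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)
        self.dropout = nn.Dropout(dropout)

    def forward(self, x, tgt_mask):
        # Masked (causal) self-attention: queries, keys, and values all come from x
        attn_output = self.self_attn(x, x, x, tgt_mask)
        # Residual connection + layer normalization
        x = self.norm1(x + self.dropout(attn_output))
        # Position-wise feed-forward sublayer, again with residual + normalization
        ff_output = self.ff_sublayer(x)
        x = self.norm2(x + self.dropout(ff_output))
        return x
```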
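`DecoderLayer` depends on `MultiHeadAttention` and `FeedForwardSubLayer`, which are not defined in this section. To run the same structure on its own, the sketch below swaps in PyTorch's built-in `nn.MultiheadAttention` and a hypothetical two-layer `FeedForwardSubLayer` (Linear, ReLU, Linear); neither is claimed to be the course's class. One detail to watch: `nn.MultiheadAttention` treats `True` in a boolean `attn_mask` as "not allowed", the opposite of the `tgt_mask` convention, so the mask is inverted. The final check illustrates causality: changing only the last token leaves the outputs at earlier positions unchanged.

```python
import torch
import torch.nn as nn

class FeedForwardSubLayer(nn.Module):
    # Hypothetical position-wise feed-forward block: Linear -> ReLU -> Linear
    def __init__(self, d_model, d_ff):
        super().__init__()
        self.fc1 = nn.Linear(d_model, d_ff)
        self.fc2 = nn.Linear(d_ff, d_model)
        self.relu = nn.ReLU()

    def forward(self, x):
        return self.fc2(self.relu(self.fc1(x)))

class DecoderLayerSketch(nn.Module):
    # Same structure as DecoderLayer, with nn.MultiheadAttention swapped in
    def __init__(self, d_model, num_heads, d_ff, dropout):
        super().__init__()
        self.self_attn = nn.MultiheadAttention(d_model, num_heads, batch_first=True)
        self.ff_sublayer = FeedForwardSubLayer(d_model, d_ff)
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)
        self.dropout = nn.Dropout(dropout)

    def forward(self, x, tgt_mask):
        # nn.MultiheadAttention blocks positions where the bool mask is True,
        # so the "True = may attend" causal mask is inverted here
        attn_output, _ = self.self_attn(x, x, x, attn_mask=~tgt_mask, need_weights=False)
        x = self.norm1(x + self.dropout(attn_output))
        ff_output = self.ff_sublayer(x)
        return self.norm2(x + self.dropout(ff_output))

torch.manual_seed(0)
seq_len, d_model = 5, 16
layer = DecoderLayerSketch(d_model, num_heads=4, d_ff=32, dropout=0.1).eval()  # eval() disables dropout

# 2D (seq_len, seq_len) causal mask: True on and below the diagonal
tgt_mask = torch.tril(torch.ones(seq_len, seq_len)).bool()

x = torch.randn(2, seq_len, d_model)
out = layer(x, tgt_mask)

# Change only the last token; earlier positions cannot see it, so their outputs stay the same
x_changed = x.clone()
x_changed[:, -1] = torch.randn(2, d_model)
out_changed = layer(x_changed, tgt_mask)
print(out.shape, torch.allclose(out[:, :-1], out_changed[:, :-1], atol=1e-5))
```

The layer preserves the input shape `(batch, seq_len, d_model)`, which is what lets `num_layers` of them be stacked.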

Decoder transformer body and head

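```python
import torch.nn as nn
import torch.nn.functional as F

class TransformerDecoder(nn.Module):
    def __init__(self, vocab_size, d_model, num_layers, num_heads, d_ff, dropout, max_seq_length):
        super(TransformerDecoder, self).__init__()
        # InputEmbeddings and PositionalEncoding are assumed to be defined elsewhere (not shown here)
        self.embedding = InputEmbeddings(vocab_size, d_model)
        self.positional_encoding = PositionalEncoding(d_model, max_seq_length)
        # Body: a stack of num_layers decoder layers
        self.layers = nn.ModuleList([DecoderLayer(d_model, num_heads, d_ff, dropout) for _ in range(num_layers)])
        # Head: project each position onto the vocabulary
        self.fc = nn.Linear(d_model, vocab_size)

    def forward(self, x, tgt_mask):
        x = self.embedding(x)
        x = self.positional_encoding(x)
        for layer in self.layers:
            x = layer(x, tgt_mask)
        x = self.fc(x)
        # Log-probabilities over the vocabulary at every position
        return F.log_softmax(x, dim=-1)
```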

- self.fc: output linear layer with vocab_size neurons

- Add self.fc and a (log-)softmax activation in the forward pass
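The forward pass returns `log_softmax` rather than `softmax`. One common reason is that log-probabilities plug directly into a negative log-likelihood loss. The sketch below, using random logits and hypothetical target token ids (the training setup itself is not shown in this section), checks that `F.nll_loss` on `log_softmax` outputs matches `F.cross_entropy` on the raw logits, so returning raw logits from `self.fc` and using cross-entropy would be an equivalent design.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
batch_size, seq_len, vocab_size = 2, 4, 10

# Stand-in for self.fc(x): raw scores (logits) over the vocabulary at every position
logits = torch.randn(batch_size, seq_len, vocab_size)
log_probs = F.log_softmax(logits, dim=-1)

# Hypothetical target ids: the true next token at each position
targets = torch.randint(0, vocab_size, (batch_size, seq_len))

# Flatten positions so each row is one next-token prediction
loss_nll = F.nll_loss(log_probs.view(-1, vocab_size), targets.view(-1))
loss_ce = F.cross_entropy(logits.view(-1, vocab_size), targets.view(-1))
print(loss_nll.item(), loss_ce.item())  # equal up to floating-point rounding
```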

Instantiating the decoder-only transformer

```python
decoder = TransformerDecoder(vocab_size, d_model, num_layers, num_heads, d_ff, dropout, max_seq_length=seq_length)
output = decoder(input_sequence, tgt_mask)
```

```
tensor([[[ -9.4692, -9.8429, -9.3077, ..., -9.9523, -10.2669, -9.7084],
         [ -9.1556, -9.6133, -10.0923, ..., -9.3810, -9.0420, -9.1780],
         ...,
         [ -9.5327, -10.3534, -9.8443, ..., -9.8170, -8.8491, -8.8322],
         [ -9.6086, -9.6336, -10.1595, ..., -9.8550, -9.9955, -8.7121]],

        [[ -9.5865, -8.0360, -8.5056, ..., -9.9855, -9.5677, -9.0352],
         [ -9.7213, -8.6451, -8.3779, ..., -9.2994, -9.2601, -9.8509],
         ...,
         [ -9.0471, -9.7410, -10.0160, ..., -10.0195, -9.4651, -8.9605],
         [ -9.5767, -10.2692, -8.8394, ..., -8.3458, -9.1479, -10.0650]]],
       grad_fn=<LogSoftmaxBackward0>)
```
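The output holds log-probabilities of shape `(batch_size, seq_len, vocab_size)`. To generate text autoregressively, one common approach, greedy decoding, takes the most likely token at the last position, appends it, and runs the model again. So that the loop runs on its own, this sketch uses a hypothetical `TinyLM` stand-in with the same call signature as `TransformerDecoder`; `causal_mask` and `greedy_generate` are likewise illustrative helpers, not course code. With the real decoder, the growing sequence would likely need to stay within `max_seq_length`.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class TinyLM(nn.Module):
    # Hypothetical stand-in with TransformerDecoder's interface:
    # token ids (batch, seq_len) in, log-probabilities (batch, seq_len, vocab_size) out
    def __init__(self, vocab_size, d_model):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, d_model)
        self.fc = nn.Linear(d_model, vocab_size)

    def forward(self, x, tgt_mask):
        # tgt_mask is unused here: each position only sees its own token
        return F.log_softmax(self.fc(self.embedding(x)), dim=-1)

def causal_mask(seq_len):
    # True on and below the diagonal, matching the tgt_mask construction
    return (1 - torch.triu(torch.ones(1, seq_len, seq_len), diagonal=1)).bool()

@torch.no_grad()
def greedy_generate(model, prompt_ids, max_new_tokens):
    ids = prompt_ids
    for _ in range(max_new_tokens):
        log_probs = model(ids, causal_mask(ids.size(1)))            # (batch, seq_len, vocab_size)
        next_id = log_probs[:, -1, :].argmax(dim=-1, keepdim=True)  # most likely next token
        ids = torch.cat([ids, next_id], dim=1)                      # append and feed back in
    return ids

torch.manual_seed(0)
model = TinyLM(vocab_size=20, d_model=8).eval()
prompt = torch.tensor([[3, 7, 1]])
generated = greedy_generate(model, prompt, max_new_tokens=5)
print(generated)
```

Greedy decoding is deterministic; sampling from `log_probs[:, -1, :].exp()` instead of taking the argmax is a common way to get more varied completions.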

