Putting it all together

Introduction to Data Engineering

Vincent Vankrunkelsven

Data Engineer @ DataCamp

The ETL function

    import pandas as pd

    def extract_table_to_df(tablename, db_engine):
        return pd.read_sql("SELECT * FROM {}".format(tablename), db_engine)

    def split_columns_transform(df, column, pat, suffixes):
        # Converts column into str and splits it on pat
        ...

    def load_df_into_dwh(film_df, tablename, schema, db_engine):
        # to_sql is a DataFrame method, not a top-level pandas function
        return film_df.to_sql(tablename, db_engine, schema=schema, if_exists="replace")

    db_engines = { ... }  # Needs to be configured

    def etl():
        # Extract
        film_df = extract_table_to_df("film", db_engines["store"])
        # Transform
        film_df = split_columns_transform(film_df, "rental_rate", ".", ["_dollar", "_cents"])
        # Load
        load_df_into_dwh(film_df, "film", "store", db_engines["dwh"])
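To see the extract, transform, load shape run end to end without configuring `db_engines`, here is a minimal sketch that swaps pandas and the real database engines for the standard-library `sqlite3` module and plain lists of dicts. The helper names (`extract_table`, `split_column_transform`, `load_rows`), the two in-memory databases, and the sample rows are assumptions made for illustration; they are not the course's implementation of the elided function bodies.

```python
import sqlite3

def extract_table(conn, tablename):
    # Table names cannot be bound as SQL parameters, so pass trusted names only
    cursor = conn.execute("SELECT * FROM {}".format(tablename))
    columns = [d[0] for d in cursor.description]
    return [dict(zip(columns, row)) for row in cursor.fetchall()]

def split_column_transform(rows, column, pat, suffixes):
    # Convert the column to str, split it once on pat, keep both parts
    out = []
    for row in rows:
        left, right = str(row[column]).split(pat, 1)
        new_row = dict(row)
        new_row[column + suffixes[0]] = left
        new_row[column + suffixes[1]] = right
        out.append(new_row)
    return out

def load_rows(conn, rows, tablename):
    # Drop and recreate the target table, mirroring if_exists="replace"
    columns = list(rows[0])
    conn.execute("DROP TABLE IF EXISTS {}".format(tablename))
    conn.execute("CREATE TABLE {} ({})".format(tablename, ", ".join(columns)))
    placeholders = ", ".join("?" for _ in columns)
    conn.executemany(
        "INSERT INTO {} VALUES ({})".format(tablename, placeholders),
        [tuple(row[c] for c in columns) for row in rows],
    )
    conn.commit()

def etl(store, dwh):
    rows = extract_table(store, "film")                                             # Extract
    rows = split_column_transform(rows, "rental_rate", ".", ["_dollar", "_cents"])  # Transform
    load_rows(dwh, rows, "film")                                                    # Load

# Hypothetical sample data: two in-memory databases stand in for store and warehouse
store = sqlite3.connect(":memory:")
store.execute("CREATE TABLE film (title TEXT, rental_rate REAL)")
store.executemany("INSERT INTO film VALUES (?, ?)", [("Film A", 4.99), ("Film B", 0.99)])
dwh = sqlite3.connect(":memory:")

etl(store, dwh)
result = dwh.execute(
    "SELECT title, rental_rate_dollar, rental_rate_cents FROM film ORDER BY title"
).fetchall()
print(result)
```

Keeping each step as its own function means `etl()` stays a short, argument-light callable, which is the shape a scheduler needs.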

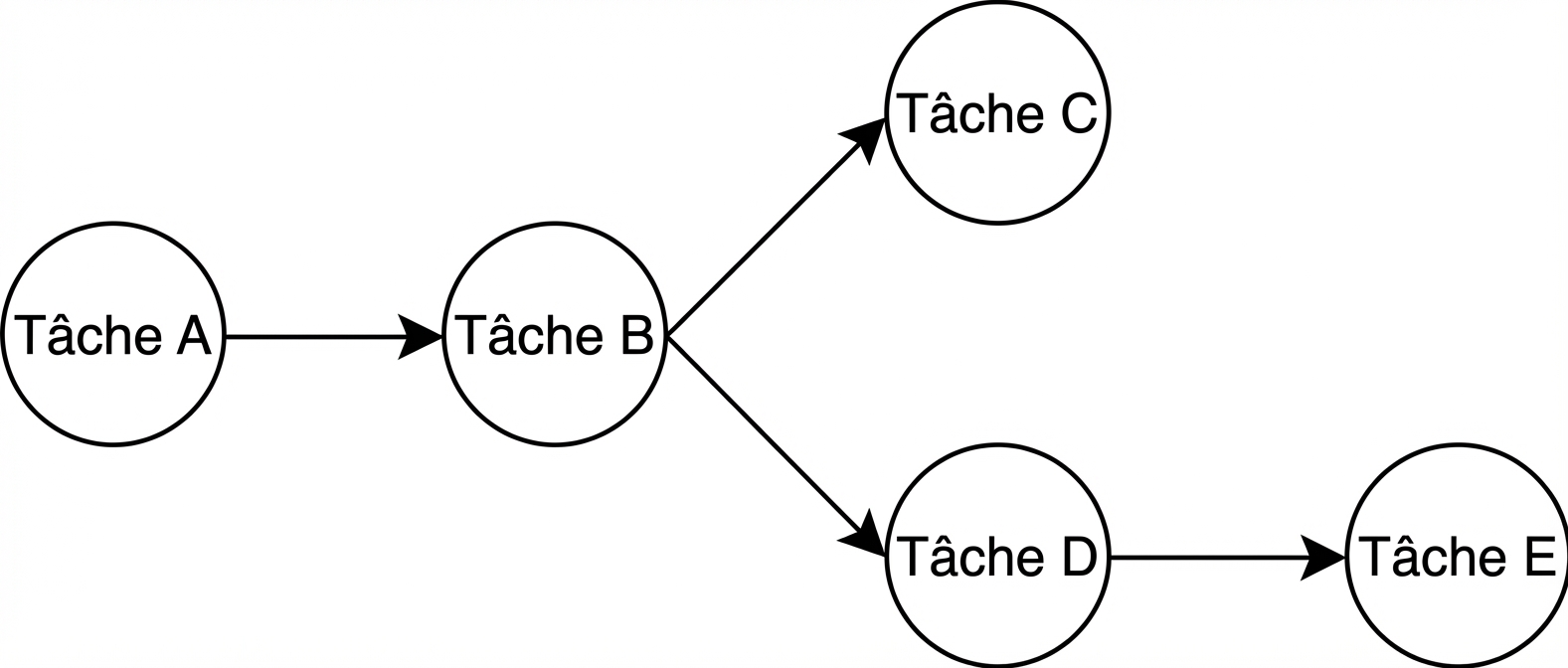

Airflow refresher

- Workflow scheduler

- Python

- DAGs

- Tasks defined in operators (e.g. BashOperator)

Scheduling with DAGs in Airflow

    from airflow.models import DAG

    dag = DAG(dag_id="sample",
              ...,
              schedule_interval="0 0 * * *")

    # cron
    # .------------------------- minute (0 - 59)
    # | .----------------------- hour (0 - 23)
    # | | .--------------------- day of the month (1 - 31)
    # | | | .------------------- month (1 - 12)
    # | | | | .----------------- day of the week (0 - 6)
    # * * * * * <command>

    # Example
    0 * * * * # Every hour at minute 0
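To make the five cron fields concrete, the sketch below checks whether a given `datetime` matches a cron expression. It is a simplified illustration, not how Airflow evaluates schedules: the function names `cron_matches` and `_field_matches` are hypothetical, and only `*`, single integers, and comma-separated lists are supported (no ranges or step values).

```python
from datetime import datetime

def _field_matches(field, value):
    # Supports "*", single integers, and comma-separated lists only
    for part in field.split(","):
        if part == "*" or int(part) == value:
            return True
    return False

def cron_matches(expression, moment):
    minute, hour, dom, month, dow = expression.split()
    # cron counts Sunday as 0; isoweekday() gives Monday=1 ... Sunday=7
    weekday = moment.isoweekday() % 7
    dom_ok = _field_matches(dom, moment.day)
    dow_ok = _field_matches(dow, weekday)
    # Standard cron rule: if both day fields are restricted, either may match
    if dom != "*" and dow != "*":
        day_ok = dom_ok or dow_ok
    else:
        day_ok = dom_ok and dow_ok
    return (
        _field_matches(minute, moment.minute)
        and _field_matches(hour, moment.hour)
        and _field_matches(month, moment.month)
        and day_ok
    )

# "0 0 * * *" fires once a day at midnight; "0 * * * *" fires every hour at minute 0
print(cron_matches("0 0 * * *", datetime(2024, 1, 15, 0, 0)))
print(cron_matches("0 * * * *", datetime(2024, 1, 15, 12, 30)))
```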

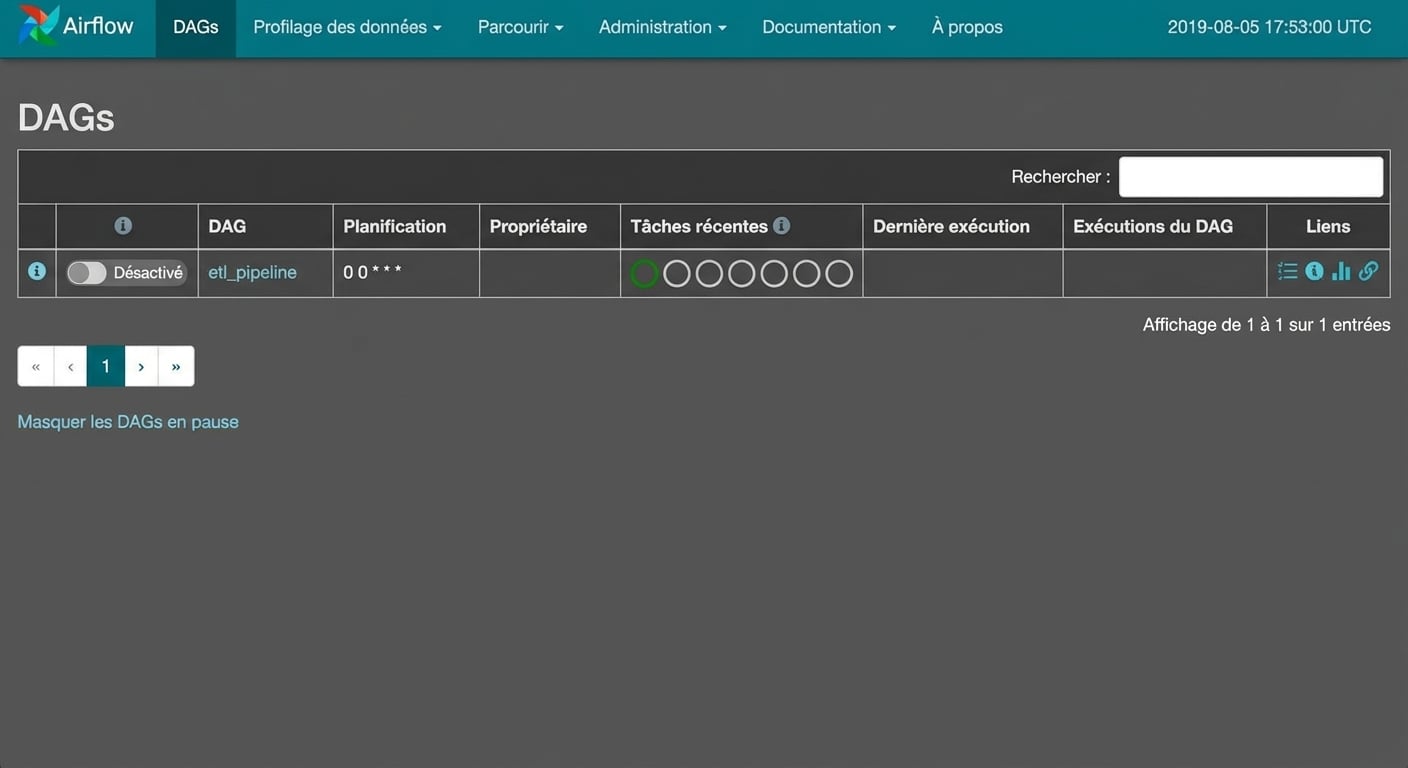

The DAG definition file

    from airflow.models import DAG
    from airflow.operators.python_operator import PythonOperator

    dag = DAG(dag_id="etl_pipeline",
              schedule_interval="0 0 * * *")

    etl_task = PythonOperator(task_id="etl_task",
                              python_callable=etl,
                              dag=dag)

    # wait_for_this_task must finish before etl_task starts
    etl_task.set_upstream(wait_for_this_task)
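The `set_upstream` call declares a dependency: `etl_task` may only start once `wait_for_this_task` has finished. The sketch below illustrates that idea with the standard-library `graphlib` module (Python 3.9+). The `Task` class and `run_order` function are hypothetical stand-ins written for this example; they are not Airflow classes and do not reflect Airflow's internals.

```python
from graphlib import TopologicalSorter

class Task:
    """Hypothetical stand-in for an operator; not part of Airflow."""

    def __init__(self, task_id):
        self.task_id = task_id
        self.upstream = []

    def set_upstream(self, other):
        # Record that `other` must complete before this task runs
        self.upstream.append(other)

def run_order(tasks):
    # Map each task id to the ids it depends on, then sort topologically
    graph = {t.task_id: {u.task_id for u in t.upstream} for t in tasks}
    return list(TopologicalSorter(graph).static_order())

wait_for_this_task = Task("wait_for_this_task")
etl_task = Task("etl_task")
etl_task.set_upstream(wait_for_this_task)

order = run_order([etl_task, wait_for_this_task])
print(order)
```

Because the dependencies form a directed acyclic graph, a valid execution order always exists; a cycle would make `TopologicalSorter` raise `CycleError`.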

The DAG definition file

    from airflow.models import DAG
    from airflow.operators.python_operator import PythonOperator

    ...

    etl_task.set_upstream(wait_for_this_task)

Saved as etl_dag.py in ~/airflow/dags/

Airflow UI

Let's practice!
