Adding memory and conversation

Designing Agentic Systems with LangChain

Dilini K. Sumanapala, PhD

Founder & AI Engineer, Genverv, Ltd.

Testing tool use

    from IPython.display import Image, display

    # Render the chatbot graph
    display(Image(graph.get_graph().draw_mermaid_png()))

    # Define a function that runs the chatbot and streams each message
    def stream_tool_responses(user_input: str):
        for event in graph.stream({"messages": [("user", user_input)]}):
            # Print the messages produced at each step
            for value in event.values():
                print("Agent:", value["messages"])

    # Define the query and run the chatbot
    user_query = "House of Lords"
    stream_tool_responses(user_query)

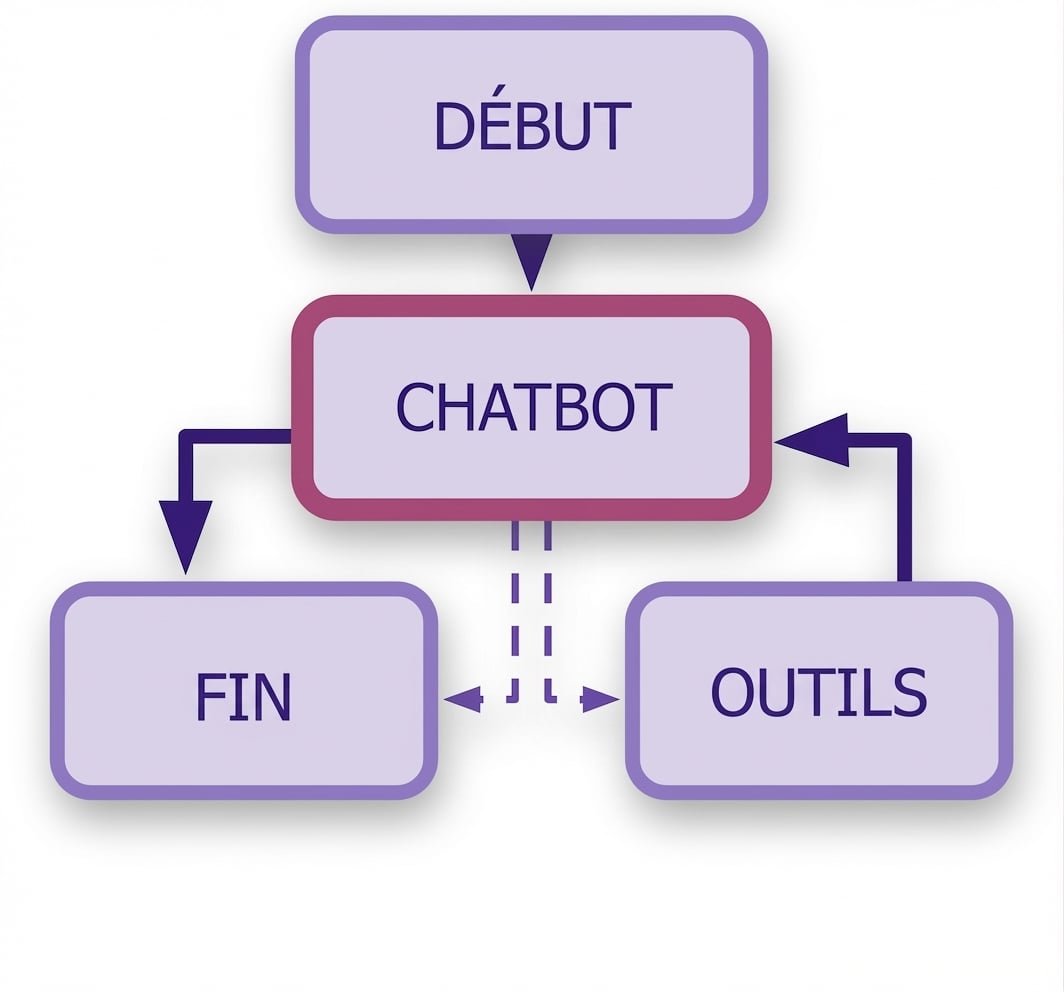

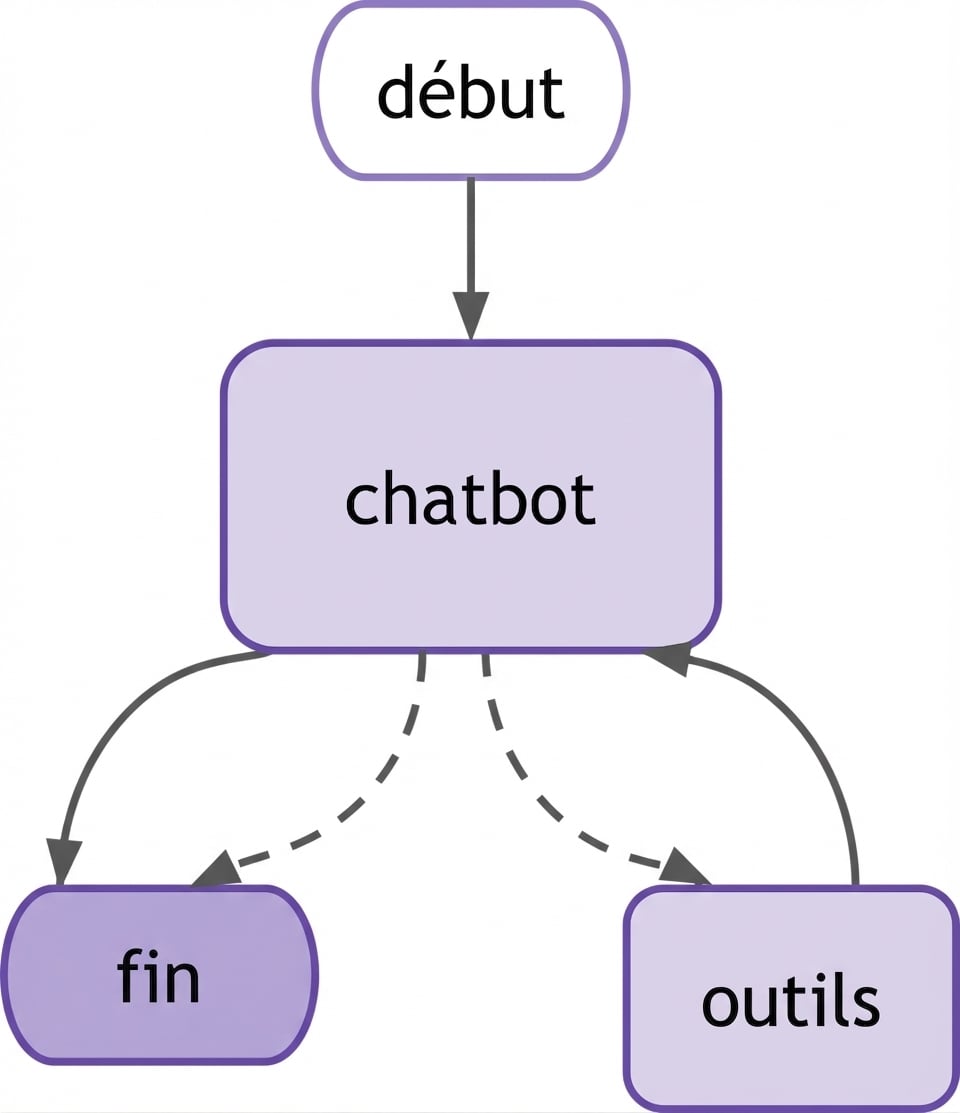

Visualize the diagram

Stream the output

    Agent: [AIMessage(content='', additional_kwargs={'tool_calls': [{'function': {'arguments': '{"query":"House of Lords"}', 'name': 'wikipedia'}, 'type': 'function'}]}, response_metadata={'...'})]

    Agent: [ToolMessage(content='Page: House of Lords\nSummary: The House of Lords is the upper house of the Parliament of the United Kingdom. Like the lower house...The House of Lords also has a Church of England role...', name='wikipedia', id='...')]

    Agent: [AIMessage(content='The House of Lords is the upper house of the Parliament of the United Kingdom, located in the Palace of Westminster in London. It is one of the oldest institutions in the world...', additional_kwargs={}, response_metadata={'finish_reason': 'stop', 'model_name': 'gpt-4o-mini-2024-07-18', 'system_fingerprint': 'fp_0ba0d124f1'}, id='run-ae3a0b4f-5b42-4f4e-9409-7c191af0b9c9-0')]
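The stream yields one event per graph step, and each event maps a node name to that node's state update. When only the final answer text is needed, the events can be filtered for the last message with non-empty content. The following is a minimal sketch, assuming each message exposes a `content` attribute as in the output above. `SimpleNamespace` objects stand in for the real message classes, and `final_answer`, the node names `chatbot` and `tools`, and the sample data are hypothetical.

```python
from types import SimpleNamespace


def final_answer(events):
    """Return the content of the last message with non-empty text, or None."""
    answer = None
    for event in events:
        # Each event maps a node name to that node's state update
        for value in event.values():
            for message in value.get("messages", []):
                # Tool-call requests carry empty content, so skip them
                if message.content:
                    answer = message.content
    return answer


# Stand-in events mirroring the shape of the streamed output (hypothetical data)
events = [
    {"chatbot": {"messages": [SimpleNamespace(content="")]}},
    {"tools": {"messages": [SimpleNamespace(content="Page: House of Lords")]}},
    {"chatbot": {"messages": [SimpleNamespace(content="The House of Lords is the upper house.")]}},
]

print(final_answer(events))
```

Because the function only iterates over events, the result of `graph.stream(...)` could be passed to it in place of the stand-in list.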

Adding memory

    # Import the module for saving memory
    from langgraph.checkpoint.memory import MemorySaver

    # Create an in-memory checkpointer
    memory = MemorySaver()

    # Compile the graph, passing the checkpointer
    graph = graph_builder.compile(checkpointer=memory)

Streaming outputs with memory

    # Set up a streaming function for a single user
    def stream_memory_responses(user_input: str):
        config = {"configurable": {"thread_id": "single_session_memory"}}

        # Stream the graph's events
        for event in graph.stream({"messages": [("user", user_input)]}, config):
            # Print the messages produced at each step
            for value in event.values():
                if "messages" in value and value["messages"]:
                    print("Agent:", value["messages"])

    stream_memory_responses("What is the Colosseum?")
    stream_memory_responses("Who built it?")
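The `thread_id` in `config` is what ties the two calls together: the checkpointer saves the conversation under that key, so the second question is answered with the first exchange still in the state. The toy model below illustrates the idea with a plain dictionary. It is a sketch of the concept only, not how `MemorySaver` is implemented; `ToyCheckpointer`, its methods, and the `another_session` thread are hypothetical.

```python
from collections import defaultdict


class ToyCheckpointer:
    """Toy stand-in for a checkpointer: one message history per thread_id."""

    def __init__(self):
        self._threads = defaultdict(list)

    def append(self, config, message):
        # Look up the history by the same key the streaming function passes in `config`
        thread_id = config["configurable"]["thread_id"]
        self._threads[thread_id].append(message)

    def history(self, config):
        # Return a copy so callers cannot mutate the stored history
        thread_id = config["configurable"]["thread_id"]
        return list(self._threads[thread_id])


memory = ToyCheckpointer()
session = {"configurable": {"thread_id": "single_session_memory"}}
other = {"configurable": {"thread_id": "another_session"}}

memory.append(session, ("user", "What is the Colosseum?"))
memory.append(session, ("user", "Who built it?"))

# Same thread_id: both questions are visible, so "it" can be resolved
print(memory.history(session))
# Different thread_id: the history starts empty
print(memory.history(other))
```

The same reasoning suggests that a follow-up question sent under a different `thread_id` would reach the agent without the earlier exchange.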

Generating output with memory

    stream_memory_responses("What is the Colosseum?")

    Agent: [AIMessage(content='', additional_kwargs={'tool_calls': [{'index': 0, 'id': '...', 'function': {'arguments': '{"query":"Colosseum"}', 'name': 'wikipedia'}, ...])]

    Agent: [ToolMessage(content='Page: Colosseum\nSummary: The Colosseum is an ancient amphitheatre in Rome, Italy. It is the largest standing amphitheatre in the world...')]

    Agent: [AIMessage(content='The Colosseum, located in Rome, is the largest ancient amphitheatre still standing. Built under Emperor Vespasian and completed by his son Titus, it hosted gladiatorial games and public events. It is also known as the Flavian Amphitheatre due to its association with the Flavian dynasty.', additional_kwargs={}, response_metadata={'finish_reason': 'stop', 'model_name': 'gpt-4o-mini-...', ...})]

Generating output with memory

    stream_memory_responses("Who built it?")

    Agent: [AIMessage(content='The Colosseum was built by Emperor Vespasian around 72 AD and completed by his successor, Emperor Titus. Later modifications were made by Emperor Domitian...')]

Let's practice!
