Embeddings

Natural Language Processing (NLP) in Python

Fouad Trad

Machine Learning Engineer

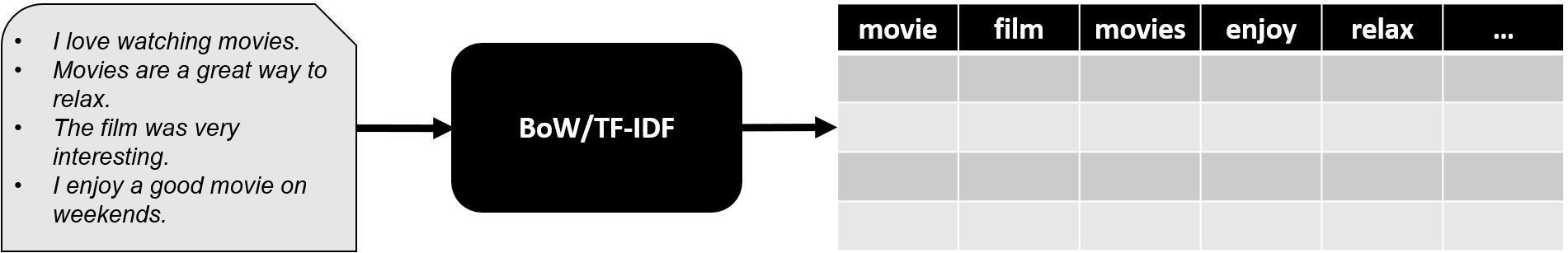

Limitations of BoW and TF-IDF

- Treating similar words as completely unrelated

- Failing to capture meaning of text
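The first limitation can be seen in a minimal sketch. The two sentences and the helper names `bow_vector` and `cosine` are made up for illustration, and the bag-of-words vectors are built by hand rather than with any particular library. Because "film" and "movie" occupy different positions in the vocabulary, the two vectors share no non-zero entries.

```python
from collections import Counter
from math import sqrt

def bow_vector(text, vocabulary):
    """Count how often each vocabulary word occurs in the text."""
    counts = Counter(text.lower().split())
    return [counts[word] for word in vocabulary]

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sqrt(sum(a * a for a in u))
    norm_v = sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical sentences with similar meaning but no shared words
doc_a = "great film"
doc_b = "excellent movie"

vocabulary = sorted(set(doc_a.split()) | set(doc_b.split()))
vec_a = bow_vector(doc_a, vocabulary)
vec_b = bow_vector(doc_b, vocabulary)

print(vocabulary)            # ['excellent', 'film', 'great', 'movie']
print(vec_a, vec_b)          # [0, 1, 1, 0] [1, 0, 0, 1]
print(cosine(vec_a, vec_b))  # 0.0
```

A similarity of zero means BoW treats these two near-synonymous sentences as completely unrelated.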

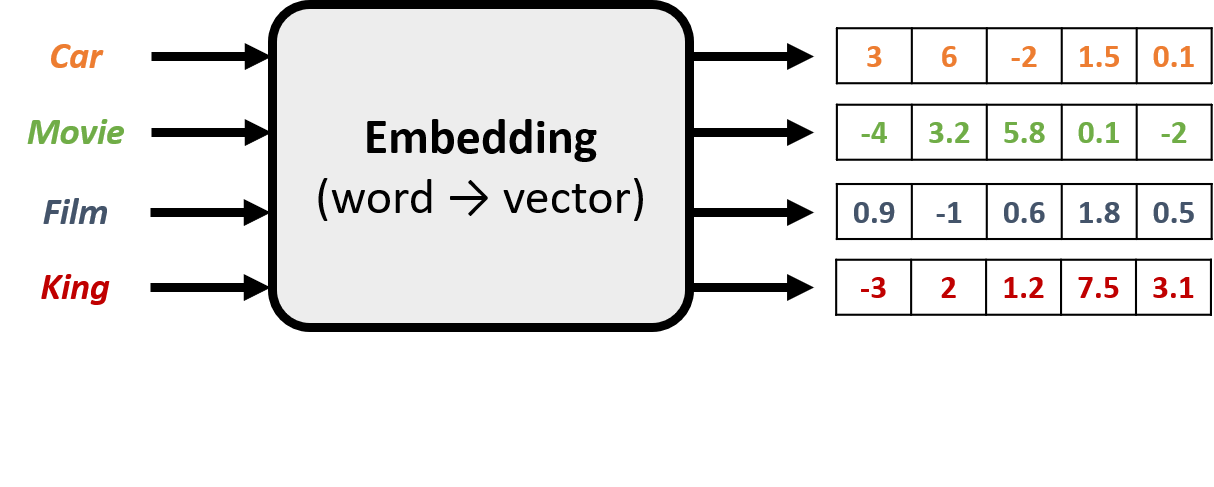

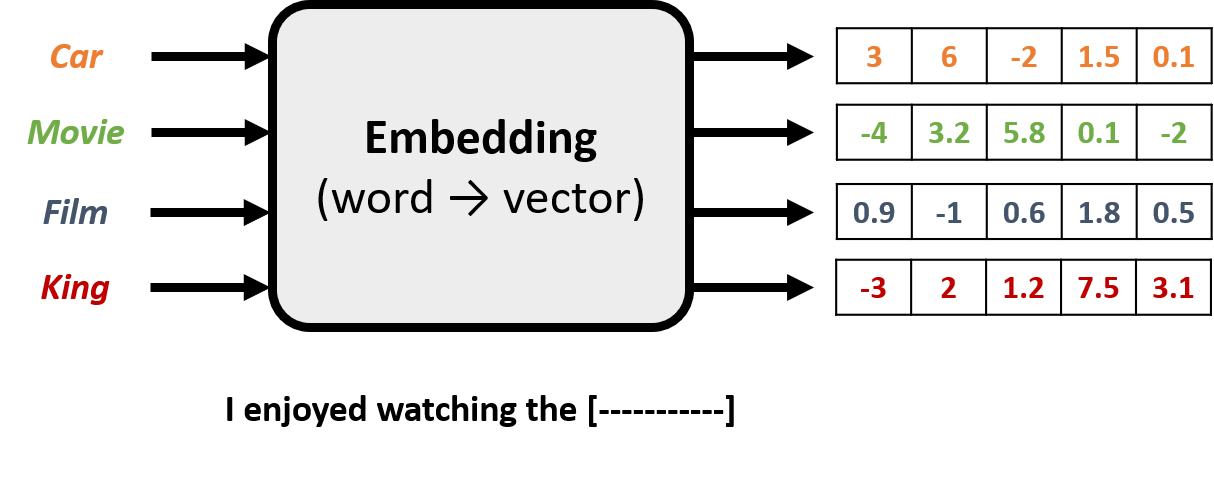

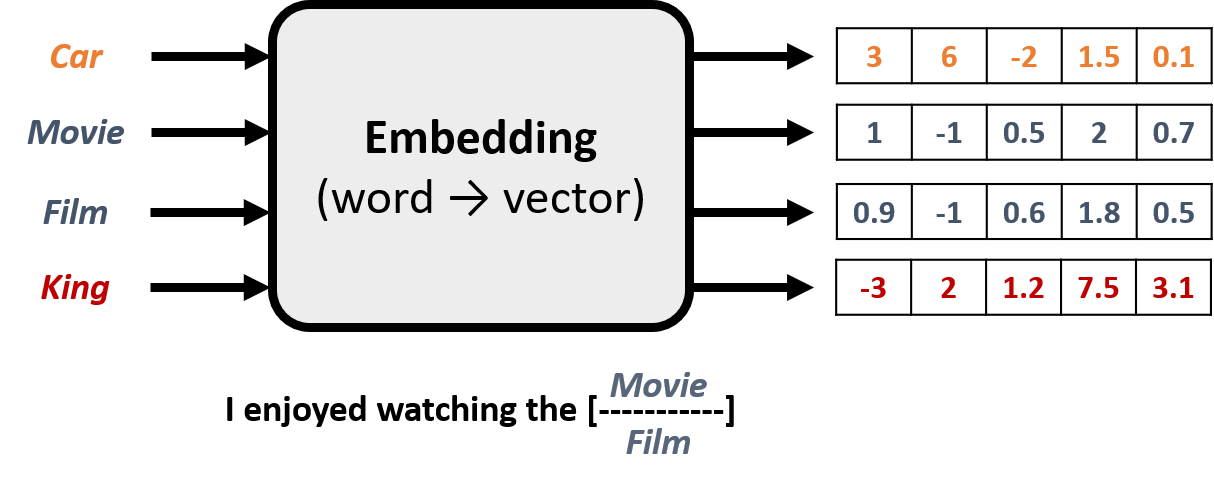

Embeddings

- Represent a word with a vector that captures its meaning

- Start from random values for each word's vector

- Refine the values by predicting missing words in sentences

- Words appearing in similar contexts end up with similar representations
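The last point can be illustrated without training a model. The sketch below is not the prediction-based training described above. It swaps in a simpler count-based stand-in: each word is represented by counts of the words that appear near it. The corpus, the window size, and the helper `cosine` are all assumptions made for illustration.

```python
from collections import Counter, defaultdict
from math import sqrt

# Hypothetical toy corpus: "film" and "movie" occur in the same contexts
corpus = [
    "i watched a film last night",
    "i watched a movie last night",
    "the film was long",
    "the movie was long",
    "the dog chased a ball",
]

window = 2  # assumed context size: two words on each side
context_counts = defaultdict(Counter)
for sentence in corpus:
    tokens = sentence.split()
    for i, word in enumerate(tokens):
        start, end = max(0, i - window), min(len(tokens), i + window + 1)
        for j in range(start, end):
            if j != i:
                context_counts[word][tokens[j]] += 1

def cosine(c1, c2):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(c1[key] * c2[key] for key in c1 if key in c2)
    norm1 = sqrt(sum(value * value for value in c1.values()))
    norm2 = sqrt(sum(value * value for value in c2.values()))
    return dot / (norm1 * norm2)

film_movie = cosine(context_counts["film"], context_counts["movie"])
film_dog = cosine(context_counts["film"], context_counts["dog"])
print(film_movie, film_dog)
```

In this toy corpus "film" and "movie" have identical neighbours, so their context vectors point in the same direction, while "film" and "dog" overlap only partly. Learned embeddings rely on the same signal but compress it into dense vectors.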

Embeddings as GPS coordinates for words

Gensim

- Provides popular embedding models

  - Word2Vec

  - GloVe

word2vec-ruscorpora-300

word2vec-google-news-300

glove-wiki-gigaword-50

glove-wiki-gigaword-100

glove-wiki-gigaword-200

glove-wiki-gigaword-300

...

Loading an embedding model

import gensim.downloader as api
# Load the pretrained 50-dimensional GloVe vectors
model = api.load('glove-wiki-gigaword-50')
print(type(model))
print(model['movie'])

<class 'gensim.models.keyedvectors.KeyedVectors'>
[ 0.30824 0.17223 -0.23339 0.023105 0.28522 0.23076 -0.41048 -1.0035 -0.2072 1.4327 -0.80684 0.68954 -0.43648 1.1069 1.6107 -0.31966 0.47744 0.79395 -0.84374 0.064509 0.90251 0.78609 0.29699 0.76057 0.433 -1.5032 -1.6423 0.30256 0.30771 -0.87057 2.4782 -0.025852 0.5013 -0.38593 -0.15633 0.45522 0.04901 -0.42599 -0.86402 -1.3076 -0.29576 1.209 -0.3127 -0.72462 -0.80801 0.082667 0.26738 -0.98177 -0.32147 0.99823 ]

Computing similarity

similarity = model.similarity("film", "movie")
print(similarity)

0.9310100078582764
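For a `KeyedVectors` model, the score returned by `similarity` is the cosine similarity between the two word vectors. Writing the vectors as $\mathbf{u}$ and $\mathbf{v}$ with $d$ dimensions (symbols introduced here, not in the slides), the score is:

```latex
\[
\operatorname{sim}(\mathbf{u}, \mathbf{v})
  = \frac{\mathbf{u} \cdot \mathbf{v}}{\lVert \mathbf{u} \rVert \, \lVert \mathbf{v} \rVert}
  = \frac{\sum_{i=1}^{d} u_i v_i}{\sqrt{\sum_{i=1}^{d} u_i^2} \, \sqrt{\sum_{i=1}^{d} v_i^2}}
\]
```

The value ranges from -1 to 1, and a score close to 1, as for "film" and "movie", means the two vectors point in nearly the same direction.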

Finding most similar words

similar_to_movie = model.most_similar('movie', topn=3)
print(similar_to_movie)

[('movies', 0.9322481155395508),
 ('film', 0.9310100078582764),
 ('films', 0.8937394618988037)]
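Conceptually, finding the most similar words means scoring every other word in the vocabulary and keeping the top few. The sketch below shows that idea on four made-up 3-dimensional vectors. The values, the dictionary `toy_vectors`, and the helper functions are hypothetical, and this is not Gensim's actual implementation.

```python
from math import sqrt

# Hypothetical 3-dimensional vectors, invented for illustration only;
# the pretrained GloVe model above uses learned 50-dimensional vectors
toy_vectors = {
    "movie": [0.9, 0.8, 0.1],
    "film": [0.8, 0.9, 0.2],
    "dog": [0.1, 0.2, 0.9],
    "cat": [0.2, 0.1, 0.8],
}

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v)))

def most_similar(word, vectors, topn=3):
    """Rank every other word by cosine similarity to `word`."""
    scores = [
        (other, cosine(vectors[word], vector))
        for other, vector in vectors.items()
        if other != word
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:topn]

top_two = most_similar("movie", toy_vectors, topn=2)
print(top_two)
```

With these toy values "film" ranks first for "movie", well ahead of "cat" and "dog".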

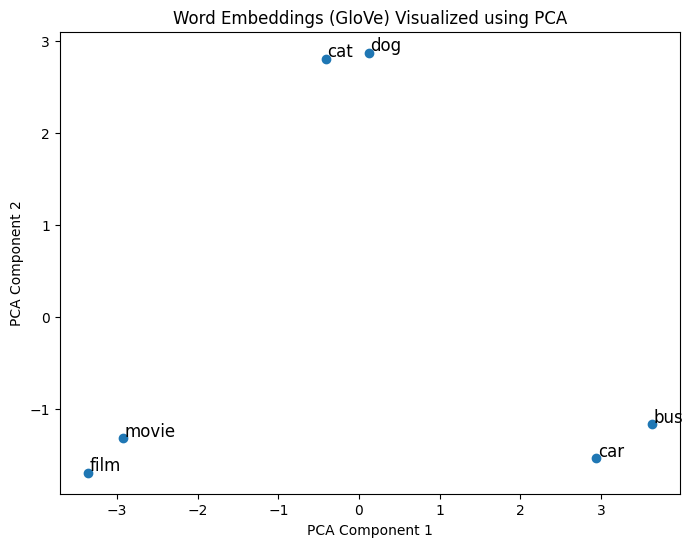

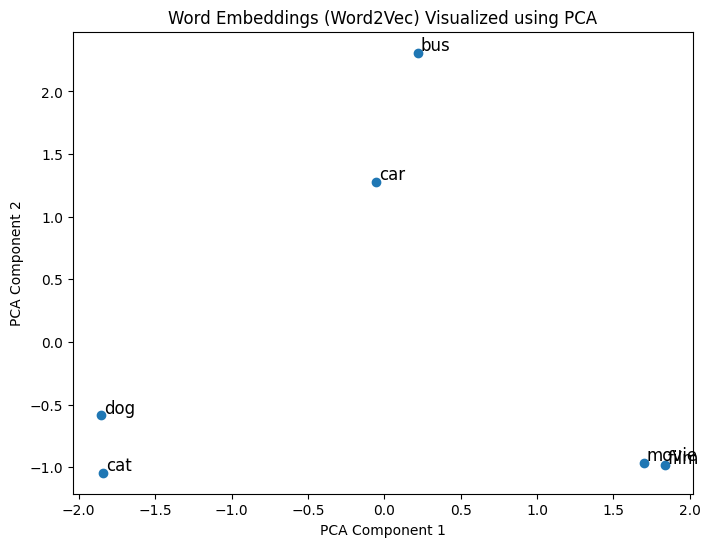

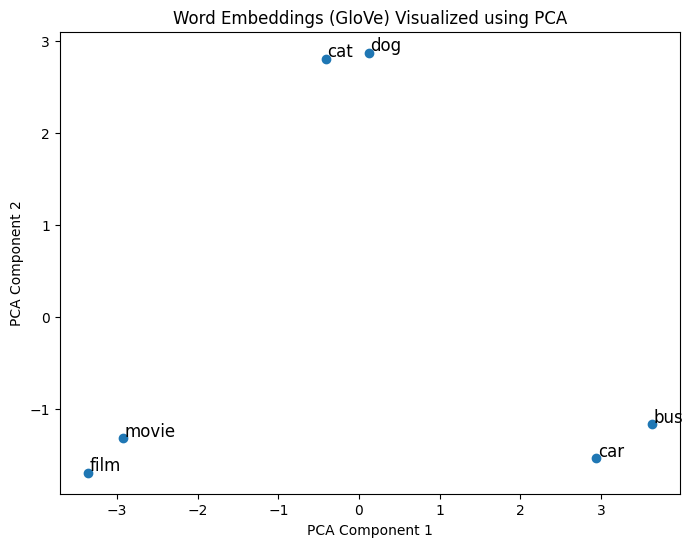

Visualizing embeddings

- Principal Component Analysis (PCA):

  - High-dimensional vectors → 2D or 3D vectors

1 Image generated by DALL-E

Visualizing embeddings with PCA

import matplotlib.pyplot as plt
from sklearn.decomposition import PCA
words = ["film", "movie", "dog", "cat", "car", "bus"]
word_vectors = [model[word] for word in words]
# Project the 50-dimensional vectors onto 2 dimensions
pca = PCA(n_components=2)
word_vectors_2d = pca.fit_transform(word_vectors)
plt.scatter(word_vectors_2d[:, 0], word_vectors_2d[:, 1])
# Label each point with its word
for word, (x, y) in zip(words, word_vectors_2d):
    plt.annotate(word, (x, y))
plt.show()
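What the PCA step does can be sketched with NumPy alone: center the data, find the directions of greatest variance with a singular value decomposition, and project onto the first two. The random matrix below is a hypothetical stand-in for six 50-dimensional word vectors, so the snippet runs without downloading a model. The signs of the resulting coordinates may differ from scikit-learn's output.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical stand-in for six 50-dimensional word vectors
vectors = rng.normal(size=(6, 50))

# 1. Center each dimension at zero
centered = vectors - vectors.mean(axis=0)

# 2. Rows of vt are the principal directions, ordered by variance explained
_, singular_values, vt = np.linalg.svd(centered, full_matrices=False)

# 3. Project the centered vectors onto the first two directions
vectors_2d = centered @ vt[:2].T

print(vectors_2d.shape)  # (6, 2)
```

Each row of `vectors_2d` is one word's position in the 2D plot, with the first coordinate carrying at least as much variance as the second.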

Comparison of embeddings

word2vec-google-news-300

glove-wiki-gigaword-50

Let's practice!
