Operating AI systems after launch

AI for Human Resources

Chris Klaus

Senior AI Architect, Chalice AI

What to watch for

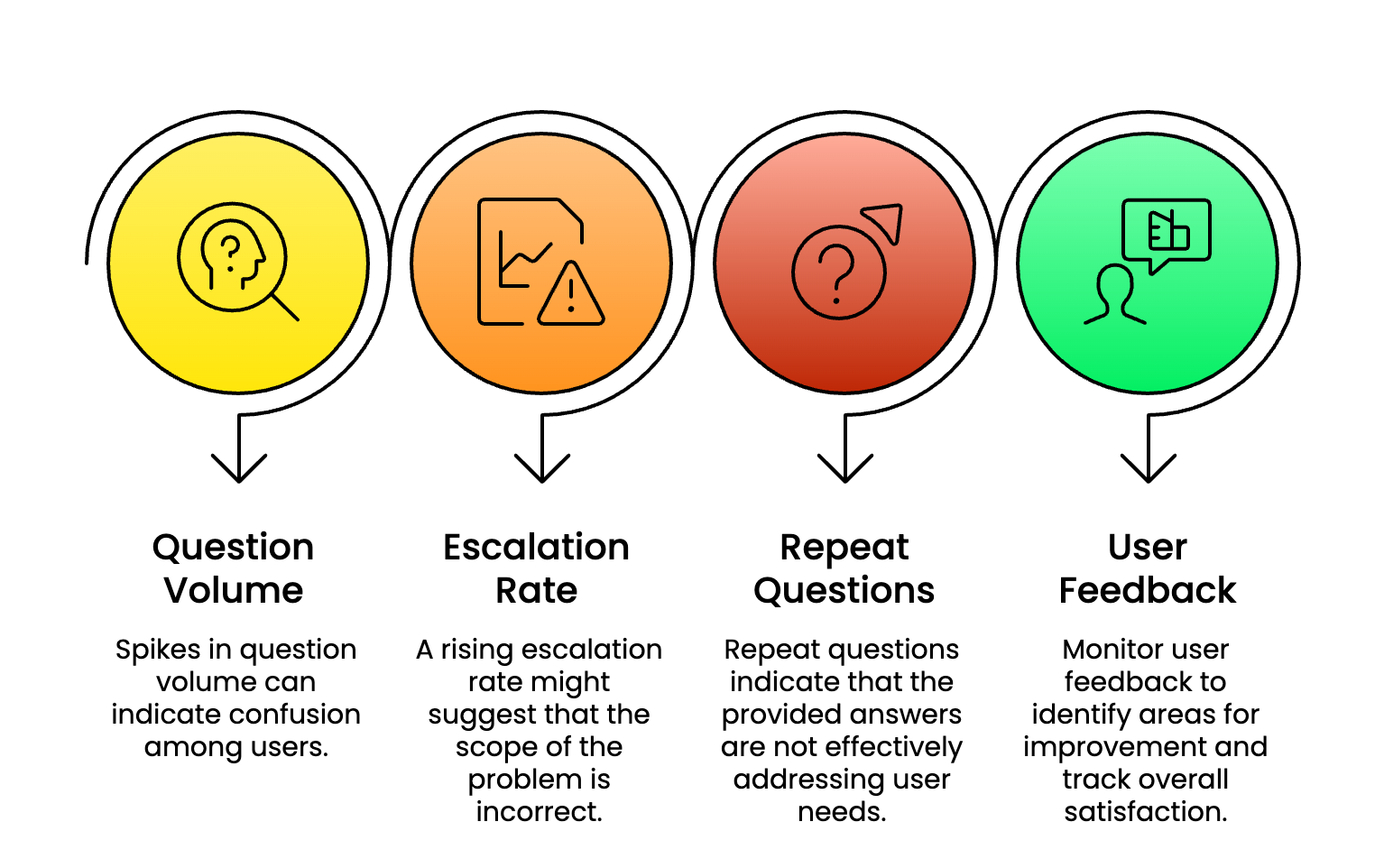

Watch for patterns:

- Same questions recurring
- Employees double-checking answers
- Slight inconsistencies in responses

Keep a simple log:

- Questions, responses, what felt off

These signals tell you when systems are drifting
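The simple log described above could be kept as a CSV file. The following is a minimal sketch, not a prescribed tool: the `LogEntry` fields, the function names, and the file name are all assumptions chosen for illustration.

```python
import csv
from dataclasses import dataclass, asdict, fields
from pathlib import Path


@dataclass
class LogEntry:
    """One observation: what was asked, what came back, what felt off."""
    day: str            # e.g. "2024-01-05" (hypothetical format)
    question: str
    response: str
    what_felt_off: str


def append_entry(path, entry):
    """Append one entry to the CSV log, writing a header if the file is new or empty."""
    path = Path(path)
    is_new = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=[fl.name for fl in fields(LogEntry)])
        if is_new:
            writer.writeheader()
        writer.writerow(asdict(entry))


def read_entries(path):
    """Load every logged entry back as LogEntry objects."""
    with Path(path).open(newline="", encoding="utf-8") as f:
        return [LogEntry(**row) for row in csv.DictReader(f)]
```

For example, `append_entry("hr_ai_log.csv", LogEntry("2024-01-05", "How many PTO days?", "15 days", "Handbook says 20"))` adds one row. A spreadsheet works just as well; the point is that each entry captures the question, the response, and what felt off.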

Regular checks

30-minute monthly review

- Policy alignment - Did any policy change? Test with current questions.
- Language drift - Do responses match your latest handbook?
- Edge cases - Review your log for recurring weak spots.
- Scope creep - Is the system handling requests it wasn't designed for?
- Instruction accuracy - Do the rules still reflect current operations?
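Part of the monthly review, spotting recurring questions in the log, can be roughly automated. This is a sketch under simple assumptions: `normalize` and `recurring_questions` are hypothetical helpers, and matching is exact after lowercasing and stripping punctuation, so differently worded versions of the same question will not be grouped.

```python
import re
from collections import Counter


def normalize(question):
    """Lowercase, drop punctuation, and collapse whitespace so near-identical questions match."""
    cleaned = re.sub(r"[^a-z0-9\s]", "", question.lower())
    return " ".join(cleaned.split())


def recurring_questions(questions, min_count=2):
    """Return (normalized question, count) pairs asked at least min_count times, most frequent first."""
    counts = Counter(normalize(q) for q in questions)
    return [(q, n) for q, n in counts.most_common() if n >= min_count]
```

Running `recurring_questions` on the question column of the log gives a short list to start the edge-case review from. It is a starting point for human judgment, not a replacement for reading the log.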

When policies change, update instructions first

New policy announced? Update AI systems first - before employees start asking.

| Step | Action |
|---|---|
| 1 | Update AI instructions immediately - revise context sources and response guidelines |
| 2 | Test with realistic questions - use actual employee queries to validate responses |
| 3 | Focus on edge cases - identify scenarios where the agent might fail or produce errors |
| 4 | If inaccurate → narrow the agent's scope or route to humans for judgment |
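Steps 2 to 4 can be sketched as a small check that runs after each policy update. Everything here is an assumption for illustration: `ask` stands in for whatever function or manual process returns your system's answer, and `Case` and `run_checks` are hypothetical names. Matching is plain substring comparison, so phrases need to be chosen with care (for instance, "5 days" also appears inside "15 days").

```python
from dataclasses import dataclass, field


@dataclass
class Case:
    """A realistic employee question plus phrases the answer must or must not contain."""
    question: str
    must_include: list = field(default_factory=list)      # phrases from the current policy
    must_not_include: list = field(default_factory=list)  # phrases from the outdated policy


def run_checks(ask, cases):
    """Call ask(question) for each case; return (question, missing, stale) for every failure."""
    failures = []
    for case in cases:
        answer = ask(case.question).lower()
        missing = [p for p in case.must_include if p.lower() not in answer]
        stale = [p for p in case.must_not_include if p.lower() in answer]
        if missing or stale:
            failures.append((case.question, missing, stale))
    return failures
```

An empty result means every test question passed. Any failure is a candidate for step 4: narrow the agent's scope for that topic or route it to a human.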

Teamwork

Quarterly spot-check process

- Find one other person who can review your systems
- Give them five realistic scenarios
- Ask them to flag confusion, surprises, or moments they'd prefer a human

When something goes wrong

Immediate response:

- Fix the configuration immediately
- Document what happened and what changed
- Communicate clearly with affected employees

Example message:

"We noticed [system] provided outdated guidance about [topic]. Here's the current policy..."

Building your support network

Three ongoing connections you need:

| Role | Why you need them |
|---|---|
| HR contact | Sees employee confusion early |
| Technical contact | Adjusts configurations quickly |
| Compliance contact | Flags regulatory changes |

Monitoring

Four-week launch framework:

- Week 1: Test top 20 expected questions
- Week 2: Pilot with 10-15 people
- Week 3: Update based on feedback
- Week 4: Set monthly reviews

Let's practice!
