MCP and LLMs: Tools

Introduction to Model Context Protocol (MCP)

James Chapman

AI Curriculum Manager, DataCamp

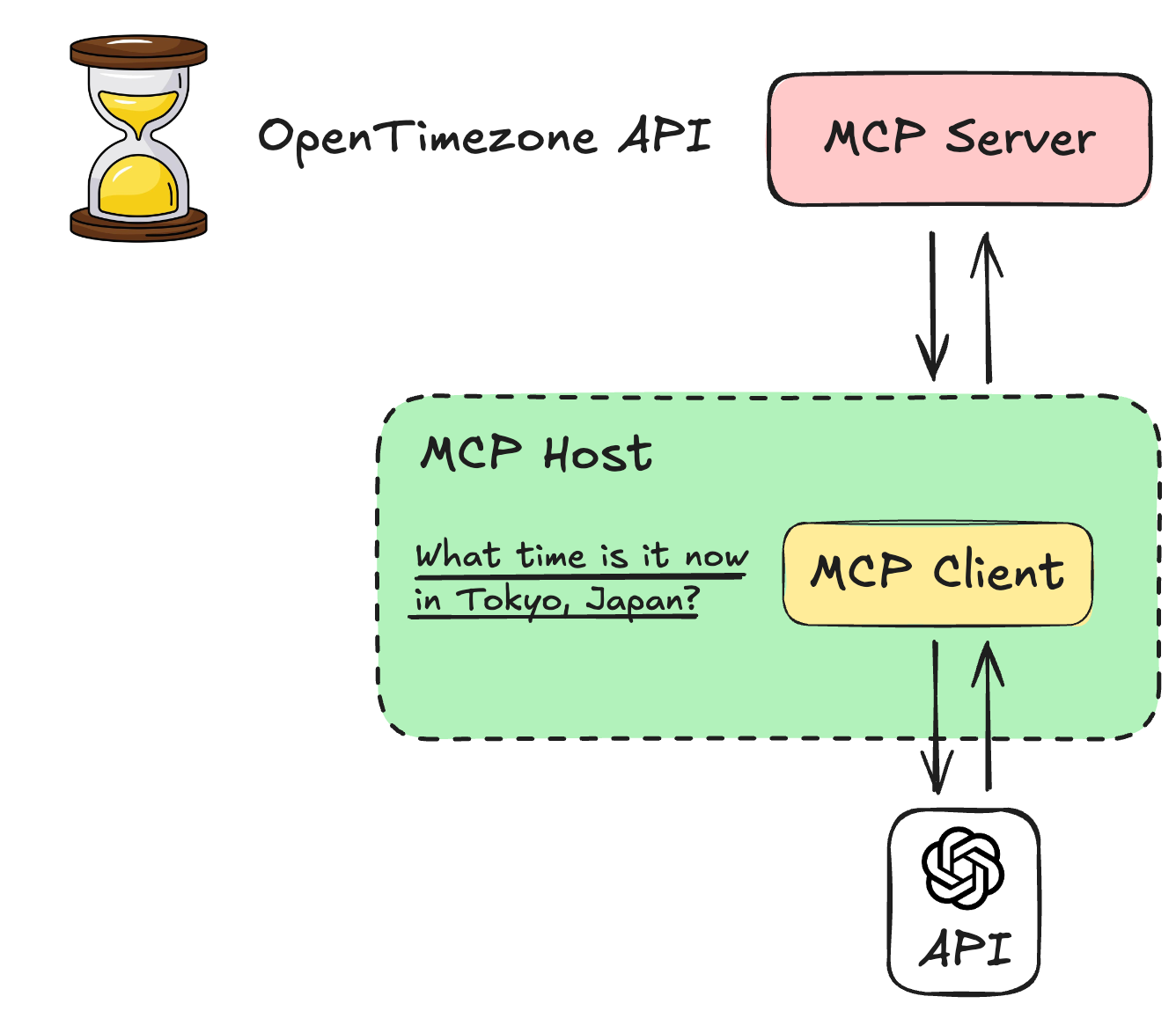

MCP Tools to LLM Tools

Tool ⇄ LLM integration is highly LLM-dependent

- LLMs accept tools in different ways and with different syntax

- Here we use OpenAI's Responses API

- Focus on the processes and transformations rather than vendor-specific syntax
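To make the first bullet concrete, here is a small sketch of one tool definition laid out three ways. The field layouts follow the publicly documented shapes for OpenAI's Responses API, OpenAI's Chat Completions API, and Anthropic's Messages API; the helper names and the sample schema are illustrative assumptions, not part of the slides.

```python
import json

# Illustrative sample schema (an assumption, not taken from the slides).
SCHEMA = {
    "type": "object",
    "properties": {
        "date_time": {"type": "string"},
        "from_timezone": {"type": "string"},
        "to_timezone": {"type": "string"},
    },
    "required": ["date_time", "from_timezone", "to_timezone"],
}


def to_openai_responses(name: str, description: str, schema: dict) -> dict:
    """Flat layout: name, description, and parameters sit at the top level."""
    return {"type": "function", "name": name,
            "description": description, "parameters": schema}


def to_openai_chat_completions(name: str, description: str, schema: dict) -> dict:
    """Nested layout: the definition lives under a "function" key."""
    return {"type": "function",
            "function": {"name": name, "description": description,
                         "parameters": schema}}


def to_anthropic_messages(name: str, description: str, schema: dict) -> dict:
    """Same information, but the schema key is called "input_schema"."""
    return {"name": name, "description": description, "input_schema": schema}


if __name__ == "__main__":
    for convert in (to_openai_responses, to_openai_chat_completions,
                    to_anthropic_messages):
        tool = convert("convert_timezone", "Convert a time between zones.", SCHEMA)
        print(convert.__name__, "->", json.dumps(tool)[:80], "...")
```

The JSON Schema itself is identical in all three; only the wrapper around it changes, which is why the slides concentrate on the transformation step.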

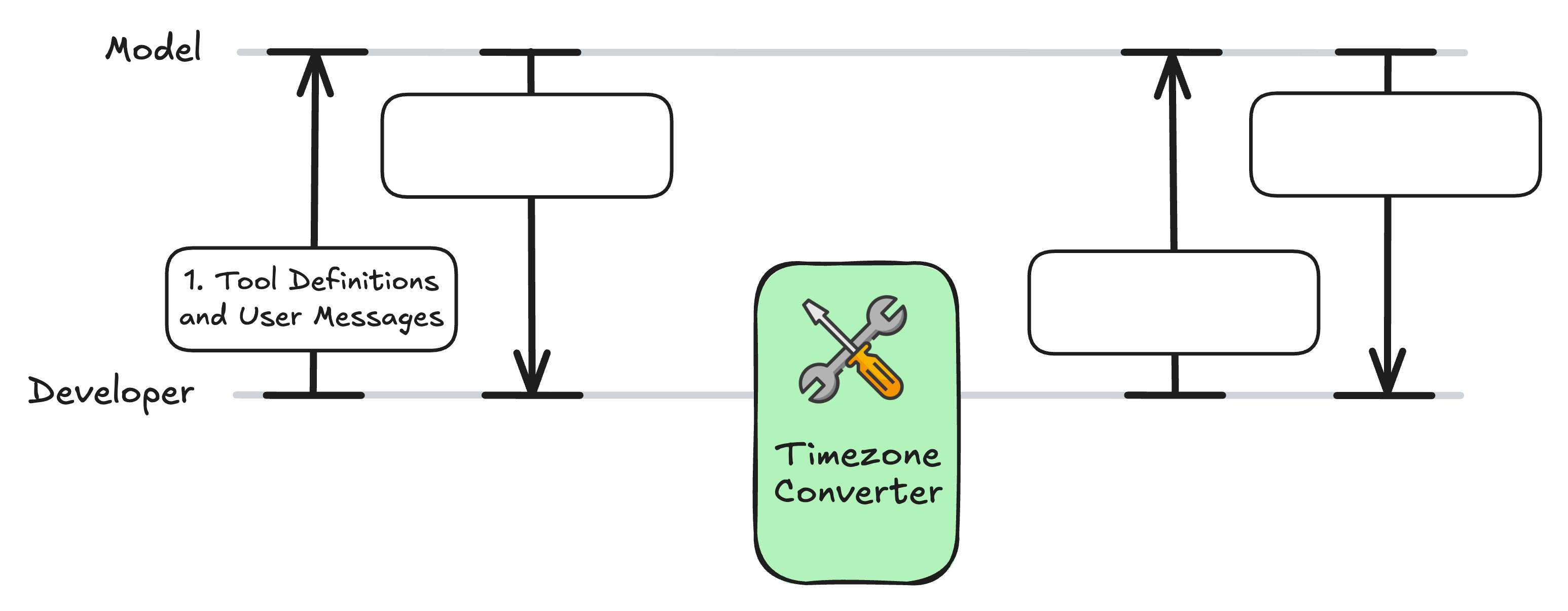

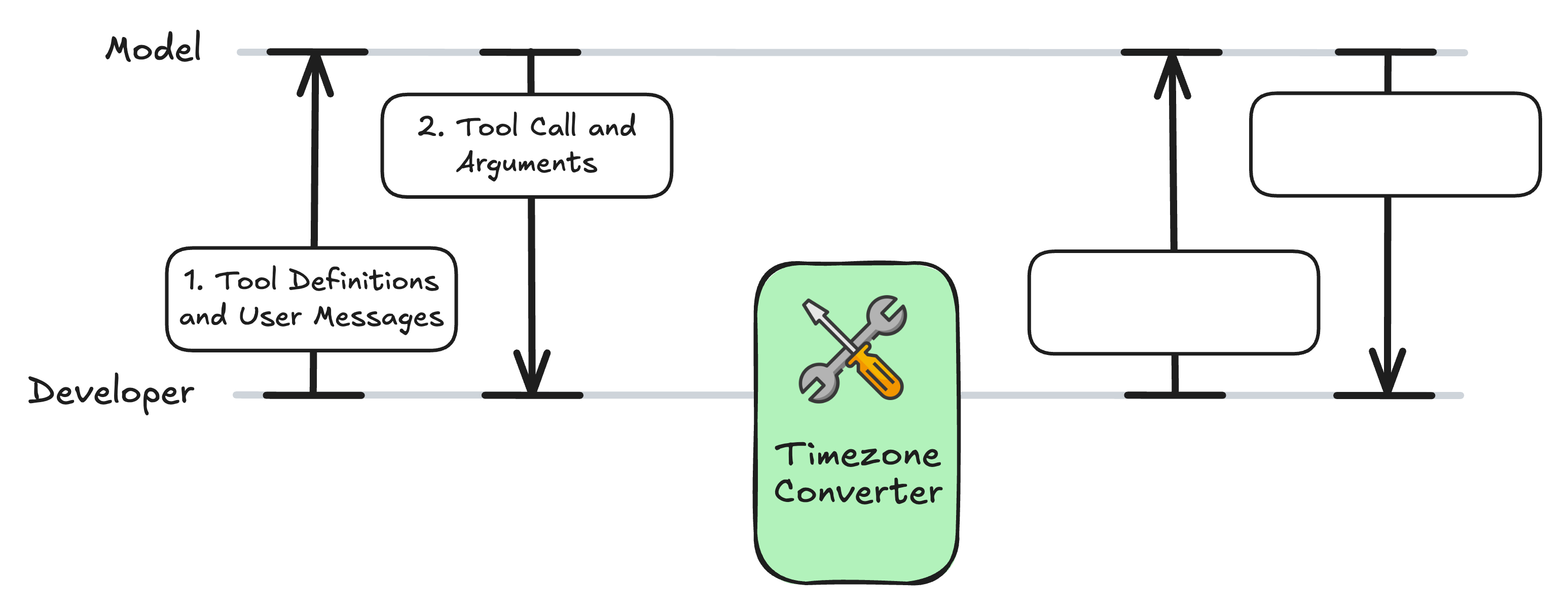

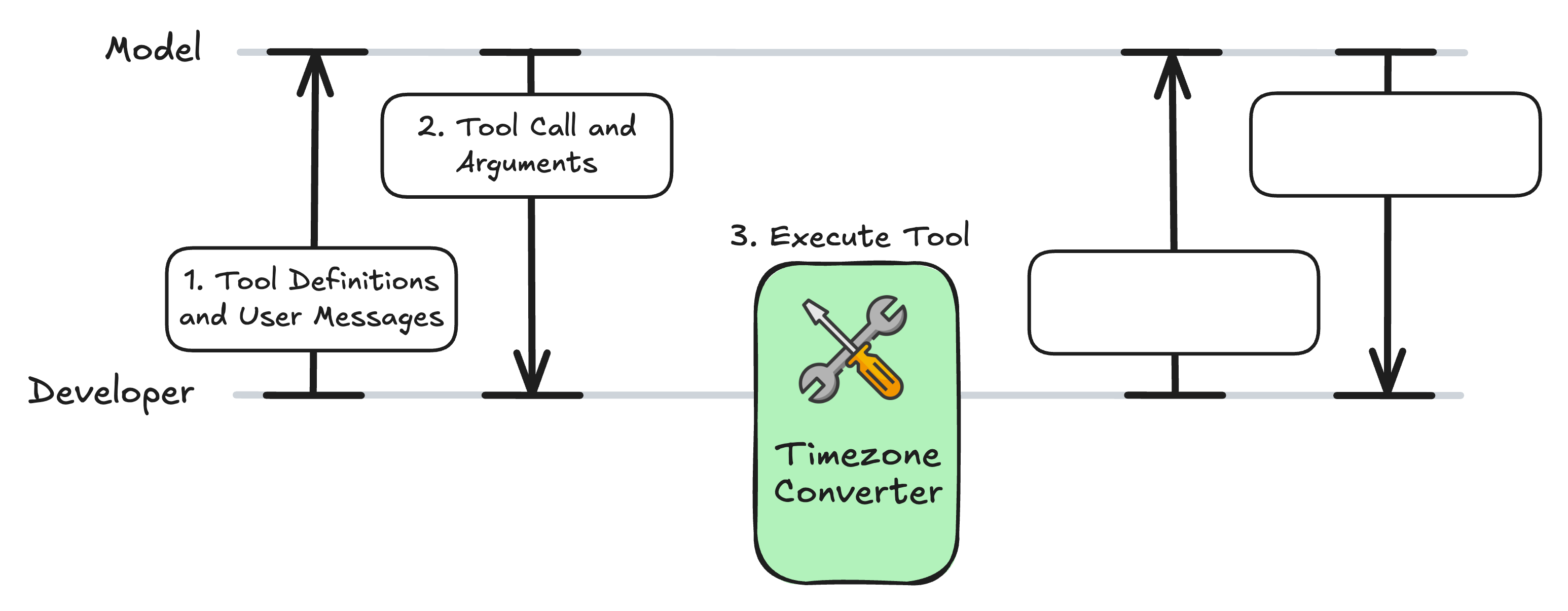

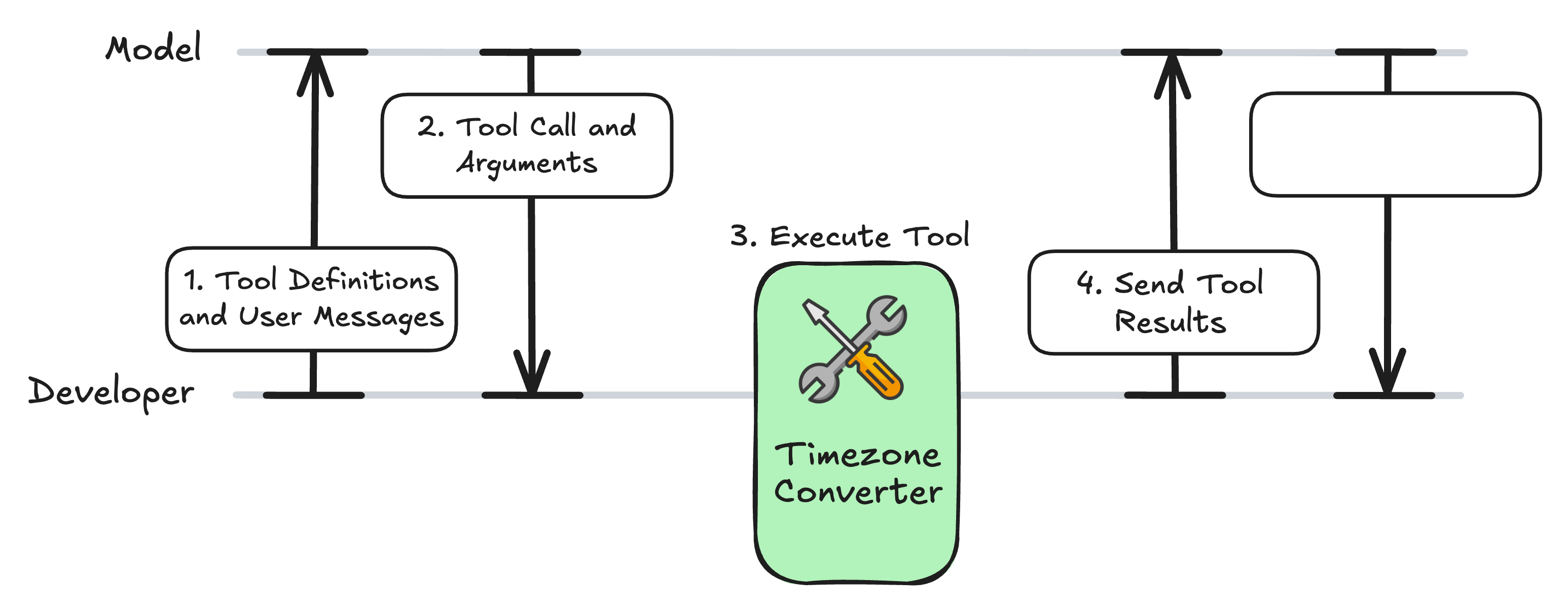

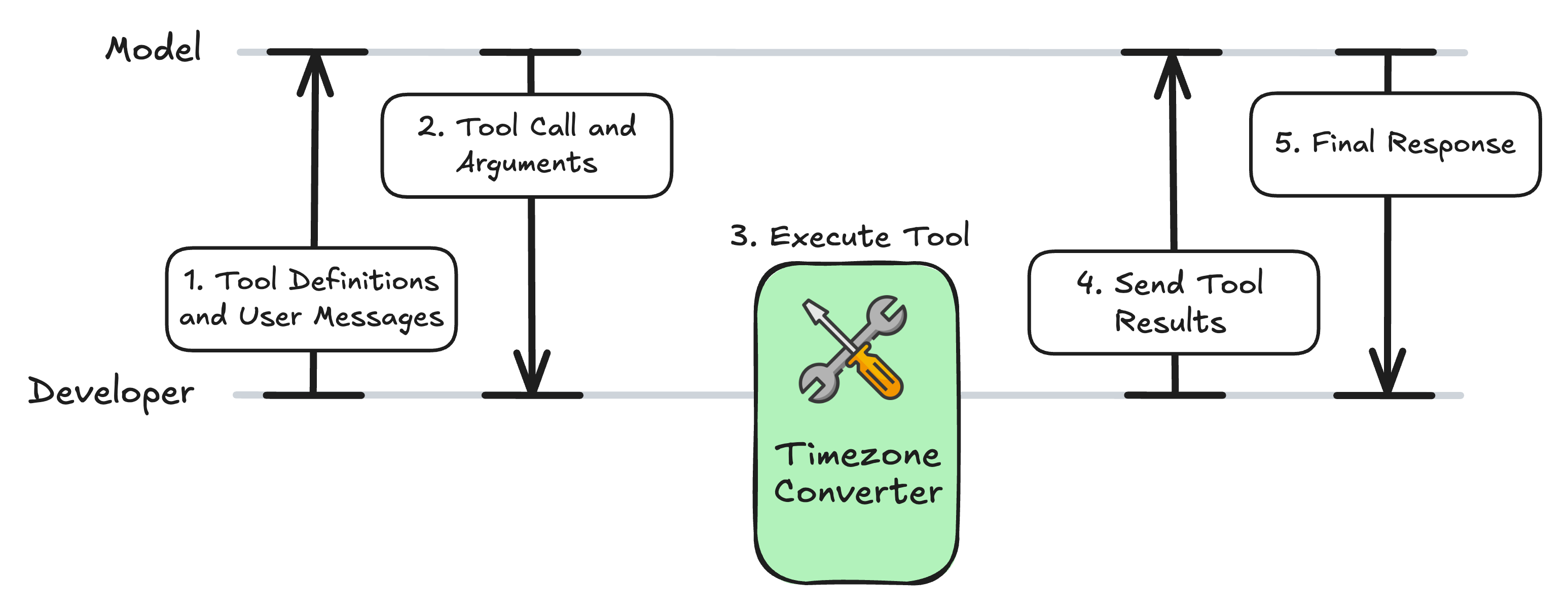

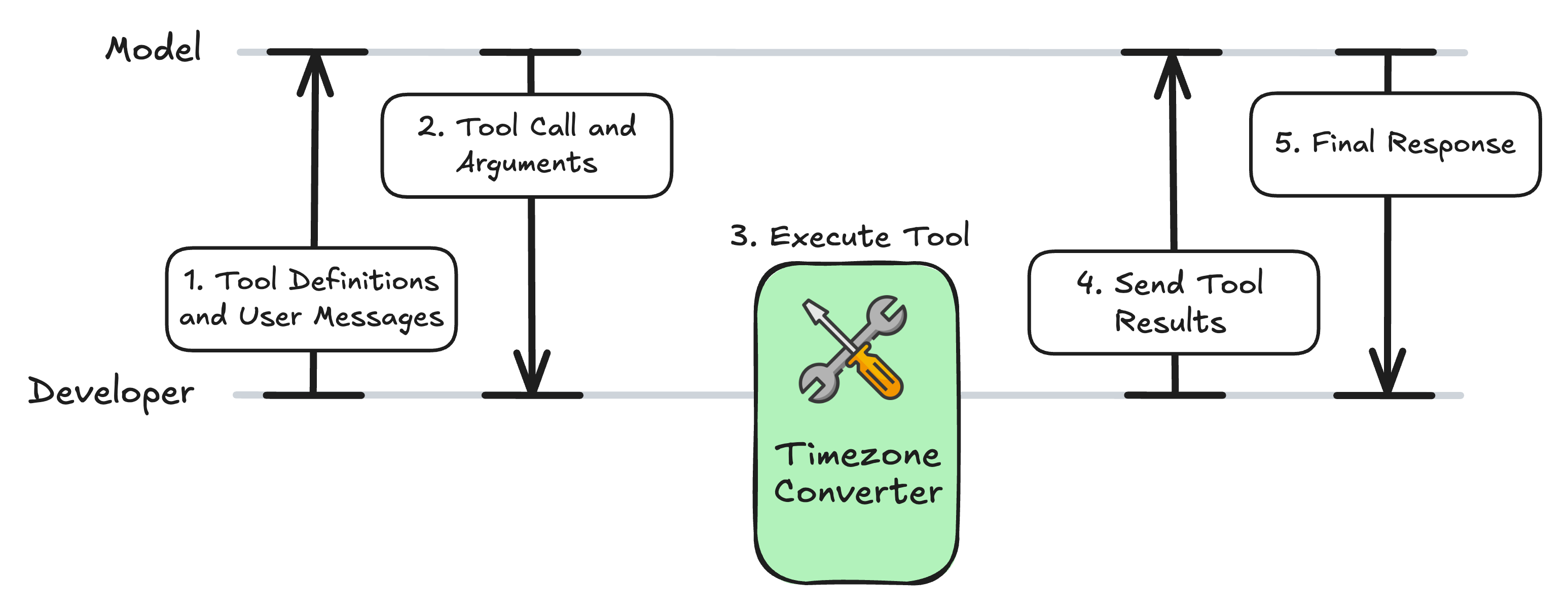

The Tool-Calling Workflow


MCP Server: timezone_server.py

from mcp.server.fastmcp import FastMCP
import requests

mcp = FastMCP("Timezone Converter")

@mcp.tool()
def convert_timezone(date_time: str, from_timezone: str, to_timezone: str) -> str:
    ...

if __name__ == "__main__":
    mcp.run(transport="stdio")
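The slide elides the body of `convert_timezone`, and the `requests` import suggests the course version may call a web API. Purely as an illustration of what such a tool could compute, here is a hypothetical stand-in built on the standard library's `zoneinfo` instead. The function name `convert_timezone_sketch`, the `"%Y-%m-%d %H:%M"` input format, and the use of IANA zone names are all assumptions.

```python
from datetime import datetime
from zoneinfo import ZoneInfo  # standard library, Python 3.9+


def convert_timezone_sketch(date_time: str, from_timezone: str, to_timezone: str) -> str:
    """Hypothetical stand-in for the elided tool body (not the course's code).

    Assumes `date_time` looks like "2025-01-15 09:50" and that both zones
    are IANA names such as "Europe/London".
    """
    # Parse the naive timestamp, then attach the source zone to it.
    naive = datetime.strptime(date_time, "%Y-%m-%d %H:%M")
    source = naive.replace(tzinfo=ZoneInfo(from_timezone))
    # Convert the same instant into the target zone.
    target = source.astimezone(ZoneInfo(to_timezone))
    return target.strftime("%Y-%m-%d %H:%M %Z")


if __name__ == "__main__":
    print(convert_timezone_sketch("2025-01-15 09:50", "Europe/London", "Europe/Lisbon"))
```

Returning a plain string keeps the result easy to pass back to the LLM as a tool output.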

Expanding the Client Code

async def get_tools_from_mcp():
    # ...
    return response.tools

async def call_mcp_tool(tool_name: str, arguments: dict) -> str:
    # ...
    result = await session.call_tool(tool_name, arguments)
    return str(result.content[0].text)

async def call_openai_llm(user_query: str):
    ...
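The session setup is elided on the slide, so `call_mcp_tool` cannot run on its own. To show the result shape it relies on (a `content` list whose first item carries a `text` attribute), the sketch below swaps in a stub session. `FakeSession` and its echo-style reply are hypothetical; a real client would use an initialized MCP session connected to the server.

```python
import asyncio
from types import SimpleNamespace


class FakeSession:
    """Hypothetical stand-in for an initialized MCP client session."""

    async def call_tool(self, tool_name: str, arguments: dict):
        # Mimic the shape the slide's code reads: result.content[0].text
        text = f"{tool_name} called with {sorted(arguments)}"
        return SimpleNamespace(content=[SimpleNamespace(type="text", text=text)])


session = FakeSession()


async def call_mcp_tool(tool_name: str, arguments: dict) -> str:
    result = await session.call_tool(tool_name, arguments)
    # Guard against an empty content list before indexing into it.
    if not result.content:
        return ""
    return str(result.content[0].text)


if __name__ == "__main__":
    print(asyncio.run(call_mcp_tool("convert_timezone", {"date_time": "2025-01-15 09:50"})))
```

The empty-content guard is an addition for safety; the slide's version indexes `content[0]` directly.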

Setup: Format the Tools for the LLM

async def call_openai_llm(user_query: str):
    """Call OpenAI LLM with MCP tools."""
    mcp_tools = await get_tools_from_mcp()

    openai_tools = []
    for tool in mcp_tools:
        openai_tool = {
            "type": "function",
            "name": tool.name,
            "description": tool.description or "",
            "parameters": tool.inputSchema,  # MCP uses JSON Schema format
        }
        openai_tools.append(openai_tool)
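To see what this loop produces, the sketch below runs the same mapping over a stubbed tool object. The stub and its `inputSchema` are hand-written approximations of what an MCP server might report for `convert_timezone`, not captured output; `mcp_tools_to_openai` is a hypothetical helper name.

```python
import json
from types import SimpleNamespace


def mcp_tools_to_openai(mcp_tools) -> list:
    """Map MCP tool objects to the flat function-tool layout used above."""
    return [
        {
            "type": "function",
            "name": tool.name,
            "description": tool.description or "",  # description may be None
            "parameters": tool.inputSchema,          # already JSON Schema
        }
        for tool in mcp_tools
    ]


# Stub standing in for one entry of `response.tools` (assumed shape).
stub_tool = SimpleNamespace(
    name="convert_timezone",
    description=None,
    inputSchema={
        "type": "object",
        "properties": {
            "date_time": {"type": "string"},
            "from_timezone": {"type": "string"},
            "to_timezone": {"type": "string"},
        },
        "required": ["date_time", "from_timezone", "to_timezone"],
    },
)

if __name__ == "__main__":
    print(json.dumps(mcp_tools_to_openai([stub_tool]), indent=2))
```

Because the schema passes through untouched, the only real work is renaming `inputSchema` to `parameters` and filling in an empty description when the server supplies none.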

1. Send the Query and Tools to the LLM

from openai import AsyncOpenAI

async def call_openai_llm(user_query: str):
    # ...
    client = AsyncOpenAI(api_key="<OPENAI_API_TOKEN>")
    response = await client.responses.create(
        model="gpt-4o-mini",
        input=user_query,
        tools=openai_tools,
    )

2. Check for a Tool Call

import json

async def call_openai_llm(user_query: str):
    # ...
    output = response.output[0]
    if output.type == "function_call":
        # Arguments arrive as a JSON string, so parse them into a dict
        args = json.loads(output.arguments)
        name = output.name
        print(f"Model decided to call: {name}")
        print(f"Arguments: {args}\n")
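Reading `response.output[0]` assumes the tool call is the first output item. That may not always hold, for instance when another item type precedes it or when the model requests several calls at once. A more defensive sketch scans the whole list; the stub items below only imitate the attributes used here, and `find_function_calls` is a hypothetical helper.

```python
import json
from types import SimpleNamespace


def find_function_calls(output_items) -> list:
    """Return (name, args, call_id) for every function_call item, in order."""
    calls = []
    for item in output_items:
        if item.type == "function_call":
            # Arguments arrive as a JSON string and need parsing.
            calls.append((item.name, json.loads(item.arguments), item.call_id))
    return calls


# Stubbed output list: a non-call item followed by one function call.
fake_output = [
    SimpleNamespace(type="reasoning"),
    SimpleNamespace(
        type="function_call",
        name="convert_timezone",
        call_id="call_123",
        arguments='{"date_time": "2025-01-15 09:50", '
                  '"from_timezone": "Europe/London", '
                  '"to_timezone": "Europe/Lisbon"}',
    ),
]

if __name__ == "__main__":
    for name, args, call_id in find_function_calls(fake_output):
        print(f"{call_id}: {name}({args})")
```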

3. Call the Tool | 4. Send the Follow-Up Message

async def call_openai_llm(user_query: str):
    # ...
    if output.type == "function_call":
        # ...
        # Step 3: run the tool on the MCP server
        result = await call_mcp_tool(name, args)

        # Step 4: send the original query, the model's tool call,
        # and the tool's output back to the model
        followup = await client.responses.create(
            model="gpt-4o-mini",
            input=[
                {"role": "user", "content": user_query},
                output,
                {"type": "function_call_output", "call_id": output.call_id, "output": result},
            ],
        )
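The follow-up input has three parts in a fixed order: the original user message, the model's own function call, and a `function_call_output` item that echoes the same `call_id`. The sketch below isolates that assembly as a pure function so the pairing is easy to inspect. `build_followup_input` is a hypothetical helper, and the function call is represented as a plain dict rather than an SDK object.

```python
def build_followup_input(user_query: str, function_call: dict, tool_result: str) -> list:
    """Assemble the follow-up input list for the second model request."""
    return [
        {"role": "user", "content": user_query},
        function_call,  # the model's earlier request, passed back unchanged
        {
            "type": "function_call_output",
            "call_id": function_call["call_id"],  # must match the request above
            "output": tool_result,
        },
    ]


if __name__ == "__main__":
    call = {
        "type": "function_call",
        "call_id": "call_123",
        "name": "convert_timezone",
        "arguments": '{"date_time": "2025-01-15 09:50"}',
    }
    for item in build_followup_input("What time is it in Lisbon?", call, "09:50"):
        print(item)
```

Matching `call_id` values are what let the model tie a tool output to the request that produced it.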

5. The Final Response

async def call_openai_llm(user_query: str):
    # ...
    if output.type == "function_call":
        # ...
        if followup.output and followup.output[0].type == "message":
            print(f"\nAssistant: {followup.output[0].content[0].text}")
        else:
            print("No follow-up message from model.")
    else:
        print(f"\nAssistant: {output.content[0].text}")
        return str(output.content[0].text)

Testing the Function

import asyncio

if __name__ == "__main__":
    asyncio.run(call_openai_llm(
        "It is 9:50 AM in the UK in January. What time is it in Lisbon, Portugal?"
    ))

Assistant: It's 9:50 AM in Lisbon as well.

The Tool-Calling Workflow
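As a recap, the five steps can be traced offline with scripted stand-ins for both the model and the tool. Nothing here talks to OpenAI or an MCP server: `fake_llm`, `fake_tool`, and `run_workflow` are hypothetical names, and the "model" simply requests the first tool once and then answers from its output.

```python
import json


def fake_llm(messages: list, tools: list) -> dict:
    """Scripted stand-in for a model: request a tool once, then answer."""
    tool_outputs = [m for m in messages if m.get("type") == "function_call_output"]
    if tool_outputs:
        return {"type": "message", "text": f"Based on the tool: {tool_outputs[-1]['output']}"}
    if tools:
        return {"type": "function_call", "call_id": "call_1",
                "name": tools[0]["name"],
                "arguments": json.dumps({"city": "Lisbon"})}
    return {"type": "message", "text": "Answered without tools."}


def fake_tool(name: str, args: dict) -> str:
    """Stand-in for a tool call on an MCP server."""
    return f"{name} ran with {args}"


def run_workflow(user_query: str, tools: list) -> str:
    messages = [{"role": "user", "content": user_query}]
    output = fake_llm(messages, tools)                 # 1. send query and tools
    if output["type"] != "function_call":              # 2. check for a tool call
        return output["text"]
    args = json.loads(output["arguments"])
    result = fake_tool(output["name"], args)           # 3. call the tool
    messages += [output, {"type": "function_call_output",
                          "call_id": output["call_id"],
                          "output": result}]           # 4. follow-up message
    return fake_llm(messages, tools)["text"]           # 5. final response


if __name__ == "__main__":
    print(run_workflow("What time is it in Lisbon?", [{"name": "convert_timezone"}]))
```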

Let's practice!
