Production pipelines with workflows

Data Transformation with Spark SQL in Databricks

Disha Mukherjee

Lead Data Engineer

Why Delta Lake?

- ACID transactions → roll back failed writes

- Schema enforcement → block mismatched types

- Versioning → query any previous state
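The three guarantees can be illustrated with a toy, in-memory table in plain Python. `ToyVersionedTable` and `SchemaMismatchError` are hypothetical names, not part of Delta Lake. Delta Lake implements these guarantees with a transaction log on storage, which this sketch does not attempt to reproduce. Each write here replaces the previous contents, like `mode("overwrite")`.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any


class SchemaMismatchError(ValueError):
    """Raised when a row does not match the table's declared schema."""


@dataclass
class ToyVersionedTable:
    """Hypothetical in-memory model of three Delta Lake guarantees (not the Delta API)."""

    schema: dict[str, type]
    versions: list[list[dict[str, Any]]] = field(default_factory=list)

    def _check(self, row: dict[str, Any]) -> None:
        # Schema enforcement: same column set, and every value has the declared type.
        if set(row) != set(self.schema):
            raise SchemaMismatchError(f"columns {sorted(row)} != {sorted(self.schema)}")
        for col, expected in self.schema.items():
            if not isinstance(row[col], expected):
                raise SchemaMismatchError(
                    f"{col}: expected {expected.__name__}, got {type(row[col]).__name__}"
                )

    def write(self, rows: list[dict[str, Any]]) -> int:
        # All-or-nothing: validate the whole batch before committing anything,
        # so a failed write leaves the table exactly as it was.
        for row in rows:
            self._check(row)
        self.versions.append(list(rows))  # overwrite: new version replaces old contents
        return len(self.versions) - 1     # version number of this commit

    def read(self, version: int | None = None) -> list[dict[str, Any]]:
        # Versioning: read the latest commit, or any earlier one by number.
        if not self.versions:
            return []
        return list(self.versions[-1 if version is None else version])


table = ToyVersionedTable(schema={"Customer_ID": str, "Amount": float})
table.write([{"Customer_ID": "C1", "Amount": 10.0}])          # commits version 0
try:
    table.write([{"Customer_ID": "C2", "Amount": 5.0},
                 {"Customer_ID": "C3", "Amount": "oops"}])     # second row has a bad type
except SchemaMismatchError as err:
    rejected = str(err)                                        # nothing from this batch was kept
table.write([{"Customer_ID": "C2", "Amount": 5.0}])            # commits version 1
```

The rejected batch leaves no trace: only two versions exist, and `table.read(version=0)` still returns the first commit.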

Writing to Delta

```python
# df_valid holds the cleaned rows produced earlier in the notebook
df_valid.write.format("delta") \
    .mode("overwrite") \
    .saveAsTable("transactions_clean")

print(f"Rows written: {df_valid.count():,}")
```

Rows written: 33,223
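The `mode("overwrite")` call matters once this notebook runs repeatedly: re-running it replaces the table's contents instead of stacking duplicate rows. A toy model in plain Python, with a hypothetical `save` helper and a three-row stand-in for `df_valid`, contrasts it with append mode:

```python
def save(existing_rows, new_rows, mode):
    """Hypothetical toy model of two Spark save modes on a table held as a list."""
    if mode == "overwrite":
        return list(new_rows)  # previous contents are replaced
    if mode == "append":
        return list(existing_rows) + list(new_rows)  # previous contents are kept
    raise ValueError(f"unsupported mode: {mode!r}")


batch = [{"Customer_ID": f"C{i}"} for i in range(3)]  # stand-in for df_valid

overwritten: list = []
appended: list = []
for _ in range(2):  # simulate the notebook running twice
    overwritten = save(overwritten, batch, "overwrite")
    appended = save(appended, batch, "append")

print(f"After 2 runs, overwrite: {len(overwritten):,} rows")
print(f"After 2 runs, append: {len(appended):,} rows")
```

With overwrite the row count stays the same however many times the notebook runs; with append it grows by one full batch per run.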

- A new Delta table appears in Unity Catalog

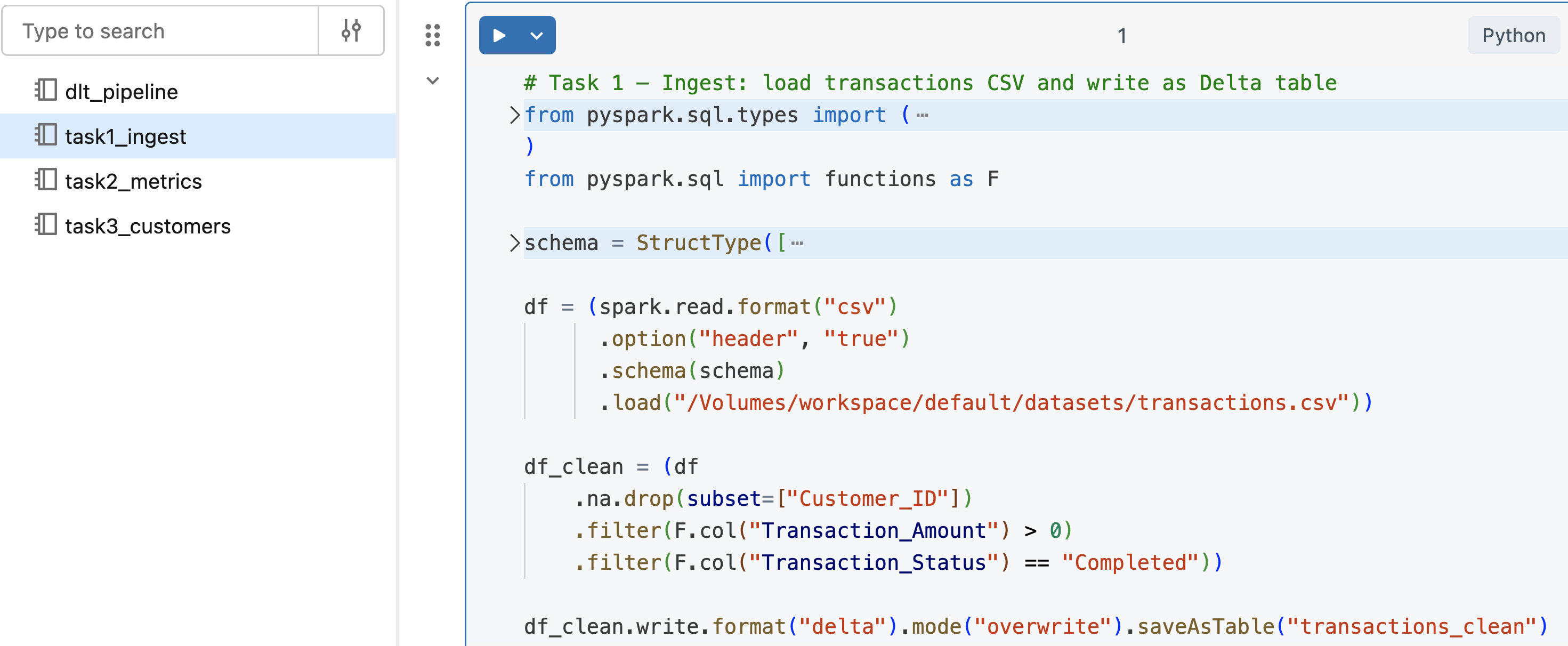

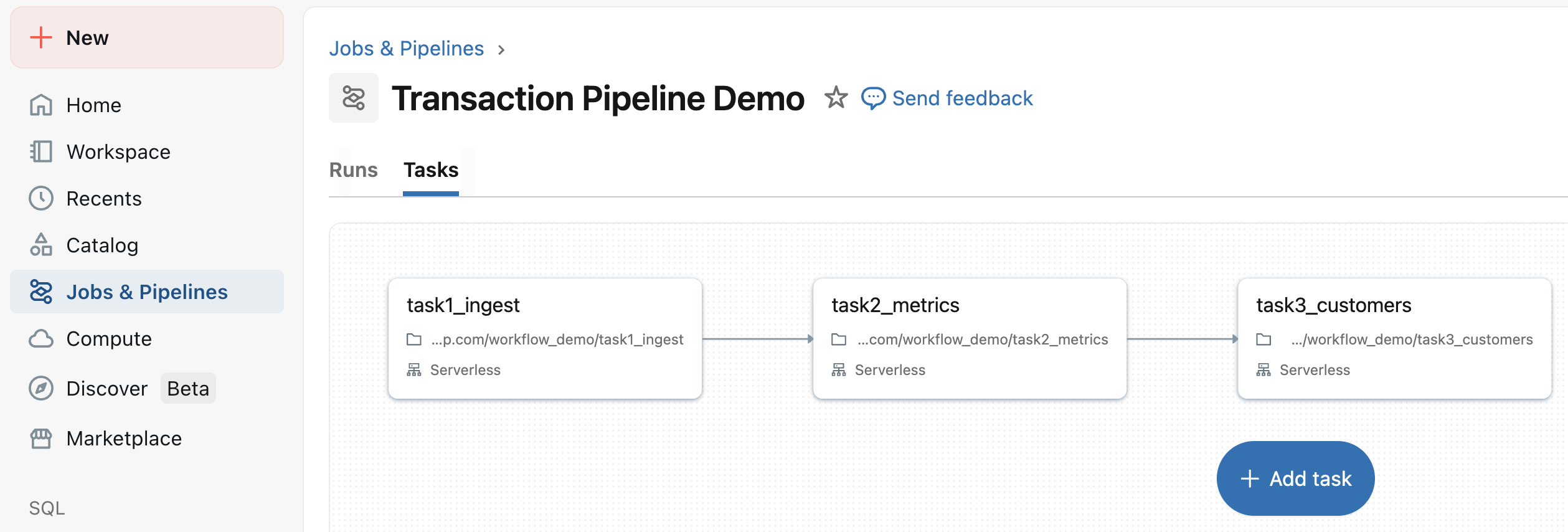

Notebook tasks

- Loads CSV → applies cleaning → writes a Delta table

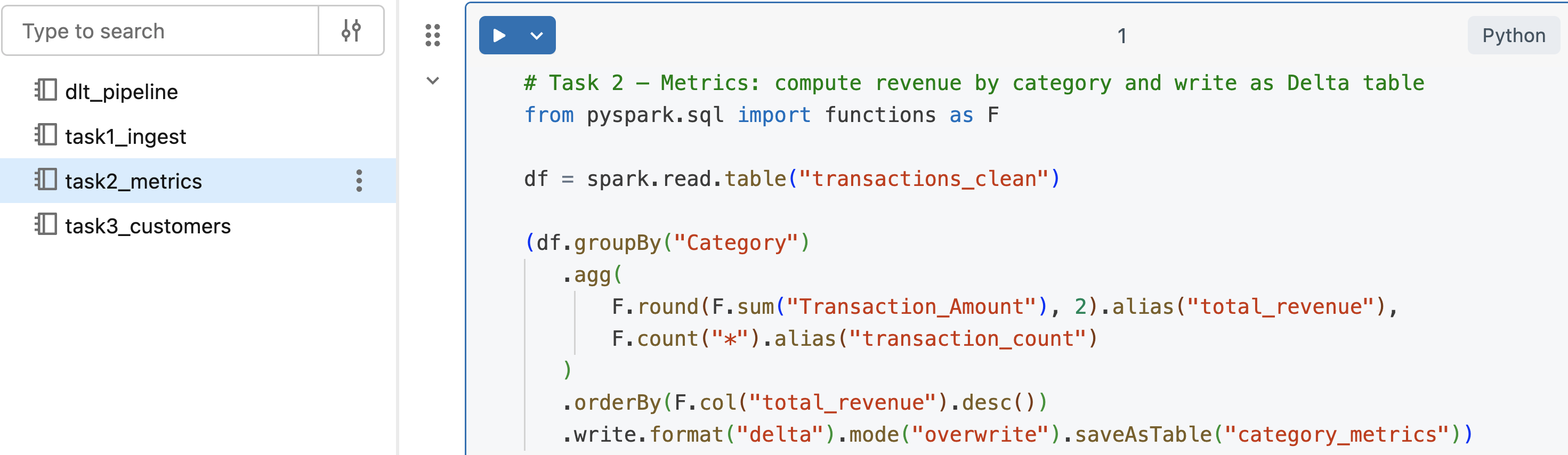

- Reads cleaned table → computes metrics → writes to a new table

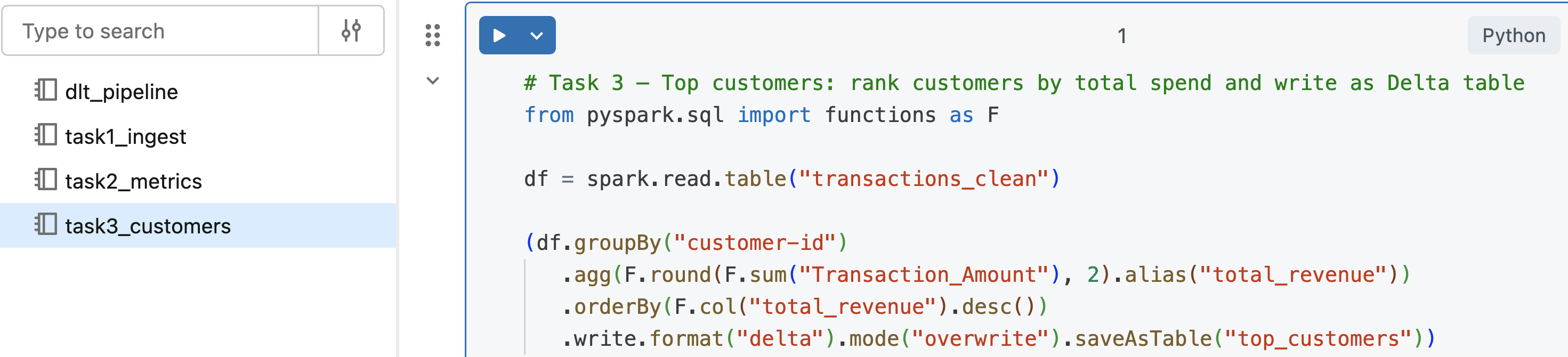

- Reads cleaned table → ranks customers → saves to a new table
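The three notebook tasks can be sketched as plain Python functions over a handful of in-memory rows. This is a toy model, not the course's notebooks: the `Amount` column, the sample rows, and the cleaning rules (drop rows with missing values, keep only positive amounts) are assumptions; only `Customer_ID` and `Category` appear in the slides.

```python
from collections import defaultdict

# Hypothetical sample rows standing in for the raw CSV.
RAW_ROWS = [
    {"Customer_ID": "C1", "Category": "Books", "Amount": "12.50"},
    {"Customer_ID": "C2", "Category": "Toys", "Amount": None},
    {"Customer_ID": "C1", "Category": "Toys", "Amount": "30.00"},
    {"Customer_ID": "C3", "Category": "Books", "Amount": "-5.00"},
    {"Customer_ID": "C2", "Category": "Books", "Amount": "8.00"},
]


def clean(rows):
    """Task 1: drop rows with missing values or non-positive amounts; cast Amount."""
    out = []
    for row in rows:
        if any(value is None for value in row.values()):
            continue
        amount = float(row["Amount"])
        if amount <= 0:
            continue
        out.append({**row, "Amount": amount})
    return out


def category_metrics(clean_rows):
    """Task 2: total revenue per category, computed from the cleaned rows only."""
    totals = defaultdict(float)
    for row in clean_rows:
        totals[row["Category"]] += row["Amount"]
    return dict(totals)


def rank_customers(clean_rows):
    """Task 3: customers ranked by total spend, highest first."""
    spend = defaultdict(float)
    for row in clean_rows:
        spend[row["Customer_ID"]] += row["Amount"]
    ordered = sorted(spend.items(), key=lambda item: item[1], reverse=True)
    return [(rank, customer, total) for rank, (customer, total) in enumerate(ordered, start=1)]


cleaned = clean(RAW_ROWS)            # output of task 1 ("transactions_clean")
metrics = category_metrics(cleaned)  # task 2 depends only on task 1
ranking = rank_customers(cleaned)    # task 3 also depends only on task 1
```

Because tasks 2 and 3 read only the cleaned data, neither needs the other's output; the job only has to guarantee that task 1 finishes first.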

Creating the job

- A job can run manually, on a schedule, or in response to a trigger
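Behind the UI, a job is a list of tasks plus their dependencies. The dictionary below is modelled on the shape of a Databricks Jobs API 2.1 job definition (`tasks`, `task_key`, `depends_on`, `notebook_task`, `schedule`); the job name, task keys, notebook paths, and cron expression are hypothetical. The helper uses Python's `graphlib` to show the order such a spec implies; it is an illustration, not how Databricks schedules tasks internally.

```python
from graphlib import TopologicalSorter

# Hypothetical job spec, shaped like a Jobs API 2.1 payload.
JOB_SPEC = {
    "name": "transactions_pipeline",
    "tasks": [
        {"task_key": "clean",
         "notebook_task": {"notebook_path": "/Workspace/pipeline/01_clean"}},
        {"task_key": "category_metrics",
         "depends_on": [{"task_key": "clean"}],
         "notebook_task": {"notebook_path": "/Workspace/pipeline/02_metrics"}},
        {"task_key": "rank_customers",
         "depends_on": [{"task_key": "clean"}],
         "notebook_task": {"notebook_path": "/Workspace/pipeline/03_rank"}},
    ],
    # Optional schedule: daily at 06:00 UTC (Quartz cron). Omit it to run only manually.
    "schedule": {"quartz_cron_expression": "0 0 6 * * ?", "timezone_id": "UTC"},
}


def execution_waves(job_spec):
    """Group task keys into waves; tasks in one wave do not depend on each other."""
    graph = {
        task["task_key"]: {dep["task_key"] for dep in task.get("depends_on", [])}
        for task in job_spec["tasks"]
    }
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every task whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = execution_waves(JOB_SPEC)
print(waves)
```

Both downstream tasks depend only on `clean`, so they land in the same wave and could run side by side once it succeeds.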

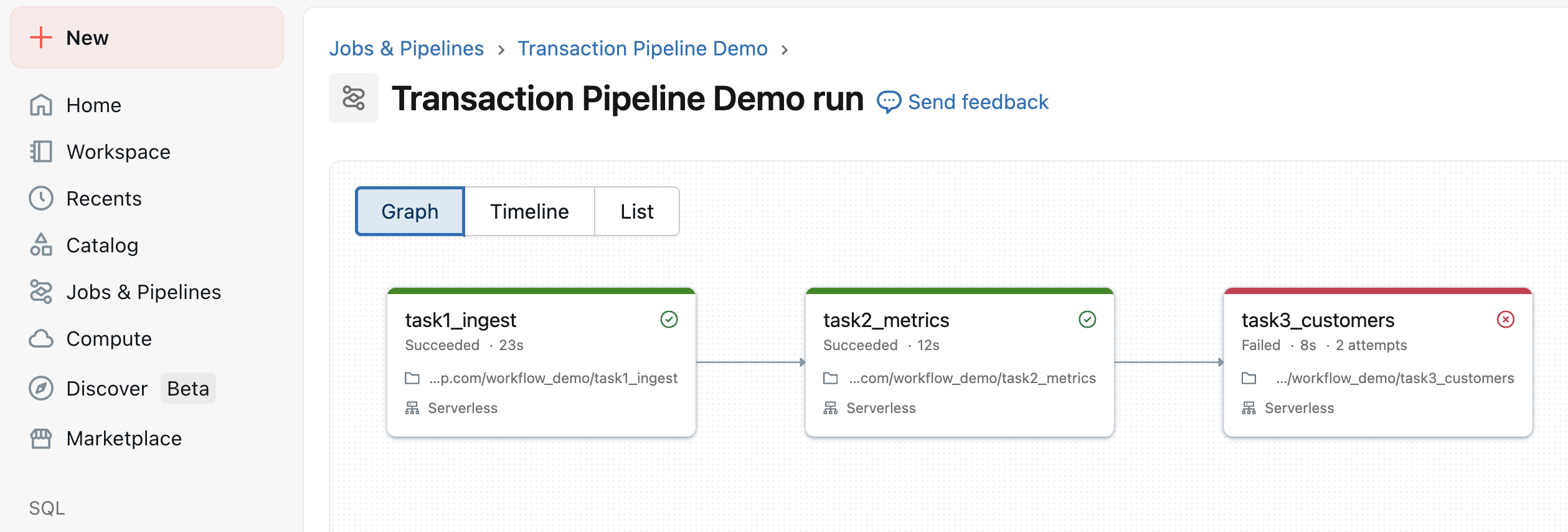

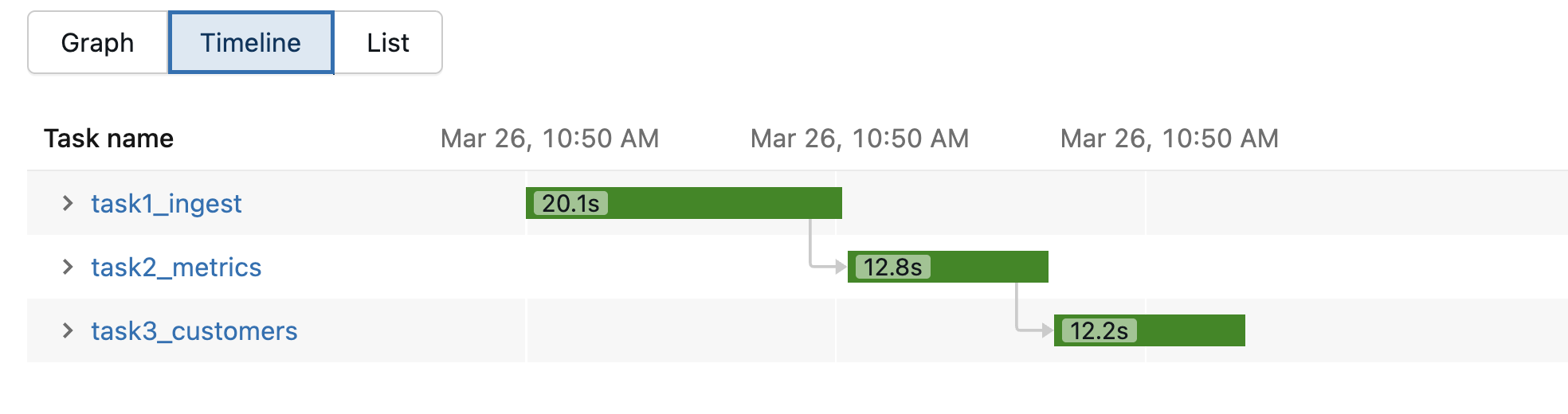

Running the job

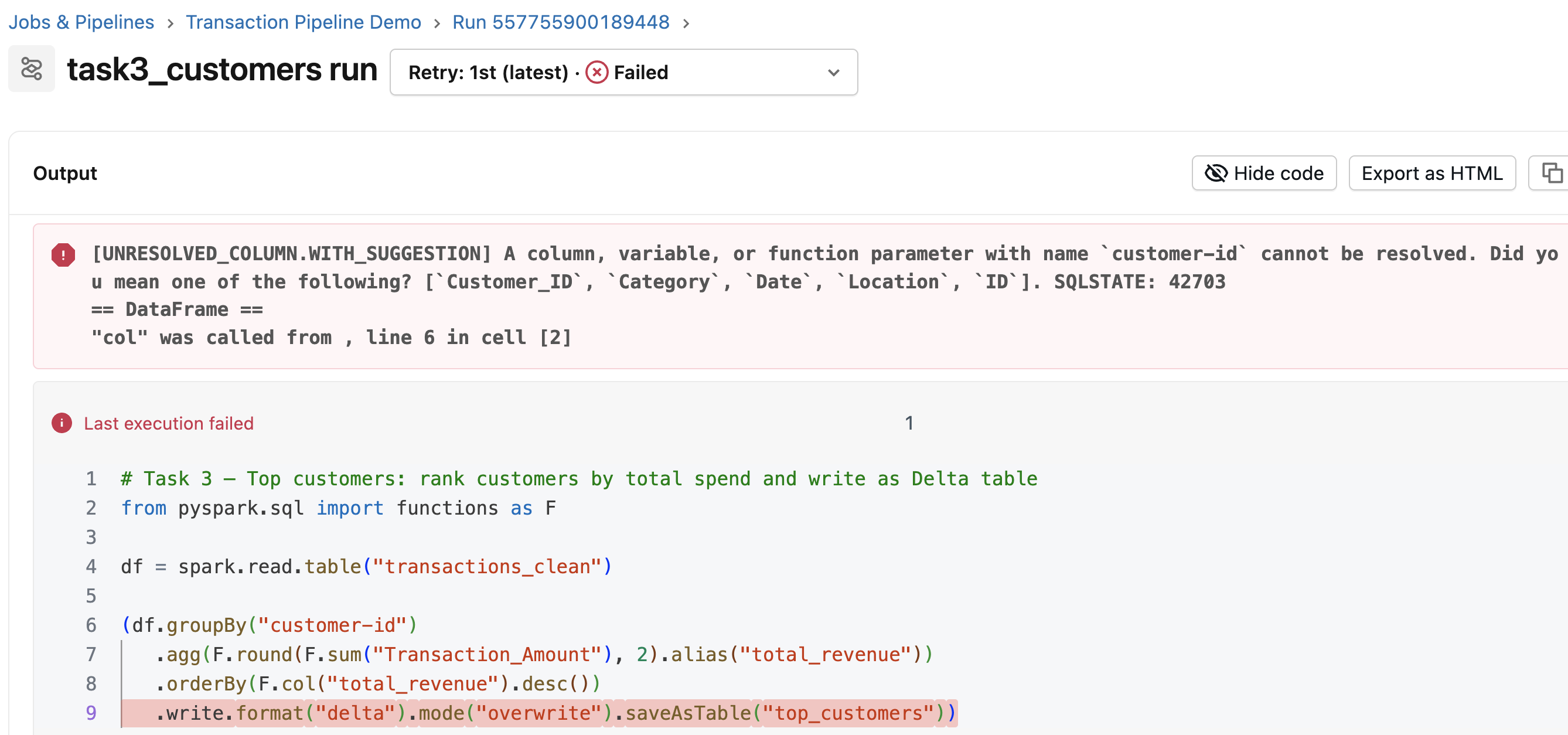

- Error: `customer-id` should be `Customer_ID`
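A failure like this can be caught before the job starts. The sketch below is a hypothetical pre-flight check in plain Python (not a Databricks feature): it compares the column names a notebook refers to with the columns that actually exist, and suggests a near match when the only difference is case or `-` versus `_`. The column lists are made up; in a notebook, `available` could come from `df.columns`.

```python
def check_columns(referenced, available):
    """Return (missing, suggestions) for column names used in code.

    `missing` lists referenced names that do not exist in `available`;
    `suggestions` maps each missing name to an existing column that matches
    once case is ignored and '-' is treated as '_'.
    """
    def normalise(name):
        return name.lower().replace("-", "_")

    by_normalised = {normalise(col): col for col in available}
    missing = [col for col in referenced if col not in available]
    suggestions = {
        col: by_normalised[normalise(col)]
        for col in missing
        if normalise(col) in by_normalised
    }
    return missing, suggestions


missing, suggestions = check_columns(
    referenced=["customer-id", "Category"],
    available=["Customer_ID", "Category", "Amount"],
)
for name in missing:
    hint = suggestions.get(name)
    print(f"Unknown column {name!r}" + (f"; did you mean {hint!r}?" if hint else ""))
```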

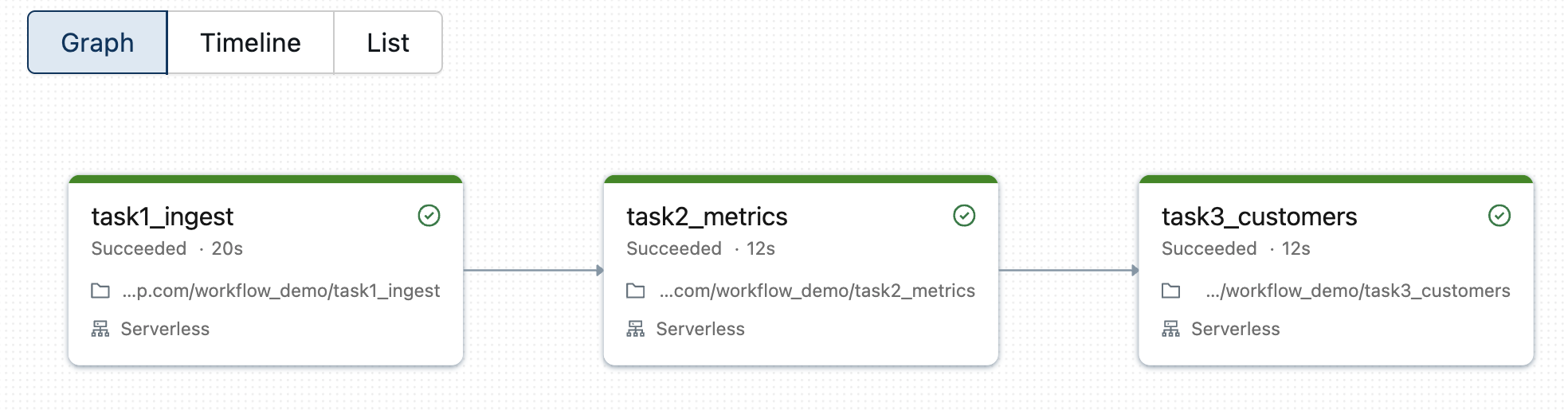

What is Lakeflow?

- Jobs → we define and manage each step ourselves

- Lakeflow → we declare what each table should contain

- Databricks handles execution order, retries, and compute

The @dlt.table pattern

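```python
import dlt  # available inside Lakeflow (Delta Live Tables) pipelines

# spark is provided by the runtime; schema and FILE_PATH are defined earlier


@dlt.table(name="transactions_bronze")
def transactions_bronze():
    # Bronze: raw CSV rows, loaded as-is
    return spark.read.format("csv").schema(schema).load(FILE_PATH)


@dlt.table(name="transactions_silver")
def transactions_silver():
    # Silver: drop incomplete rows, then filter out invalid ones
    return dlt.read("transactions_bronze").na.drop(...).filter(...)


@dlt.table(name="category_revenue_gold")
def category_revenue_gold():
    # Gold: one aggregated row per category
    return dlt.read("transactions_silver").groupBy("Category").agg(...)
```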
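To see why declaring tables is enough for the framework to work out the order, here is a toy imitation of the pattern in plain Python. `ToyPipeline`, its `table` decorator, and its `read` method are hypothetical stand-ins for `@dlt.table` and `dlt.read`, and the rows are made up; the real engine builds its dependency graph and manages compute in ways this sketch does not attempt.

```python
from typing import Callable


class ToyPipeline:
    """Hypothetical stand-in for a declarative pipeline (not the dlt API)."""

    def __init__(self, max_retries: int = 2) -> None:
        self.max_retries = max_retries
        self._definitions: dict[str, Callable[[], list]] = {}
        self._results: dict[str, list] = {}
        self.run_order: list[str] = []

    def table(self, name: str):
        """Decorator: register a table definition without running it."""
        def register(fn: Callable[[], list]) -> Callable[[], list]:
            self._definitions[name] = fn
            return fn
        return register

    def read(self, name: str) -> list:
        """Compute `name` on first use, so upstream tables always run first."""
        if name not in self._results:
            self._results[name] = self._run_with_retries(name)
            self.run_order.append(name)
        return self._results[name]

    def _run_with_retries(self, name: str) -> list:
        # Re-run a failing definition up to max_retries extra times.
        for attempt in range(self.max_retries + 1):
            try:
                return self._definitions[name]()
            except Exception:
                if attempt == self.max_retries:
                    raise
        raise RuntimeError("max_retries must be >= 0")

    def run(self) -> dict[str, list]:
        # Declaration order does not matter: read() resolves dependencies.
        for name in list(self._definitions):
            self.read(name)
        return self._results


pipeline = ToyPipeline()


# Declared gold-first on purpose, the reverse of the order they must run in.
@pipeline.table("category_revenue_gold")
def category_revenue_gold():
    totals: dict[str, float] = {}
    for row in pipeline.read("transactions_silver"):
        totals[row["Category"]] = totals.get(row["Category"], 0.0) + row["Amount"]
    return sorted(totals.items())


@pipeline.table("transactions_silver")
def transactions_silver():
    return [row for row in pipeline.read("transactions_bronze") if row["Amount"] is not None]


@pipeline.table("transactions_bronze")
def transactions_bronze():
    return [
        {"Category": "Books", "Amount": 12.5},
        {"Category": "Toys", "Amount": None},
        {"Category": "Books", "Amount": 8.0},
    ]


results = pipeline.run()
print(pipeline.run_order)
```

Even though gold is declared first, the run order comes out bronze, silver, gold, because each table is computed the first time something reads it.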

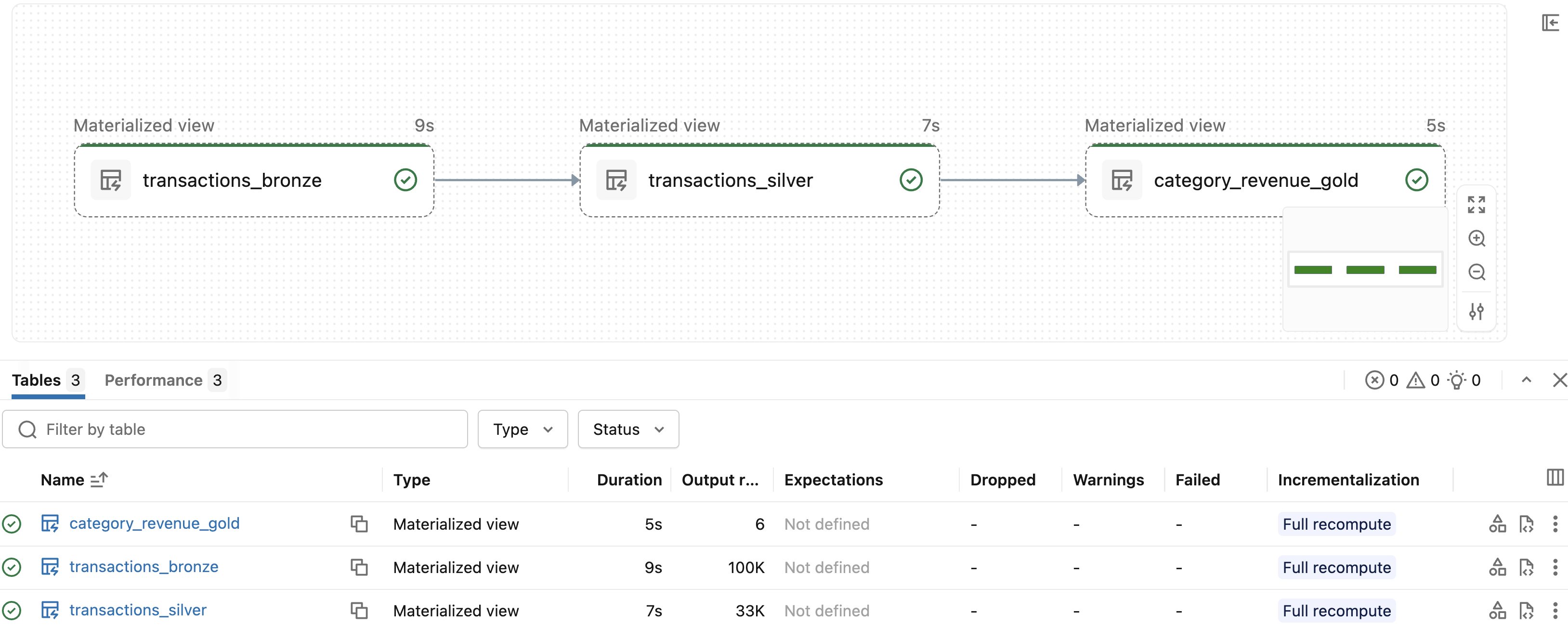

Pipeline run

- Bronze (100K rows) → Silver (33K rows) → Gold (6 rows)

Notebooks, Jobs, or Lakeflow?

- Notebooks → exploring and prototyping

- Databricks Jobs → multi-step, scheduled pipelines

- Lakeflow → fully managed, declarative pipelines

Let's practice!
