Wrapping up

Data Transformation with Spark SQL in Databricks

Disha Mukherjee

Lead Data Engineer

Chapter 1

- Loading and inspecting DataFrames

- Shaping data with PySpark and Spark SQL

Chapter 2

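    from pyspark.sql import functions as F

    # Row counts before and after removing exact duplicate rows
    total_rows = df_enriched.count()
    distinct_rows = df_enriched.distinct().count()

    # Percentage of rows with a missing Customer_ID
    null_rate = (
        df_enriched.filter(F.col("Customer_ID").isNull())
        .count() / total_rows * 100
    )

    print(f"Total rows: {total_rows:,}")
    print(f"Duplicates: {total_rows - distinct_rows}")
    print(f"Null rate: {null_rate:.2f}%")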

Total rows: 33,223

Duplicates: 0

Null rate: 0.00%

- Cleaning nulls, duplicates, and invalid records

- Aggregating and joining with broadcast optimization

Chapter 3

- Window functions and streaming queries

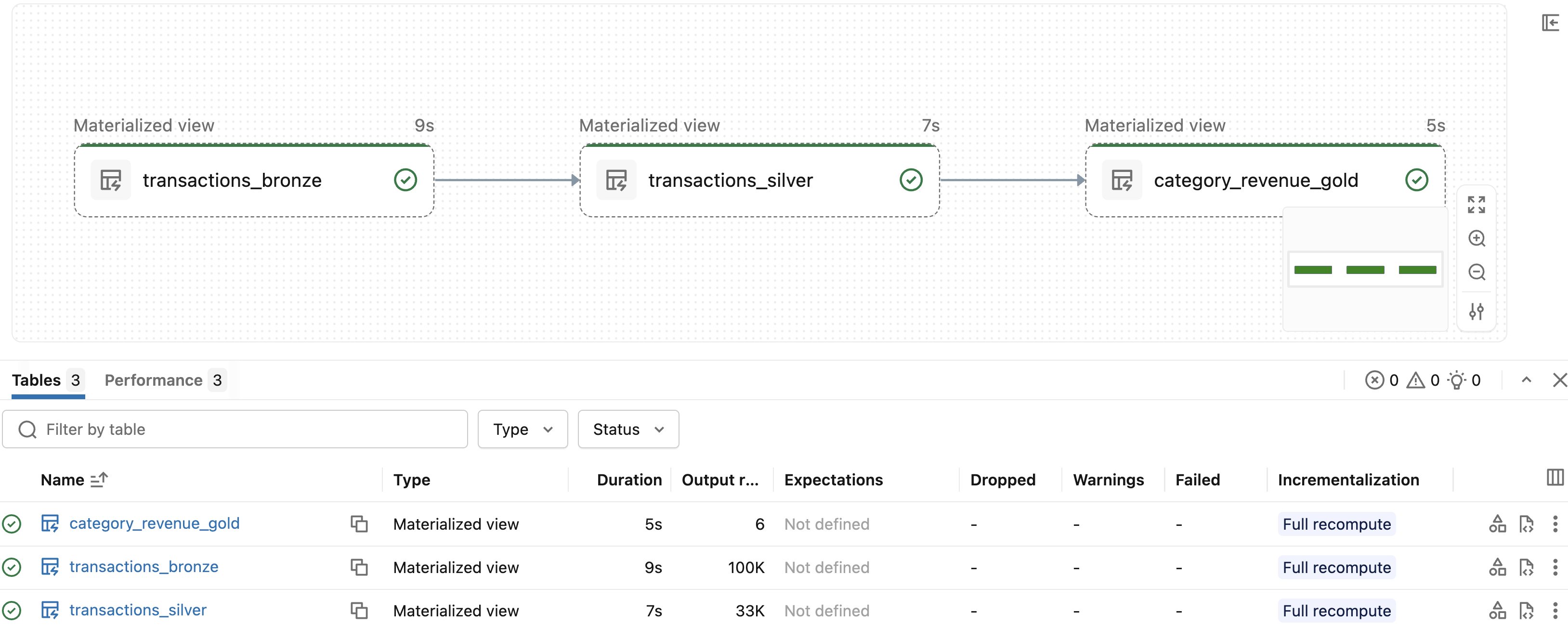

- Production pipelines with Databricks Jobs and Lakeflow

Practice in a live Databricks workspace

- Transactional and online retail datasets

- Interactive practice in a live Databricks workspace

Congratulations!
