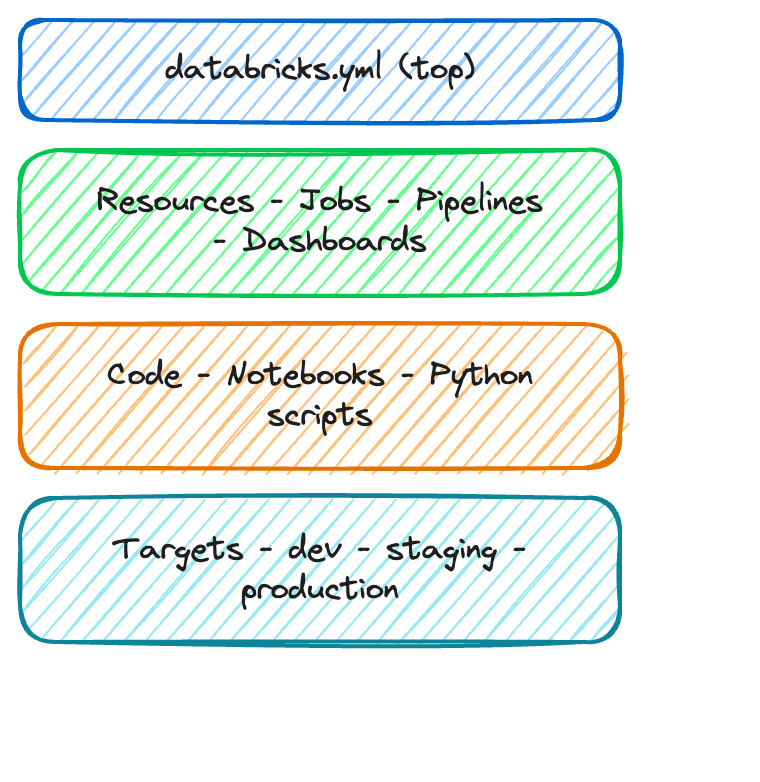

Inside an asset bundle

Introduction to Databricks Lakehouse

Gang Wang

Senior Data Scientist

Bundle project structure

    my_project/
    |-- databricks.yml
    |-- src/
    |   |-- etl_pipeline.py
    |   |-- data_quality.py
    |-- resources/
    |   |-- jobs/
    |   |   |-- nightly_etl.yml
    |   |-- pipelines/
    |       |-- sales_pipeline.yml
    |-- tests/
        |-- test_etl.py

- `databricks.yml` - the central config file
- `src/` - your notebooks and code
- `resources/` - job and pipeline definitions
- `tests/` - optional test files
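As a minimal sketch, the layout above can be created by hand with standard shell commands. The directory and file names are taken from the slide; the files are created empty and are only placeholders. The Databricks CLI also offers `databricks bundle init`, which generates a project from a template and produces its own layout, so treat this as an illustration of the structure rather than the recommended way to start.

```shell
# Create the bundle directories under the current working directory.
mkdir -p my_project/src \
         my_project/resources/jobs \
         my_project/resources/pipelines \
         my_project/tests

# Create empty placeholder files; real config and code go in later.
touch my_project/databricks.yml \
      my_project/src/etl_pipeline.py \
      my_project/src/data_quality.py \
      my_project/resources/jobs/nightly_etl.yml \
      my_project/resources/pipelines/sales_pipeline.yml \
      my_project/tests/test_etl.py
```

Running `find my_project -type f` afterwards lists the six files from the tree.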

The databricks.yml file

    bundle:
      name: sales_analytics

    workspace:
      host: https://myworkspace.databricks.com

    targets:
      dev:
        default: true
        workspace:
          root_path: /Users/me/dev

      production:
        workspace:
          root_path: /Shared/production
        permissions:
          - level: CAN_MANAGE
            group_name: data_engineers

Defining resources

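    resources:
      jobs:
        nightly_etl:
          name: "Nightly ETL Pipeline"
          schedule:
            # Runs every day at 02:00
            quartz_cron_expression: "0 0 2 * * ?"
            # A time zone is required alongside the cron expression; UTC is an assumed value
            timezone_id: UTC
          tasks:
            - task_key: ingest
              notebook_task:
                notebook_path: src/etl.py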

Bundle CLI commands

| Command | Purpose |
|---|---|
| `bundle validate` | Check config for errors |
| `bundle deploy` | Deploy to a target |
| `bundle run` | Trigger a deployed job |
| `bundle destroy` | Remove deployed resources |

    # Validate before deploying
    databricks bundle validate

    # Deploy to production
    databricks bundle deploy \
      --target production

    # Trigger a run
    databricks bundle run nightly_etl
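The commands above cover `validate`, `deploy`, and `run`. As a sketch of the fourth command in the table, the helper below removes what a bundle deployed to one target. The function name `teardown_target` and the `DATABRICKS_CLI` variable are hypothetical conveniences introduced here, not part of the Databricks CLI; the variable exists only so the command can be dry-run with `echo`.

```shell
# CLI binary to call; override for a dry run, e.g. DATABRICKS_CLI=echo.
# (Hypothetical variable, not something the Databricks CLI reads.)
DATABRICKS_CLI="${DATABRICKS_CLI:-databricks}"

# Remove the resources this bundle deployed to the given target.
# The CLI asks for confirmation unless --auto-approve is added.
teardown_target() {
  "$DATABRICKS_CLI" bundle destroy --target "$1"
}
```

For example, `teardown_target dev` would clean up the `dev` target defined in `databricks.yml`.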

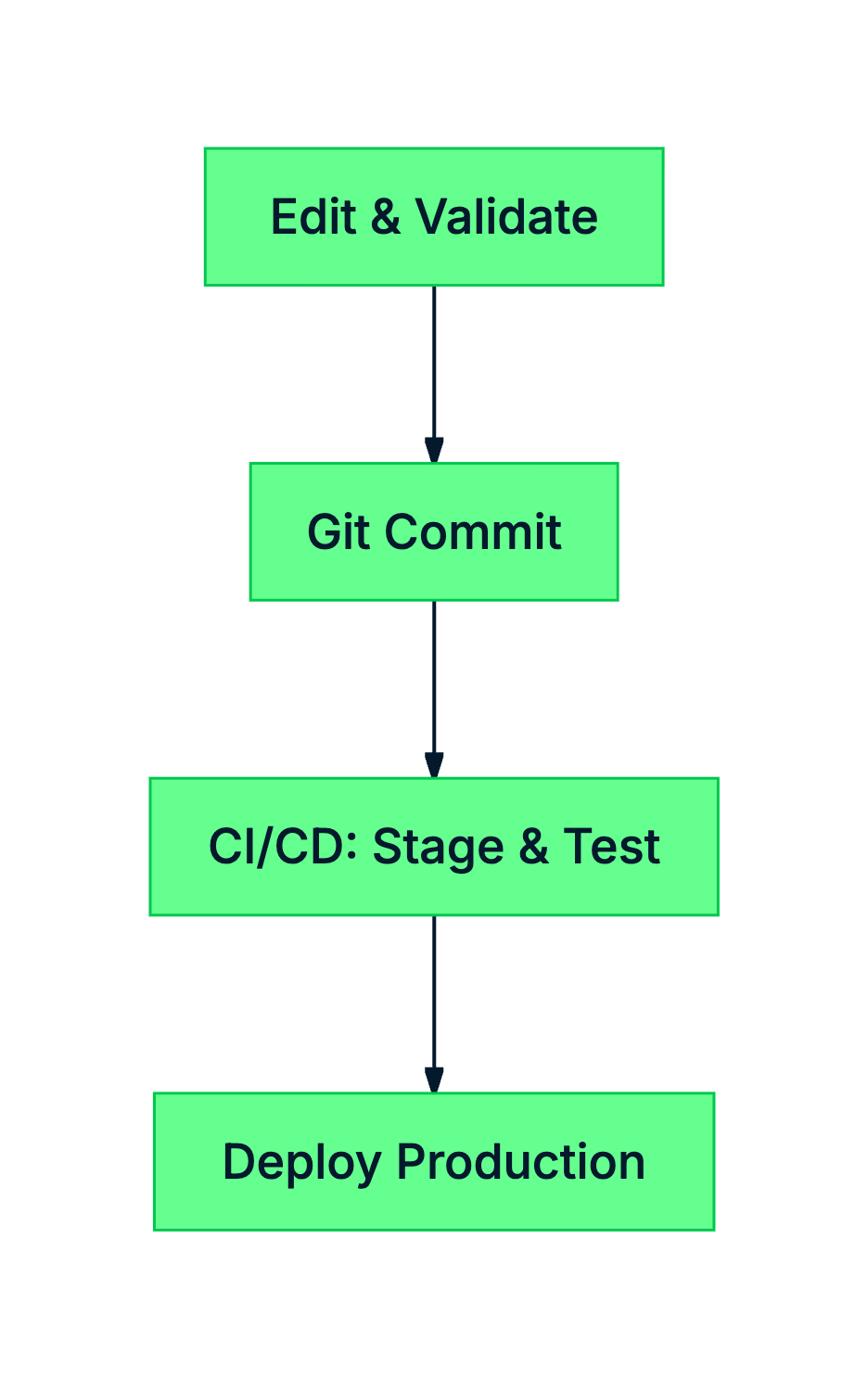

Deployment workflow

- Local development - edit code and validate configs

- Version control - commit changes to Git

- CI/CD automation - deploy to staging and run tests

- Production promotion - deploy with confidence
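The CI/CD step in this workflow could be scripted roughly as follows. This is a sketch under stated assumptions: it presumes a `staging` target exists in `databricks.yml` (the config shown earlier only defines `dev` and `production`), and the function names and the `DATABRICKS_CLI` variable are hypothetical helpers, not CLI features. Only `bundle validate`, `bundle deploy`, `bundle run`, and `--target` come from the CLI itself.

```shell
#!/bin/sh
# Stop at the first failing command so a bad config never gets deployed.
set -eu

# CLI binary to call; override for a dry run, e.g. DATABRICKS_CLI=echo.
# (Hypothetical variable, not something the Databricks CLI reads.)
DATABRICKS_CLI="${DATABRICKS_CLI:-databricks}"

# Validate the bundle for one target, then deploy it to that target.
deploy_target() {
  "$DATABRICKS_CLI" bundle validate --target "$1"
  "$DATABRICKS_CLI" bundle deploy --target "$1"
}

# Hypothetical CI entry point: deploy to staging and trigger the job there.
promote_to_staging() {
  deploy_target staging
  "$DATABRICKS_CLI" bundle run --target staging nightly_etl
}
```

A CI job would call `promote_to_staging` on each merge, and a separate, gated job would call `deploy_target production` once the staging run succeeds.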

Summary

- `databricks.yml` defines your project, targets, and resources
- Resources include jobs, pipelines, and dashboards
- Targets map to environments (dev, staging, production)
- CLI commands: `validate`, `deploy`, `run`, `destroy`

Let's practice!
