Attention mechanisms for text generation

Deep Learning for Text with PyTorch

Shubham Jain

Instructor

L’ambiguïté dans le traitement du texte

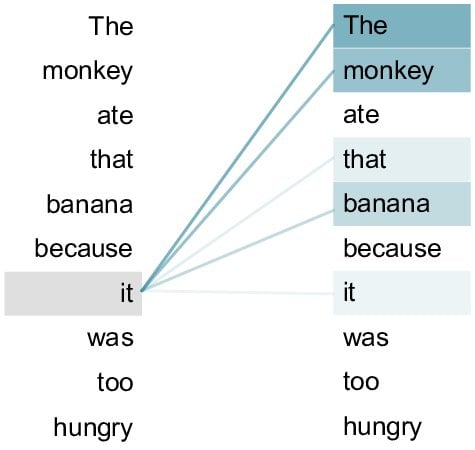

"- « Le singe a mangé cette banane car il était trop affamé »

À quoi fait référence le pronom « il » ici ?

"

"

Attention mechanisms

- Assign importance to words
- Help the machine's interpretation match human understanding
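One way to make "assigning importance to words" concrete is to score each word and turn the scores into weights that sum to 1 with a softmax. The sketch below is purely illustrative: the scores are made-up numbers chosen for the monkey sentence, not the output of a trained model.

```python
import torch

# Hypothetical relevance scores for resolving "it"; made-up values for illustration
words = ["The", "monkey", "ate", "that", "banana"]
scores = torch.tensor([0.1, 2.0, 0.3, 0.1, 1.0])

# Softmax turns raw scores into attention weights that are positive and sum to 1
weights = torch.softmax(scores, dim=0)

for word, weight in zip(words, weights):
    print(f"{word:>7}: {weight.item():.2f}")

# The word with the largest weight is the one the model "attends to" most
most_attended = words[int(torch.argmax(weights))]
print("Most attended word:", most_attended)
```

In a real model the scores are learned, so the weights reflect patterns in the training data rather than hand-picked values.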

Self-attention and multi-head attention

- Self-attention: assigns importance to words within a sentence
  - "The cat, which was on the roof, got scared"
  - Links "got scared" to "The cat"
- Multi-head attention: like having several spotlights, each capturing a different facet
  - Understanding "got scared" may relate to "The cat", "the roof", or "was on"
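As a rough sketch of both ideas, PyTorch's `nn.MultiheadAttention` can be applied to a sentence with the same tensor as query, key, and value, which is what makes it self-attention. The embedding size, the number of heads, and the random vectors standing in for word embeddings are all assumptions made for illustration; with untrained weights the attention pattern is arbitrary and will not actually link "got scared" to "the cat".

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

tokens = ["the", "cat", "which", "was", "on", "the", "roof", "got", "scared"]
embed_dim, num_heads = 8, 2  # assumed sizes; embed_dim must be divisible by num_heads

# Random vectors stand in for learned word embeddings: (batch, seq_len, embed_dim)
x = torch.randn(1, len(tokens), embed_dim)

mha = nn.MultiheadAttention(embed_dim, num_heads, batch_first=True)

# Self-attention: the sequence serves as query, key, and value
output, attn = mha(x, x, x, need_weights=True, average_attn_weights=False)

print(output.shape)  # torch.Size([1, 9, 8]): one updated vector per token
print(attn.shape)    # torch.Size([1, 2, 9, 9]): per head, a weight for every token pair
```

Each head produces its own 9 x 9 weight matrix, which is the "several spotlights" picture: after training, different heads can focus on different relationships in the same sentence.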

Attention mechanism - defining the vocabulary and data

```python
import torch

data = ["the cat sat on the mat", ...]

# Build the vocabulary and the word <-> index lookups
vocab = set(' '.join(data).split())
word_to_ix = {word: i for i, word in enumerate(vocab)}
ix_to_word = {i: word for word, i in word_to_ix.items()}

# Inputs are all words but the last; the target is the last word
pairs = [sentence.split() for sentence in data]
input_data = [[word_to_ix[word] for word in sentence[:-1]] for sentence in pairs]
target_data = [word_to_ix[sentence[-1]] for sentence in pairs]
inputs = [torch.tensor(seq, dtype=torch.long) for seq in input_data]
targets = torch.tensor(target_data, dtype=torch.long)
```
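To see what these structures contain, here is a small worked example. The two sentences are made up to stand in for the elided dataset, and `sorted()` is added only so the index assignment is reproducible (a plain `set` has no fixed order between runs).

```python
import torch

# Two made-up sentences standing in for the elided dataset
data = ["the cat sat on the mat", "the dog sat on the rug"]

# sorted() makes the word -> index mapping reproducible
vocab = sorted(set(" ".join(data).split()))
word_to_ix = {word: i for i, word in enumerate(vocab)}
ix_to_word = {i: word for word, i in word_to_ix.items()}

pairs = [sentence.split() for sentence in data]
input_data = [[word_to_ix[w] for w in sentence[:-1]] for sentence in pairs]
target_data = [word_to_ix[sentence[-1]] for sentence in pairs]

print(vocab)        # ['cat', 'dog', 'mat', 'on', 'rug', 'sat', 'the']
print(input_data)   # [[6, 0, 5, 3, 6], [6, 1, 5, 3, 6]]
print(target_data)  # [2, 4]
```

Each sentence becomes a next-word prediction example: the first five word indices are the input, and the index of the final word is the target.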

Model definition

```python
import torch.nn as nn

embedding_dim = 10
hidden_dim = 16
vocab_size = len(vocab)  # size of the vocabulary built earlier

class RNNWithAttentionModel(nn.Module):
    def __init__(self):
        super(RNNWithAttentionModel, self).__init__()
        self.embeddings = nn.Embedding(vocab_size, embedding_dim)
        self.rnn = nn.RNN(embedding_dim, hidden_dim, batch_first=True)
        # One attention score per RNN time step
        self.attention = nn.Linear(hidden_dim, 1)
        # Maps the attended representation to vocabulary logits
        self.fc = nn.Linear(hidden_dim, vocab_size)
```

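Forward propagation with attention

```python
# Method of RNNWithAttentionModel
def forward(self, x):
    x = self.embeddings(x)  # look up the word embeddings
    out, _ = self.rnn(x)    # RNN outputs for every time step
```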
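The forward method shown on this slide stops after the RNN call. A common way to finish it, given the `attention` and `fc` layers from the model definition, is to score every time step, normalise the scores with a softmax, and feed the weighted sum of RNN outputs to the final layer. The sketch below is an assumption about how the method might continue, not the slide's own code; the class name `RNNWithAttentionSketch` and the constructor arguments are hypothetical.

```python
import torch
import torch.nn as nn

class RNNWithAttentionSketch(nn.Module):  # hypothetical name, mirrors the slide's model
    def __init__(self, vocab_size, embedding_dim=10, hidden_dim=16):
        super().__init__()
        self.embeddings = nn.Embedding(vocab_size, embedding_dim)
        self.rnn = nn.RNN(embedding_dim, hidden_dim, batch_first=True)
        self.attention = nn.Linear(hidden_dim, 1)
        self.fc = nn.Linear(hidden_dim, vocab_size)

    def forward(self, x):
        x = self.embeddings(x)                    # (batch, seq_len, embedding_dim)
        out, _ = self.rnn(x)                      # (batch, seq_len, hidden_dim)
        # Assumed continuation: one score per time step, normalised over the sequence
        scores = self.attention(out).squeeze(2)   # (batch, seq_len)
        attn_weights = torch.softmax(scores, dim=1)
        # The weighted sum of RNN outputs gives one context vector per sequence
        context = torch.sum(attn_weights.unsqueeze(2) * out, dim=1)  # (batch, hidden_dim)
        return self.fc(context)                   # (batch, vocab_size)

model = RNNWithAttentionSketch(vocab_size=7)
batch = torch.randint(0, 7, (2, 5))  # two sequences of five word indices
logits = model(batch)
print(logits.shape)  # torch.Size([2, 7])
```

Returning one logit vector per sequence matches how the training step uses the model: `CrossEntropyLoss` compares a `(batch, vocab_size)` output against one target word index per sentence.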

Training preparation

```python
criterion = nn.CrossEntropyLoss()
attention_model = RNNWithAttentionModel()
optimizer = torch.optim.Adam(attention_model.parameters(), lr=0.01)

for epoch in range(300):
    attention_model.train()
    optimizer.zero_grad()
    # pad_sequences is a helper that is not defined in these slides
    padded_inputs = pad_sequences(inputs)
    outputs = attention_model(padded_inputs)
    loss = criterion(outputs, targets)
    loss.backward()
    optimizer.step()
```
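The training loop calls `pad_sequences`, which these slides do not define. One plausible implementation, offered here as an assumption, wraps PyTorch's `torch.nn.utils.rnn.pad_sequence` so that variable-length index tensors are stacked into a single batch.

```python
import torch
from torch.nn.utils.rnn import pad_sequence

def pad_sequences(batch, padding_value=0):
    """Stack variable-length 1-D tensors into one (batch, max_len) tensor."""
    return pad_sequence(batch, batch_first=True, padding_value=padding_value)

# Two index sequences of different lengths (made-up values)
inputs = [torch.tensor([6, 0, 5]), torch.tensor([6, 1, 5, 3, 6])]
padded_inputs = pad_sequences(inputs)
print(padded_inputs)
# tensor([[6, 0, 5, 0, 0],
#         [6, 1, 5, 3, 6]])
```

Note that the padding value 0 is also a valid word index in `word_to_ix`, so a more careful pipeline would likely reserve a dedicated padding index and mask padded positions when computing attention weights.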

Model evaluation

```python
for input_seq, target in zip(input_data, target_data):
    input_test = torch.tensor(input_seq, dtype=torch.long).unsqueeze(0)
    attention_model.eval()
    attention_output = attention_model(input_test)
    # The predicted word is the one with the highest logit
    attention_prediction = ix_to_word[torch.argmax(attention_output).item()]
    print(f"\nInput: {' '.join([ix_to_word[ix] for ix in input_seq])}")
    print(f"Target: {ix_to_word[target]}")
    print(f"RNN with Attention prediction: {attention_prediction}")
```

```out
Input: the cat sat on the
Target: mat
RNN with Attention prediction: mat
```

Let's practice!
