Model training

End-to-End Machine Learning

Joshua Stapleton

Machine Learning Engineer

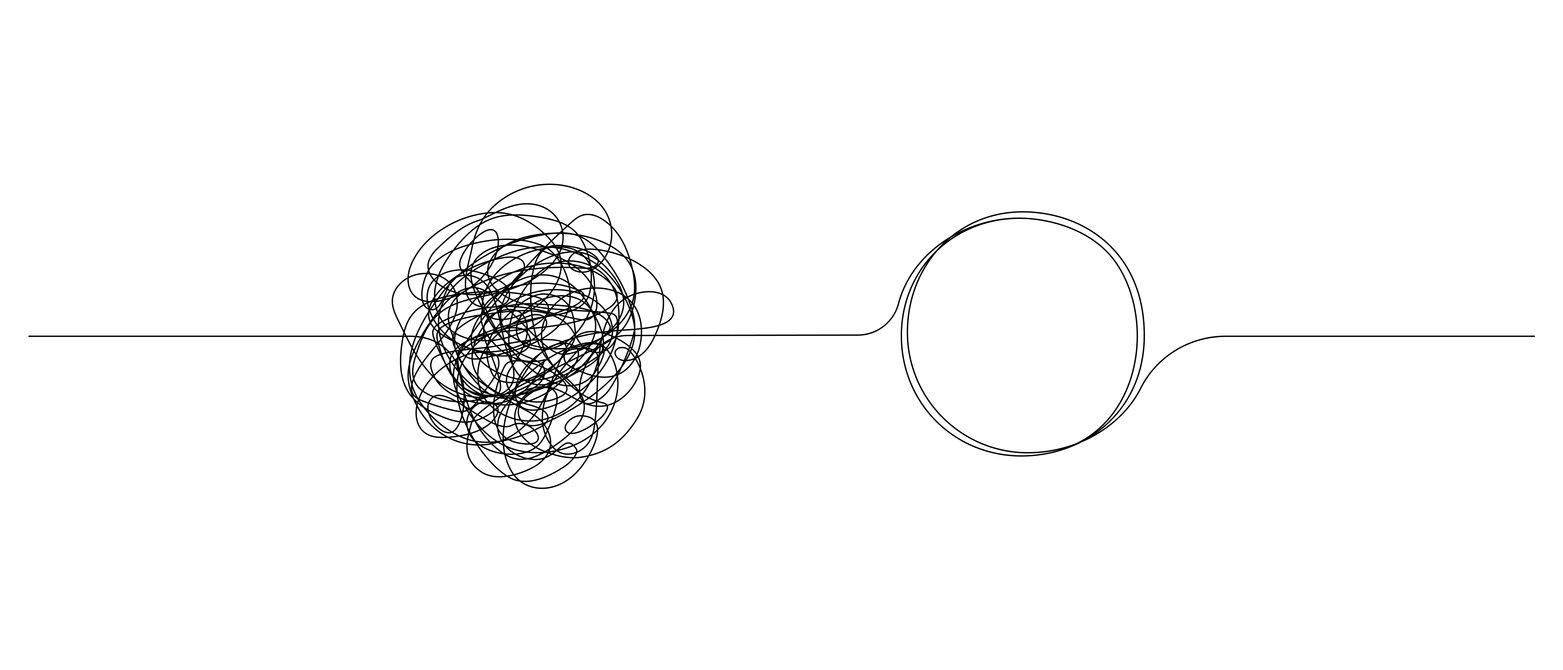

Occam's razor

- The simplest sufficient explanation is the best one

- Prefer simple models when selecting a model
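One way to apply this principle during model selection is sketched below: score a simple and a more complex candidate with cross-validation, then keep the simplest one whose score is close to the best. The synthetic data, the `tolerance` value, and the ordering of `candidates` are assumptions for illustration only, not part of the course material.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

# Synthetic stand-in data (hypothetical; not the heart disease dataset)
X, y = make_classification(n_samples=500, n_features=8, random_state=42)

# Candidates ordered from simplest to most complex (an assumed ordering)
candidates = [
    ("logistic regression", LogisticRegression(max_iter=200)),
    ("random forest", RandomForestClassifier(n_estimators=50, random_state=42)),
]

# Mean 5-fold cross-validated accuracy per candidate
scores = {name: cross_val_score(model, X, y, cv=5).mean() for name, model in candidates}
best_score = max(scores.values())

# Hypothetical tolerance: how much accuracy we give up for simplicity
tolerance = 0.01

# Pick the first (simplest) candidate within tolerance of the best score
chosen = next(name for name, _ in candidates if scores[name] >= best_score - tolerance)
print(scores)
print("Chosen model:", chosen)
```

The tolerance is a judgment call; a stricter value favors accuracy, a looser one favors simplicity.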

Model selection

Logistic regression

- Finds a linear decision boundary between classes

sklearn.linear_model.LogisticRegression
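To make the idea of a decision boundary concrete, the sketch below fits `LogisticRegression` on two synthetic 2D clusters (made-up data) and rebuilds the predictions by hand from the learned weights. The boundary is the set of points where `w · x + b = 0`.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Two synthetic 2D clusters (hypothetical data for illustration)
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(-2, 1, size=(50, 2)), rng.normal(2, 1, size=(50, 2))])
y = np.array([0] * 50 + [1] * 50)

model = LogisticRegression(max_iter=200).fit(X, y)

# Learned weights and bias of the linear boundary w . x + b = 0
w = model.coef_[0]
b = model.intercept_[0]

# Points with a positive score fall on the class-1 side of the boundary
scores = X @ w + b
manual_pred = (scores > 0).astype(int)
print("Weights:", w, "Bias:", b)
```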

Support Vector Classifier

- Finds a separating hyperplane between classes

sklearn.svm.SVC
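A minimal sketch of the separating hyperplane, assuming a linear kernel. Note that `SVC` defaults to `kernel="rbf"`; `kernel="linear"` is chosen here so the hyperplane coefficients can be read from `coef_`. The data is synthetic.

```python
import numpy as np
from sklearn.svm import SVC

# Two synthetic 2D clusters (hypothetical data for illustration)
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(-2, 1, size=(50, 2)), rng.normal(2, 1, size=(50, 2))])
y = np.array([0] * 50 + [1] * 50)

# A linear kernel exposes the hyperplane as coef_ and intercept_
svc = SVC(kernel="linear").fit(X, y)

print("Hyperplane normal vector:", svc.coef_[0])
print("Hyperplane offset:", svc.intercept_[0])
# Support vectors are the training points that define the margin
print("Number of support vectors:", len(svc.support_vectors_))
```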

Decision tree

- Uses simple "rules" to classify

sklearn.tree.DecisionTreeClassifier
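The "rules" a tree learns can be printed with `sklearn.tree.export_text`. The sketch below uses synthetic data and placeholder feature names (`f0` to `f3`); `max_depth=2` is chosen only to keep the printout short.

```python
from sklearn.datasets import make_classification
from sklearn.tree import DecisionTreeClassifier, export_text

# Synthetic stand-in data (hypothetical)
X, y = make_classification(n_samples=200, n_features=4, random_state=0)

# A shallow tree keeps the rule set small and readable
tree = DecisionTreeClassifier(max_depth=2, random_state=0).fit(X, y)

# Render the learned if/else threshold rules as text
rules = export_text(tree, feature_names=["f0", "f1", "f2", "f3"])
print(rules)
```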

Random Forest

- Combines multiple decision trees

sklearn.ensemble.RandomForestClassifier
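The sketch below illustrates what "combines" means in scikit-learn's implementation: the forest's predicted probabilities are the average of its individual trees' probabilities. Data and the `n_estimators=25` setting are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic stand-in data (hypothetical)
X, y = make_classification(n_samples=300, n_features=6, random_state=0)

forest = RandomForestClassifier(n_estimators=25, random_state=0).fit(X, y)

# Average the class probabilities of the individual fitted trees
tree_probas = np.mean([t.predict_proba(X) for t in forest.estimators_], axis=0)

print("Number of trees:", len(forest.estimators_))
print("Matches forest output:", np.allclose(tree_probas, forest.predict_proba(X)))
```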

Other models

Deep learning models

- Neural networks

- Convolutional neural networks

- Generative Pretrained Transformer (GPT)

K-Nearest Neighbors (KNN)

- Supervised learning algorithm that classifies a point by the labels of its k nearest training points
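A small sketch of KNN using `sklearn.neighbors.KNeighborsClassifier` on a toy one-dimensional dataset (made up for illustration). Each query point takes the majority label of its three nearest training points.

```python
import numpy as np
from sklearn.neighbors import KNeighborsClassifier

# Toy 1D data: low values belong to class 0, high values to class 1
X = np.array([[1.0], [2.0], [3.0], [10.0], [11.0], [12.0]])
y = np.array([0, 0, 0, 1, 1, 1])

knn = KNeighborsClassifier(n_neighbors=3).fit(X, y)

# Each query is labeled by majority vote among its 3 nearest neighbors
pred = knn.predict([[2.5], [10.5]])
print(pred)  # [0 1]
```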

XGBoost

- Gradient-boosted model

- https://xgboost.readthedocs.io/en/stable/

Training principles

Model:

- Uses cleaned data with processed features

- Learns patterns from the training data

- Predicts the target, "heart disease diagnosis"

Principles:

- Must generalize to unseen data

- Hold out part of the data and test on it only after training

- Train/test split is typically 70/30 or 80/20

Use sklearn.model_selection.train_test_split
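The 80/20 split can be checked on a small made-up dataset, as sketched below. The arrays `features` and `heart_disease_y` are placeholders, and `stratify` is an optional extra (not mentioned in the slides) that keeps the class ratio the same in both parts.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Placeholder data: 100 rows, 2 features, balanced binary target
features = np.arange(200).reshape(100, 2)
heart_disease_y = np.array([0, 1] * 50)

X_train, X_test, y_train, y_test = train_test_split(
    features,
    heart_disease_y,
    test_size=0.2,             # hold out 20% for testing
    random_state=42,           # reproducible shuffle
    stratify=heart_disease_y,  # optional: preserve class balance
)

print(X_train.shape, X_test.shape)  # (80, 2) (20, 2)
```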

Training a model

    # Import the necessary libraries
    from sklearn.model_selection import train_test_split
    from sklearn.linear_model import LogisticRegression

    # Split the data into training and testing sets (80:20)
    X_train, X_test, y_train, y_test = train_test_split(
        features, heart_disease_y, test_size=0.2, random_state=42
    )

    # Define the model
    logistic_model = LogisticRegression(max_iter=200)

    # Train the model
    logistic_model.fit(X_train, y_train)
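To check generalization, the held-out test set is scored only after training. The following is a self-contained sketch of that step; `make_classification` stands in for the heart disease data, which is not available here, so the printed accuracies say nothing about the real dataset.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the (features, heart_disease_y) data
features, heart_disease_y = make_classification(
    n_samples=400, n_features=5, random_state=42
)

X_train, X_test, y_train, y_test = train_test_split(
    features, heart_disease_y, test_size=0.2, random_state=42
)

logistic_model = LogisticRegression(max_iter=200)
logistic_model.fit(X_train, y_train)

# Compare accuracy on data the model has seen vs. held-out data
train_accuracy = logistic_model.score(X_train, y_train)
test_accuracy = accuracy_score(y_test, logistic_model.predict(X_test))
print(f"Train accuracy: {train_accuracy:.2f}")
print(f"Test accuracy:  {test_accuracy:.2f}")
```

A training accuracy far above the test accuracy would suggest the model is overfitting rather than generalizing.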

Getting model predictions

    import numpy as np

    # Jane Doe's health data, e.g. [age, cholesterol, blood pressure, ...]
    # (the trailing ... stands for the remaining feature values)
    jane_doe_data = np.array([45, 230, 120, ...])

    # Reshape to 2D, since scikit-learn expects a 2D array
    jane_doe_data = jane_doe_data.reshape(1, -1)

    # Use the model to predict Jane's heart disease probabilities and class
    jane_doe_probabilities = logistic_model.predict_proba(jane_doe_data)
    jane_doe_prediction = logistic_model.predict(jane_doe_data)

Model predictions (continued)

    # Print the probabilities and the predicted class
    print(f"Jane Doe's predicted probabilities: {jane_doe_probabilities[0]}")
    print(f"Jane Doe's predicted health condition: {jane_doe_prediction[0]}")

Output:

    Jane Doe's predicted probabilities: [0.2 0.8]
    Jane Doe's predicted health condition: 1
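The two outputs are related: the columns of `predict_proba` follow the order of `model.classes_`, and `predict` returns the class with the highest probability. The sketch below shows this on synthetic data, with a made-up `patient` row standing in for Jane Doe.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Synthetic stand-in data (hypothetical; not the heart disease dataset)
X, y = make_classification(n_samples=300, n_features=5, random_state=0)
model = LogisticRegression(max_iter=200).fit(X, y)

# One hypothetical patient, reshaped to a single-row 2D array
patient = X[0].reshape(1, -1)

probabilities = model.predict_proba(patient)  # shape (1, n_classes)
prediction = model.predict(patient)           # shape (1,)

# The predicted class is the one with the highest probability
most_likely = model.classes_[np.argmax(probabilities[0])]
print("Classes:", model.classes_)
print("Probabilities:", probabilities[0])
print("Prediction:", prediction[0], "Most likely class:", most_likely)
```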

Let's practice!
