Generative Adversarial Networks for text generation

Deep Learning for Text with PyTorch

Shubham Jain

Instructor

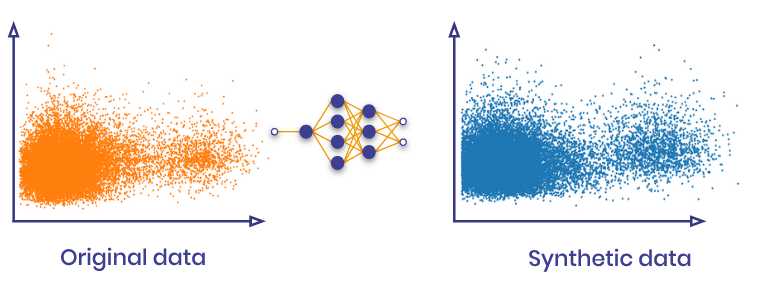

GANs and their role in text generation

- GANs can generate new content that looks original

- They preserve statistical similarities to the real data

- They can replicate complex patterns that RNNs may struggle to capture

- They can mimic real-world patterns

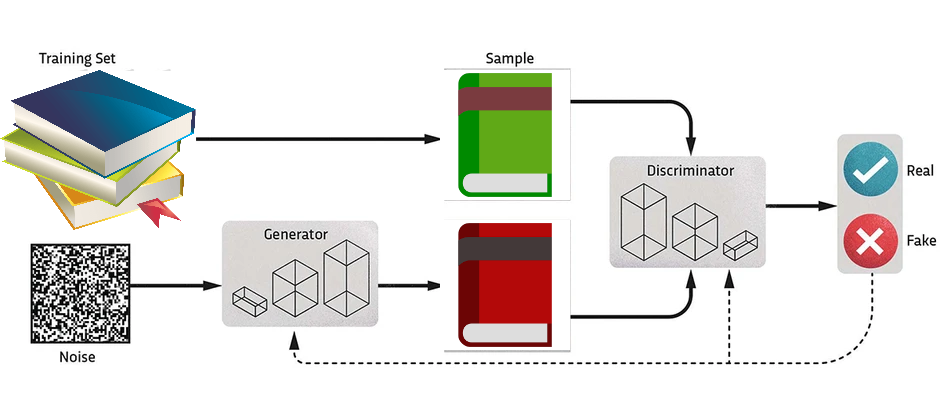

Structure of a GAN

- A GAN consists of two components:

- Generator: creates fake samples from random noise

- Discriminator: distinguishes between real and generated text data
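The two components are trained against each other. In the standard GAN formulation this is a minimax game over a value function $V(D, G)$, where $x$ is a real sample, $z$ a noise vector, $D(\cdot)$ the discriminator's estimated probability that its input is real, and $G(z)$ a generated sample:

$$
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

Binary cross-entropy with label 1 for real and 0 for fake samples gives the discriminator the loss $-\log D(x) - \log(1 - D(G(z)))$, i.e. the negated value function per sample. For the generator, a common practical variant (the "non-saturating" loss) minimizes $-\log D(G(z))$ instead, which is what labeling generated samples as real (1) in the generator loss amounts to.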

Building a GAN model in PyTorch: generator

- Embed the reviews

- Convert the reviews to tensors

```python
import torch
import torch.nn as nn

seq_length = 5  # assumed length of each sequence (matches the example data at the end)

class Generator(nn.Module):
    def __init__(self):
        super().__init__()
        # Maps a noise vector of length seq_length to a fake sequence of the same length
        self.model = nn.Sequential(
            nn.Linear(seq_length, seq_length),
            nn.Sigmoid()  # squashes each value into (0, 1)
        )

    def forward(self, x):
        return self.model(x)
```
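A quick shape check, sketched under the assumption `seq_length = 5` (the width of the sequences printed at the end of this section). It repeats the generator definition so that it runs on its own:

```python
import torch
import torch.nn as nn

seq_length = 5  # assumption: sequences of length 5

class Generator(nn.Module):
    def __init__(self):
        super().__init__()
        self.model = nn.Sequential(
            nn.Linear(seq_length, seq_length),
            nn.Sigmoid(),
        )

    def forward(self, x):
        return self.model(x)

torch.manual_seed(0)
generator = Generator()
noise = torch.rand((1, seq_length))  # one noise vector, uniform in [0, 1)
fake = generator(noise)              # one fake sequence
print(fake.shape)  # torch.Size([1, 5])
```

The final `Sigmoid` keeps every generated value in (0, 1), which is why the outputs can later be rounded to 0 or 1.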

Building the discriminator network

```python
class Discriminator(nn.Module):
    def __init__(self):
        super().__init__()
        # Maps a sequence of length seq_length to a single score
        self.model = nn.Sequential(
            nn.Linear(seq_length, 1),
            nn.Sigmoid()  # probability that the input is real
        )

    def forward(self, x):
        return self.model(x)
```
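A minimal usage sketch (again assuming `seq_length = 5` and repeating the class so the snippet is self-contained): the discriminator turns a batch of sequences into one probability per row. The three input rows are taken from the real data printed at the end of this section.

```python
import torch
import torch.nn as nn

seq_length = 5  # assumption: sequences of length 5

class Discriminator(nn.Module):
    def __init__(self):
        super().__init__()
        self.model = nn.Sequential(
            nn.Linear(seq_length, 1),
            nn.Sigmoid(),
        )

    def forward(self, x):
        return self.model(x)

discriminator = Discriminator()
batch = torch.tensor([[1., 0., 0., 1., 1.],
                      [0., 0., 1., 0., 0.],
                      [1., 1., 1., 1., 1.]])
probs = discriminator(batch)  # shape (batch_size, 1), each value in (0, 1)
print(probs.shape)  # torch.Size([3, 1])
```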

Initializing the networks and the loss function

```python
generator = Generator()
discriminator = Discriminator()
criterion = nn.BCELoss()
optimizer_gen = torch.optim.Adam(generator.parameters(), lr=0.001)
optimizer_disc = torch.optim.Adam(discriminator.parameters(), lr=0.001)
```
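`nn.BCELoss` computes the binary cross-entropy $-[y \log p + (1 - y)\log(1 - p)]$, averaged over the batch. A small worked example with an illustrative prediction of 0.8 shows how it rewards and penalizes the discriminator: a confident correct prediction costs little, a confident wrong one costs a lot.

```python
import math
import torch
import torch.nn as nn

criterion = nn.BCELoss()

# Prediction 0.8 ("80% real") for a sample whose true label is real (1)
loss_real = criterion(torch.tensor([0.8]), torch.tensor([1.0]))
# Same prediction for a sample whose true label is fake (0)
loss_fake = criterion(torch.tensor([0.8]), torch.tensor([0.0]))

expected_real = -math.log(0.8)      # BCE with target 1 is -log(p)
expected_fake = -math.log(1 - 0.8)  # BCE with target 0 is -log(1 - p)

print(round(loss_real.item(), 4))  # 0.2231
print(round(loss_fake.item(), 4))  # 1.6094
```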

Training the discriminator

```python
num_epochs = 50
for epoch in range(num_epochs):
    # data: the real sequences, one tensor of length seq_length per sample
    for real_data in data:
        real_data = real_data.unsqueeze(0)  # add a batch dimension: (1, seq_length)
        noise = torch.rand((1, seq_length))
        disc_real = discriminator(real_data)
        fake_data = generator(noise)
        # detach() so this update does not backpropagate into the generator
        disc_fake = discriminator(fake_data.detach())
        # Real samples should be scored 1, fake samples 0
        loss_disc = criterion(disc_real, torch.ones_like(disc_real)) + criterion(disc_fake, torch.zeros_like(disc_fake))
        optimizer_disc.zero_grad()
        loss_disc.backward()
        optimizer_disc.step()
```
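The effect of `detach()` can be checked in isolation. This sketch performs a single discriminator step on one real sequence; for brevity it uses `nn.Sequential` stand-ins with the same layers as the `Generator` and `Discriminator` classes and assumes `seq_length = 5`. After the step, only the discriminator has gradients; the generator's parameters were never reached by backpropagation.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
seq_length = 5  # assumption
# Stand-ins with the same layers as the Generator/Discriminator classes
generator = nn.Sequential(nn.Linear(seq_length, seq_length), nn.Sigmoid())
discriminator = nn.Sequential(nn.Linear(seq_length, 1), nn.Sigmoid())
criterion = nn.BCELoss()
optimizer_disc = torch.optim.Adam(discriminator.parameters(), lr=0.001)

real_data = torch.tensor([[1., 0., 1., 1., 1.]])  # one real sequence with a batch dimension
noise = torch.rand((1, seq_length))
fake_data = generator(noise)

disc_real = discriminator(real_data)
disc_fake = discriminator(fake_data.detach())  # cut the graph at the generator output
loss_disc = (criterion(disc_real, torch.ones_like(disc_real))
             + criterion(disc_fake, torch.zeros_like(disc_fake)))

optimizer_disc.zero_grad()
loss_disc.backward()
optimizer_disc.step()

gen_grads = [p.grad for p in generator.parameters()]
print(all(g is None for g in gen_grads))  # True
```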

Training the generator

```python
        # ... (continued from the previous slide, still inside `for real_data in data:`)
        disc_fake = discriminator(fake_data)  # no detach: gradients must reach the generator
        # The generator wants its fake samples to be classified as real (1)
        loss_gen = criterion(disc_fake, torch.ones_like(disc_fake))
        optimizer_gen.zero_grad()
        loss_gen.backward()
        optimizer_gen.step()

    # Log progress every 10 epochs
    if (epoch+1) % 10 == 0:
        print(f"Epoch {epoch+1}/{num_epochs}:\t"
              f"Generator loss: {loss_gen.item()}\t"
              f"Discriminator loss: {loss_disc.item()}")
```
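A standalone sketch of one generator step, using the same `nn.Sequential` stand-ins and the assumption `seq_length = 5`. It confirms that with target 1 the loss reduces to $-\log D(G(z))$ and that gradients now reach the generator because `fake_data` is not detached.

```python
import math
import torch
import torch.nn as nn

torch.manual_seed(0)
seq_length = 5  # assumption
generator = nn.Sequential(nn.Linear(seq_length, seq_length), nn.Sigmoid())
discriminator = nn.Sequential(nn.Linear(seq_length, 1), nn.Sigmoid())
criterion = nn.BCELoss()
optimizer_gen = torch.optim.Adam(generator.parameters(), lr=0.001)

noise = torch.rand((1, seq_length))
fake_data = generator(noise)
disc_fake = discriminator(fake_data)  # no detach: the graph includes the generator

# Label the fake sample as real (1): the generator is rewarded for fooling D
loss_gen = criterion(disc_fake, torch.ones_like(disc_fake))
# With target 1, BCE is -log D(G(z))
print(math.isclose(loss_gen.item(), -math.log(disc_fake.item()), rel_tol=1e-5))  # True

optimizer_gen.zero_grad()
loss_gen.backward()
optimizer_gen.step()  # updates only the generator's weights

print(all(p.grad is not None for p in generator.parameters()))  # True
```

The discriminator's parameters also receive gradients in this step; in the training loop that is harmless, because `optimizer_disc.zero_grad()` clears them before the next discriminator update.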

Printing real and generated data

```python
print("\nReal data: ")
print(data[:5])

print("\nGenerated data: ")
for _ in range(5):
    noise = torch.rand((1, seq_length))
    generated_data = generator(noise)
    # Round the sigmoid outputs to 0/1 and detach from the autograd graph for printing
    print(torch.round(generated_data).detach())
```
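A variant of the generation loop, as a sketch: it draws all five noise vectors as one batch and wraps inference in `torch.no_grad()`, so no autograd graph is built and `.detach()` becomes unnecessary. `torch.round` maps each sigmoid output to 0. or 1. The generator here is an untrained `nn.Sequential` stand-in with `seq_length = 5` assumed, so its samples carry no meaning.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
seq_length = 5  # assumption
generator = nn.Sequential(nn.Linear(seq_length, seq_length), nn.Sigmoid())  # untrained stand-in

with torch.no_grad():  # inference only: skip gradient tracking
    noise = torch.rand((5, seq_length))      # five noise vectors in one batch
    samples = torch.round(generator(noise))  # threshold each value at 0.5

print(samples.shape)  # torch.Size([5, 5])
```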

GANs: generated synthetic data

```out
Epoch 10/50: Generator loss: 0.8992824673652 Discriminator loss: 1.37682652473
Epoch 20/50: Generator loss: 0.7347183227539 Discriminator loss: 1.390102505683
...
Epoch 50/50: Generator loss: 0.7019854784011 Discriminator loss: 1.3501529693603
```
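The logged losses can be read against two reference values. If the discriminator outputs 0.5 for every sample, its loss (the sum of two BCE terms) is $2\ln 2 \approx 1.386$ and the generator loss is $\ln 2 \approx 0.693$. The values at epoch 50 (about 1.35 and 0.70) are close to these, which suggests, without proving it, that the discriminator can barely tell real from generated sequences. A quick check:

```python
import math
import torch
import torch.nn as nn

criterion = nn.BCELoss()
half = torch.tensor([0.5])  # a discriminator that cannot tell real from fake

# Discriminator loss: real term (target 1) + fake term (target 0)
loss_disc = criterion(half, torch.ones(1)) + criterion(half, torch.zeros(1))
# Generator loss: fake samples labeled as real (target 1)
loss_gen = criterion(half, torch.ones(1))

print(round(loss_disc.item(), 4))  # 1.3863
print(round(loss_gen.item(), 4))   # 0.6931
```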

Generated data

```out
Real data:
tensor([[1., 0., 0., 1., 1.],
        [0., 0., 1., 0., 0.],
        [1., 0., 1., 1., 1.],
        [1., 0., 1., 0., 0.],
        [1., 1., 1., 1., 1.]])
```

- Evaluation metric: correlation matrix

```out
Generated data:
tensor([[0., 1., 1., 0., 0.]])
tensor([[0., 1., 1., 1., 1.]])
tensor([[1., 1., 1., 0., 0.]])
tensor([[1., 1., 1., 0., 0.]])
tensor([[0., 1., 1., 1., 1.]])
```
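The slide names a correlation matrix as the evaluation metric but shows no code for it. One way to apply it, sketched under our own assumptions: compute the pairwise correlations between sequence positions for the real and for the generated samples, then compare the two matrices. The mean-absolute-difference score and setting undefined correlations (positions that are constant across samples) to 0 are illustrative choices, not the course's method; with only five samples each, the result is very noisy.

```python
import torch

# The five real and five generated rows printed above (rows = samples, columns = positions)
real = torch.tensor([[1., 0., 0., 1., 1.],
                     [0., 0., 1., 0., 0.],
                     [1., 0., 1., 1., 1.],
                     [1., 0., 1., 0., 0.],
                     [1., 1., 1., 1., 1.]])
generated = torch.tensor([[0., 1., 1., 0., 0.],
                          [0., 1., 1., 1., 1.],
                          [1., 1., 1., 0., 0.],
                          [1., 1., 1., 0., 0.],
                          [0., 1., 1., 1., 1.]])

def correlation_matrix(samples: torch.Tensor) -> torch.Tensor:
    # torch.corrcoef treats rows as variables, so transpose to (positions, samples).
    # Constant positions yield NaN correlations; replace them with 0.
    return torch.nan_to_num(torch.corrcoef(samples.T), nan=0.0)

corr_real = correlation_matrix(real)
corr_gen = correlation_matrix(generated)

# Illustrative summary score: 0 means identical correlation structure
score = (corr_real - corr_gen).abs().mean()
print(corr_real.shape)  # torch.Size([5, 5])
```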

Now it's your turn to practice!
