Introduction to building a text processing pipeline

Deep Learning for Text with PyTorch

Shubham Jain

Data Scientist

Recap: preprocessing

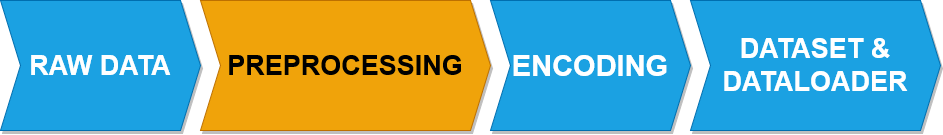

"- Vorverarbeitung:

- Tokenisierung

Text processing pipeline

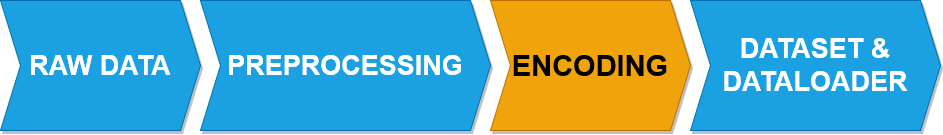

"- Kodierung:

- One-hot-Encoding

- Bag-of-Words

- TF-IDF

- Embedding {{4}}"
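As a rough illustration of how the first two encodings in this list differ, the sketch below encodes two toy sentences with plain Python. The sentences and the helper names (`build_vocab`, `one_hot`, `bag_of_words`) are invented for this example and are not part of the course code; in practice a library vectorizer would usually do this work.

```python
def build_vocab(tokenized_sentences):
    """Map each distinct token to a column index, in sorted order."""
    tokens = sorted({tok for sent in tokenized_sentences for tok in sent})
    return {tok: i for i, tok in enumerate(tokens)}


def one_hot(token, vocab):
    """Encode a single token as a vector with exactly one 1."""
    vec = [0] * len(vocab)
    vec[vocab[token]] = 1
    return vec


def bag_of_words(sentence, vocab):
    """Encode a whole sentence as per-token counts, ignoring word order."""
    vec = [0] * len(vocab)
    for tok in sentence:
        vec[vocab[tok]] += 1
    return vec


# Toy, already tokenized input (an assumption for illustration)
sentences = [["the", "cat", "sat"], ["the", "cat", "saw", "the", "dog"]]
vocab = build_vocab(sentences)

print(vocab)
print(one_hot("cat", vocab))
print(bag_of_words(sentences[1], vocab))
```

One-hot encoding describes one token at a time, while bag-of-words collapses a sentence into a single count vector, which is why the repeated "the" shows up as a 2 in the second sentence.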

Text processing pipeline

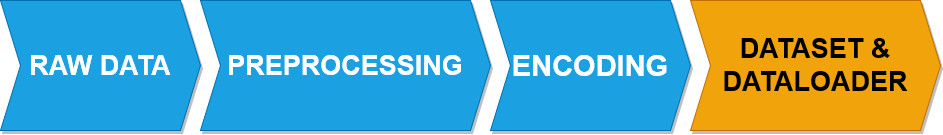

"- Dataset als Container für verarbeiteten und kodierten Text

- DataLoader: Batch-Verarbeitung, Durchmischen und Multiprocessing{{2}}"

"Zusammenfassung: Implementierung von Dataset und DataLoader"

"`py

Import libraries

from torch.utils.data import Dataset, DataLoader

----CODE_GLUE---- ```py # Create a class class TextDataset(Dataset):def __init__(self, text): self.text = textdef __len__(self): return len(self.text)

----CODE_GLUE----

py

def __getitem__(self, idx):

return self.text[idx]{{5}}"

"Zusammenfassung: Integration von Dataset und DataLoader"

dataset = TextDataset(encoded_text)dataloader = DataLoader(dataset, batch_size=2, shuffle=True)
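To make the roles of `batch_size=2` and `shuffle=True` more concrete, here is a plain-Python sketch of the idea. It is not how PyTorch implements `DataLoader` (there is no multiprocessing or tensor collation here), and `ToyDataset` and `iterate_batches` are hypothetical names used only for illustration.

```python
import random


class ToyDataset:
    """Minimal container with the two methods a map-style dataset exposes."""

    def __init__(self, samples):
        self.samples = samples

    def __len__(self):
        return len(self.samples)

    def __getitem__(self, idx):
        return self.samples[idx]


def iterate_batches(dataset, batch_size, shuffle=False, seed=None):
    """Yield lists of samples, optionally visiting them in shuffled order."""
    indices = list(range(len(dataset)))
    if shuffle:
        random.Random(seed).shuffle(indices)
    for start in range(0, len(indices), batch_size):
        yield [dataset[i] for i in indices[start:start + batch_size]]


# Five made-up encoded samples
dataset = ToyDataset([[1, 0], [0, 1], [1, 1], [0, 0], [2, 1]])

# Five samples with batch_size=2 give three batches; the last holds one sample
batches = list(iterate_batches(dataset, batch_size=2))
print(len(batches), batches[-1])

# Shuffling changes the order of samples but not their content
shuffled = list(iterate_batches(dataset, batch_size=2, shuffle=True, seed=0))
print(shuffled)
```

The loader only needs `__len__` and `__getitem__` from the dataset, which is why `TextDataset` can stay so small.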

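Using helper functions

```py
from nltk.probability import FreqDist

# tokenizer, stop_words, and stemmer are assumed to be defined beforehand
def preprocess_sentences(sentences):
    processed_sentences = []
    for sentence in sentences:
        sentence = sentence.lower()
        tokens = tokenizer(sentence)
        # Remove stop words
        tokens = [token for token in tokens
                  if token not in stop_words]
        # Reduce each token to its stem
        tokens = [stemmer.stem(token)
                  for token in tokens]
        # Keep only tokens that occur more than `threshold` times
        # within this sentence
        freq_dist = FreqDist(tokens)
        threshold = 2
        tokens = [token for token in tokens if
                  freq_dist[token] > threshold]
        processed_sentences.append(
            ' '.join(tokens))
    return processed_sentences
```

```py
from sklearn.feature_extraction.text import CountVectorizer

def encode_sentences(sentences):
    # Bag-of-words encoding: one row per sentence, one column per vocabulary term
    vectorizer = CountVectorizer()
    X = vectorizer.fit_transform(sentences)
    encoded_sentences = X.toarray()
    return encoded_sentences, vectorizer
```

```py
import re

def extract_sentences(data):
    # A sentence starts with an uppercase letter and ends at the first ., ! or ?
    sentences = re.findall(r'[A-Z][^.!?]*[.!?]',
                           data)
    return sentences
```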
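The frequency filter in `preprocess_sentences` counts tokens within a single sentence, so with `threshold = 2` a token must occur at least three times in that sentence to survive. The sketch below isolates that one step. It uses `collections.Counter` from the standard library as a stand-in for `FreqDist` (an assumption, made so the example has no external dependencies), and both the token lists and the name `filter_by_frequency` are invented for illustration.

```python
from collections import Counter


def filter_by_frequency(tokens, threshold=2):
    """Keep tokens that occur more than `threshold` times in `tokens`."""
    counts = Counter(tokens)
    return [tok for tok in tokens if counts[tok] > threshold]


# "data" occurs three times, "text" twice, "model" once
tokens = ["data", "text", "data", "model", "data", "text"]
kept = filter_by_frequency(tokens)
print(kept)

# In a short sentence no token repeats often enough, so nothing is kept
short = filter_by_frequency(["first", "text", "data"])
print(short)
```

For short inputs this filter can empty a sentence entirely, so it may be worth lowering the threshold or counting frequencies across the whole corpus, depending on the data.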

Constructing the text processing pipeline

```py
def text_processing_pipeline(text):
    # text: a list of sentence strings
    tokens = preprocess_sentences(text)
    encoded_sentences, vectorizer = encode_sentences(tokens)
    dataset = TextDataset(encoded_sentences)
    dataloader = DataLoader(dataset, batch_size=2, shuffle=True)
    return dataloader, vectorizer
```

Applying the text processing pipeline

```py
text_data = "This is the first text data. And here is another one."
sentences = extract_sentences(text_data)
# Run the pipeline once on the full list of sentences
dataloader, vectorizer = text_processing_pipeline(sentences)
# Show up to the first 10 features of each sample in the first batch
print(next(iter(dataloader))[:, :10])
```

[[1, 1, 1, 1, 1], [0, 0, 0, 1, 1]]
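To see what the regular expression in `extract_sentences` does and does not catch, the following sketch runs the same pattern on two short English inputs. The second input is a made-up edge case chosen to show the pattern's limits; it is not taken from the course.

```python
import re


def extract_sentences(data):
    # Same pattern as in the helper function: uppercase start, ends at . ! or ?
    return re.findall(r'[A-Z][^.!?]*[.!?]', data)


simple = extract_sentences("This is the first text data. And here is another one.")
print(simple)

# An abbreviation is split off as its own "sentence", and a sentence that
# starts with a lowercase letter is not matched at all
tricky = extract_sentences("Dr. Smith arrived. he left early!")
print(tricky)
```

The pattern is a reasonable fit for clean, well-capitalized text; for messier input a dedicated sentence tokenizer is likely the safer choice.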

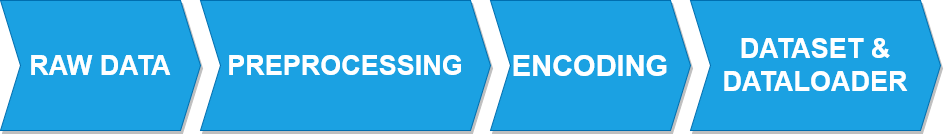

"Textverarbeitungs-Pipeline: Das war's!"

" "

"

Let's practice!
