Scaling and concurrency controls

Serverless Applications with AWS Lambda

Claudio Canales

Senior DevOps Engineer

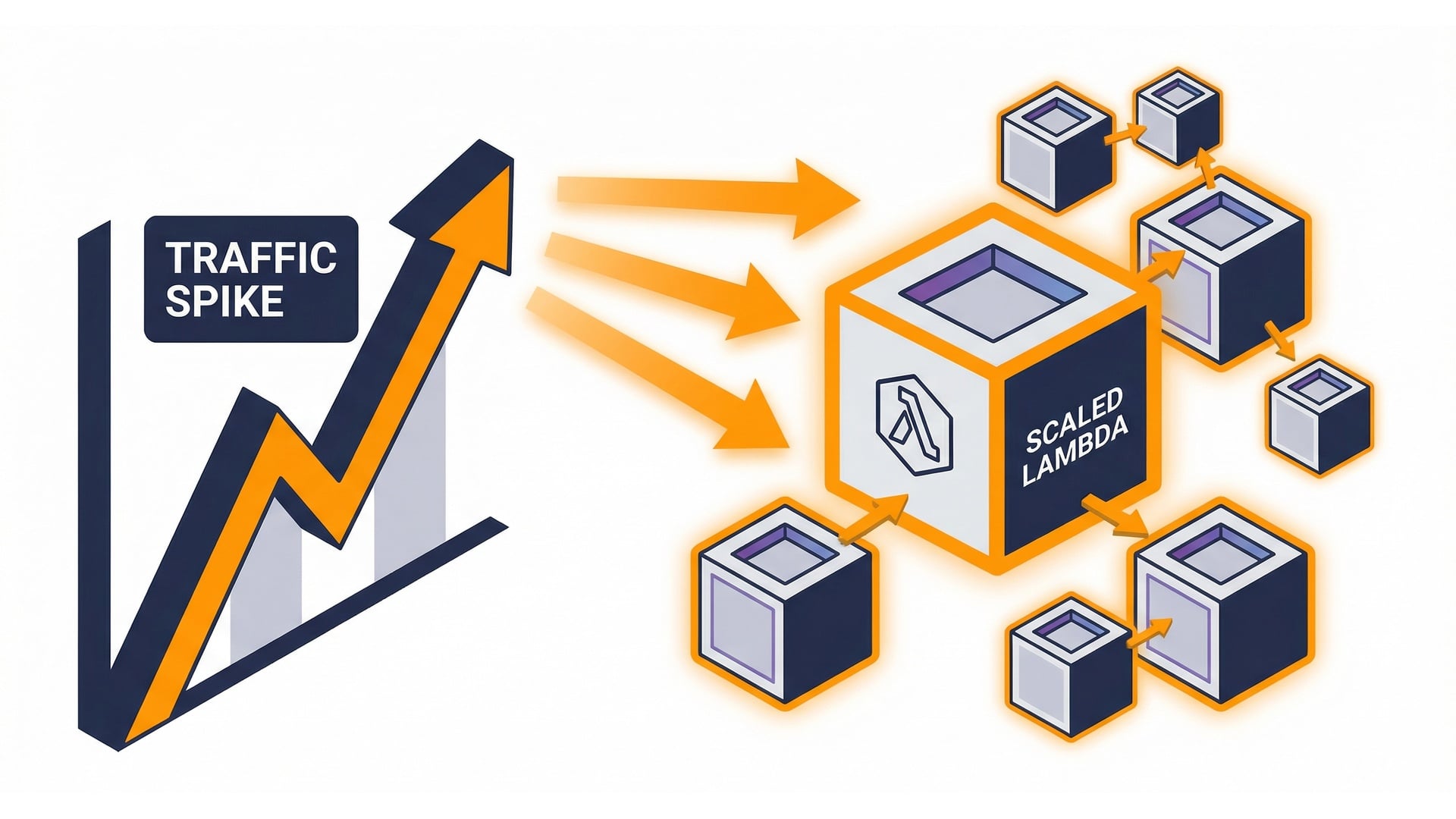

Scaling in one picture

- More demand -> more parallel runs.

- Each run uses an execution environment.

- Concurrency = runs happening now.

What is concurrency?

- One invocation = one unit of work.

- Concurrency = how many are running now.

- If 10 are running, concurrency = 10.

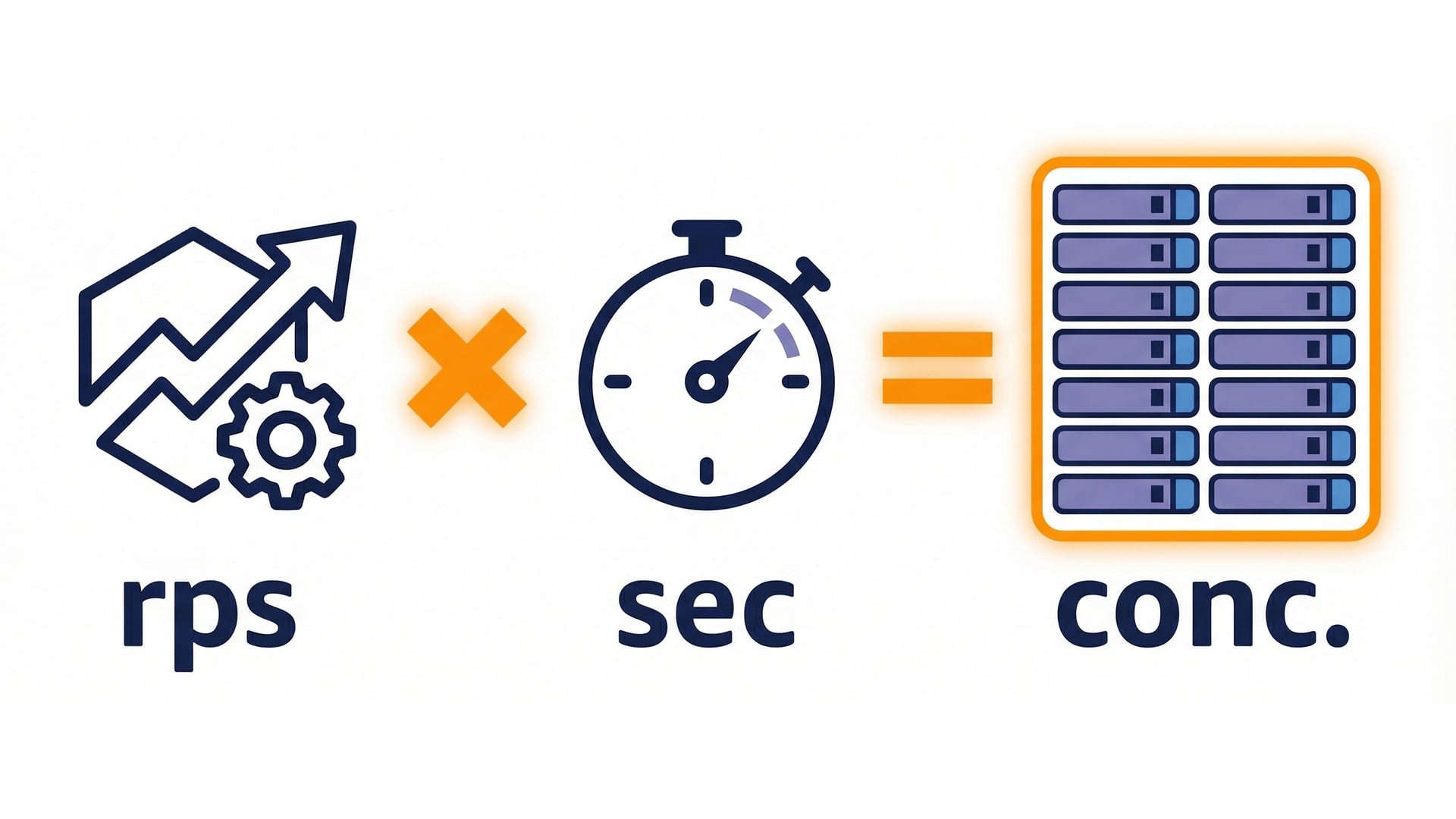

Estimating required concurrency

- Rule of thumb: concurrency = rps * duration.

- Reduce duration to reduce concurrency.

- Use this to size limits safely.

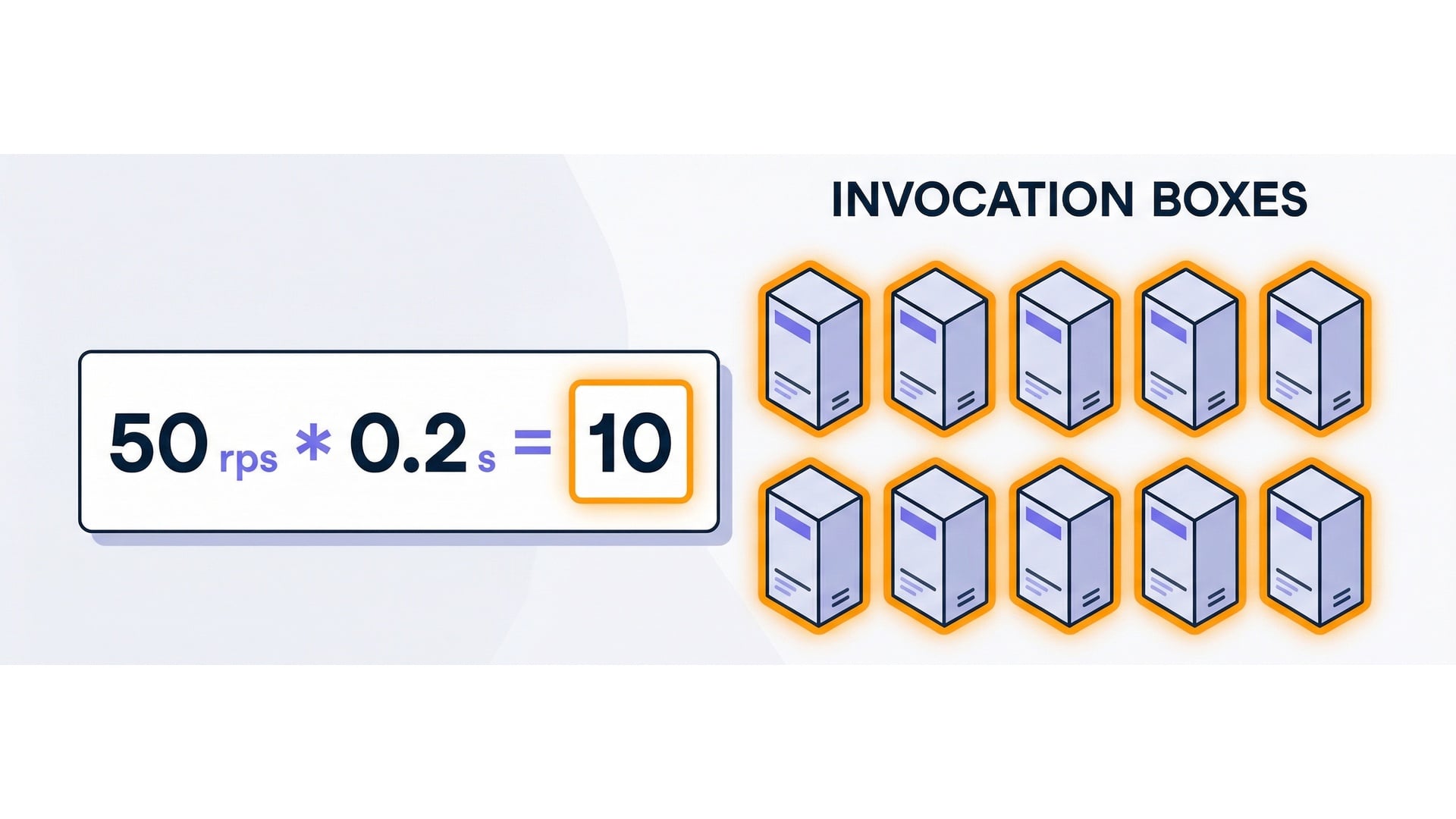

Example: 50 rps at 200 ms

- 200 ms = 0.2 seconds.

- 50 * 0.2 = 10 concurrent executions.

- Faster code means fewer parallel runs.
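The arithmetic on this slide can be sketched as a small helper. This is a minimal illustration, not an AWS API: the function name `required_concurrency` and the choice to pass duration in milliseconds and round up are assumptions made for the example.

```python
import math


def required_concurrency(requests_per_second: float, avg_duration_ms: float) -> int:
    """Estimate concurrent executions as rps * duration (in seconds), rounded up."""
    return math.ceil(requests_per_second * avg_duration_ms / 1000)


# The slide's example: 50 requests per second, 200 ms each.
print(required_concurrency(50, 200))

# Halving the duration halves the estimate.
print(required_concurrency(50, 100))
```

Rounding up is a deliberate choice here: a fractional estimate still needs a whole execution environment.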

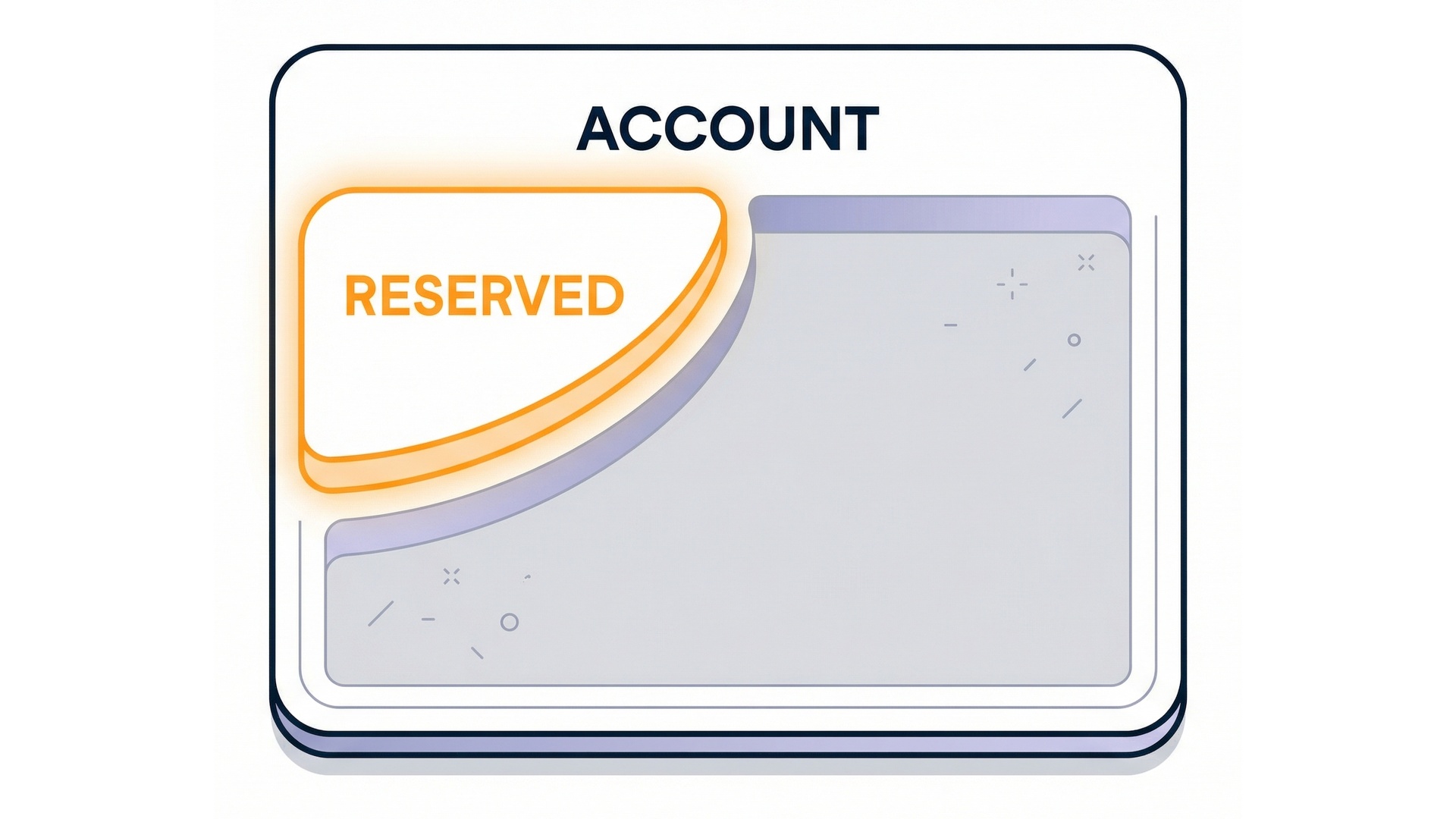

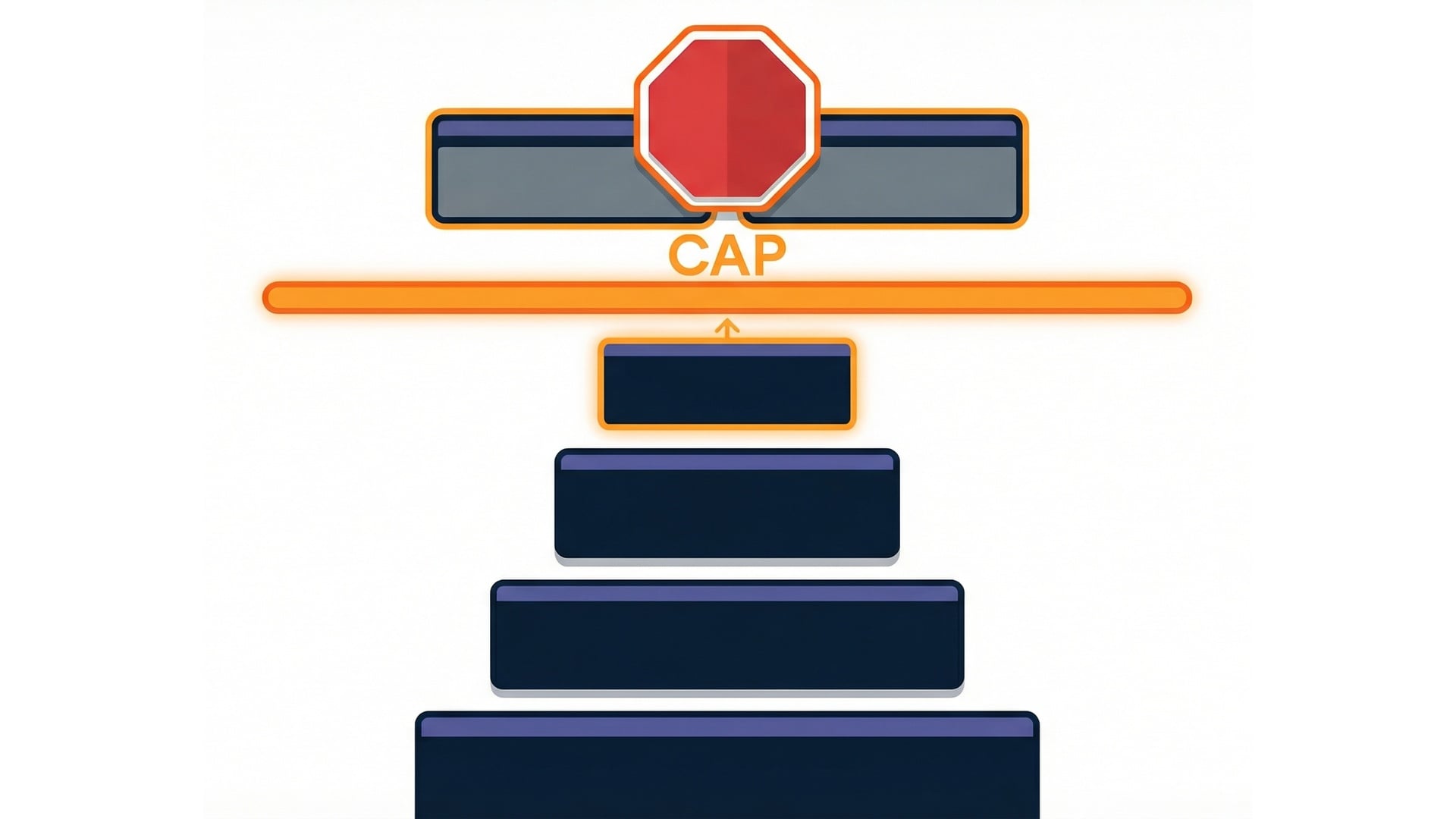

Limits: account pool vs function slice

- Concurrency is limited per account, per Region (default quota: 1,000).

- Reserve part of the pool for a critical function.

- Other functions share what's left.
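A rough way to picture the pool and its slices is simple subtraction. The sketch below is illustrative only: the function names and numbers are hypothetical, the 1,000 figure is the default account quota (yours may differ), and the 100-unit floor reflects the requirement that some concurrency stays unreserved.

```python
def shared_pool(account_limit: int, reserved: dict[str, int],
                min_unreserved: int = 100) -> int:
    """Return the concurrency left for functions without a reservation."""
    remaining = account_limit - sum(reserved.values())
    if remaining < min_unreserved:
        # Assumption: at least `min_unreserved` must stay in the shared pool.
        raise ValueError(
            f"only {remaining} unreserved; need at least {min_unreserved}"
        )
    return remaining


# Hypothetical reservations for two critical functions.
reservations = {"checkout": 200, "reports": 50}
print(shared_pool(1000, reservations))
```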

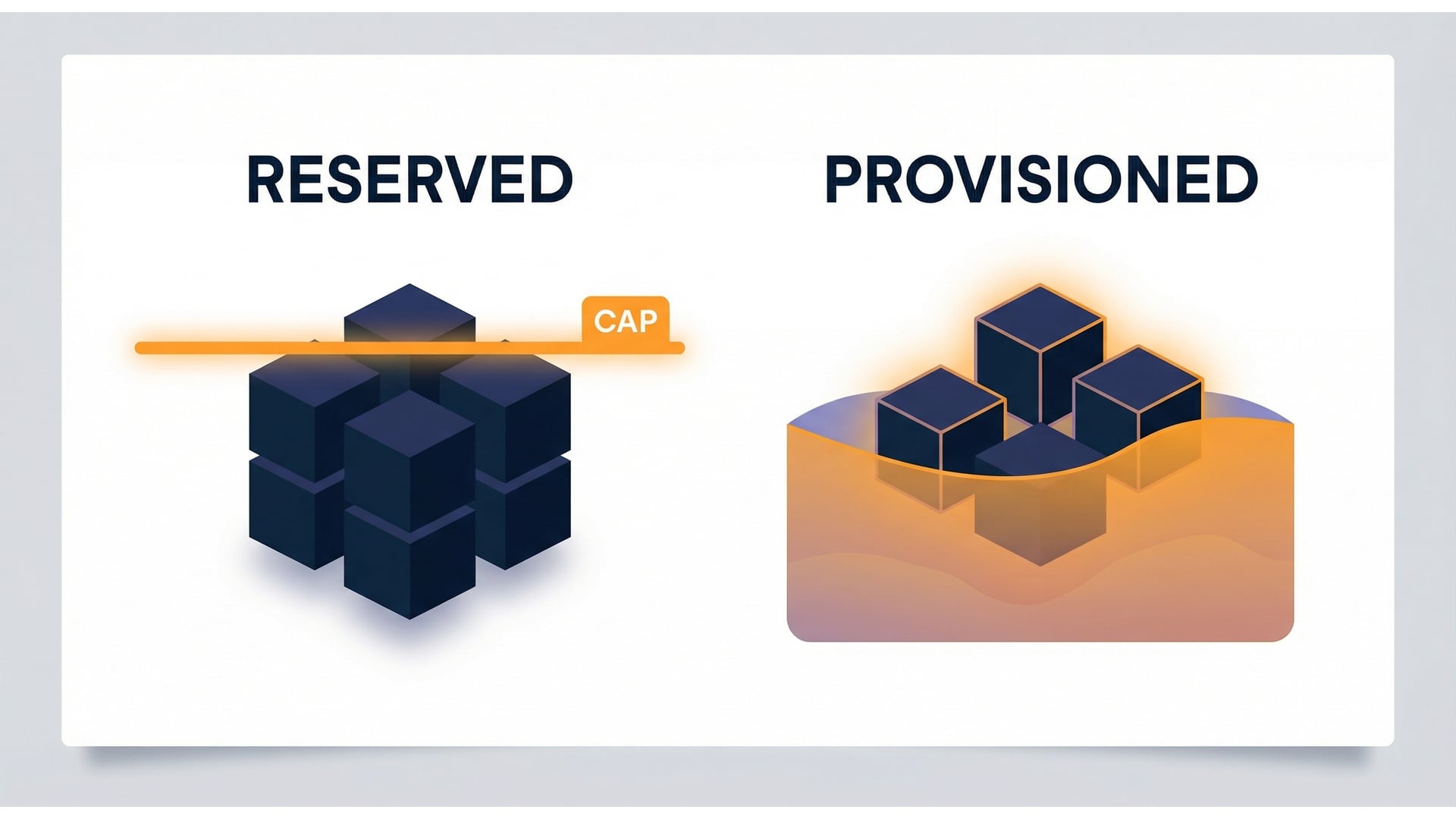

Reserved Concurrency: a hard cap

- A safety valve.

- Limits parallel work.

- Above the cap, invocations throttle.

- A noisy function can't exhaust capacity.
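Setting the cap could look something like the following, assuming the boto3 Lambda client and its `put_function_concurrency` call. The wrapper name `cap_function` and the function name `orders-handler` are hypothetical; the client is passed in so the sketch can run without AWS credentials.

```python
def cap_function(lambda_client, function_name: str, cap: int) -> dict:
    """Set reserved concurrency for one function.

    `lambda_client` is expected to behave like boto3.client("lambda").
    """
    if cap < 0:
        raise ValueError("cap must be zero or positive")
    return lambda_client.put_function_concurrency(
        FunctionName=function_name,
        ReservedConcurrentExecutions=cap,
    )


# Usage against a real account (requires boto3 and AWS credentials):
#   import boto3
#   cap_function(boto3.client("lambda"), "orders-handler", 10)
```

Note that the same number acts as both a guarantee and a ceiling: the function can always reach the cap, and never exceed it.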

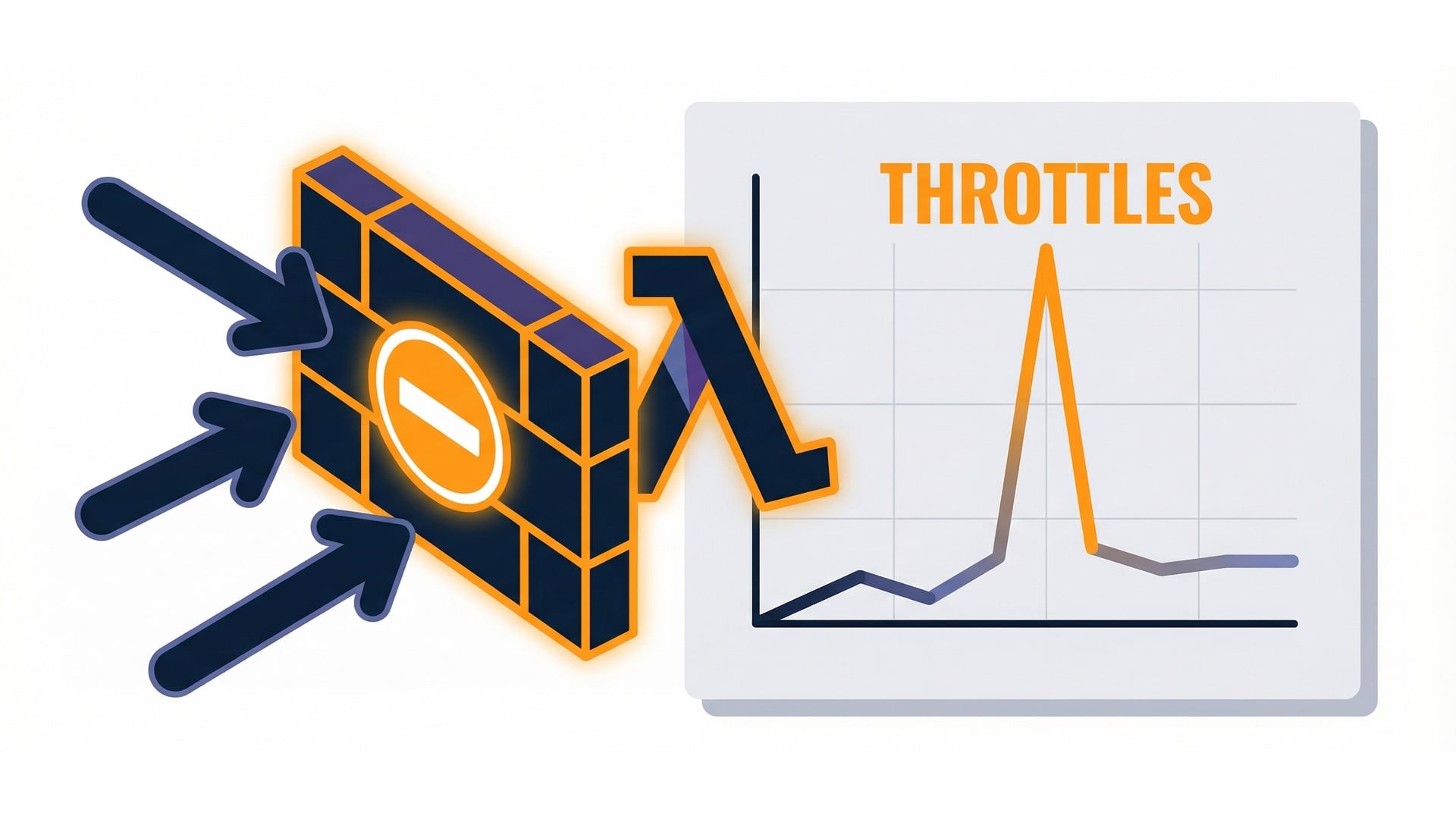

Throttling: what it looks like

- Lambda throttles at the concurrency limit.

- A synchronous caller gets an error (HTTP 429, TooManyRequestsException) instead of a result.

- The Throttles metric in CloudWatch counts them.
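To make the behavior concrete, here is a toy model of a concurrency limit. It is not how Lambda is implemented; the class `ConcurrencyGate` and its methods are invented for illustration and only mimic the idea of rejecting work once the limit is reached.

```python
class ConcurrencyGate:
    """Toy model: admit work up to a limit, count rejections as throttles."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.in_flight = 0
        self.throttles = 0

    def try_start(self) -> bool:
        """Return True if the invocation may run, False if it is throttled."""
        if self.in_flight >= self.limit:
            self.throttles += 1
            return False
        self.in_flight += 1
        return True

    def finish(self) -> None:
        """Mark one running invocation as complete."""
        if self.in_flight > 0:
            self.in_flight -= 1


gate = ConcurrencyGate(limit=2)
results = [gate.try_start() for _ in range(3)]  # third request hits the limit
gate.finish()                                   # one invocation completes
results.append(gate.try_start())                # capacity is available again
print(results, gate.throttles)
```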

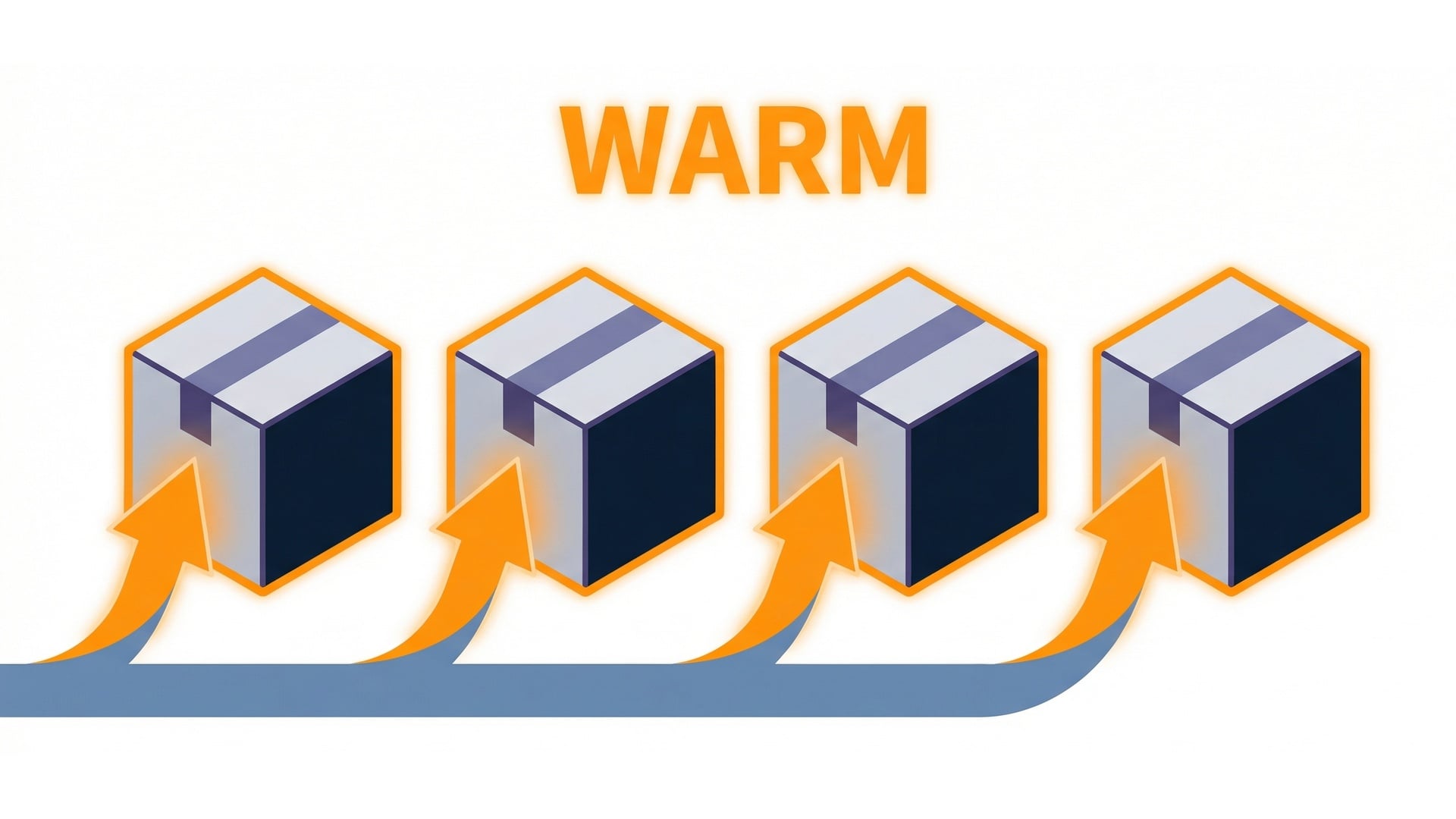

Provisioned Concurrency: warm capacity

- A pool of pre-initialized environments.

- Configured on a published version, often through an alias.

- Requests start faster.
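As a sketch of how warm capacity might be requested, the example below assumes the boto3 Lambda client and its `put_provisioned_concurrency_config` call. The wrapper name `keep_warm`, the function name `orders-handler`, and the alias `live` are hypothetical.

```python
def keep_warm(lambda_client, function_name: str, alias: str, environments: int) -> dict:
    """Request pre-initialized environments for an alias or version.

    `lambda_client` is expected to behave like boto3.client("lambda").
    """
    if environments < 1:
        raise ValueError("environments must be at least 1")
    return lambda_client.put_provisioned_concurrency_config(
        FunctionName=function_name,
        Qualifier=alias,  # an alias name or a version number
        ProvisionedConcurrentExecutions=environments,
    )


# Usage against a real account (requires boto3 and AWS credentials):
#   import boto3
#   keep_warm(boto3.client("lambda"), "orders-handler", "live", 5)
```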

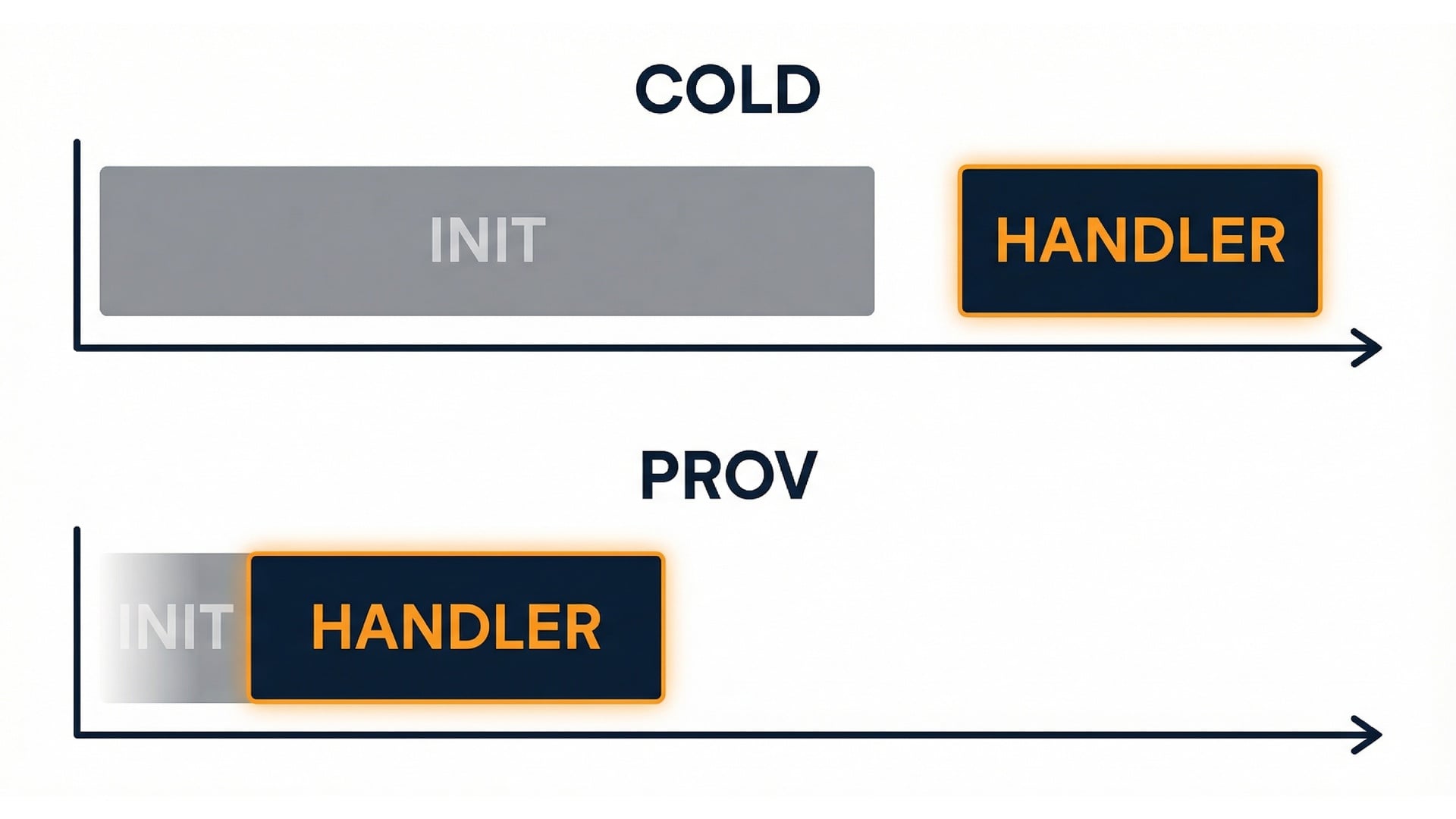

Cold start vs provisioned

Cold start

- A one-time initialization delay.

- First request pays the setup cost.

Provisioned

- Init work done in advance.

- First request doesn't pay the setup cost.
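The setup cost in question is mostly code that runs outside the handler. The sketch below imitates that split in plain Python: `expensive_setup` and its 50 ms sleep are stand-ins for real initialization (loading configuration, opening connections), not anything Lambda provides.

```python
import time

INIT_COUNT = 0


def expensive_setup() -> dict:
    """Stand-in for initialization work such as loading config or clients."""
    global INIT_COUNT
    INIT_COUNT += 1
    time.sleep(0.05)  # simulated setup delay
    return {"ready": True}


# Module-level code runs once per execution environment, during initialization.
RESOURCES = expensive_setup()


def handler(event, context):
    """Runs on every invocation and reuses what initialization prepared."""
    return {"ready": RESOURCES["ready"], "inits": INIT_COUNT}


# Two invocations in the same environment share one initialization.
print(handler({}, None))
print(handler({}, None))
```

With provisioned concurrency, the module-level part has already run before the first request arrives.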

Reserved vs provisioned (different problems)

- Reserved controls load.

- Provisioned controls startup latency.

- Many production functions use both.

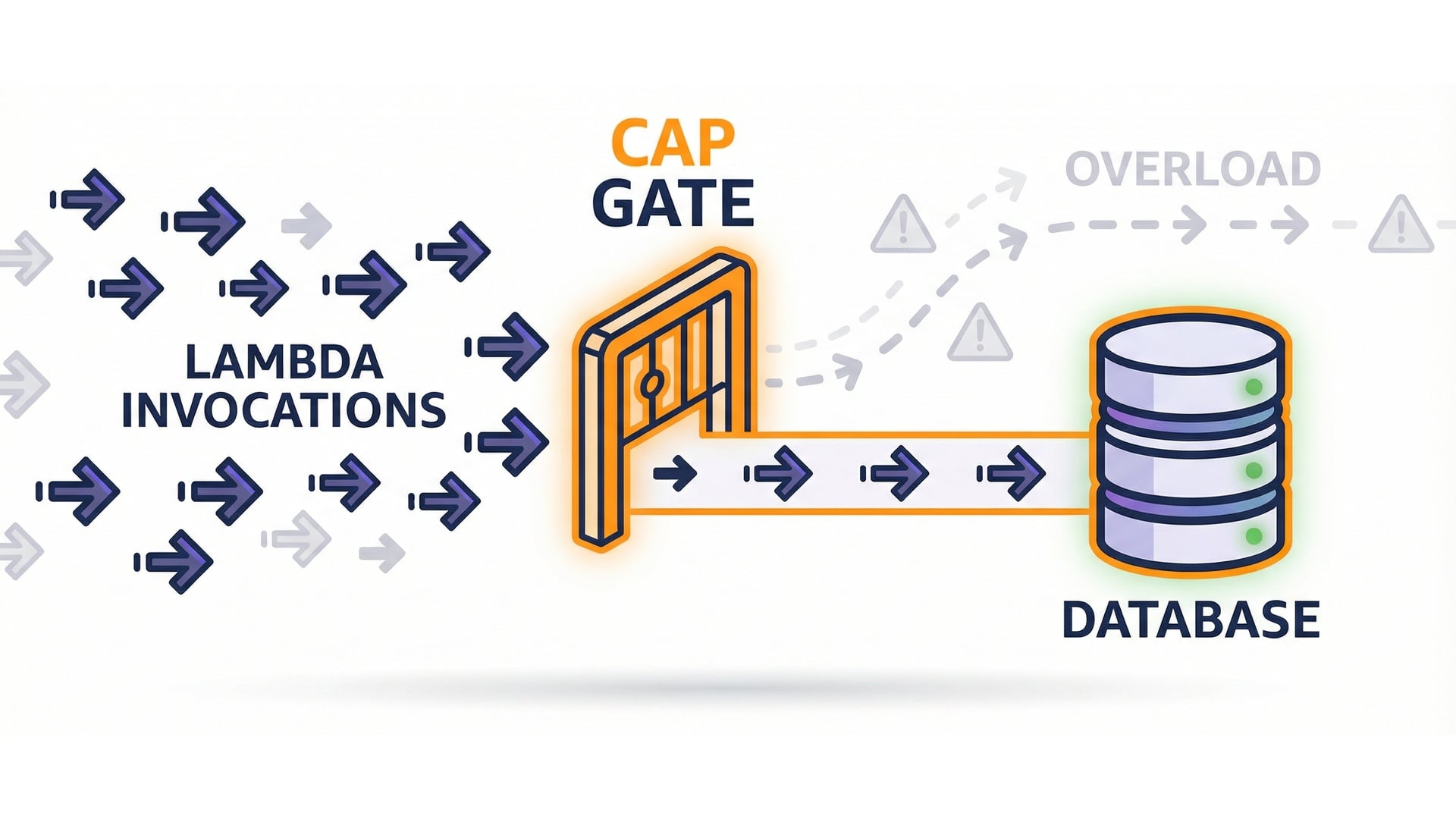

Protect downstream systems

- A database handles only so many connections.

- A traffic spike can turn into an outage.

- A concurrency cap prevents that.
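One way to pick a cap is to work backward from the database's connection limit. This is a back-of-the-envelope sketch: the function name and the numbers are hypothetical, and it assumes each execution environment holds a fixed number of open connections.

```python
def max_safe_concurrency(db_max_connections: int,
                         connections_per_environment: int = 1,
                         reserved_for_other_clients: int = 0) -> int:
    """Largest concurrency cap that keeps the database under its connection limit."""
    usable = db_max_connections - reserved_for_other_clients
    if usable <= 0 or connections_per_environment <= 0:
        raise ValueError("no connections available for this function")
    return usable // connections_per_environment


# Hypothetical database: 100 connections, 20 kept for admin tools and other services.
print(max_safe_concurrency(100, connections_per_environment=1,
                           reserved_for_other_clients=20))
```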

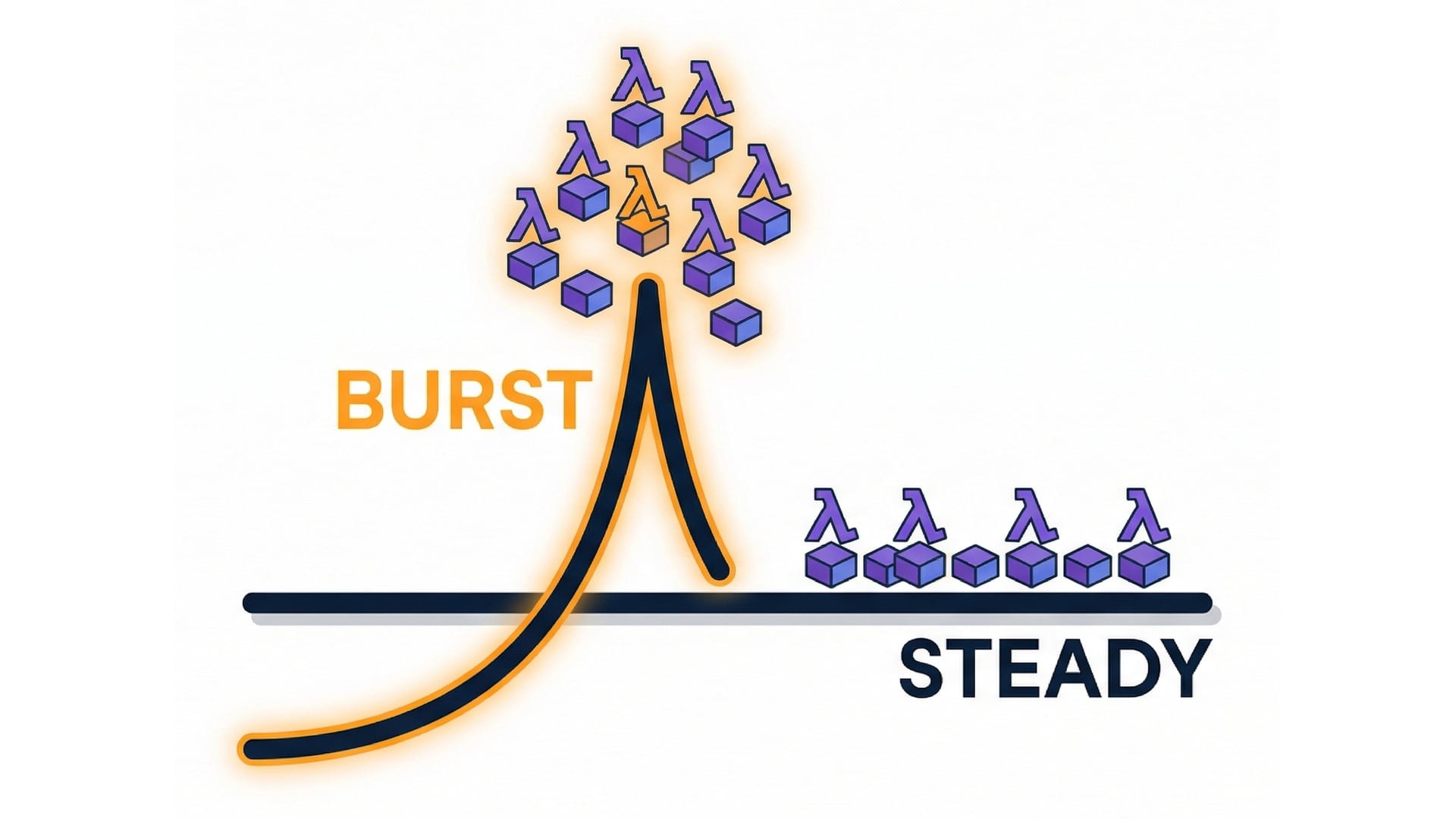

Bursts vs steady traffic

- Bursts create many parallel environments.

- Slow handlers keep concurrency high.

- Caps can smooth the spike.

- Improving duration is also a scaling strategy.

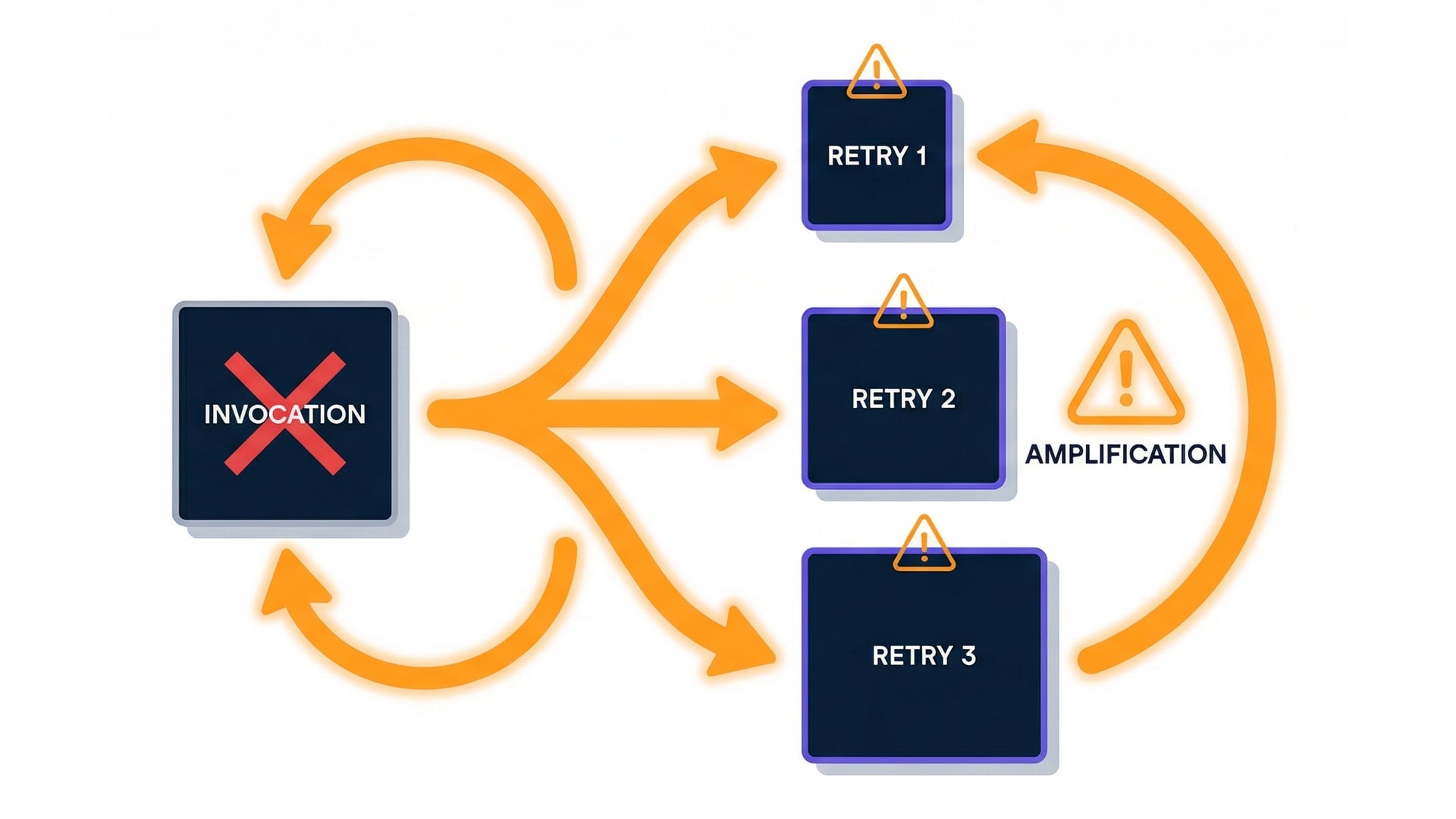

Failures and retries increase load

- Retries can amplify traffic.

- Concurrency limits reduce blast radius.

- A failure loop can overwhelm dependencies.
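The amplification can be estimated with a short calculation. The model below is a simplification chosen for illustration: it assumes every attempt fails independently with the same probability and that each failure is retried until a fixed retry limit is reached.

```python
def attempts_per_request(failure_rate: float, max_retries: int) -> float:
    """Expected attempts per original request: 1 + p + p^2 + ... + p^max_retries."""
    if not 0.0 <= failure_rate <= 1.0:
        raise ValueError("failure_rate must be between 0 and 1")
    return sum(failure_rate ** k for k in range(max_retries + 1))


# Healthy dependency: almost no extra load.
print(attempts_per_request(0.0, 2))

# Full outage with 2 retries: every request is attempted 3 times.
print(attempts_per_request(1.0, 2))
```

Multiplying this factor by the incoming request rate gives a rough sense of the load a failing dependency would see without a concurrency limit.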

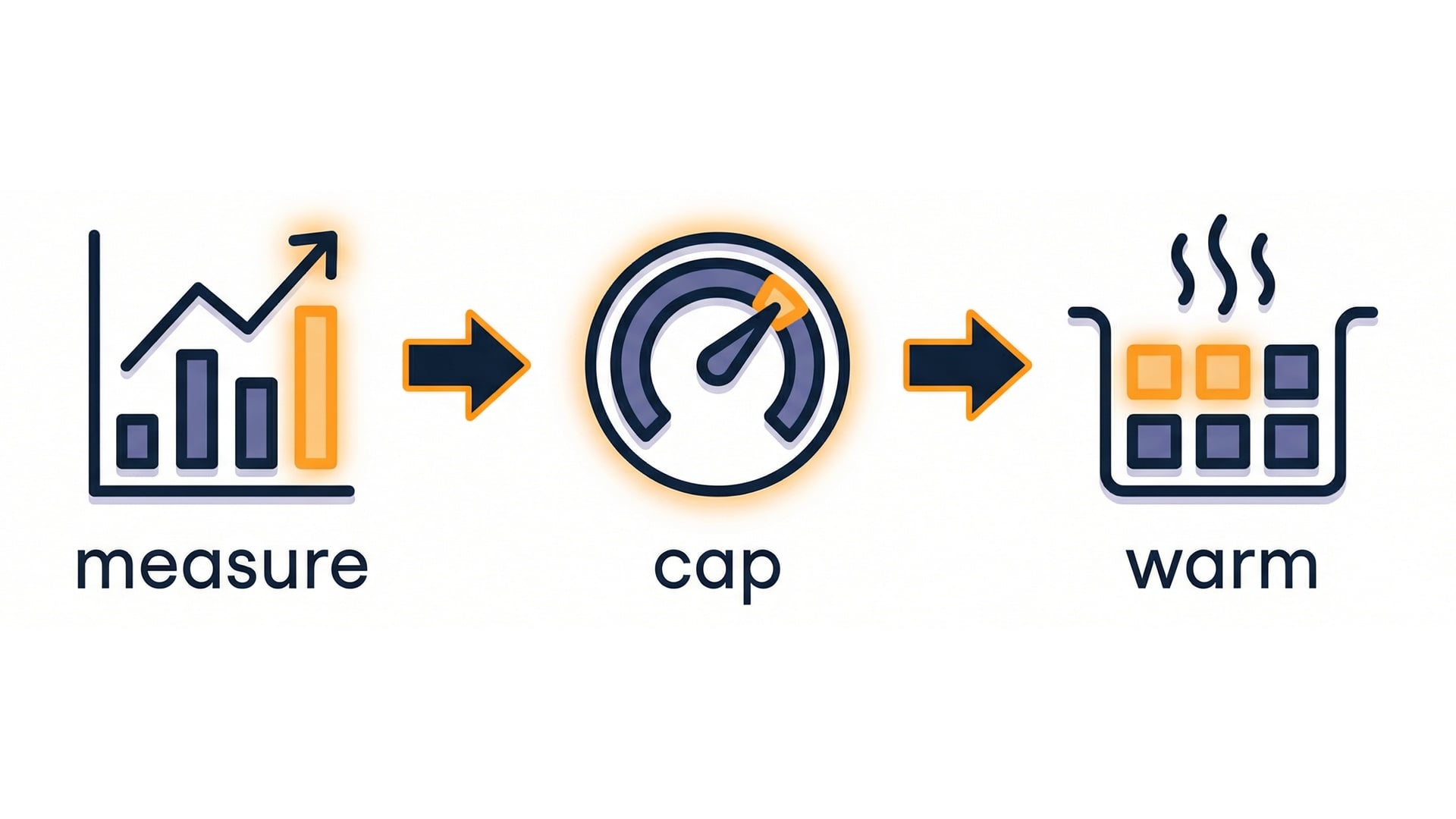

A simple tuning workflow

- Start with measurement.

- Cap concurrency when downstream systems show throttling or pressure.

- Add provisioned capacity when cold starts hurt latency.

Key takeaways

- Concurrency helps you think about scaling.

- Estimate: rps * duration.

- Reserved caps load; watch for throttles.

- Provisioned reduces cold-start latency.

Let's practice!
