Advanced splitting methods

Retrieval Augmented Generation (RAG) with LangChain

Meri Nova

Machine Learning Engineer

Limitations of our current splitting strategies

🤦 Splits are naive (not context-aware)

- They ignore the context of the surrounding text

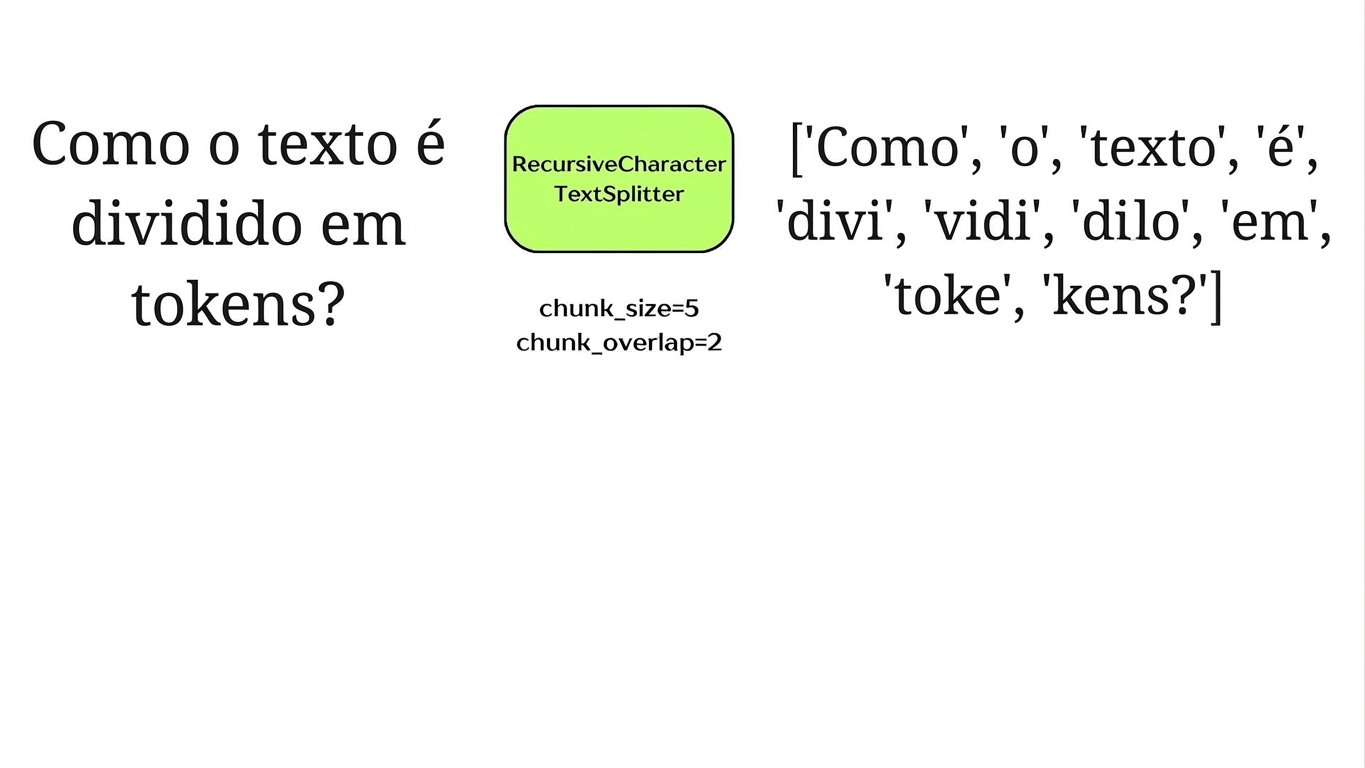

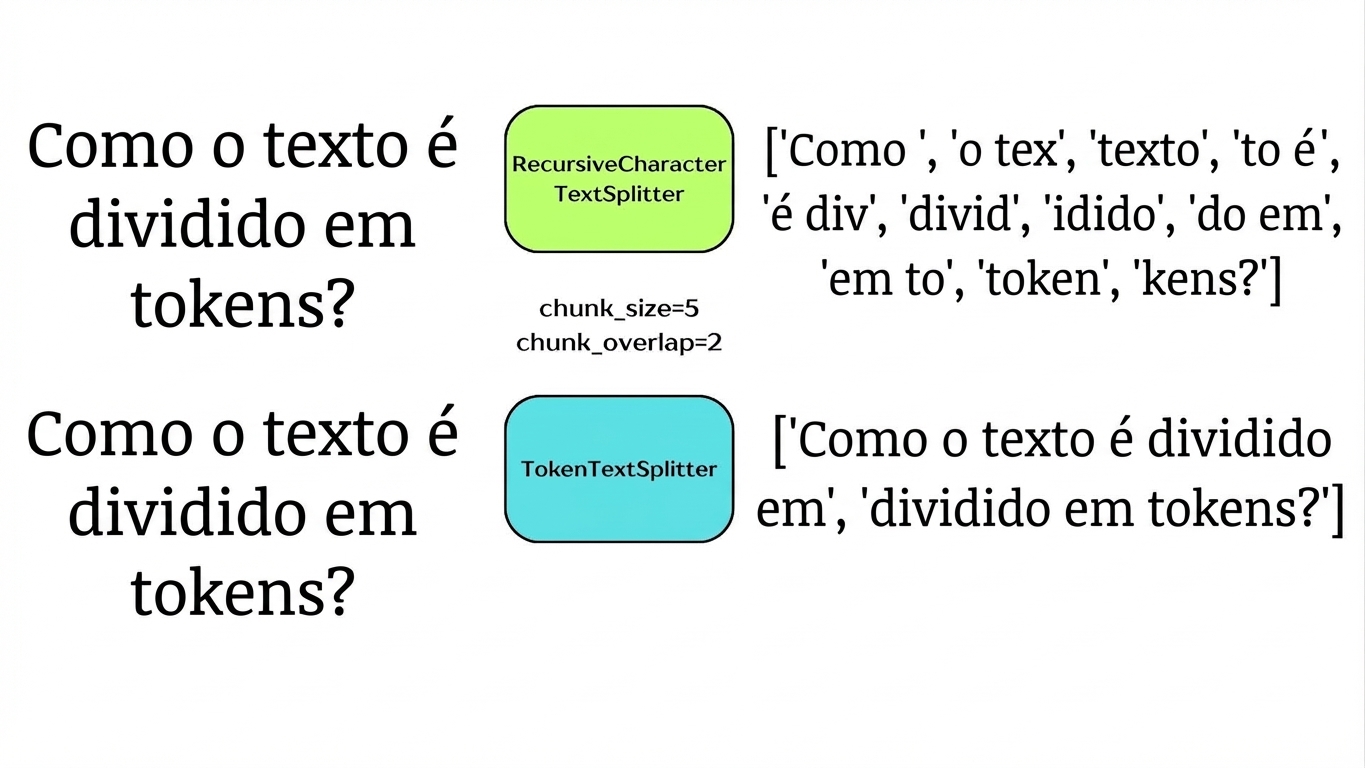

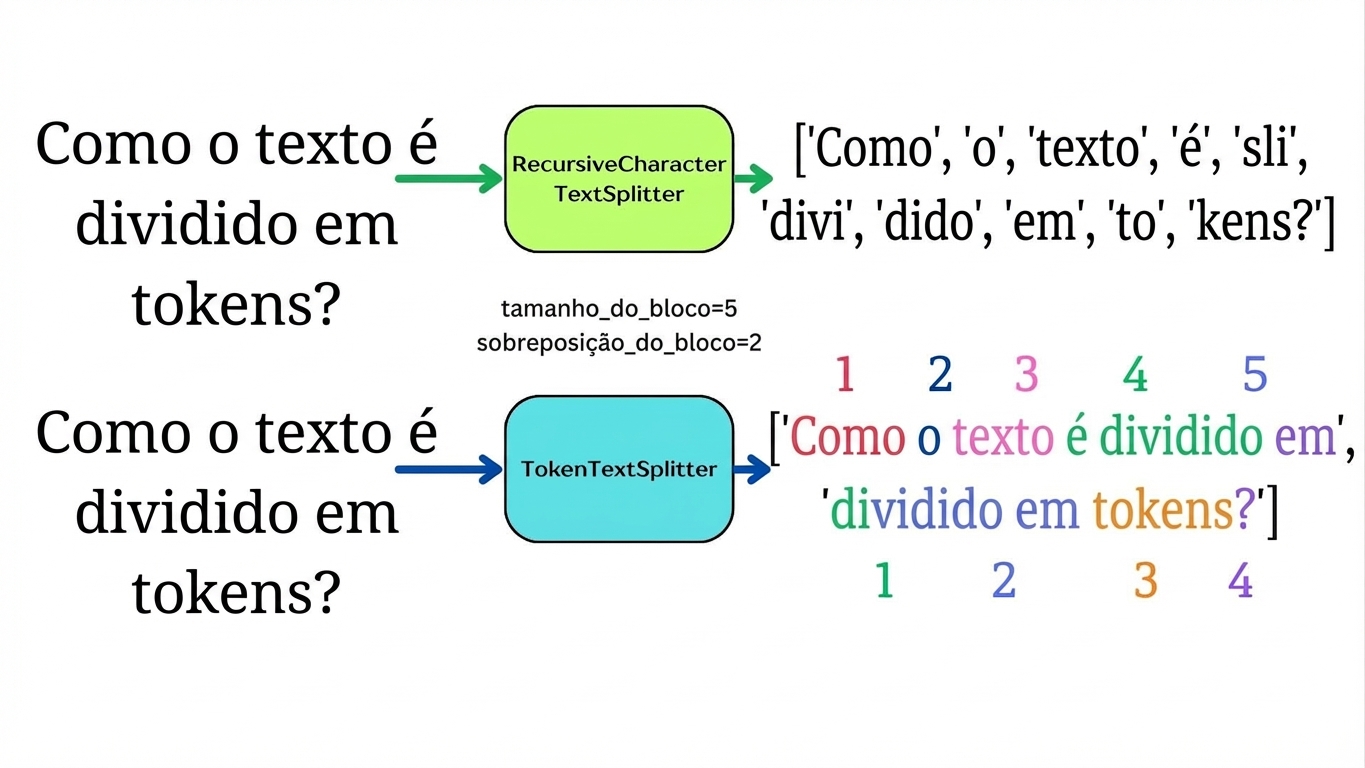
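To make the naive-split problem concrete, here is a minimal sketch of fixed-width character splitting. The sample text and the 30-character chunk size are illustrative assumptions, not values from the course data.

```python
# Illustrative text and chunk size (assumptions for this sketch).
text = "The model was trained on Wikipedia. It is queried with a dense retriever."
chunk_size = 30

# Naive splitting: cut every `chunk_size` characters,
# ignoring word and sentence boundaries.
chunks = [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]

for i, chunk in enumerate(chunks):
    print(f"Chunk {i+1}: {chunk!r}")
```

The first chunk ends in the middle of the word "Wikipedia", so a retriever that returns only that chunk loses the end of the sentence it came from.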

🖇 Splits are made using characters vs. tokens

- Models process tokens, not characters

- Chunks sized by character count risk exceeding the model's context window

→ SemanticChunker

→ TokenTextSplitter
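The gap between characters and tokens can be sketched with a toy tokenizer. The `toy_tokenize` function below is hypothetical (one token per word or punctuation mark) and is not how tiktoken or any real model tokenizes; it only illustrates that two strings of equal character length can have very different token counts.

```python
import re

def toy_tokenize(text):
    """Hypothetical tokenizer: one token per word or punctuation mark."""
    return re.findall(r"\w+|[^\w\s]", text)

# Two strings with the same number of characters (chosen for illustration).
same_length = ["internationalization", "a, b, c, d, e, f, g."]

for s in same_length:
    print(f"{len(s)} characters, {len(toy_tokenize(s))} tokens: {s!r}")
```

Real subword tokenizers split text differently, but the same principle holds: a character budget does not guarantee a token budget.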

Splitting on tokens

import tiktoken
from langchain_text_splitters import TokenTextSplitter

example_string = "Mary had a little lamb, it's fleece was white as snow."

encoding = tiktoken.encoding_for_model('gpt-4o-mini')

splitter = TokenTextSplitter(encoding_name=encoding.name,
                             chunk_size=10,
                             chunk_overlap=2)

chunks = splitter.split_text(example_string)

for i, chunk in enumerate(chunks):
    print(f"Chunk {i+1}:\n{chunk}\n")

Chunk 1:

Mary had a little lamb, it's fleece was

Chunk 2:

fleece was white as snow.

for i, chunk in enumerate(chunks):
    print(f"Chunk {i+1}:\nNo. tokens: {len(encoding.encode(chunk))}\n{chunk}\n")

Chunk 1:

No. tokens: 10

Mary had a little lamb, it's fleece was

Chunk 2:

No. tokens: 6

fleece was white as snow.
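The 10-token and 6-token counts above follow from a sliding window with overlap. The sketch below reproduces that arithmetic in plain Python. The `token_windows` helper is hypothetical, and the token list is a hand-written approximation used only for illustration; it is not the actual output of tiktoken.

```python
def token_windows(tokens, chunk_size, chunk_overlap):
    """Slide a window of `chunk_size` tokens, stepping by chunk_size - chunk_overlap."""
    if chunk_overlap >= chunk_size:
        raise ValueError("chunk_overlap must be smaller than chunk_size")
    step = chunk_size - chunk_overlap
    windows = []
    start = 0
    while start < len(tokens):
        end = min(start + chunk_size, len(tokens))
        windows.append(tokens[start:end])
        if end == len(tokens):
            break  # the last window already reaches the end of the text
        start += step
    return windows

# Hand-written token list (an assumption, NOT real tokenizer output).
tokens = ["Mary", " had", " a", " little", " lamb", ",", " it", "'s",
          " fleece", " was", " white", " as", " snow", "."]

windows = token_windows(tokens, chunk_size=10, chunk_overlap=2)
for i, window in enumerate(windows):
    print(f"Chunk {i+1}: {len(window)} tokens -> {''.join(window)!r}")
```

With 14 tokens, the first window covers tokens 1 to 10 and the second starts 8 tokens later, so its first two tokens repeat the last two of the first window.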

Semantic splitting

from langchain_openai import OpenAIEmbeddings
from langchain_experimental.text_splitter import SemanticChunker

embeddings = OpenAIEmbeddings(api_key="...", model='text-embedding-3-small')

semantic_splitter = SemanticChunker(
    embeddings=embeddings,
    breakpoint_threshold_type="gradient",
    breakpoint_threshold_amount=0.8
)

1 https://api.python.langchain.com/en/latest/text_splitter/langchain_experimental.text_splitter.SemanticChunker.html
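The idea behind semantic splitting can be sketched without an embeddings API: compare the embeddings of neighbouring sentences and start a new chunk where they diverge. The example below is a simplified illustration, not the SemanticChunker implementation. It uses a fixed cosine-distance threshold instead of the gradient-based threshold configured above, and the sentences and 2-D vectors are made-up stand-ins for real embeddings.

```python
import math

def cosine_distance(a, b):
    """1 - cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1 - dot / (norm_a * norm_b)

def semantic_groups(sentences, vectors, threshold):
    """Start a new chunk whenever adjacent sentences are farther apart than `threshold`."""
    if not sentences:
        return []
    groups = [[sentences[0]]]
    for i in range(1, len(sentences)):
        if cosine_distance(vectors[i - 1], vectors[i]) > threshold:
            groups.append([])
        groups[-1].append(sentences[i])
    return [" ".join(group) for group in groups]

# Made-up sentences and toy 2-D "embeddings" (assumptions for illustration).
sentences = [
    "RAG retrieves documents.",
    "Retrieved documents ground the answer.",
    "Lambs have white fleece.",
    "Fleece is sheared in spring.",
]
vectors = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.9]]

semantic_chunks = semantic_groups(sentences, vectors, threshold=0.5)
for i, chunk in enumerate(semantic_chunks):
    print(f"Chunk {i+1}: {chunk}")
```

The only large distance is between the second and third sentences, so the split lands where the topic changes rather than at a fixed character or token count.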

chunks = semantic_splitter.split_documents(data)

print(chunks[0])

page_content='Retrieval-Augmented Generation for\nKnowledge-Intensive NLP Tasks\nPatrick Lewis,

Ethan Perez,\nAleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich

Küttler,\nMike Lewis, Wen-tau Yih, Tim Rocktäschel, Sebastian Riedel, Douwe Kiela\nFacebook AI

Research; University College London;New York University;\[email protected]\nAbstract\nLarge

pre-trained language models have been shown to store factual knowledge\nin their parameters,

and achieve state-of-the-art results when fine-tuned on down-\nstream NLP tasks. However, their

ability to access and precisely manipulate knowl-\nedge is still limited, and hence on

knowledge-intensive tasks, their performance\nlags behind task-specific architectures.'

metadata={'source': 'rag_paper.pdf', 'page': 0}

Let's practice!
