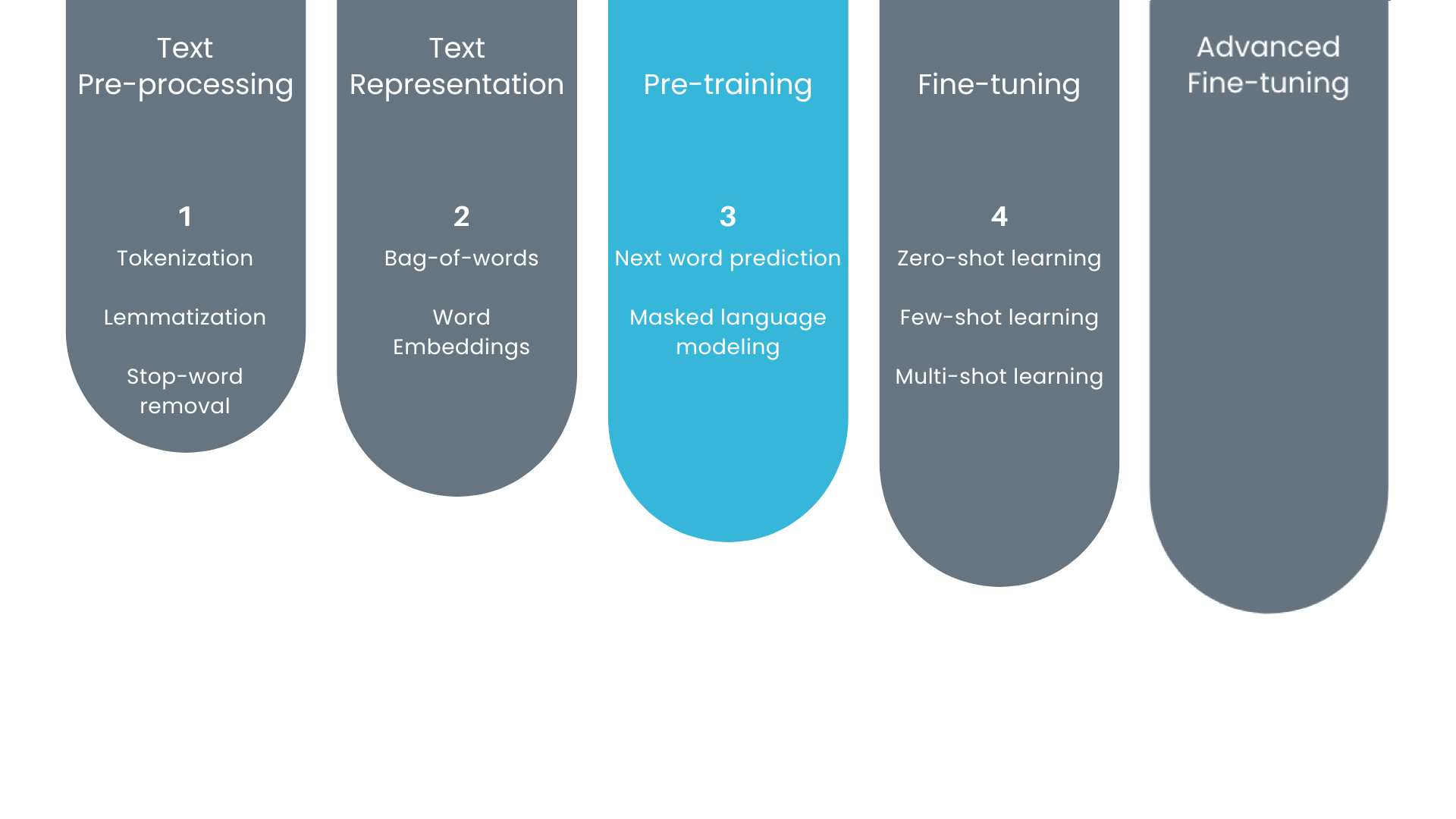

Building blocks to train LLMs

Large Language Models (LLMs) Concepts

Vidhi Chugh

AI strategist and ethicist

Where are we?

Generative pre-training

Trained using generative pre-training

- Input data of text tokens

- Trained to predict the tokens within the dataset

- Types:

  - Next word prediction

  - Masked language modeling

Next word prediction

- Self-supervised learning technique: the labels come from the text itself

- Model trained on input-output pairs

- Predicts next word and generates coherent text

- Captures the dependencies between words

- Training data

  - Pairs of input and output examples

Training data for next word prediction

| Input | Output |
| --- | --- |
| The quick brown | fox |
| The quick brown fox | jumps |
| The quick brown fox jumps | over |
| The quick brown fox jumps over | the |
| The quick brown fox jumps over the | lazy |
| The quick brown fox jumps over the lazy | dog |
| The quick brown fox jumps over the lazy dog. | |
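The input-output pairs above can be generated mechanically from a single sentence. The following is a minimal sketch, assuming simple whitespace splitting instead of a real tokenizer; the function name `next_word_pairs` and the `min_context` parameter are hypothetical and chosen only for illustration.

```python
def next_word_pairs(sentence, min_context=3):
    """Build (input, output) pairs for next word prediction.

    Assumption: words are separated by spaces and a trailing period is dropped.
    Real LLM pipelines use subword tokenizers instead.
    """
    words = sentence.rstrip(".").split()
    pairs = []
    # Each prefix of the sentence is an input; the word that follows is the output.
    for i in range(min_context, len(words)):
        context = " ".join(words[:i])
        target = words[i]
        pairs.append((context, target))
    return pairs


pairs = next_word_pairs("The quick brown fox jumps over the lazy dog.")
for context, target in pairs:
    print(f"{context!r} -> {target!r}")
```

Each sentence in a dataset yields several training examples this way, which is why no manual labeling is needed.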

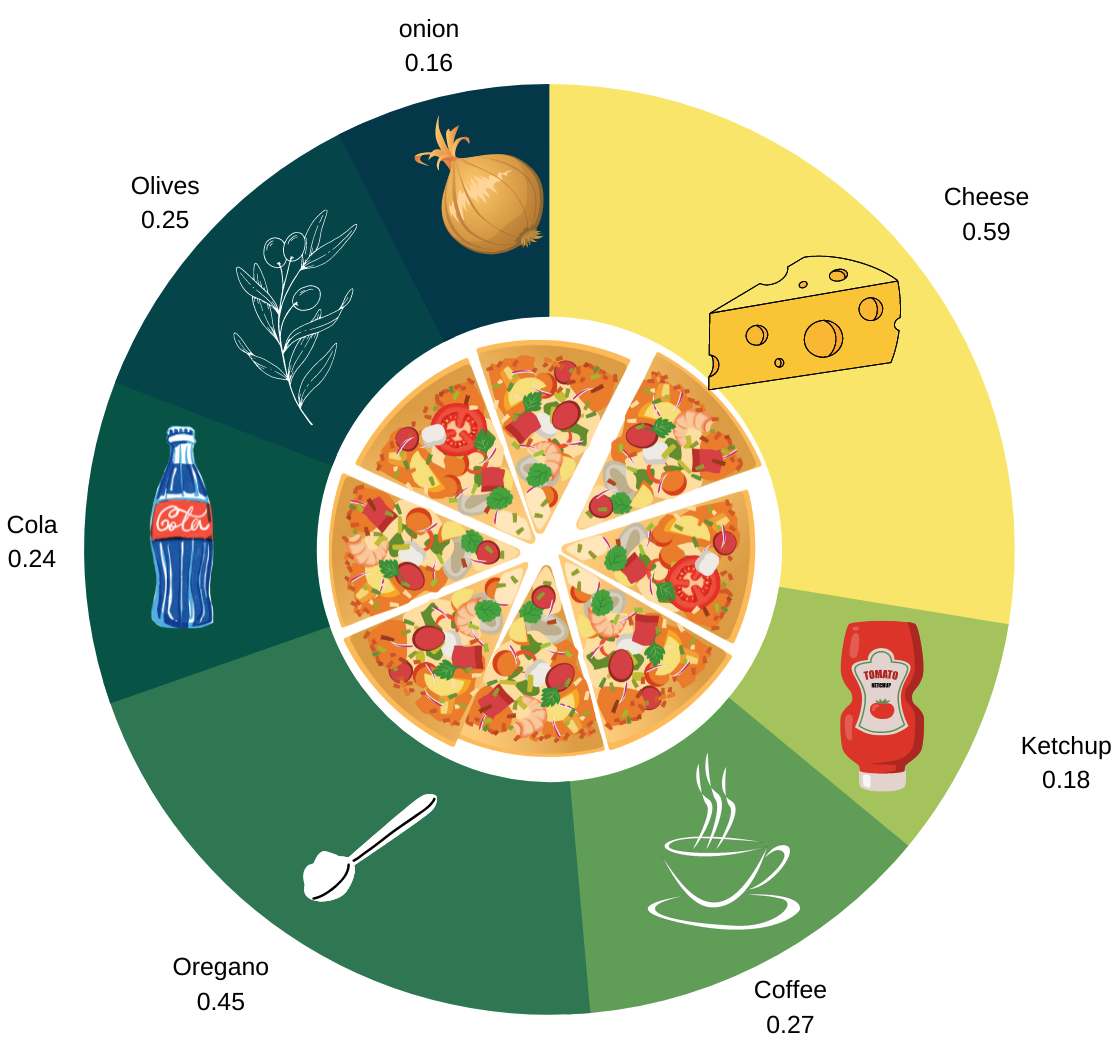

Which word relates most to pizza?

- More examples = better prediction

- For example:

  - I love to eat pizza with _ _ _ _ _ _

  - Cheese is more strongly related to pizza than any other word
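The idea that more examples lead to a better prediction can be illustrated by counting. This is a rough sketch only: the toy `corpus` is invented for illustration, `next_word_counts` is a hypothetical helper, and an actual LLM learns these associations with a neural network, not a lookup of counts.

```python
from collections import Counter

# Hypothetical toy corpus, made up for this illustration.
corpus = [
    "I love to eat pizza with cheese",
    "I love to eat pizza with cheese",
    "I love to eat pizza with mushrooms",
    "We like to eat pizza with cheese",
    "I love to eat pasta with sauce",
]


def next_word_counts(context, sentences):
    """Count which words follow the given context across all sentences."""
    context_words = context.split()
    n = len(context_words)
    counts = Counter()
    for sentence in sentences:
        words = sentence.split()
        for i in range(len(words) - n):
            if words[i:i + n] == context_words:
                counts[words[i + n]] += 1
    return counts


counts = next_word_counts("pizza with", corpus)
best_word, best_count = counts.most_common(1)[0]
print(counts)     # how often each candidate followed "pizza with"
print(best_word)  # the most frequent continuation
```

In this toy corpus, "cheese" follows "pizza with" more often than any other word, so it becomes the prediction. With more sentences, the counts would reflect the association more reliably.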

Masked language modeling

Hides a selected word

Trained model predicts the masked word

Original Text: "The quick brown fox jumps over the lazy dog."

Masked Text: "The quick [MASK] fox jumps over the lazy dog."

Objective: predict the missing word

Based on what the model learned from the training data
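A masked training example like the one above can be built by replacing one word with a placeholder and keeping the hidden word as the label. The sketch below assumes whitespace splitting and a fixed word position; `mask_word` is a hypothetical helper, and real masked language models typically mask randomly chosen subword tokens.

```python
def mask_word(sentence, index, mask_token="[MASK]"):
    """Return (masked_text, label) with the word at `index` hidden.

    Assumption: words are separated by single spaces.
    """
    words = sentence.split()
    label = words[index]  # the word the model must predict
    masked_words = words[:index] + [mask_token] + words[index + 1:]
    return " ".join(masked_words), label


original = "The quick brown fox jumps over the lazy dog."
masked_text, label = mask_word(original, 2)
print(masked_text)  # The quick [MASK] fox jumps over the lazy dog.
print(label)        # brown
```

Unlike next word prediction, the model here sees context on both sides of the hidden word.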

Let's practice!
