ReLU activation functions

Introduction to Deep Learning with PyTorch

Jasmin Ludolf

Senior Data Science Content Developer, DataCamp

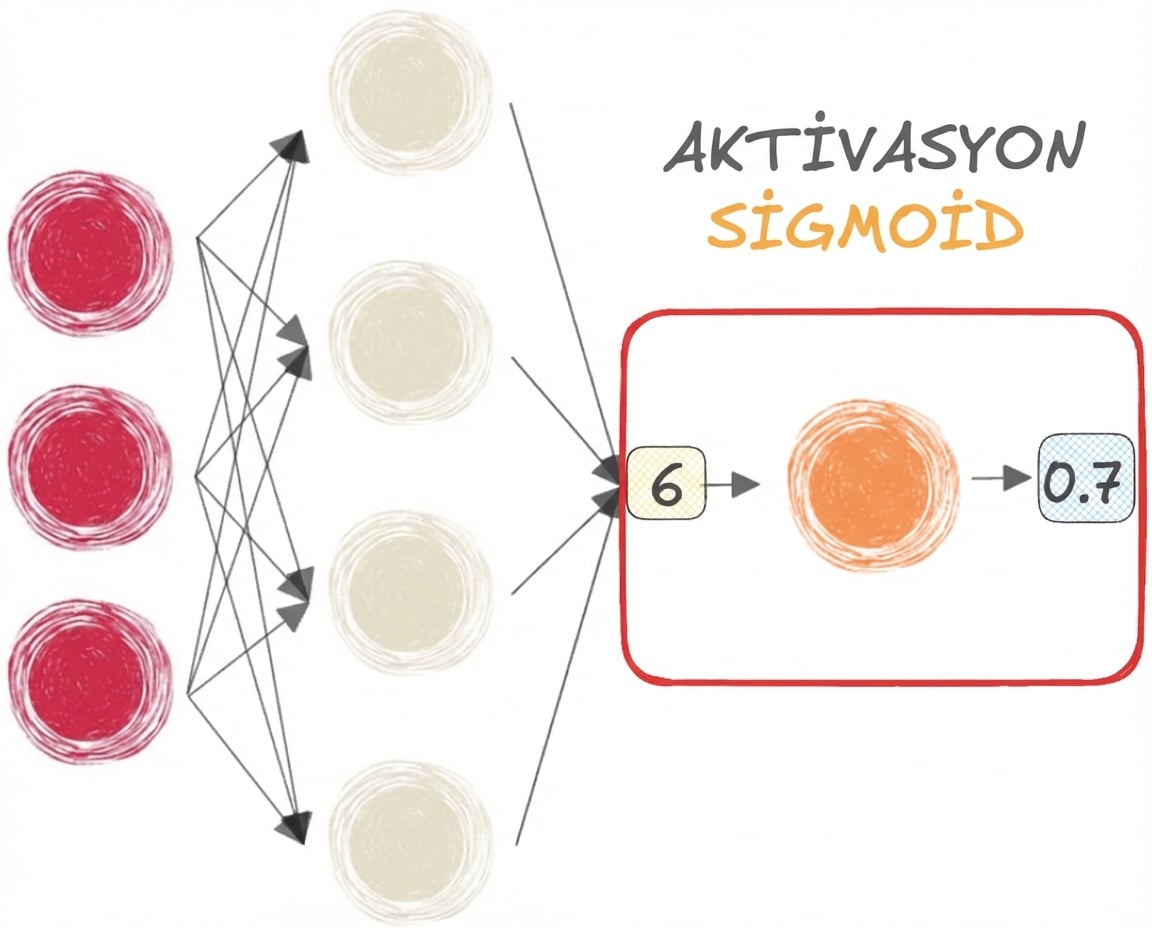

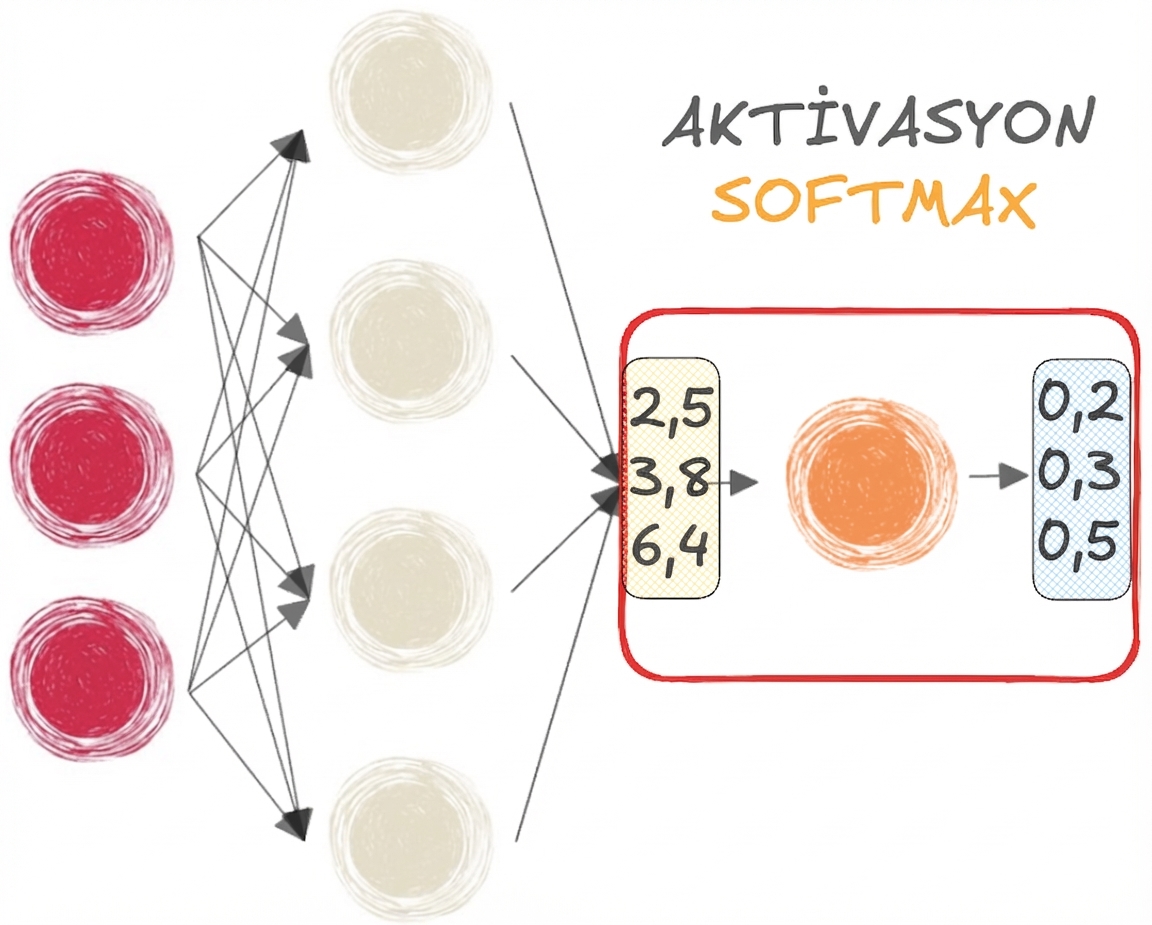

Sigmoid and softmax functions

- Sigmoid for binary classification

- Softmax for multi-class classification
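A minimal sketch of the two use cases above, assuming hypothetical logits chosen only for illustration: sigmoid turns a single score into the probability of the positive class, while softmax turns one score per class into a distribution that sums to 1.

```python
import torch
import torch.nn as nn

# Hypothetical raw model outputs (logits), for illustration only
binary_logit = torch.tensor([[2.0]])            # one score per sample -> binary task
multi_logits = torch.tensor([[1.0, 2.0, 0.5]])  # one score per class -> multi-class task

sigmoid = nn.Sigmoid()
softmax = nn.Softmax(dim=-1)  # normalize across the class dimension

p_binary = sigmoid(binary_logit)  # probability of the positive class
p_multi = softmax(multi_logits)   # one probability per class

print(p_binary)
print(p_multi, p_multi.sum())
```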

Limitations of sigmoid and softmax

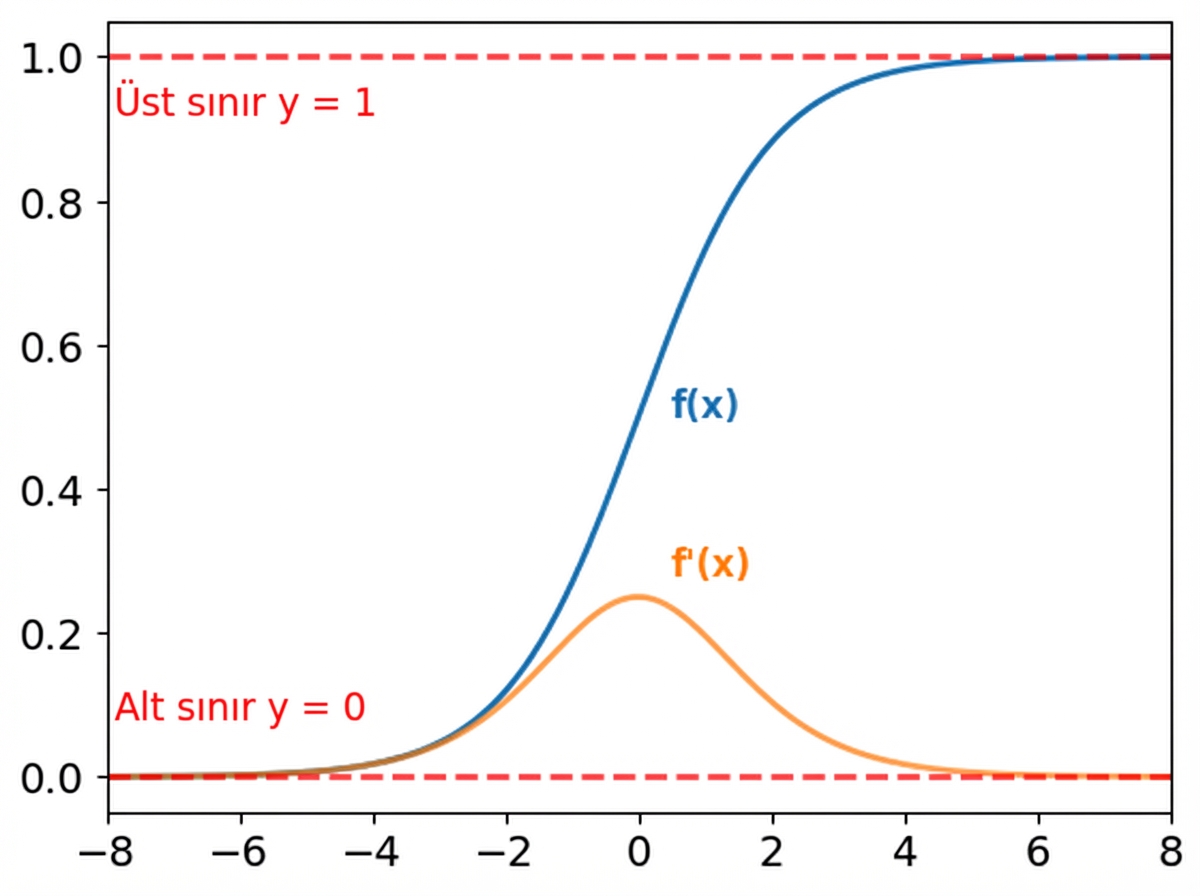

Sigmoid function:

- Outputs are bounded between 0 and 1

- Can be used anywhere in the network

Gradients:

- Are very small when x is very large or very small (strongly negative)

- This causes saturation, which leads to the vanishing gradients problem
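The saturation effect can be checked numerically. The derivative of the sigmoid is σ'(x) = σ(x)(1 − σ(x)), which peaks at 0.25 when x = 0 and shrinks toward 0 as |x| grows. The sketch below uses autograd on a few arbitrary inputs to show this.

```python
import torch

# Inputs ranging from strongly negative to strongly positive
x = torch.tensor([-10.0, -2.0, 0.0, 2.0, 10.0], requires_grad=True)
y = torch.sigmoid(x)

# Summing lets a single backward() call give dσ/dx for every element
y.sum().backward()
grads = x.grad

for xi, gi in zip(x.tolist(), grads.tolist()):
    print(f"x = {xi:6.1f}  ->  gradient = {gi:.6f}")
```

The gradient at x = ±10 is several thousand times smaller than at x = 0, so layers whose inputs land in those regions barely update.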

Softmax also suffers from saturation
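For softmax, each partial derivative is ∂p_i/∂z_j = p_i(δ_ij − p_j), so once one probability approaches 1 every entry of the Jacobian approaches 0. This sketch compares the Jacobian for moderate logits with one where a single (hypothetical) logit dominates.

```python
import torch
from torch.autograd.functional import jacobian

def softmax(z: torch.Tensor) -> torch.Tensor:
    return torch.softmax(z, dim=-1)

balanced = torch.tensor([1.0, 2.0, 3.0])
dominant = torch.tensor([1.0, 2.0, 30.0])  # one logit much larger than the rest

J_bal = jacobian(softmax, balanced)  # 3 x 3 matrix of dp_i / dz_j
J_dom = jacobian(softmax, dominant)

print("largest |gradient|, balanced:", J_bal.abs().max().item())
print("largest |gradient|, dominant:", J_dom.abs().max().item())
```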

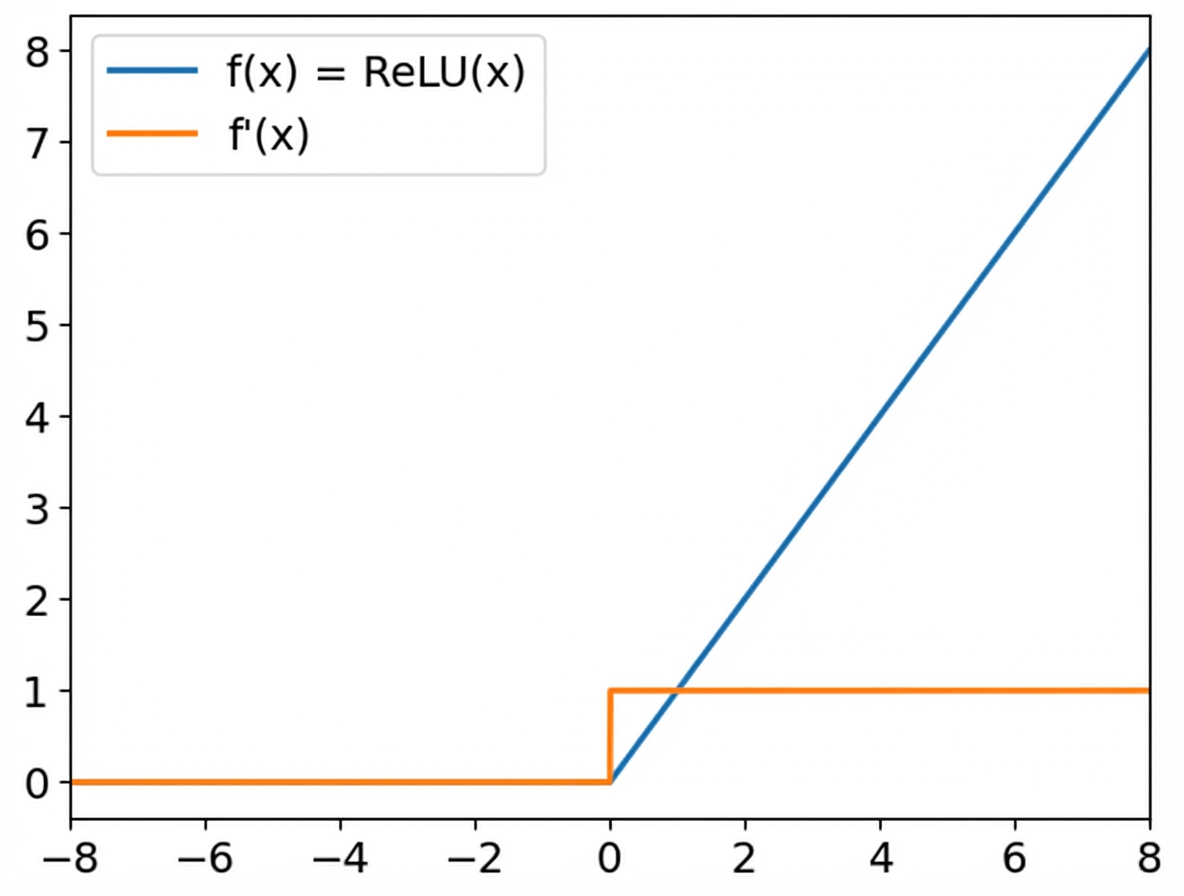

ReLU

Rectified Linear Unit (ReLU):

f(x) = max(x, 0)

- For positive inputs: the output equals the input

- For negative inputs: the output is 0

- Helps overcome vanishing gradients
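A minimal sketch of f(x) = max(x, 0), written by hand with `torch.clamp` (a stand-in chosen here, not the slide's `nn.ReLU`) so its gradients can be inspected. For positive inputs the gradient is exactly 1, so it does not shrink the way the sigmoid gradient does; for negative inputs it is 0. The point x = 0 is left out of the inputs, since the derivative is undefined there.

```python
import torch

def relu_manual(x: torch.Tensor) -> torch.Tensor:
    # f(x) = max(x, 0), applied element-wise
    return torch.clamp(x, min=0.0)

x = torch.tensor([-3.0, -0.5, 0.5, 3.0], requires_grad=True)
y = relu_manual(x)
y.sum().backward()

print(y)       # negatives become 0, positives pass through unchanged
print(x.grad)  # 0 for negative inputs, 1 for positive inputs
```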


In PyTorch:

import torch.nn as nn
relu = nn.ReLU()
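As one possible usage pattern, ReLU is commonly placed between hidden linear layers, with sigmoid kept at the output for a binary task. The layer sizes below are hypothetical and chosen only for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # reproducible random inputs and weights

# Hypothetical layer sizes, for illustration only
model = nn.Sequential(
    nn.Linear(4, 8),
    nn.ReLU(),       # hidden-layer activation
    nn.Linear(8, 1),
    nn.Sigmoid(),    # output activation for binary classification
)

x = torch.randn(2, 4)  # a batch of 2 samples with 4 features each
out = model(x)
print(out.shape)
```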

Leaky ReLU

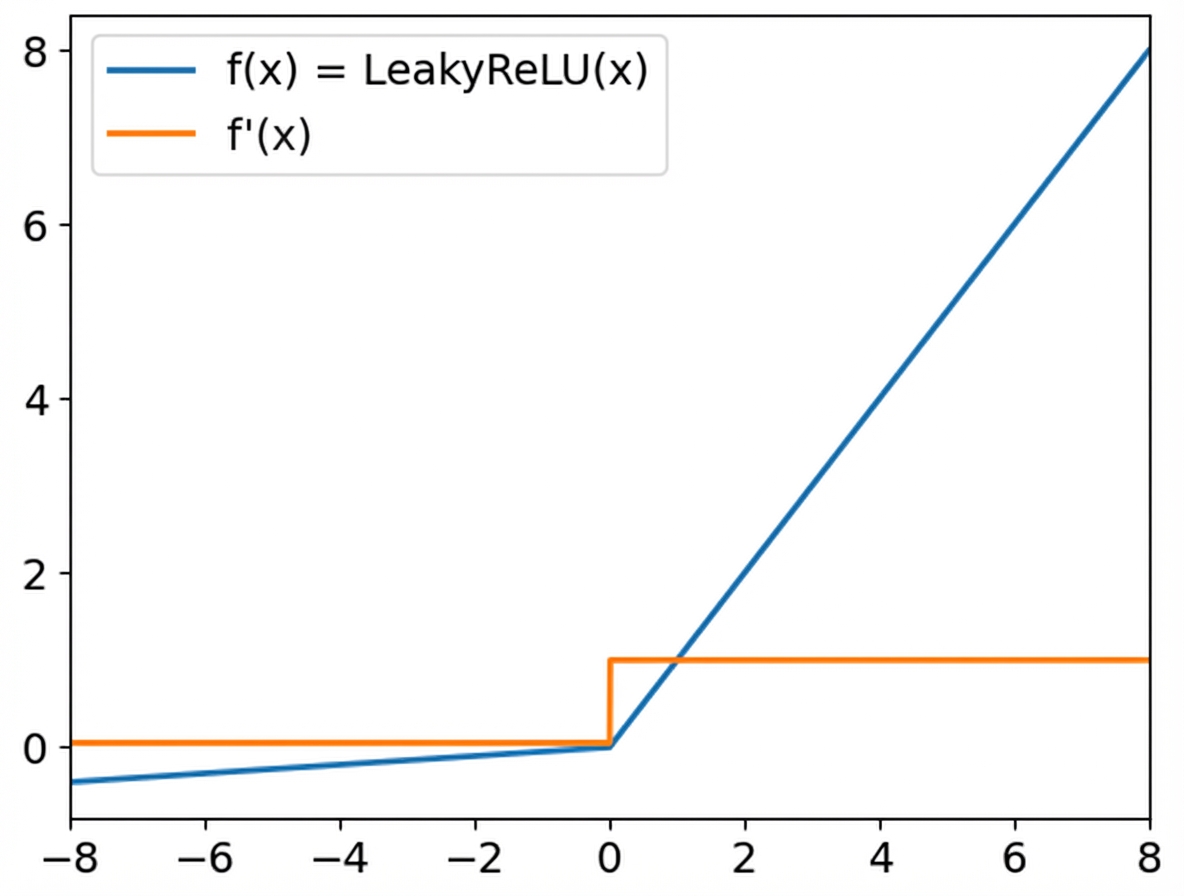

Leaky ReLU:

- For positive inputs, it behaves like ReLU

- Negative inputs are multiplied by a small coefficient (0.01 by default)

- Gradients for negative inputs are non-zero
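A minimal hand-written sketch of Leaky ReLU, using `torch.where` (an illustrative choice, not the built-in module) so the gradients are easy to inspect. Negative inputs now receive a gradient equal to the slope instead of 0, so those units can still learn.

```python
import torch

def leaky_relu_manual(x: torch.Tensor, negative_slope: float = 0.01) -> torch.Tensor:
    # Keep positive inputs unchanged; scale negative inputs by negative_slope
    return torch.where(x > 0, x, negative_slope * x)

x = torch.tensor([-4.0, -1.0, 2.0], requires_grad=True)
y = leaky_relu_manual(x)
y.sum().backward()

print(y)       # negatives are scaled down, not zeroed
print(x.grad)  # slope (0.01) for negative inputs, 1 for positive inputs
```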


In PyTorch:

import torch.nn as nn
leaky_relu = nn.LeakyReLU(negative_slope=0.05)
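Applying the module above to a small tensor shows the effect of `negative_slope=0.05`: negative inputs are multiplied by 0.05, while zero and positive inputs are unchanged.

```python
import torch
import torch.nn as nn

leaky_relu = nn.LeakyReLU(negative_slope=0.05)

x = torch.tensor([-2.0, 0.0, 3.0])
y = leaky_relu(x)
print(y)  # -2.0 * 0.05 = -0.1; 0.0 and 3.0 pass through
```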

Let's practice!
