Sending requests to DeepSeek models

Working with DeepSeek in Python

James Chapman

Curriculum Manager, DataCamp

Recap

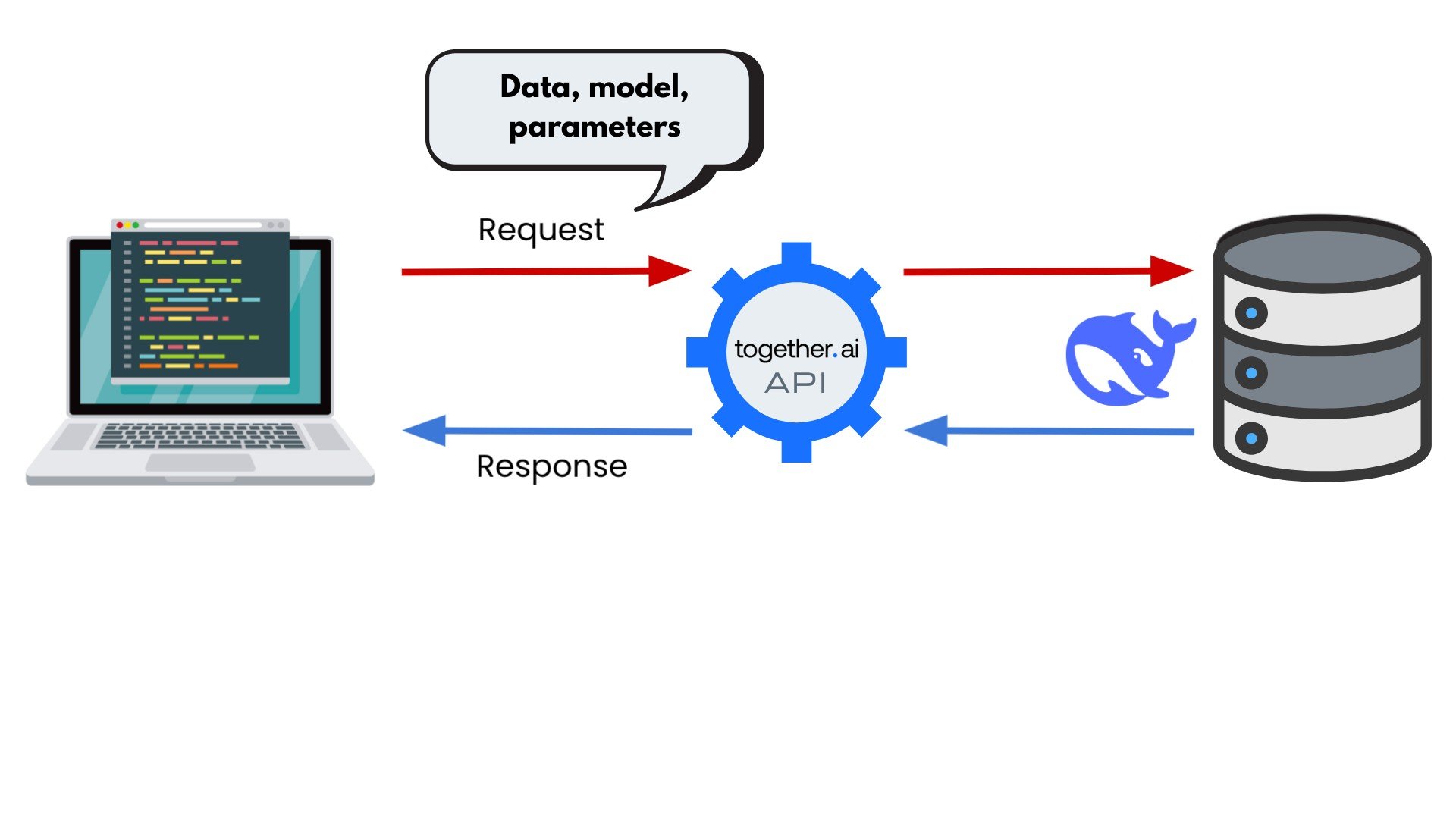

Creating a request

from openai import OpenAI

# To send to the DeepSeek API instead: base_url="https://api.deepseek.com"
client = OpenAI(api_key="<TogetherAI API Key>", base_url="https://api.together.xyz/v1")

- Create requests with the openai library
  - OpenAI: developer of AI models and applications (e.g., ChatGPT)
- base_url: redirects the request from OpenAI to the DeepSeek provider
- api_key: API authentication (see the provider's documentation)

1 https://platform.deepseek.com/api_keys
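The roles of base_url and api_key can be sketched as a small lookup that keeps the key out of the source code. This is a minimal sketch, not the course's own code: the two base URLs come from the slide, while the environment variable names (TOGETHER_API_KEY, DEEPSEEK_API_KEY) and the helper names are hypothetical.

```python
import os

# Base URLs are taken from the slide; the environment variable names are hypothetical.
PROVIDERS = {
    "together": {"base_url": "https://api.together.xyz/v1", "key_env": "TOGETHER_API_KEY"},
    "deepseek": {"base_url": "https://api.deepseek.com", "key_env": "DEEPSEEK_API_KEY"},
}


def client_kwargs(provider: str) -> dict:
    """Return the keyword arguments to pass to OpenAI(...) for the given provider."""
    cfg = PROVIDERS[provider]
    api_key = os.environ.get(cfg["key_env"])
    if not api_key:
        raise RuntimeError(f"Set the {cfg['key_env']} environment variable first")
    return {"api_key": api_key, "base_url": cfg["base_url"]}


def make_client(provider: str):
    """Build an OpenAI client pointed at the chosen provider."""
    # Imported lazily so client_kwargs() can be used without the openai package installed.
    from openai import OpenAI

    return OpenAI(**client_kwargs(provider))
```

Calling make_client("together") would then behave like the client construction shown on the slide, with the key read from the environment instead of being pasted into the script.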

Creating a request

from openai import OpenAI

# To send to the DeepSeek API instead: base_url="https://api.deepseek.com"
client = OpenAI(api_key="<TogetherAI API Key>", base_url="https://api.together.xyz/v1")

response = client.chat.completions.create(
    # On DeepSeek's own API: model="deepseek-chat"
    model="deepseek-ai/DeepSeek-V3",
    messages=[{"role": "user", "content": "In one sentence, what is hallucination in AI?"}]
)

print(response)
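Because the model identifier differs between providers, it can help to build the request arguments in one place. The sketch below assumes the two model names mentioned in the slide's code and comment; the function name build_request and the provider labels are hypothetical.

```python
# Model identifiers as they appear in the slide's code and comment.
MODEL_NAMES = {
    "together": "deepseek-ai/DeepSeek-V3",
    "deepseek": "deepseek-chat",
}


def build_request(prompt: str, provider: str = "together") -> dict:
    """Return keyword arguments for client.chat.completions.create(...)."""
    return {
        "model": MODEL_NAMES[provider],
        # A single user message, matching the structure used on the slide.
        "messages": [{"role": "user", "content": prompt}],
    }


request = build_request("In one sentence, what is hallucination in AI?")
print(request["model"])
# With a configured client: response = client.chat.completions.create(**request)
```

Keeping the request as a plain dictionary makes it easy to inspect or log before anything is sent to the provider.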

The response

ChatCompletion(id='ns1zcjp-zqrih-937de597af0fd643', choices=[Choice(finish_reason='stop', index=0,
logprobs=None, message=ChatCompletionMessage(content='Hallucination in AI refers to the generation of
false or nonsensical information that is presented as factual, often due to limitations in training
data, model biases, or inference errors.', refusal=None, role='assistant', annotations=None, audio=None,
function_call=None, tool_calls=[]), seed=12469585682595789000)], created=1745920244,
model='deepseek-ai/DeepSeek-V3', object='chat.completion', service_tier=None, system_fingerprint=None,
usage=CompletionUsage(completion_tokens=38, prompt_tokens=14, total_tokens=52,
completion_tokens_details=None, prompt_tokens_details=None), prompt=[])

The response

ChatCompletion(id='ns1zcjp-zqrih-937de597af0fd643',
    choices=[Choice(finish_reason='stop', index=0, logprobs=None,
        message=ChatCompletionMessage(content='Hallucination in AI refers to the
            generation of false or nonsensical information that is presented as
            factual, often due to limitations in training data, model biases, or
            inference errors.',
            refusal=None, role='assistant', annotations=None, audio=None,
            function_call=None, tool_calls=[]), seed=12469585682595789000)],
    created=1745920244,
    model='deepseek-ai/DeepSeek-V3',
    object='chat.completion',
    service_tier=None,
    system_fingerprint=None,
    usage=CompletionUsage(completion_tokens=38, prompt_tokens=14, total_tokens=52,
        completion_tokens_details=None, prompt_tokens_details=None),
    prompt=[])
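Beyond the message text, the response carries metadata such as the model name, the finish reason, and the token counts. The sketch below pulls those fields into a dictionary. To stay runnable offline it uses a stand-in object built with SimpleNamespace that copies the attribute names and values printed above; the summarize function is a hypothetical helper, not part of the openai library.

```python
from types import SimpleNamespace


def summarize(response) -> dict:
    """Collect a few metadata fields from a chat completion response."""
    choice = response.choices[0]
    usage = response.usage
    return {
        "model": response.model,
        "finish_reason": choice.finish_reason,
        "prompt_tokens": usage.prompt_tokens,
        "completion_tokens": usage.completion_tokens,
        "total_tokens": usage.total_tokens,
    }


# Stand-in with the same attribute names and values as the response printed above.
fake_response = SimpleNamespace(
    model="deepseek-ai/DeepSeek-V3",
    choices=[SimpleNamespace(finish_reason="stop", index=0)],
    usage=SimpleNamespace(completion_tokens=38, prompt_tokens=14, total_tokens=52),
)

print(summarize(fake_response))
```

The same function should work on a real response object, since it only relies on attribute access.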

Interpreting the response

print(response.choices)

[Choice(finish_reason='stop', index=0, logprobs=None, message=ChatCompletionMessage(
content='Hallucination in AI refers to the generation of false or nonsensical information
that is presented as factual, often due to limitations in training data, model biases, or
inference errors.', refusal=None, role='assistant', annotations=None, audio=None,
function_call=None, tool_calls=[]), seed=12469585682595789000)]

Interpreting the response

print(response.choices[0])

Choice(finish_reason='stop', index=0, logprobs=None, message=ChatCompletionMessage(
content='Hallucination in AI refers to the generation of false or nonsensical information
that is presented as factual, often due to limitations in training data, model biases, or
inference errors.', refusal=None, role='assistant', annotations=None, audio=None,
function_call=None, tool_calls=[]), seed=12469585682595789000)

Interpreting the response

print(response.choices[0].message)

ChatCompletionMessage(content='Hallucination in AI refers to the generation of false or
nonsensical information that is presented as factual, often due to limitations in training
data, model biases, or inference errors.', refusal=None, role='assistant', annotations=None,
audio=None, function_call=None, tool_calls=[])

print(response.choices[0].message.content)

Hallucination in AI refers to the generation of false or nonsensical information that is
presented as factual, often due to limitations in training data, model biases, or inference
errors.
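The attribute chain response.choices[0].message.content can be wrapped in a small helper so that each call returns just the text. This is a sketch under assumptions: ask is a hypothetical helper name, and StubCompletions is a made-up stand-in for client.chat.completions so the example runs without an API key or network access.

```python
from types import SimpleNamespace


def ask(client, prompt: str, model: str = "deepseek-ai/DeepSeek-V3") -> str:
    """Send one user message and return only the text of the first choice."""
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    if not response.choices:
        raise ValueError("The response contained no choices")
    return response.choices[0].message.content


class StubCompletions:
    """Hypothetical stand-in for client.chat.completions, used only to run offline."""

    def create(self, model, messages):
        text = f"(stub reply to: {messages[-1]['content']})"
        message = SimpleNamespace(role="assistant", content=text)
        return SimpleNamespace(choices=[SimpleNamespace(index=0, message=message)])


stub_client = SimpleNamespace(chat=SimpleNamespace(completions=StubCompletions()))
print(ask(stub_client, "What is hallucination in AI?"))
```

Passing a real OpenAI client configured with a DeepSeek provider in place of stub_client should return the model's actual answer.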

API usage costs

- For DeepSeek, costs depend on:
  - The provider (e.g., together.ai)
  - The model
- Larger input + output = higher cost

1 https://api-docs.deepseek.com/quick_start/pricing
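The relationship between token counts and cost can be sketched as simple arithmetic, assuming the provider bills per million input and output tokens. The prices below are placeholders chosen for illustration, not real rates; check the provider's pricing page for current figures. The token counts are the ones from the usage field of the response shown earlier.

```python
def estimate_cost(
    prompt_tokens: int,
    completion_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate the cost of one request from its token counts."""
    return (
        prompt_tokens * input_price_per_million
        + completion_tokens * output_price_per_million
    ) / 1_000_000


# Token counts from the usage field above; prices are placeholders, NOT real rates.
cost = estimate_cost(14, 38, input_price_per_million=1.00, output_price_per_million=2.00)
print(f"${cost:.6f}")
```

Doubling either the prompt or the completion length raises the estimate proportionally, which is the point made by the last bullet above.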

Let's practice!
