Hyperparameter tuning

Supervised Learning with scikit-learn

George Boorman

Core Curriculum Manager

Hyperparameter tuning

Ridge/lasso regression: choosing alpha

KNN: choosing n_neighbors

Hyperparameters: parameters we specify before fitting the model

- Like alpha and n_neighbors
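A minimal sketch of the difference, assuming synthetic data from `make_regression` and using the regressor variant of KNN (`KNeighborsRegressor`) for illustration. Hyperparameters such as `alpha` and `n_neighbors` are passed to the constructor before `fit`, whereas learned parameters such as `coef_` only exist once the model has been fitted.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.neighbors import KNeighborsRegressor

# Synthetic data, used here only for illustration (assumption)
X, y = make_regression(n_samples=100, n_features=5, noise=10.0, random_state=42)

# Hyperparameters are chosen up front, in the constructor
ridge = Ridge(alpha=0.5)
knn = KNeighborsRegressor(n_neighbors=7)
print(ridge.get_params()["alpha"], knn.get_params()["n_neighbors"])

# Learned parameters such as coef_ only appear after fitting
print(hasattr(ridge, "coef_"))  # False
ridge.fit(X, y)
print(hasattr(ridge, "coef_"))  # True
```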

Choosing the correct hyperparameters

Try lots of different hyperparameter values

Fit all of them separately

See how well they perform

Choose the best-performing values

This is called hyperparameter tuning
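The four steps above can be sketched as a plain loop. This assumes synthetic data from `make_classification`, a handful of arbitrary candidate values for `n_neighbors`, and a single held-out validation split for scoring; it is an illustration of the idea, not how scikit-learn's search classes work internally.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Synthetic classification data (illustrative assumption)
X, y = make_classification(n_samples=300, n_features=6, random_state=42)
# Hold out a validation set to compare the candidate values
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.25, random_state=42)

scores = {}
for k in [1, 3, 5, 7, 9, 11]:
    # Fit one separate model per candidate value
    knn = KNeighborsClassifier(n_neighbors=k)
    knn.fit(X_train, y_train)
    # Record how well it performs (accuracy on the validation set)
    scores[k] = knn.score(X_val, y_val)

# Keep the best-scoring value
best_k = max(scores, key=scores.get)
print(scores)
print("best n_neighbors:", best_k)
```

Relying on one validation split makes the choice sensitive to which rows happen to land in it, which is the motivation for using cross-validation instead.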

Use cross-validation to avoid overfitting to the test set

We can still split the data and perform cross-validation on the training set

Hold out the test set for the final evaluation
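A minimal sketch of this workflow, assuming synthetic data from `make_regression` and an arbitrary list of candidate `alpha` values: the data is split once, every candidate is cross-validated on the training set only, and the test set is used exactly once at the end.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import KFold, cross_val_score, train_test_split

# Synthetic regression data (illustrative assumption)
X, y = make_regression(n_samples=200, n_features=8, noise=15.0, random_state=42)
# Split once; the test set is set aside until the very end
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

kf = KFold(n_splits=5, shuffle=True, random_state=42)
cv_means = {}
for alpha in [0.01, 0.1, 1.0, 10.0, 100.0]:
    # Cross-validation only ever sees the training set
    scores = cross_val_score(Ridge(alpha=alpha), X_train, y_train, cv=kf)
    cv_means[alpha] = np.mean(scores)

best_alpha = max(cv_means, key=cv_means.get)
# Refit on the whole training set, then score the test set once
final_model = Ridge(alpha=best_alpha).fit(X_train, y_train)
print(best_alpha, final_model.score(X_test, y_test))
```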

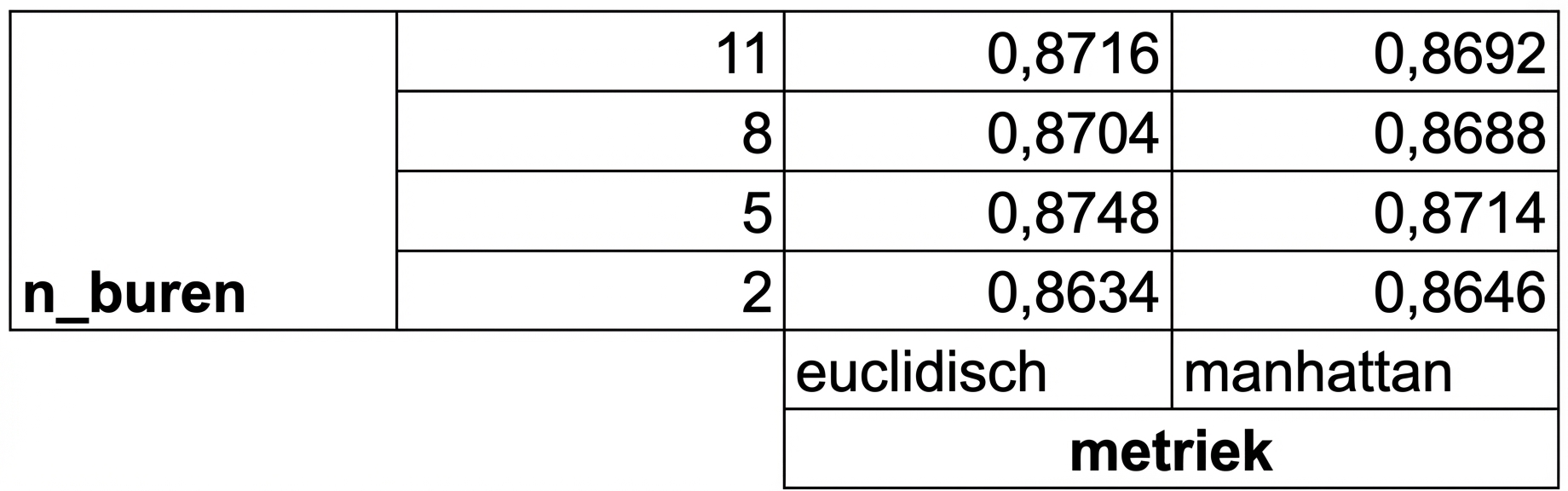

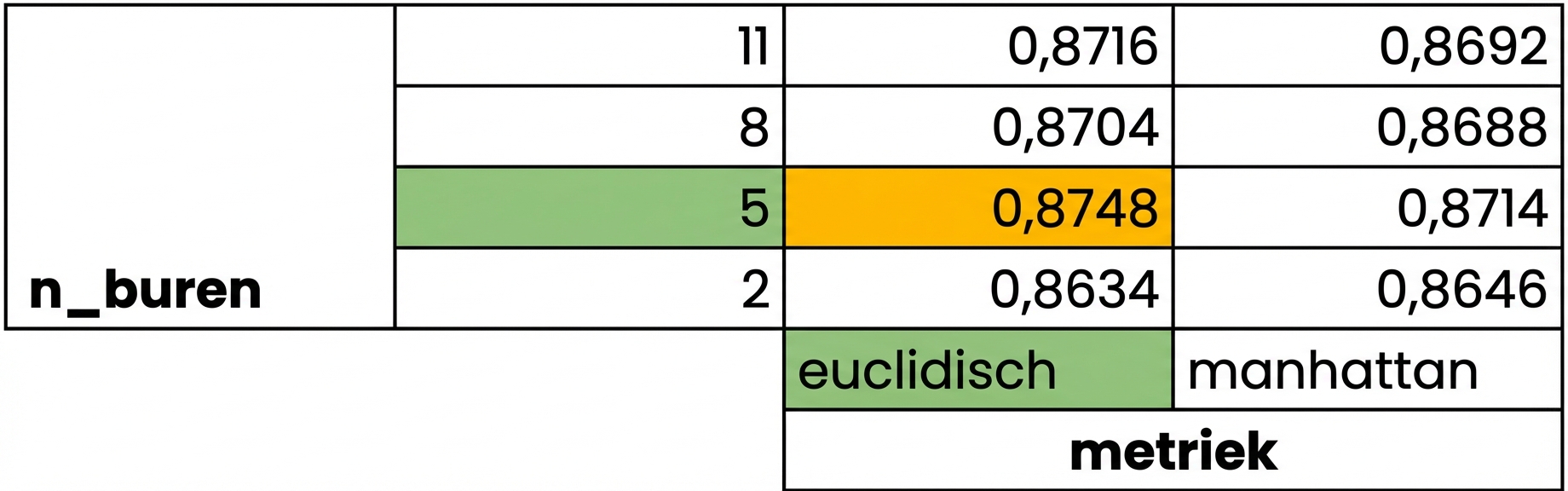

Grid search cross-validation
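Grid search tries every combination of the listed values. A small sketch, assuming a hypothetical grid of three `alpha` values and two solvers, uses scikit-learn's `ParameterGrid` to list the combinations that would each be cross-validated.

```python
from sklearn.model_selection import ParameterGrid

# Hypothetical grid: 3 alpha values x 2 solvers
param_grid = {"alpha": [0.1, 1.0, 10.0], "solver": ["sag", "lsqr"]}

# Grid search evaluates every combination in the grid
combinations = list(ParameterGrid(param_grid))
for params in combinations:
    print(params)
print(len(combinations))  # 6
```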

GridSearchCV in scikit-learn

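import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold

# X_train and y_train come from an earlier train/test split
kf = KFold(n_splits=5, shuffle=True, random_state=42)
# Note: a step of 10 yields a single alpha value, array([0.0001]);
# np.linspace(0.0001, 1, 10) would give ten evenly spaced values
param_grid = {"alpha": np.arange(0.0001, 1, 10), "solver": ["sag", "lsqr"]}
ridge = Ridge()
ridge_cv = GridSearchCV(ridge, param_grid, cv=kf)
ridge_cv.fit(X_train, y_train)
# Best combination and its mean cross-validated R^2
print(ridge_cv.best_params_, ridge_cv.best_score_)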

{'alpha': 0.0001, 'solver': 'sag'}

0.7529912278705785
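A self-contained variant of the search above, assuming synthetic data from `make_regression` and swapping in `np.linspace(0.0001, 1, 10)` so the grid really contains ten `alpha` values. It also shows `cv_results_`, which stores the mean cross-validated score of every combination that was tried; the printed scores depend on the synthetic data, so none are shown here.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold, train_test_split

# Synthetic regression data (illustrative assumption)
X, y = make_regression(n_samples=200, n_features=8, noise=15.0, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

kf = KFold(n_splits=5, shuffle=True, random_state=42)
# Ten evenly spaced alpha values, combined with two solvers
param_grid = {"alpha": np.linspace(0.0001, 1, 10), "solver": ["sag", "lsqr"]}
ridge_cv = GridSearchCV(Ridge(), param_grid, cv=kf)
ridge_cv.fit(X_train, y_train)

# cv_results_ holds one entry per combination (10 x 2 = 20)
results = ridge_cv.cv_results_
for params, mean_score in zip(results["params"], results["mean_test_score"]):
    print(params, round(mean_score, 4))
print(ridge_cv.best_params_, ridge_cv.best_score_)
```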

Limitations and an alternative approach

- 3-fold cross-validation, 1 hyperparameter, 10 values = 3 × 10 = 30 fits

- 10-fold cross-validation, 3 hyperparameters, 10 values each (30 in total) = 10 × 10 × 10 × 10 = 10,000 fits
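The number of fits is the number of folds multiplied by the number of combinations in the grid, so it grows multiplicatively with every hyperparameter added. A small sketch, using hypothetical parameter names `a`, `b` and `c`, counts fits with `ParameterGrid`:

```python
from sklearn.model_selection import ParameterGrid

def n_fits(param_grid, n_folds):
    # Grid search trains one model per fold for every combination
    return len(ParameterGrid(param_grid)) * n_folds

# 1 hyperparameter with 10 values, 3-fold CV
small = {"alpha": list(range(10))}
# 3 hypothetical hyperparameters with 10 values each, 10-fold CV
large = {"a": list(range(10)), "b": list(range(10)), "c": list(range(10))}

print(n_fits(small, 3))   # 30
print(n_fits(large, 10))  # 10000
```

With the default `refit=True`, `GridSearchCV` performs one additional fit of the best combination on the full training set.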

RandomizedSearchCV

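import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import KFold, RandomizedSearchCV

kf = KFold(n_splits=5, shuffle=True, random_state=42)
# np.arange with step 10 yields one alpha value, so this grid has 2 combinations
param_grid = {"alpha": np.arange(0.0001, 1, 10), "solver": ["sag", "lsqr"]}
ridge = Ridge()
# n_iter sets how many combinations are sampled and cross-validated
ridge_cv = RandomizedSearchCV(ridge, param_grid, cv=kf, n_iter=2)
ridge_cv.fit(X_train, y_train)
print(ridge_cv.best_params_, ridge_cv.best_score_)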

{'solver': 'sag', 'alpha': 0.0001}

0.7529912278705785
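To see `n_iter` limit the work, here is a sketch assuming synthetic data from `make_regression` and a grid of 10 `alpha` values × 2 solvers = 20 combinations (using `np.linspace` rather than the `np.arange` call above). With `n_iter=5`, only five of the 20 combinations are sampled and cross-validated; when every parameter is given as a list, scikit-learn samples them without replacement.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import KFold, RandomizedSearchCV, train_test_split

# Synthetic regression data (illustrative assumption)
X, y = make_regression(n_samples=200, n_features=8, noise=15.0, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

kf = KFold(n_splits=5, shuffle=True, random_state=42)
# 10 alpha values x 2 solvers = 20 possible combinations
param_grid = {"alpha": np.linspace(0.0001, 1, 10), "solver": ["sag", "lsqr"]}
# Only n_iter=5 combinations are sampled, so 5 x 5 folds = 25 fits (plus the refit)
ridge_cv = RandomizedSearchCV(Ridge(), param_grid, cv=kf, n_iter=5, random_state=42)
ridge_cv.fit(X_train, y_train)

print(len(ridge_cv.cv_results_["params"]))  # 5
print(ridge_cv.best_params_, ridge_cv.best_score_)
```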

Evaluating on the test set

test_score = ridge_cv.score(X_test, y_test)
print(test_score)

0.7564731534089224
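Calling `score` on the fitted search object scores its `best_estimator_`, which (with the default `refit=True`) is the best combination retrained on the whole training set. A short sketch, assuming synthetic data from `make_regression` and an arbitrary `alpha` grid, checks that both calls give the same test score.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GridSearchCV, KFold, train_test_split

# Synthetic regression data (illustrative assumption)
X, y = make_regression(n_samples=200, n_features=8, noise=15.0, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

kf = KFold(n_splits=5, shuffle=True, random_state=42)
param_grid = {"alpha": [0.01, 0.1, 1.0, 10.0]}
# refit=True (the default) retrains the best setting on all of X_train
ridge_cv = GridSearchCV(Ridge(), param_grid, cv=kf).fit(X_train, y_train)

# Scoring the search object uses best_estimator_ under the hood
test_score = ridge_cv.score(X_test, y_test)
same_score = ridge_cv.best_estimator_.score(X_test, y_test)
print(test_score, same_score)
```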

Let's practice!
