Metrics for language tasks: perplexity and BLEU

Introduction to LLMs in Python

Jasmin Ludolf

Senior Data Science Content Developer, DataCamp

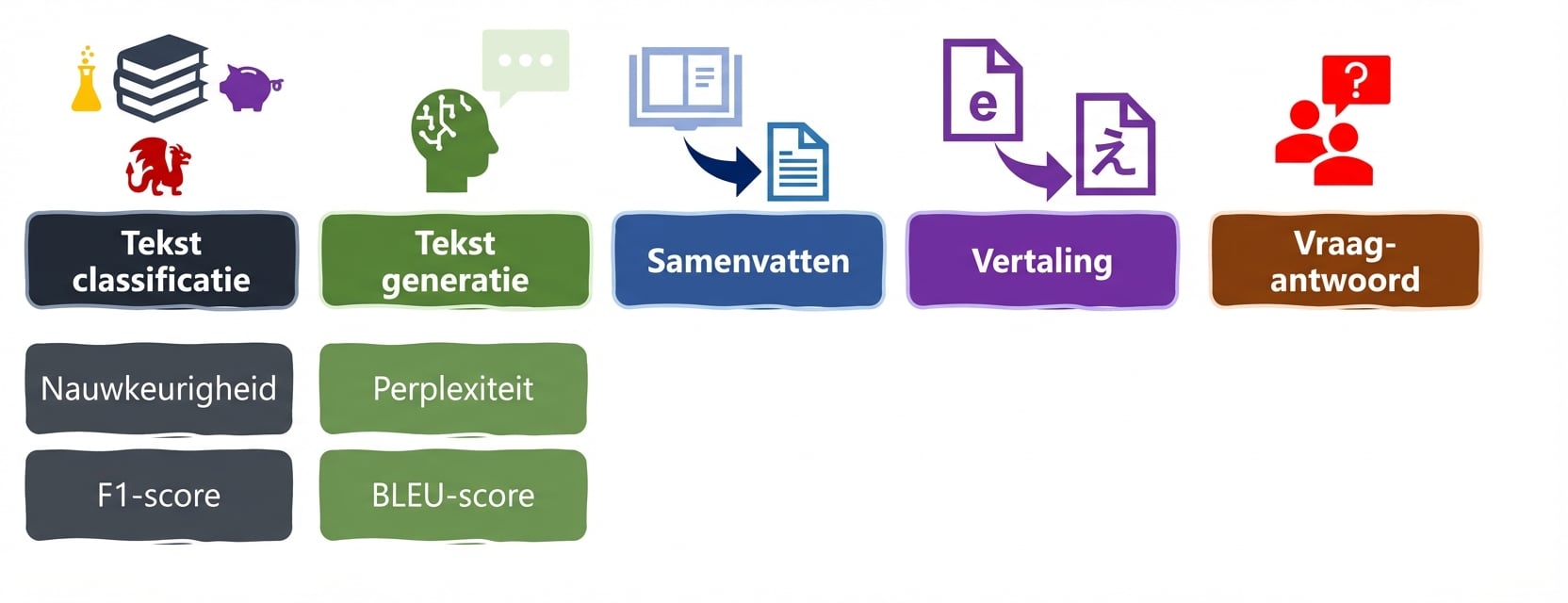

LLM tasks and metrics

Perplexity

- A model's ability to predict the next word accurately and confidently

- Lower perplexity = higher confidence
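To make the link between confidence and perplexity concrete, here is a minimal sketch that computes perplexity as the exponential of the average negative log-probability per token. The helper name `perplexity_from_probs` and the probability lists are hypothetical and made up for illustration; they do not come from a real model.

```python
import math

def perplexity_from_probs(token_probs):
    """Perplexity from the probability a model assigned to each actual next token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    # Average negative log-likelihood per token
    avg_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    # Perplexity is the exponential of that average
    return math.exp(avg_nll)

# Hypothetical per-token probabilities (illustrative values only)
confident = [0.9, 0.8, 0.95, 0.85]   # model is fairly sure of each next token
uncertain = [0.2, 0.1, 0.3, 0.25]    # model spreads its probability widely

print(perplexity_from_probs(confident))
print(perplexity_from_probs(uncertain))
```

The confident sequence yields the lower value. As a sanity check, a model that assigns probability 0.25 to every token has a perplexity of 4, as if it were choosing uniformly among four options at each step.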

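    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Assumption: the slides do not show how tokenizer and model are created; GPT-2 is used here for illustration
    tokenizer = AutoTokenizer.from_pretrained("gpt2")
    model = AutoModelForCausalLM.from_pretrained("gpt2")

    input_text = "Latest research findings in Antarctica show"

    # Encode the prompt, generate text and decode it
    input_text_ids = tokenizer.encode(input_text, return_tensors="pt")
    output = model.generate(input_text_ids, max_length=20)
    generated_text = tokenizer.decode(output[0], skip_special_tokens=True)

    # Example generated text:
    # "Latest research findings in Antarctica show that the ice sheet is melting faster than previously thought."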

Perplexity output

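    import evaluate

    perplexity = evaluate.load("perplexity", module_type="metric")
    # Note: the metric documents `predictions` as a list of strings, e.g. [generated_text]
    results = perplexity.compute(predictions=generated_text, model_id="gpt2")
    print(results)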

    {'perplexities': [245.63299560546875, 520.3106079101562, ....],
     'mean_perplexity': 2867.7229790460497}

    print(results["mean_perplexity"])

    2867.7229790460497

- Compare against baseline results

BLEU

Measures translation quality against human references

- Predictions: outputs of the LLM

- References: human references

    import evaluate

    bleu = evaluate.load("bleu")

    input_text = "Latest research findings in Antarctica show"
    # One list of acceptable references per prediction
    references = [["Latest research findings in Antarctica show significant ice loss due to climate change.",
                   "Latest research findings in Antarctica show that the ice sheet is melting faster than previously thought."]]
    generated_text = "Latest research findings in Antarctica show that the ice sheet is melting faster than previously thought."

BLEU output

    results = bleu.compute(predictions=[generated_text], references=references)
    print(results)

    {'bleu': 1.0,
     'precisions': [1.0, 1.0, 1.0, 1.0],
     'brevity_penalty': 1.0,
     'length_ratio': 1.2142857142857142,
     'translation_length': 17,
     'reference_length': 14}

- Score from 0 to 1: closer to 1 = greater similarity to the references
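To show where a score between 0 and 1 comes from, here is a simplified sketch of the BLEU idea: clipped n-gram precisions for n = 1 to 4, combined by a geometric mean and scaled by a brevity penalty. It is not the `evaluate` implementation. The function `simple_bleu` is hypothetical, it tokenizes on whitespace only, and it measures the brevity penalty against the shortest reference, both of which are assumptions of this sketch.

```python
import math
from collections import Counter

def ngram_counts(tokens, n):
    """Count every n-gram (as a tuple) in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def simple_bleu(prediction, references, max_n=4):
    """Simplified sentence-level BLEU for one prediction and several references."""
    pred = prediction.split()                  # assumption: whitespace tokenization
    refs = [ref.split() for ref in references]
    precisions = []
    for n in range(1, max_n + 1):
        pred_counts = ngram_counts(pred, n)
        # For each n-gram, the most times it appears in any single reference
        max_ref = Counter()
        for ref in refs:
            for gram, count in ngram_counts(ref, n).items():
                max_ref[gram] = max(max_ref[gram], count)
        # Clip prediction counts so repeated n-grams are not over-rewarded
        clipped = sum(min(count, max_ref[gram]) for gram, count in pred_counts.items())
        total = sum(pred_counts.values())
        precisions.append(clipped / total if total else 0.0)
    if min(precisions) == 0:
        return 0.0
    # Brevity penalty: penalize predictions shorter than the shortest reference
    ref_len = min(len(ref) for ref in refs)
    bp = 1.0 if len(pred) > ref_len else math.exp(1 - ref_len / len(pred))
    # Geometric mean of the n-gram precisions, scaled by the brevity penalty
    return bp * math.exp(sum(math.log(p) for p in precisions) / max_n)

refs = [
    "Latest research findings in Antarctica show significant ice loss due to climate change.",
    "Latest research findings in Antarctica show that the ice sheet is melting faster than previously thought.",
]
exact = "Latest research findings in Antarctica show that the ice sheet is melting faster than previously thought."
partial = "Latest research findings in Antarctica show that ice is melting"

print(simple_bleu(exact, refs))
print(simple_bleu(partial, refs))
```

A prediction identical to one of the references scores 1.0, while the shorter, partially matching sentence lands strictly between 0 and 1 because some of its longer n-grams are missing from both references and it incurs a brevity penalty.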

Let's practice!
