Optimizing document retrieval

Retrieval Augmented Generation (RAG) with LangChain

Meri Nova

Machine Learning Engineer

The R in RAG...

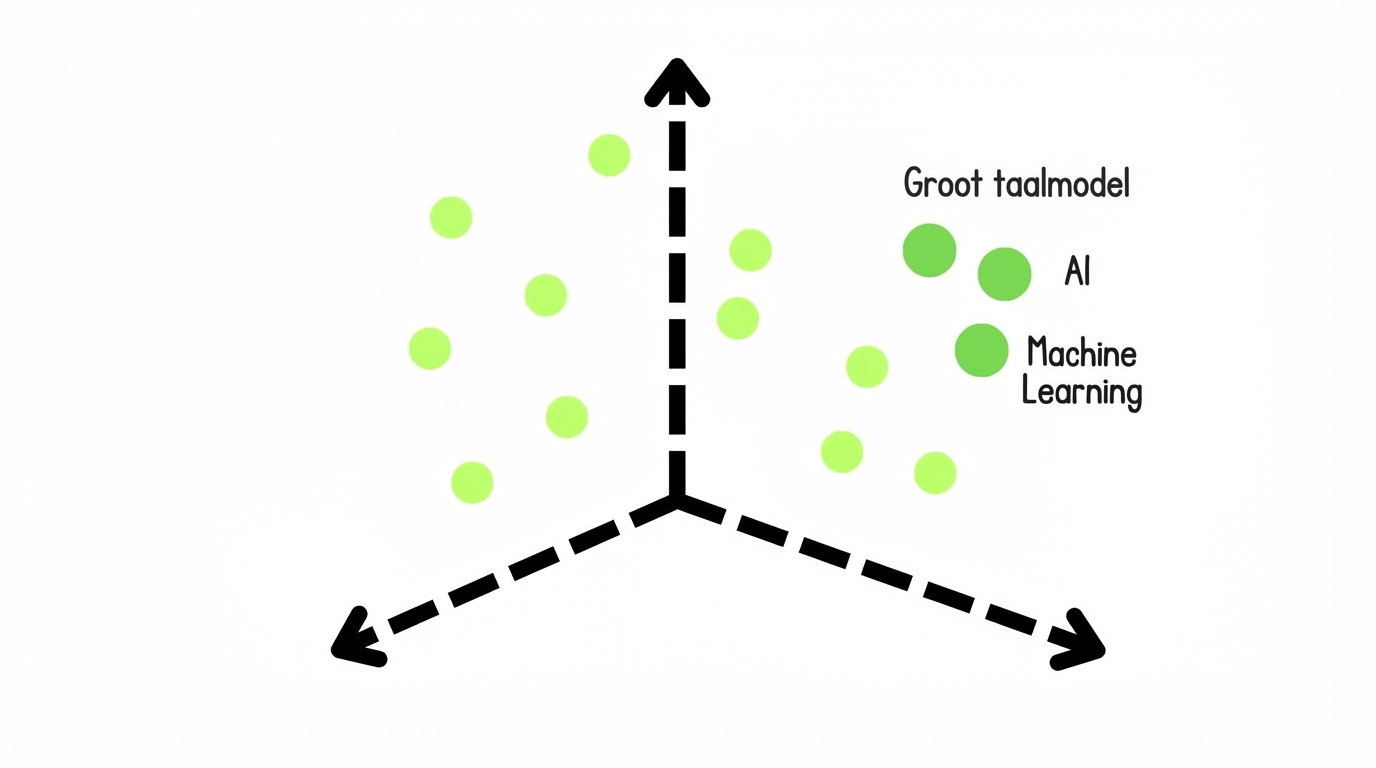

Dense

Encode each chunk as a single vector whose components are mostly non-zero

- Pros: Captures semantic meaning

- Cons: Computationally expensive

Sparse

Encode each chunk by word matching, giving a vector whose components are mostly zero

- Pros: Precise, explainable, handles rare words well

- Cons: Limited generalizability (synonyms and paraphrases are not matched)
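To make "mostly zero components" concrete, here is a minimal sketch of a sparse bag-of-words encoding in plain Python. The three example chunks, the `tokenize` helper, and the count-based weighting are illustrative assumptions, not part of LangChain.

```python
import re

chunks = [
    "Python was created by Guido van Rossum and released in 1991.",
    "Python is a popular language for machine learning (ML).",
    "The PyTorch library is a popular Python library for AI and ML.",
]

def tokenize(text):
    # Illustrative tokenizer: lowercase, keep runs of letters and digits.
    return re.findall(r"[a-z0-9]+", text.lower())

# One vector position per distinct word across all chunks.
vocabulary = sorted({tok for chunk in chunks for tok in tokenize(chunk)})

def sparse_encode(text):
    # Bag-of-words counts: non-zero only where the chunk contains the word.
    tokens = tokenize(text)
    return [tokens.count(word) for word in vocabulary]

vectors = [sparse_encode(chunk) for chunk in chunks]
for vec in vectors:
    print(f"{vec.count(0)} of {len(vec)} components are zero")
```

Even with this tiny vocabulary, more than half of each vector is zero. With a realistic vocabulary of many thousands of words, almost every component would be zero, whereas a dense embedding of the same chunk would have a fixed, much smaller number of dimensions that are nearly all non-zero.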

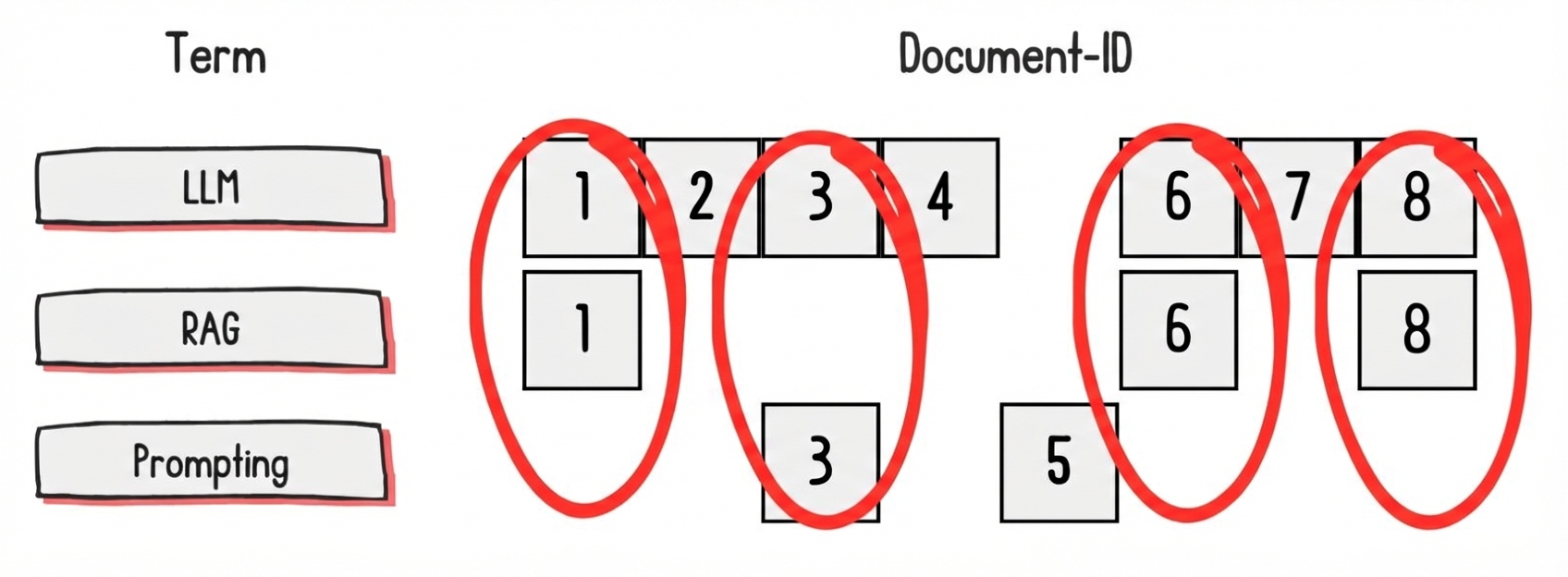

Sparse retrieval methods

TF-IDF: Encodes documents using the words that make each document unique
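As a rough illustration of how TF-IDF favors distinctive words, the sketch below uses one common textbook variant, term frequency times `log(N / df)`. The example documents and helper names are assumptions for illustration, and real libraries often use smoothed variants of this formula.

```python
import math
import re
from collections import Counter

docs = [
    "Python was created by Guido van Rossum and released in 1991.",
    "Python is a popular language for machine learning (ML).",
    "The PyTorch library is a popular Python library for AI and ML.",
]

def tokenize(text):
    # Illustrative tokenizer: lowercase, keep runs of letters and digits.
    return re.findall(r"[a-z0-9]+", text.lower())

tokenized = [tokenize(d) for d in docs]
n_docs = len(tokenized)

# Document frequency: the number of documents each word appears in.
doc_freq = Counter(word for tokens in tokenized for word in set(tokens))

def tf_idf(tokens):
    # Weight = (share of the document taken by the word) * log(N / df).
    counts = Counter(tokens)
    return {
        word: (count / len(tokens)) * math.log(n_docs / doc_freq[word])
        for word, count in counts.items()
    }

weights = tf_idf(tokenized[0])
for word in ("guido", "and", "python"):
    print(word, round(weights[word], 4))
```

Here "python" occurs in every document, so its weight in the first document is zero, while "guido", which occurs only there, receives the highest weight of the three words printed.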

BM25: Dampens the effect of very frequent words in the encoding
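The dampening can be seen in the term-frequency part of the standard Okapi BM25 formula. The sketch below isolates that part and ignores document-length normalization (equivalent to `b = 0`); the function name and the choice `k1 = 1.5` are assumptions for illustration.

```python
def bm25_tf_component(tf, k1=1.5):
    # Term-frequency part of BM25 with length normalization switched off.
    # It grows with tf but can never exceed k1 + 1.
    return tf * (k1 + 1) / (tf + k1)

for tf in (1, 2, 5, 20, 100):
    print(tf, round(bm25_tf_component(tf), 3))
```

A word that appears 100 times contributes less than 2.5 times as much as a word that appears once, so a single heavily repeated word cannot dominate the score.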

BM25 retrieval

    from langchain_community.retrievers import BM25Retriever

    chunks = [
        "Python was created by Guido van Rossum and released in 1991.",
        "Python is a popular language for machine learning (ML).",
        "The PyTorch library is a popular Python library for AI and ML.",
    ]

    # Build a BM25 index over the raw text chunks; return the top 3 matches
    bm25_retriever = BM25Retriever.from_texts(chunks, k=3)

    results = bm25_retriever.invoke("When was Python created?")

    print("Most Relevant Document:")
    print(results[0].page_content)

Output:

    Most Relevant Document:
    Python was created by Guido van Rossum and released in 1991.

- "Python was created by Guido van Rossum and released in 1991."

- "Python is a popular language for machine learning (ML)."

- "The PyTorch library is a popular Python library for AI and ML."
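To see why the first chunk ranks highest for this query, here is a self-contained sketch of Okapi BM25 scoring over the same three chunks. The tokenizer, the non-negative IDF variant, and the parameters `k1 = 1.5` and `b = 0.75` are assumptions chosen for illustration; they are not necessarily what `BM25Retriever` uses internally.

```python
import math
import re
from collections import Counter

chunks = [
    "Python was created by Guido van Rossum and released in 1991.",
    "Python is a popular language for machine learning (ML).",
    "The PyTorch library is a popular Python library for AI and ML.",
]

def tokenize(text):
    # Illustrative tokenizer: lowercase, keep runs of letters and digits.
    return re.findall(r"[a-z0-9]+", text.lower())

def bm25_scores(query, docs, k1=1.5, b=0.75):
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs
    doc_freq = Counter(w for t in tokenized for w in set(t))
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        # Longer-than-average documents are penalized via this factor.
        norm = 1 - b + b * len(tokens) / avg_len
        score = 0.0
        for word in tokenize(query):
            if word not in counts:
                continue
            df = doc_freq[word]
            # Rare words get a high IDF, common words a low one.
            idf = math.log(1 + (n_docs - df + 0.5) / (df + 0.5))
            tf = counts[word]
            score += idf * tf * (k1 + 1) / (tf + k1 * norm)
        scores.append(score)
    return scores

scores = bm25_scores("When was Python created?", chunks)
best = max(range(len(chunks)), key=scores.__getitem__)
print(chunks[best])
```

All three chunks contain "Python", which therefore carries little weight. Only the first chunk also contains "was" and "created", two words that are rare in this small corpus, so it scores well above the others and is printed.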

BM25 in RAG

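    from langchain_core.output_parsers import StrOutputParser
    from langchain_core.runnables import RunnablePassthrough

    # `chunks` must be Document objects here (e.g. from a text splitter);
    # `prompt` and `llm` are assumed to be defined earlier.
    retriever = BM25Retriever.from_documents(documents=chunks, k=5)

    chain = (
        {"context": retriever, "question": RunnablePassthrough()}
        | prompt
        | llm
        | StrOutputParser()
    )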

1 https://www.datacamp.com/blog/what-is-retrieval-augmented-generation-rag

    print(chain.invoke("How can LLM hallucination impact a RAG application?"))

Output:

    The RAG application can generate responses that are off-topic or inaccurate.

Let's practice!
