Encoder transformers

Transformer Models with PyTorch

James Chapman

Curriculum Manager, DataCamp

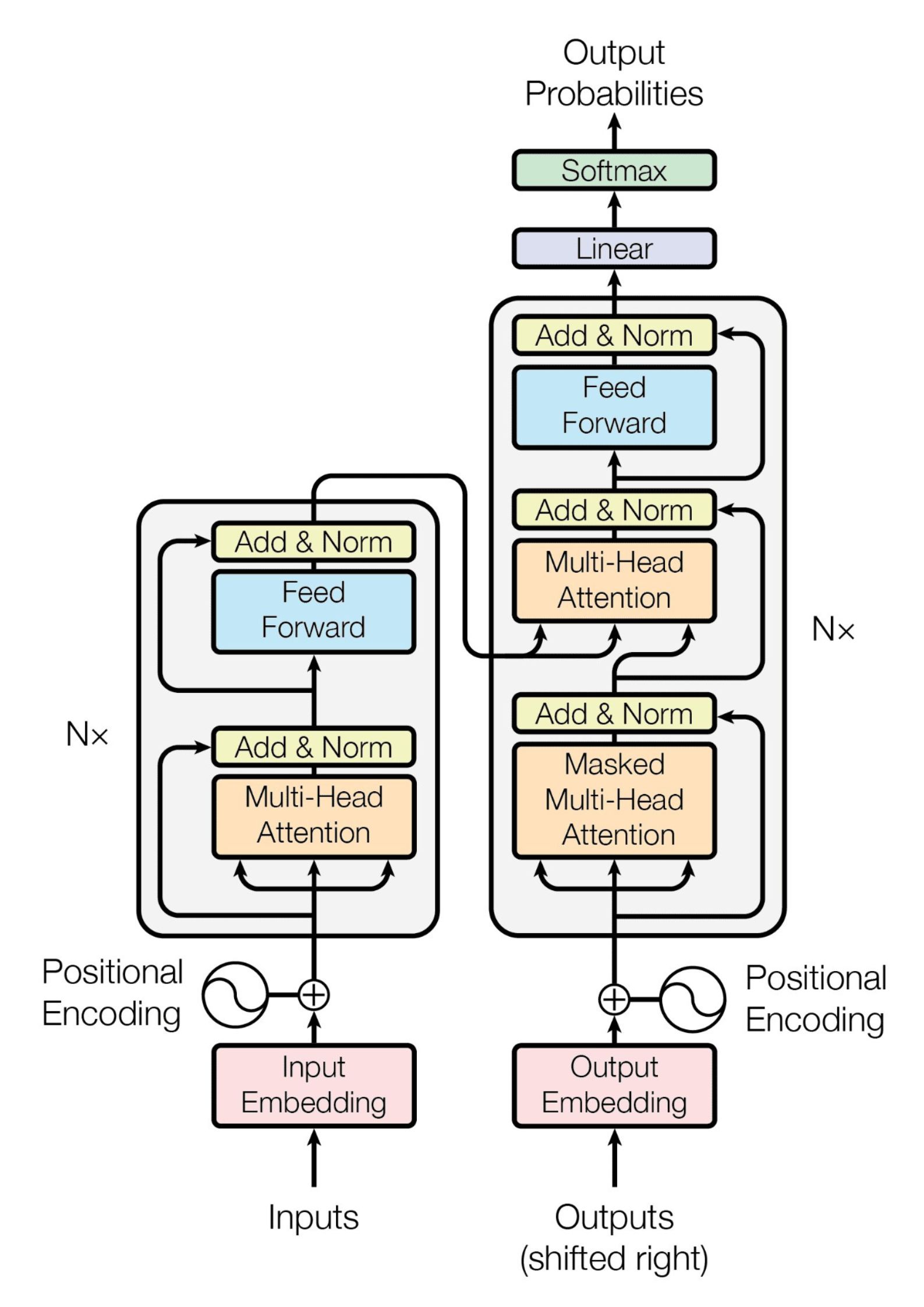

The original transformer


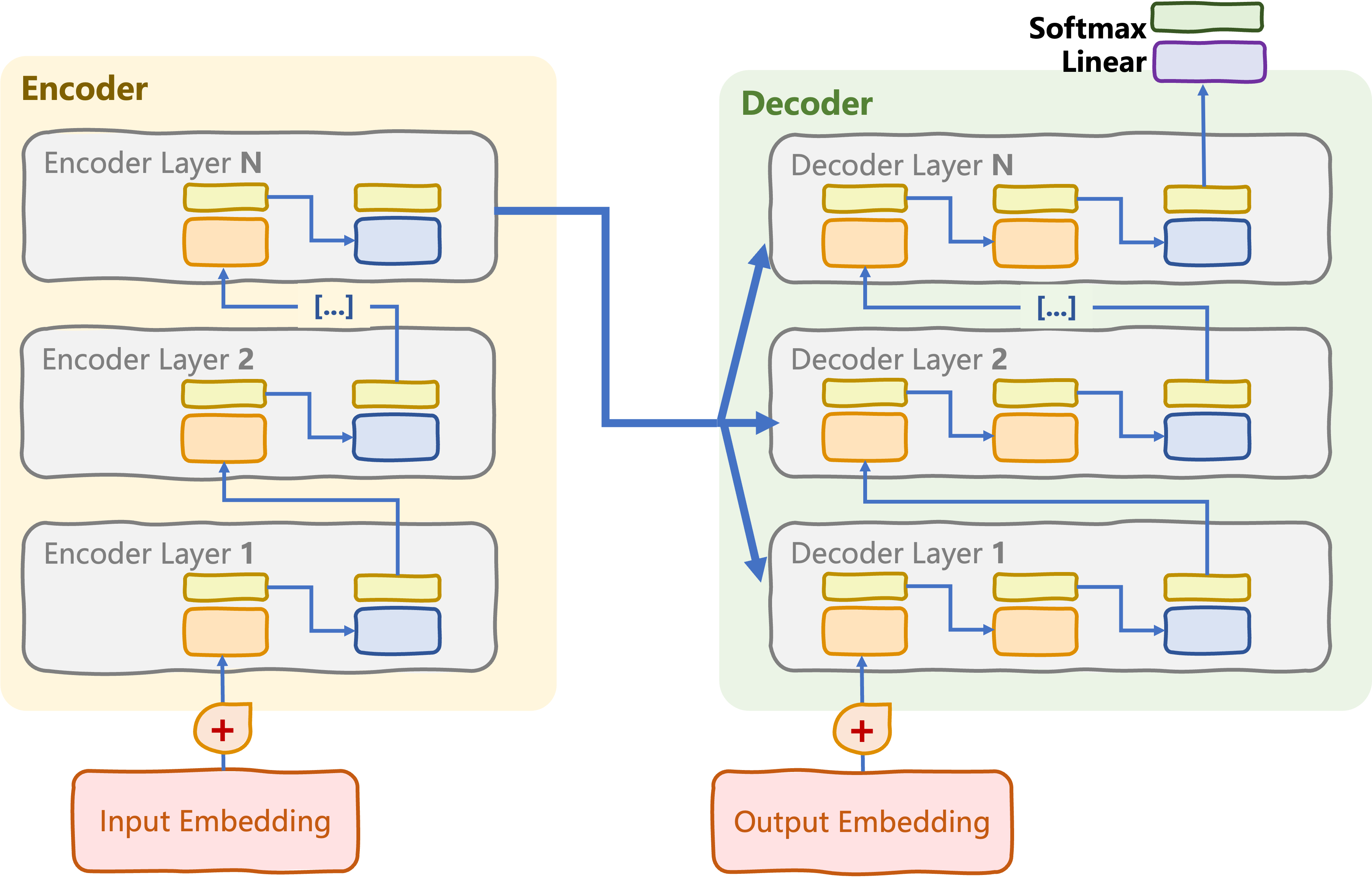

Encoder-only transformers

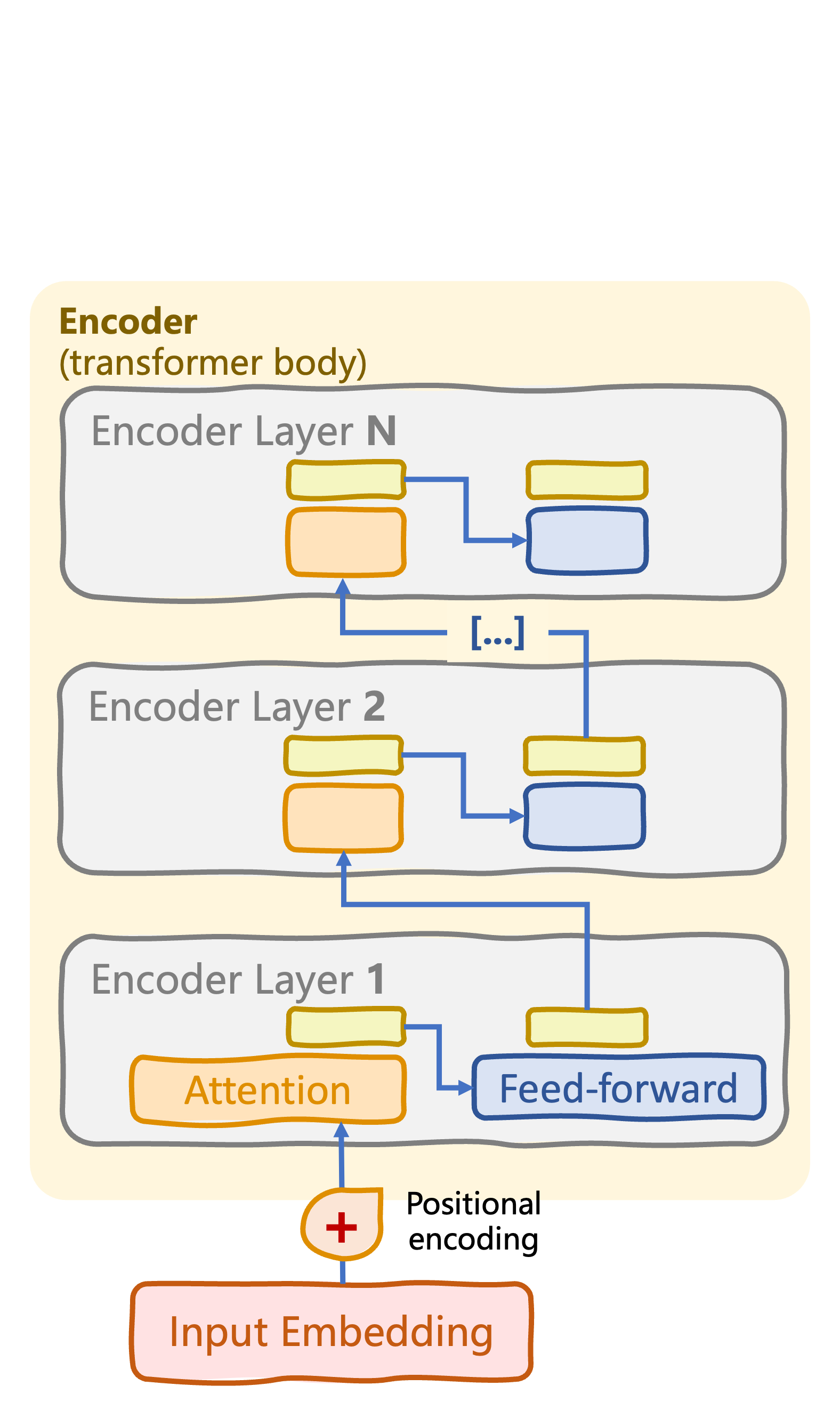

Transformer body: an encoder stack of N encoder layers

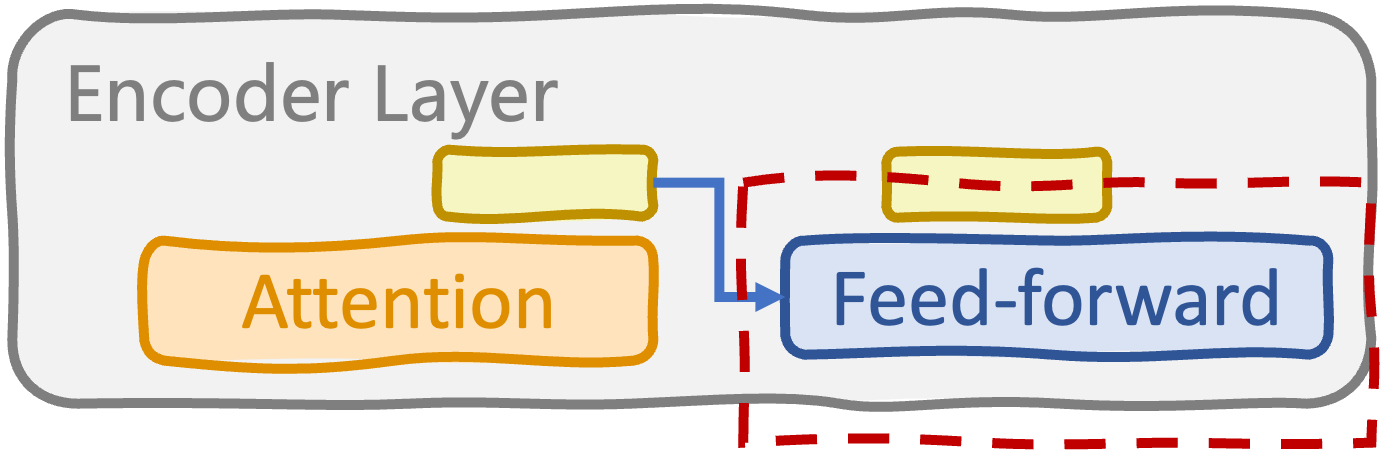

Encoder layer

- Multi-head self-attention

- Feed-forward (sub)layers

- Layer normalizations, dropouts

Transformer head

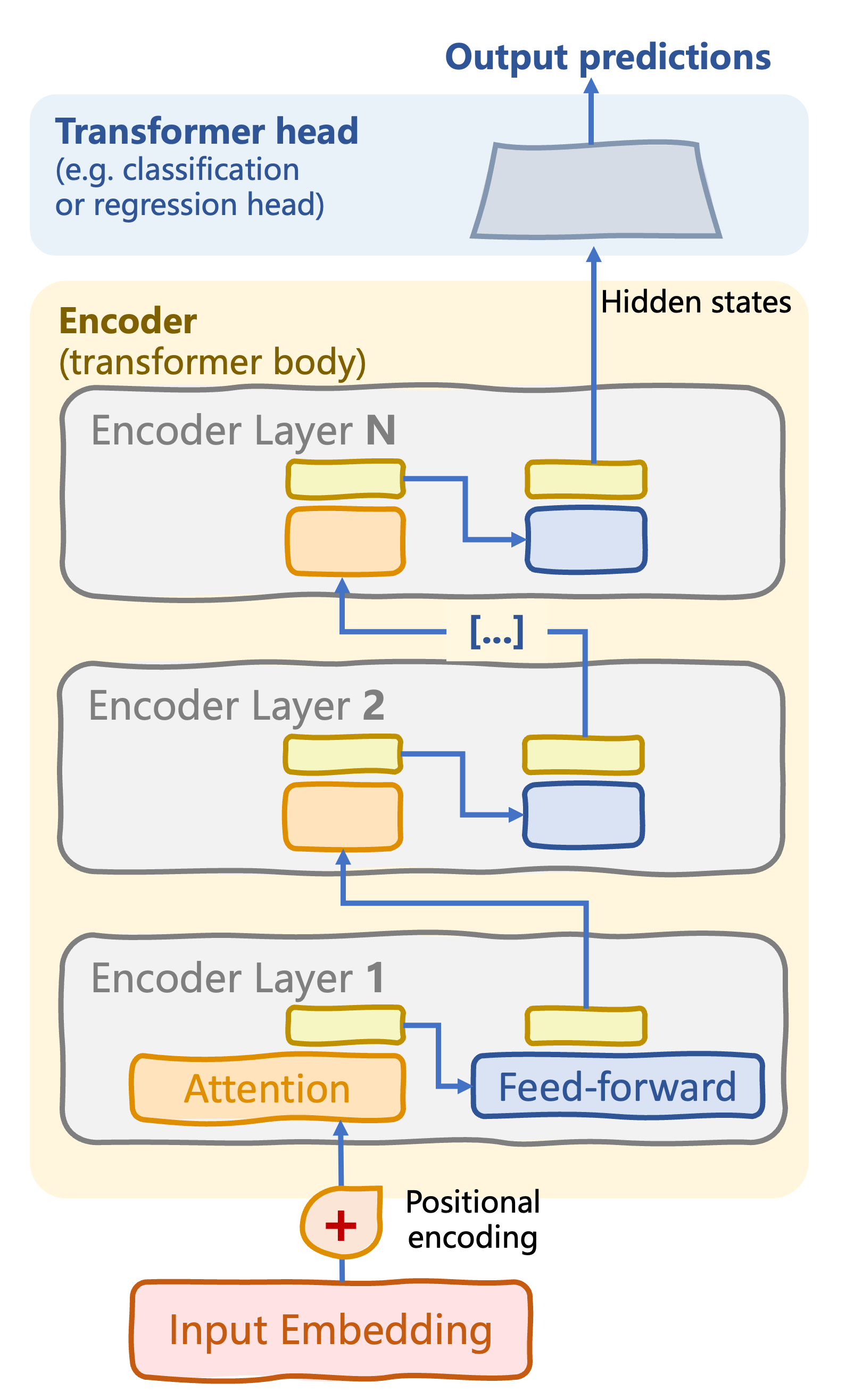


Transformer head: turns the encoded input into an output prediction

Supervised tasks: classification, regression

Feed-forward sublayer in encoder layers

```python
import torch.nn as nn

class FeedForwardSubLayer(nn.Module):
    def __init__(self, d_model, d_ff):
        super().__init__()
        self.fc1 = nn.Linear(d_model, d_ff)  # expand to the inner dimension d_ff
        self.fc2 = nn.Linear(d_ff, d_model)  # project back to d_model
        self.relu = nn.ReLU()

    def forward(self, x):
        return self.fc2(self.relu(self.fc1(x)))
```

- Two fully connected layers with a ReLU activation in between

- d_ff: the dimension between the two linear layers

- forward(): processes the attention output to capture complex, nonlinear patterns

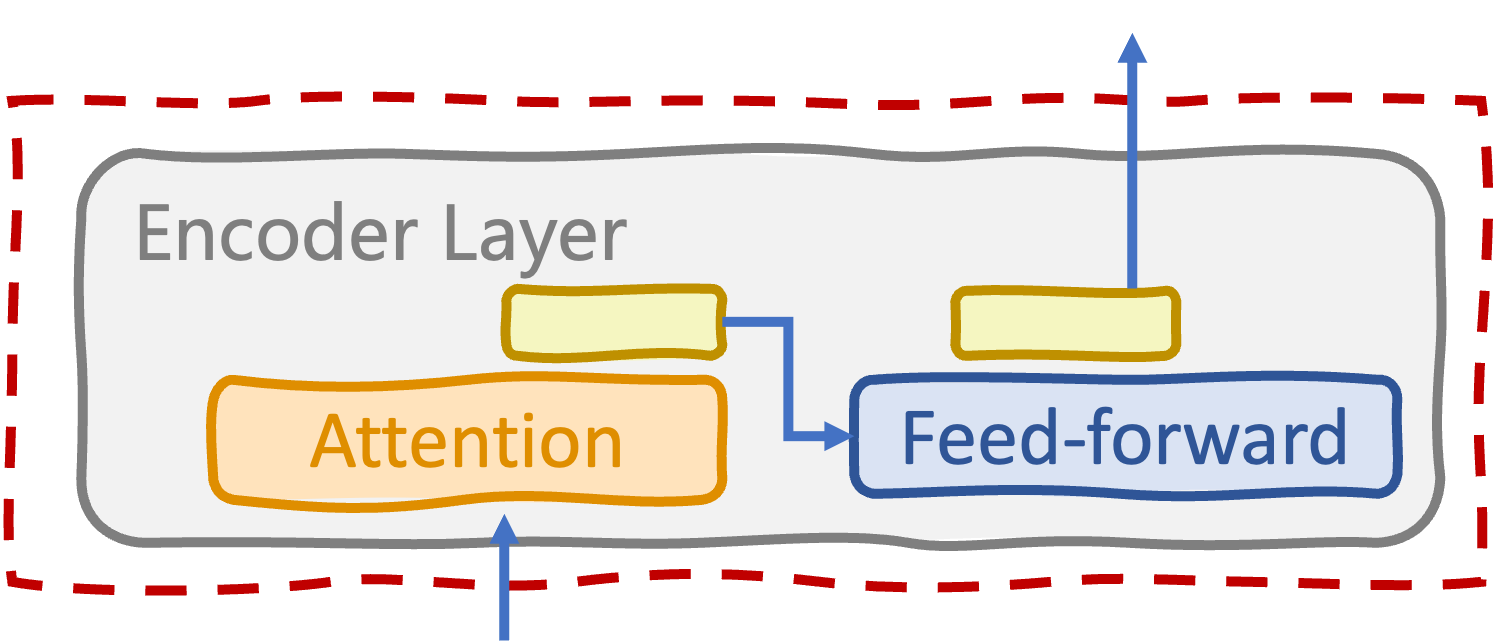
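Because fc1 and fc2 act only on the last dimension, the feed-forward sublayer processes every token position independently and returns a tensor of the same shape as its input. The sketch below repeats the class from the slide and checks both properties on random data; the sizes (d_model=16, d_ff=64) are arbitrary illustration values, not course settings.

```python
import torch
import torch.nn as nn

class FeedForwardSubLayer(nn.Module):
    def __init__(self, d_model, d_ff):
        super().__init__()
        self.fc1 = nn.Linear(d_model, d_ff)   # d_model -> d_ff
        self.fc2 = nn.Linear(d_ff, d_model)   # d_ff -> d_model
        self.relu = nn.ReLU()

    def forward(self, x):
        return self.fc2(self.relu(self.fc1(x)))

torch.manual_seed(0)
d_model, d_ff = 16, 64            # illustrative sizes
ff = FeedForwardSubLayer(d_model, d_ff)

x = torch.randn(2, 5, d_model)    # (batch, seq_len, d_model)
out = ff(x)
print(out.shape)                  # torch.Size([2, 5, 16])

# Position-wise: feeding one token on its own matches that token's
# slice of the full-sequence output (up to float rounding)
token_out = ff(x[:, 3, :])
print(torch.allclose(out[:, 3, :], token_out, atol=1e-5))

# Parameter count: two weight matrices plus two bias vectors
n_params = sum(p.numel() for p in ff.parameters())
print(n_params == 2 * d_model * d_ff + d_ff + d_model)  # True
```

The inner dimension d_ff is usually larger than d_model, so most of the sublayer's parameters sit in the two weight matrices.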

Encoder layer

```python
import torch.nn as nn

# MultiHeadAttention is defined elsewhere (not shown here);
# FeedForwardSubLayer is the class defined above
class EncoderLayer(nn.Module):
    def __init__(self, d_model, num_heads, d_ff, dropout):
        super().__init__()
        self.self_attn = MultiHeadAttention(d_model, num_heads)
        self.ff_sublayer = FeedForwardSubLayer(d_model, d_ff)
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)
        self.dropout = nn.Dropout(dropout)

    def forward(self, x, src_mask):
        # Self-attention: queries, keys, and values all come from x
        attn_output = self.self_attn(x, x, x, src_mask)
        x = self.norm1(x + self.dropout(attn_output))  # residual + layer norm
        ff_output = self.ff_sublayer(x)
        x = self.norm2(x + self.dropout(ff_output))    # residual + layer norm
        return x
```

- Multi-head self-attention

- Feed-forward sublayer

- Layer normalizations and dropouts

forward(): the mask (src_mask) prevents padding tokens from being processed
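Both sublayers in EncoderLayer.forward() share the same pattern: the sublayer output goes through dropout, is added back to the sublayer's input (a residual connection), and the sum is layer-normalized. Normalizing after the residual addition is often called post-norm. The sketch below isolates that step using random stand-in tensors instead of real attention output; it shows that dropout is a no-op in eval mode and that each token vector comes out with roughly zero mean and unit variance.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
d_model = 8                                # illustrative size
norm = nn.LayerNorm(d_model)
dropout = nn.Dropout(0.1)

x = torch.randn(2, 4, d_model)             # sublayer input: (batch, seq_len, d_model)
sublayer_out = torch.randn(2, 4, d_model)  # stand-in for attention or feed-forward output

dropout.eval()                             # in eval mode, dropout passes values through unchanged
y = norm(x + dropout(sublayer_out))        # residual connection, then layer normalization

# LayerNorm normalizes each token vector over its d_model features
token_means = y.mean(dim=-1)
token_vars = ((y - y.mean(dim=-1, keepdim=True)) ** 2).mean(dim=-1)
print(token_means.abs().max().item() < 1e-5)                    # True
print(torch.allclose(token_vars, torch.ones(2, 4), atol=1e-3))  # True
```

During training (dropout.train()), dropout instead zeroes random elements and rescales the rest, which regularizes the sublayer outputs before they join the residual path.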

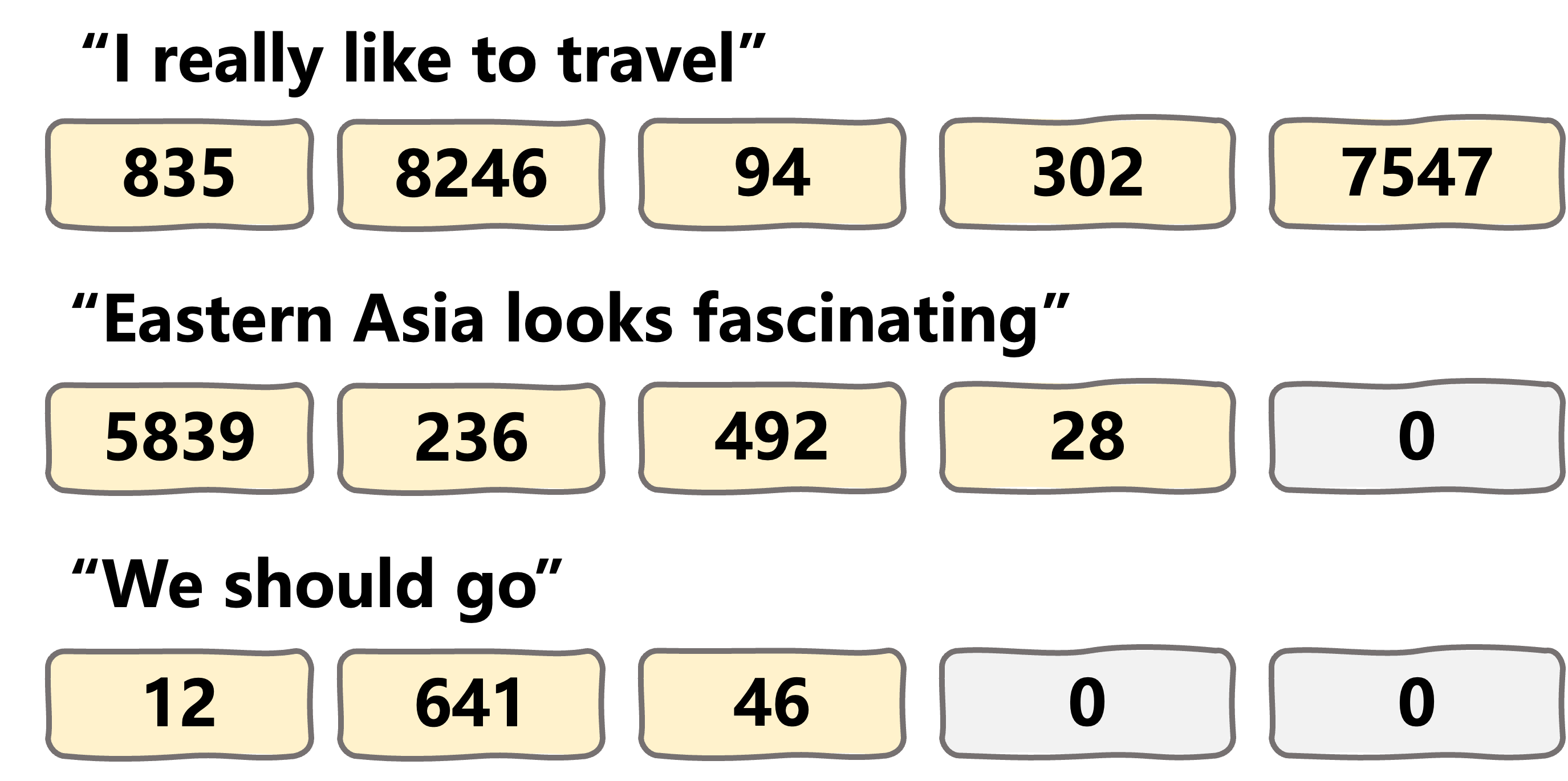

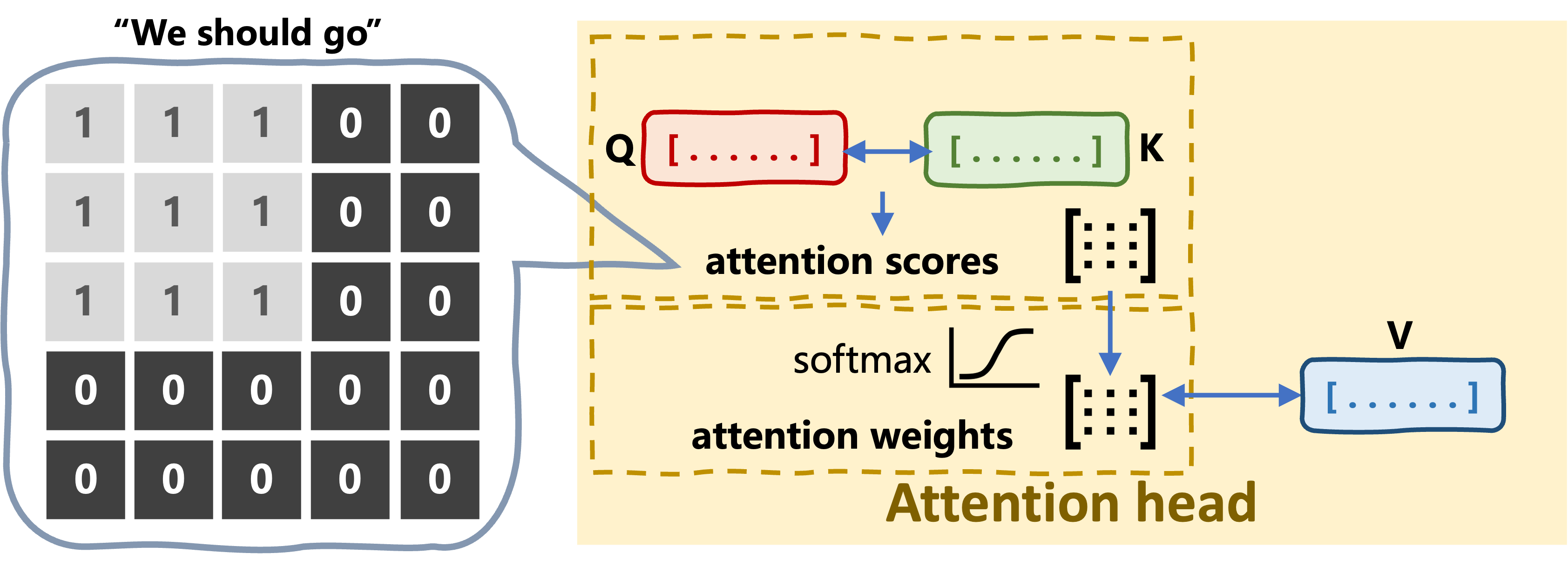

Masking the attention process
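The slides do not show how src_mask is built or applied. One common convention, assumed here rather than confirmed by the source, is a boolean mask that is True at real tokens, shaped (batch, 1, 1, seq_len) so it broadcasts over heads and query positions; inside attention, scores at masked key positions are replaced by a large negative number before the softmax. The padding id PAD_IDX = 0 and the token ids are hypothetical.

```python
import torch

PAD_IDX = 0  # hypothetical padding token id

# Two sequences of length 5; the second ends in two padding tokens
token_ids = torch.tensor([[5, 7, 2, 9, 4],
                          [3, 8, 6, 0, 0]])

# True = real token, False = padding; (batch, 1, 1, seq_len) broadcasts
# over attention heads and query positions
src_mask = (token_ids != PAD_IDX).unsqueeze(1).unsqueeze(2)
print(src_mask.shape)  # torch.Size([2, 1, 1, 5])

# Stand-in attention scores: (batch, num_heads, seq_len, seq_len)
torch.manual_seed(0)
scores = torch.randn(2, 4, 5, 5)

# Masked key positions get a very large negative score before softmax
scores = scores.masked_fill(src_mask == 0, -1e9)
weights = torch.softmax(scores, dim=-1)

# Padding columns receive zero attention weight; each row still sums to 1
print(weights[1, :, :, 3:].max().item())  # 0.0
print(torch.allclose(weights.sum(dim=-1), torch.ones(2, 4, 5)))  # True
```

A large finite value such as -1e9 is used instead of float("-inf") so that a fully masked row would not produce NaNs in the softmax.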

Encoder transformer: body

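```python
import torch.nn as nn

# InputEmbeddings and PositionalEncoding are defined elsewhere (not shown here);
# EncoderLayer is the class defined above
class TransformerEncoder(nn.Module):
    def __init__(self, vocab_size, d_model, num_layers, num_heads, d_ff,
                 dropout, max_seq_length):
        super().__init__()
        self.embedding = InputEmbeddings(vocab_size, d_model)
        self.positional_encoding = PositionalEncoding(d_model, max_seq_length)
        # num_layers encoder layers, each with its own parameters
        self.layers = nn.ModuleList(
            [EncoderLayer(d_model, num_heads, d_ff, dropout) for _ in range(num_layers)]
        )

    def forward(self, x, src_mask):
        x = self.embedding(x)            # token ids -> embedding vectors
        x = self.positional_encoding(x)  # add position information
        for layer in self.layers:
            x = layer(x, src_mask)
        return x
```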
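For comparison, PyTorch ships built-in counterparts of this body: nn.TransformerEncoderLayer and nn.TransformerEncoder. The sketch below swaps those in for the hand-written EncoderLayer and ModuleList loop; it is a substitution for illustration, not the course's code. To stay short it uses a plain nn.Embedding and omits positional encoding. Note that the built-in src_key_padding_mask flips the convention: it is True at positions to ignore. Sizes and token ids are hypothetical.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
vocab_size, d_model, num_heads, d_ff, num_layers = 100, 32, 4, 64, 2  # illustrative
PAD_IDX = 0  # hypothetical padding id

embedding = nn.Embedding(vocab_size, d_model, padding_idx=PAD_IDX)
layer = nn.TransformerEncoderLayer(
    d_model=d_model, nhead=num_heads, dim_feedforward=d_ff,
    dropout=0.1, batch_first=True,  # tensors are (batch, seq_len, d_model)
)  # defaults: ReLU activation and post-norm (norm_first=False), like EncoderLayer
encoder = nn.TransformerEncoder(layer, num_layers=num_layers)  # copies layer num_layers times

token_ids = torch.tensor([[5, 7, 2, 9, 4],
                          [3, 8, 6, 0, 0]])
padding_mask = token_ids == PAD_IDX  # True marks positions attention should ignore

encoder.eval()
with torch.no_grad():
    hidden = encoder(embedding(token_ids), src_key_padding_mask=padding_mask)
print(hidden.shape)  # torch.Size([2, 5, 32])
```

As with the hand-written stack, the output keeps one d_model-sized hidden state per token, ready for a task-specific head.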

Encoder transformer: head

```python
import torch.nn as nn
import torch.nn.functional as F

class ClassifierHead(nn.Module):
    def __init__(self, d_model, num_classes):
        super().__init__()
        self.fc = nn.Linear(d_model, num_classes)

    def forward(self, x):
        logits = self.fc(x)
        # Log-probabilities over the classes
        return F.log_softmax(logits, dim=-1)

class RegressionHead(nn.Module):
    def __init__(self, d_model, output_dim):
        super().__init__()
        self.fc = nn.Linear(d_model, output_dim)

    def forward(self, x):
        # Raw outputs, no activation
        return self.fc(x)
```
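Applied directly to the encoder output of shape (batch, seq_len, d_model), the classification head produces one class distribution per token, which suits token-level tasks such as NER. For sequence-level tasks such as sentiment analysis, the token states are typically pooled into one vector per sequence first. The sketch below uses mean pooling, which is an assumption (using the first token's state is another common choice), with a random stand-in for the encoder output.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class ClassifierHead(nn.Module):
    def __init__(self, d_model, num_classes):
        super().__init__()
        self.fc = nn.Linear(d_model, num_classes)

    def forward(self, x):
        logits = self.fc(x)
        return F.log_softmax(logits, dim=-1)

torch.manual_seed(0)
batch, seq_len, d_model, num_classes = 2, 5, 32, 3  # illustrative sizes
hidden = torch.randn(batch, seq_len, d_model)       # stand-in for encoder output
head = ClassifierHead(d_model, num_classes)

# Token-level (e.g. NER): one distribution per token
token_log_probs = head(hidden)
print(token_log_probs.shape)  # torch.Size([2, 5, 3])

# Sequence-level (e.g. sentiment): mean-pool over tokens first
# (padding is ignored here for brevity)
seq_log_probs = head(hidden.mean(dim=1))
print(seq_log_probs.shape)    # torch.Size([2, 3])
print(seq_log_probs.exp().sum(dim=-1))  # each row sums to 1
```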

Classification head

- Tasks: text classification, sentiment analysis, NER, extractive QA, etc.

- fc: fully connected layer

- Converts the encoder hidden states into log-probabilities over num_classes classes (via log_softmax)
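Because ClassifierHead returns log-probabilities rather than probabilities, exponentiating recovers the probabilities, and the matching training loss is the negative log-likelihood. The sketch below, with hypothetical logits and labels, checks that log_softmax followed by F.nll_loss gives the same value as F.cross_entropy on the raw logits.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 3)            # 4 examples, num_classes = 3 (illustrative)
targets = torch.tensor([0, 2, 1, 2])  # hypothetical gold labels

log_probs = F.log_softmax(logits, dim=-1)  # what ClassifierHead returns

probs = log_probs.exp()               # back to probabilities
preds = log_probs.argmax(dim=-1)      # predicted class per example

# log_softmax + NLL loss equals cross-entropy on the raw logits
loss_nll = F.nll_loss(log_probs, targets)
loss_ce = F.cross_entropy(logits, targets)
print(torch.allclose(loss_nll, loss_ce))  # True
```

This is a design choice: a head could equally return raw logits and be trained with F.cross_entropy; returning log-probabilities instead pairs it with F.nll_loss.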

Regression head

- Tasks: estimating readability, language complexity, etc.

- output_dim is 1 when predicting a single numeric value
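With output_dim=1, the regression head maps each pooled sequence vector to one number, such as a readability score. The sketch below pools a random stand-in for the encoder output by averaging over tokens (an assumption, as in other pooling setups), then trains against hypothetical target scores with mean squared error, a common regression loss.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class RegressionHead(nn.Module):
    def __init__(self, d_model, output_dim):
        super().__init__()
        self.fc = nn.Linear(d_model, output_dim)

    def forward(self, x):
        return self.fc(x)  # raw values: no activation for regression

torch.manual_seed(0)
batch, seq_len, d_model = 3, 6, 32              # illustrative sizes
hidden = torch.randn(batch, seq_len, d_model)   # stand-in for encoder output
head = RegressionHead(d_model, output_dim=1)

pooled = hidden.mean(dim=1)                     # (batch, d_model), mean pooling assumed
scores = head(pooled)                           # (batch, 1): one number per text
print(scores.shape)  # torch.Size([3, 1])

targets = torch.tensor([[0.2], [0.7], [0.5]])   # hypothetical readability scores
loss = F.mse_loss(scores, targets)
loss.backward()                                 # gradients flow into head.fc
print(head.fc.weight.grad.shape)  # torch.Size([1, 32])
```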

Let's practice!
