Hyperparameter tuning

Winning a Kaggle Competition in Python

Yauhen Babakhin

Kaggle Grandmaster

Iterations

| Model | Validation RMSE | Public LB RMSE | Public LB position |
|---|---|---|---|
| Simple mean | 9.986 | 9.409 | 1449 / 1500 |
| Group mean | 9.978 | 9.407 | 1411 / 1500 |
| Gradient Boosting | 5.996 | 4.595 | 1109 / 1500 |
| Add hour feature | 5.553 | 4.352 | 1068 / 1500 |
| Add distance feature | 5.268 | 4.103 | 1006 / 1500 |
| ... | ... | ... | ... |

Iterations

| Model | Validation RMSE | Public LB RMSE | Public LB position |
|---|---|---|---|
| Simple mean | 9.986 | 9.409 | 1449 / 1500 |
| Group mean | 9.978 | | |
| Gradient Boosting | 5.996 | 4.595 | 1109 / 1500 |
| Add hour feature | 5.553 | | |
| Add distance feature | 5.268 | 4.103 | 1006 / 1500 |
| ... | ... | ... | ... |

Hyperparameter optimization

| Competition type | Feature engineering | Hyperparameter optimization |
|---|---|---|
| Classic machine learning | +++ | + |
| Deep learning | - | +++ |

Ridge regression

Least squares linear regression

$$Loss = \sum_{i=1}^{N}{(y_i - \hat{y}_i)^2} \to \min$$

Ridge regression

$$Loss = \sum_{i=1}^{N}{(y_i - \hat{y}_i)^2} + \alpha\sum_{j=1}^{K}{w_j^2} \to \min$$

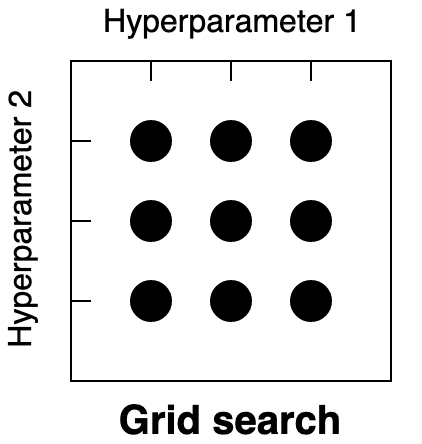

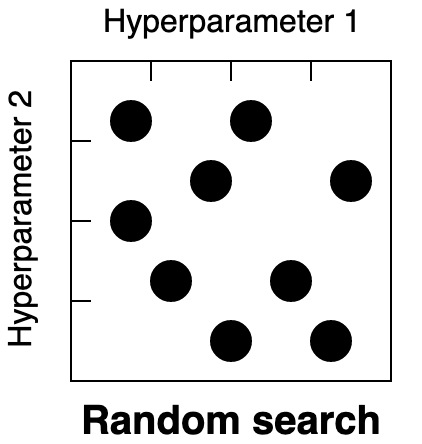
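The two loss functions above can be compared on a small example. This is a minimal sketch: the toy data, the weight vector, and the helper names `least_squares_loss` and `ridge_loss` are assumptions made for illustration and are not part of the course material.

```python
import numpy as np


def least_squares_loss(y_true, y_pred):
    """Sum of squared residuals (the least squares loss)."""
    return float(np.sum((y_true - y_pred) ** 2))


def ridge_loss(y_true, y_pred, weights, alpha):
    """Least squares loss plus alpha times the sum of squared weights."""
    penalty = alpha * float(np.sum(weights ** 2))
    return least_squares_loss(y_true, y_pred) + penalty


# Toy data and weights, chosen only for illustration
X = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
y = np.array([1.0, 2.0, 3.0])
w = np.array([0.5, 0.0])

y_hat = X @ w  # linear model predictions (no intercept in this sketch)

ols = least_squares_loss(y, y_hat)
ridge = ridge_loss(y, y_hat, w, alpha=10.0)

print(f"least squares loss: {ols:.2f}")
print(f"ridge loss (alpha=10): {ridge:.2f}")
```

With the same predictions, the ridge loss is never smaller than the least squares loss, and the gap grows with `alpha` and with the size of the weights. That is why a larger `alpha` pushes the fitted weights toward zero.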

Hyperparameter optimization strategies

- Grid search. Choose a predefined grid of hyperparameter values
- Random search. Choose the search space of hyperparameter values
- Bayesian optimization. Choose the search space of hyperparameter values
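The difference between a predefined grid and a search space can be sketched as follows. The `alpha` values come from the grid search slide; including `fit_intercept` as a second hyperparameter, the log-uniform range for `alpha`, and the number of trials are assumptions made for illustration.

```python
import itertools

import numpy as np

# Grid search: every combination of explicitly listed values is a candidate
grid = {"alpha": [0.01, 0.1, 1, 10], "fit_intercept": [True, False]}
grid_candidates = [
    dict(zip(grid, values)) for values in itertools.product(*grid.values())
]

# Random search: only the ranges are fixed; candidates are sampled from them
rng = np.random.default_rng(seed=42)
n_trials = 5  # assumed budget
random_candidates = [
    {
        # Sample alpha log-uniformly between 0.01 and 10
        "alpha": float(10 ** rng.uniform(-2, 1)),
        "fit_intercept": bool(rng.choice([True, False])),
    }
    for _ in range(n_trials)
]

print(len(grid_candidates), "grid candidates")
print(len(random_candidates), "random candidates")
```

Bayesian optimization starts from the same kind of search space, but chooses each new candidate based on the scores of the candidates already evaluated, so it is not shown in this sketch.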

Grid search

```python
from sklearn.linear_model import Ridge

# Possible alpha values
alpha_grid = [0.01, 0.1, 1, 10]

results = {}

# For each value in the grid
for candidate_alpha in alpha_grid:
    # Create a model with a specific alpha value
    ridge_regression = Ridge(alpha=candidate_alpha)

    # Find the validation score for this model

    # Save the results for each alpha value
    results[candidate_alpha] = validation_score
```
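The slide leaves the validation step open. One way to fill it in, as a sketch only: the synthetic data, the single holdout split, and the use of RMSE as the validation score are assumptions here, not the course's actual data or validation scheme.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

# Synthetic regression data (assumption: stands in for the competition data)
rng = np.random.default_rng(seed=0)
X = rng.normal(size=(200, 5))
true_w = np.array([1.5, -2.0, 0.0, 0.5, 3.0])
y = X @ true_w + rng.normal(scale=0.5, size=200)

# Simple holdout split (assumption: any local validation scheme would do)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, random_state=0
)

alpha_grid = [0.01, 0.1, 1, 10]
results = {}

for candidate_alpha in alpha_grid:
    ridge_regression = Ridge(alpha=candidate_alpha)
    ridge_regression.fit(X_train, y_train)

    # Validation RMSE for this alpha value
    predictions = ridge_regression.predict(X_val)
    validation_score = float(np.sqrt(mean_squared_error(y_val, predictions)))

    results[candidate_alpha] = validation_score

# Lower RMSE is better, so pick the alpha with the smallest score
best_alpha = min(results, key=results.get)
print(results)
print("best alpha:", best_alpha)
```

Because RMSE is an error metric, the best candidate is the one with the minimum score; for a metric where higher is better, `max` would be used instead.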

Let's practice!
