Aggregating data

Transforming and analyzing data with Microsoft Fabric

Luis Silva

Solution Architect - Data & AI

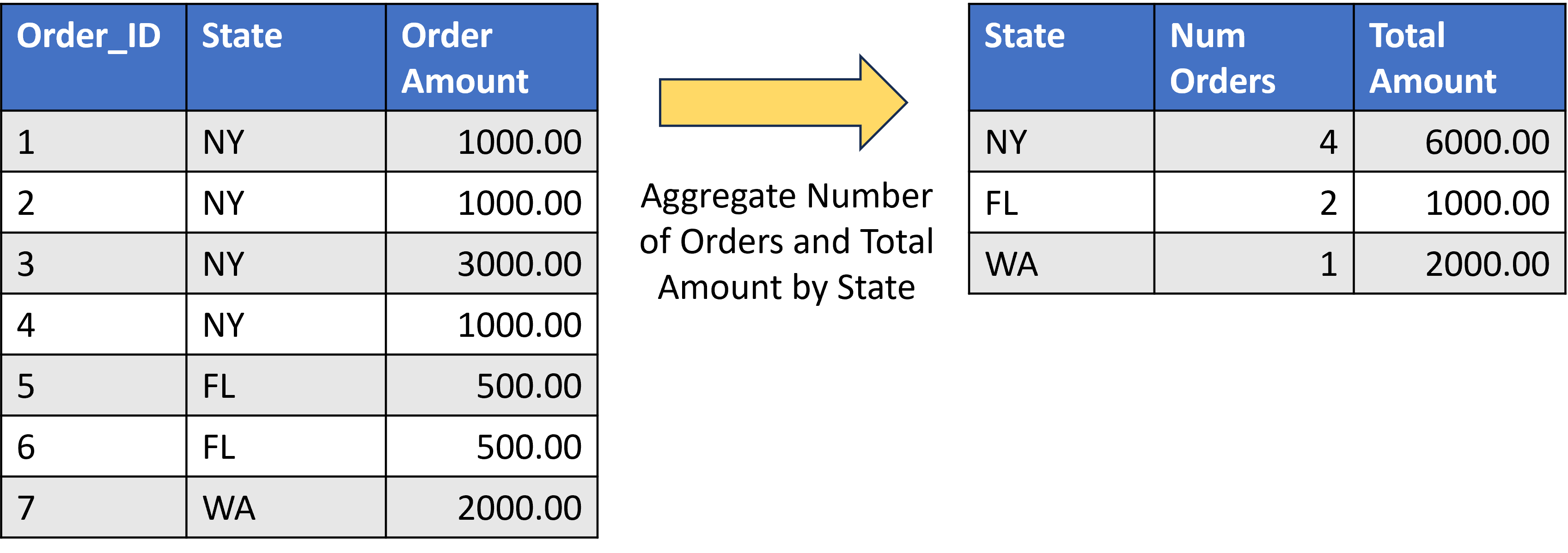

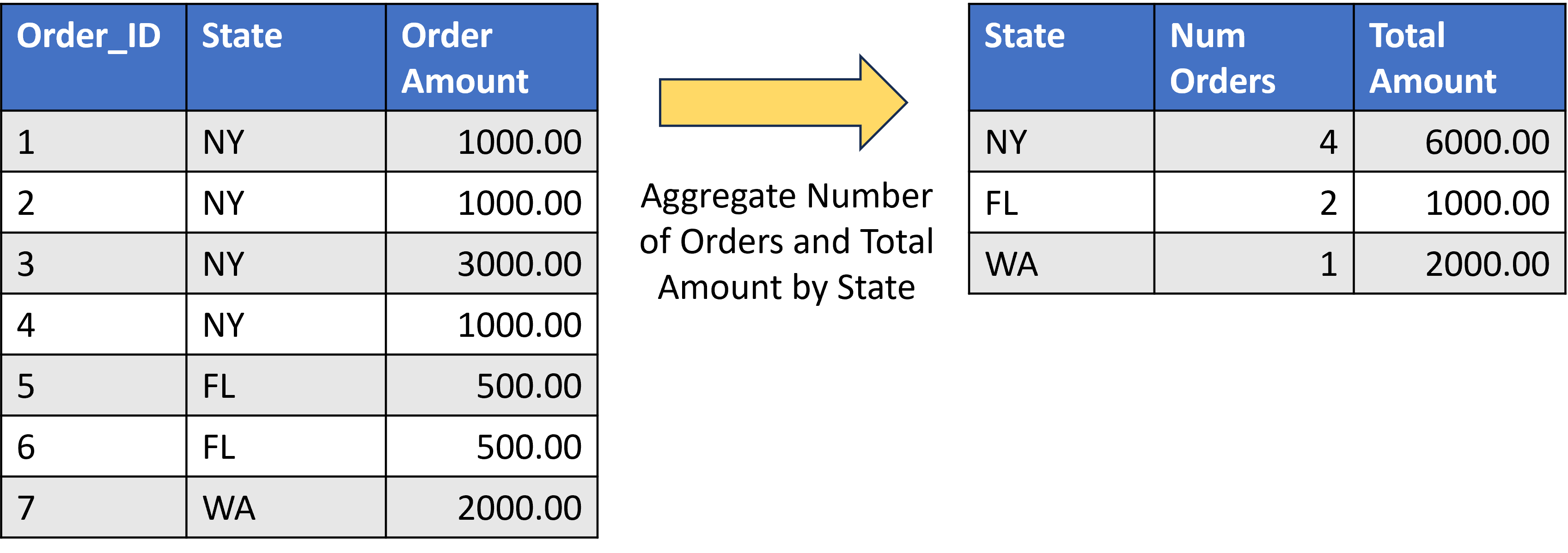

When should you aggregate data?

- Reduce the number of rows in a dataset by using aggregate functions to produce summaries, such as:

  - Count

  - Sum

  - Average

  - Maximum

  - Minimum
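To make the summary idea concrete, here is a minimal plain-Python sketch in which each of the five aggregates turns five detail rows into a single value. The order amounts are made up for illustration.

```python
from statistics import mean

# Hypothetical order amounts: five detail rows
order_amounts = [120.0, 80.0, 45.5, 300.0, 54.5]

# Each aggregate collapses all rows into one summary value
summary = {
    "count": len(order_amounts),
    "sum": sum(order_amounts),
    "average": mean(order_amounts),
    "maximum": max(order_amounts),
    "minimum": min(order_amounts),
}
print(summary)
```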


Tools for data aggregation

Aggregating data with SQL

- Commonly used SQL aggregate functions: SUM(), COUNT(), AVG(), MIN(), MAX()

- Usually combined with GROUP BY

- Statistical functions: STDEV(), VAR()
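STDEV() and VAR() are easy to misread because there are two conventions. In T-SQL they return the sample statistics (dividing by n - 1), while STDEVP() and VARP() return the population versions (dividing by n). The sketch below uses made-up values and Python's standard statistics module as a stand-in for the SQL engine to show the difference.

```python
from statistics import pstdev, pvariance, stdev, variance

# Hypothetical sample of values
values = [2, 4, 4, 4, 5, 5, 7, 9]

# Sample statistics divide by n - 1 (what T-SQL STDEV() and VAR() return)
sample_var = variance(values)
sample_sd = stdev(values)

# Population statistics divide by n (what T-SQL STDEVP() and VARP() return)
pop_var = pvariance(values)
pop_sd = pstdev(values)

print(sample_var, sample_sd, pop_var, pop_sd)
```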

SELECT
    <unaggregated columns>,
    <aggregate function>(<aggregated column>)
FROM
    <table>
GROUP BY
    <unaggregated columns>;
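Conceptually, GROUP BY splits the rows into one bucket per distinct value of the unaggregated columns, then applies the aggregate function to each bucket. The plain-Python sketch below illustrates that idea with hypothetical rows and SUM() as the aggregate. It is not how a SQL engine is implemented internally.

```python
from collections import defaultdict

# Hypothetical rows: (state, order_amount)
rows = [("CA", 100.0), ("NY", 50.0), ("CA", 25.0), ("TX", 10.0), ("NY", 5.0)]

# GROUP BY state: put each row into the bucket for its state ...
groups = defaultdict(list)
for state, amount in rows:
    groups[state].append(amount)

# ... then aggregate each bucket: one output row per distinct state
result = {state: sum(amounts) for state, amounts in groups.items()}
print(result)  # {'CA': 125.0, 'NY': 55.0, 'TX': 10.0}
```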

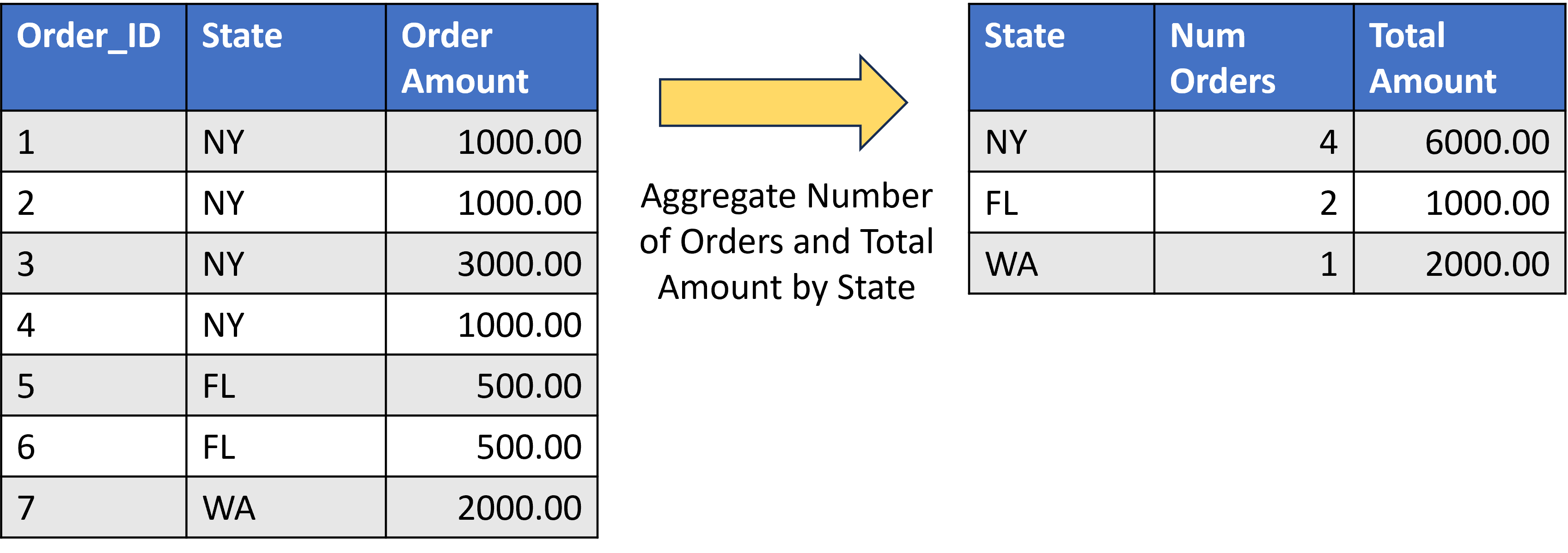

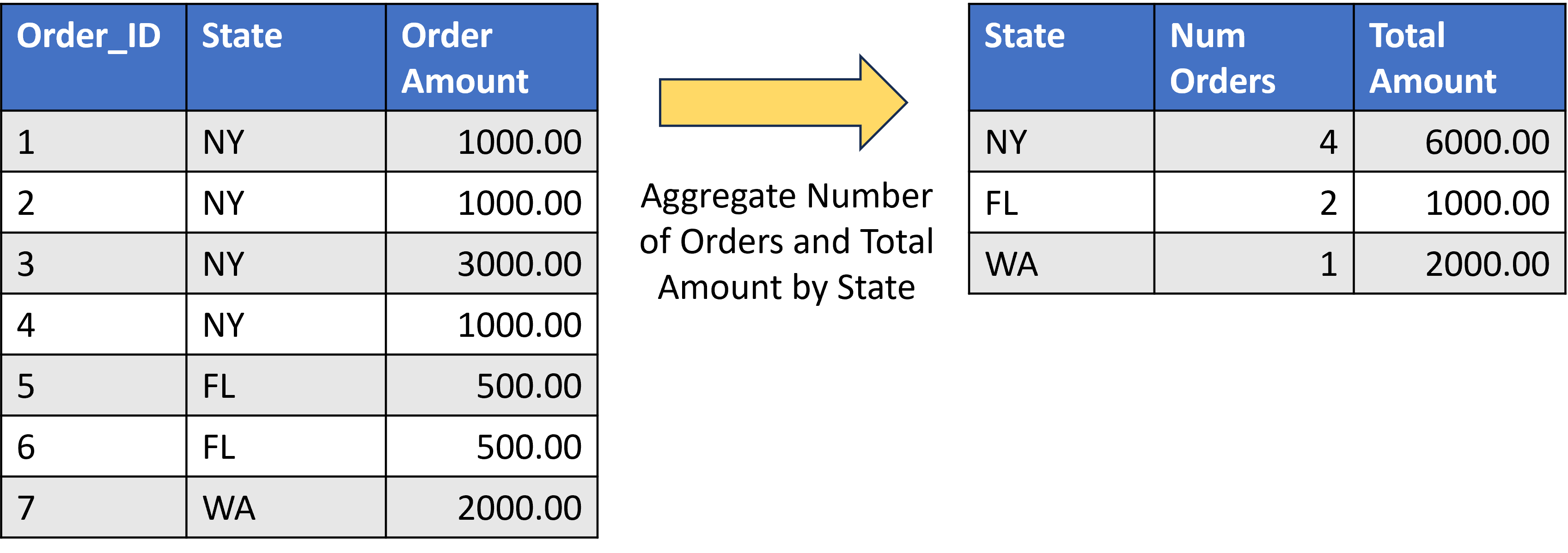


SELECT
    [State],
    COUNT([Order_ID]) AS [Num Orders],
    SUM([Order_Amount]) AS [Total Amount]
FROM
    [tbl_Orders]
GROUP BY
    [State];
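To try this query without a Fabric workspace, the sketch below runs it against an in-memory SQLite database from Python's standard library. SQLite stands in for the warehouse here and the table contents are invented. SQLite also accepts square-bracket identifiers, so the query text is unchanged apart from an added ORDER BY that makes the output order predictable.

```python
import sqlite3

# In-memory database with a hypothetical tbl_Orders table
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE [tbl_Orders] ([Order_ID] INTEGER, [State] TEXT, [Order_Amount] REAL)")
conn.executemany(
    "INSERT INTO [tbl_Orders] VALUES (?, ?, ?)",
    [(1, "CA", 100.0), (2, "NY", 50.0), (3, "CA", 25.0), (4, "TX", 10.0), (5, "NY", 5.0)],
)

# Same aggregation query; ORDER BY added only for a stable result order
query = """
SELECT
    [State],
    COUNT([Order_ID]) AS [Num Orders],
    SUM([Order_Amount]) AS [Total Amount]
FROM
    [tbl_Orders]
GROUP BY
    [State]
ORDER BY
    [State];
"""
rows = conn.execute(query).fetchall()
print(rows)  # [('CA', 2, 125.0), ('NY', 2, 55.0), ('TX', 1, 10.0)]
conn.close()
```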

Aggregating data with Spark

- Commonly used PySpark aggregate functions: sum(), count(), avg(), min() and max(), first() and last()

- Statistical functions: stddev(), variance()

- Used with groupBy() and agg()

df.groupBy(<unaggregated columns>).agg(<aggregate function>(<aggregated column>))


from pyspark.sql.functions import count, sum

# Per state: number of orders and total order amount (df is an existing orders DataFrame)
df.groupBy("state").agg(count("order_id"), sum("order_amount")).show()


- Aggregate functions must be imported from pyspark.sql.functions with an import statement at the top of your code.

#----- Import one or more functions:
from pyspark.sql.functions import sum, avg, count, min, max

#----- Import all SQL functions:
from pyspark.sql.functions import *

#----- Import all SQL functions under an alias:
import pyspark.sql.functions as F

# Call them with the prefix, e.g. F.sum(); unlike the two imports above,
# this does not shadow Python's built-in sum(), min() and max()

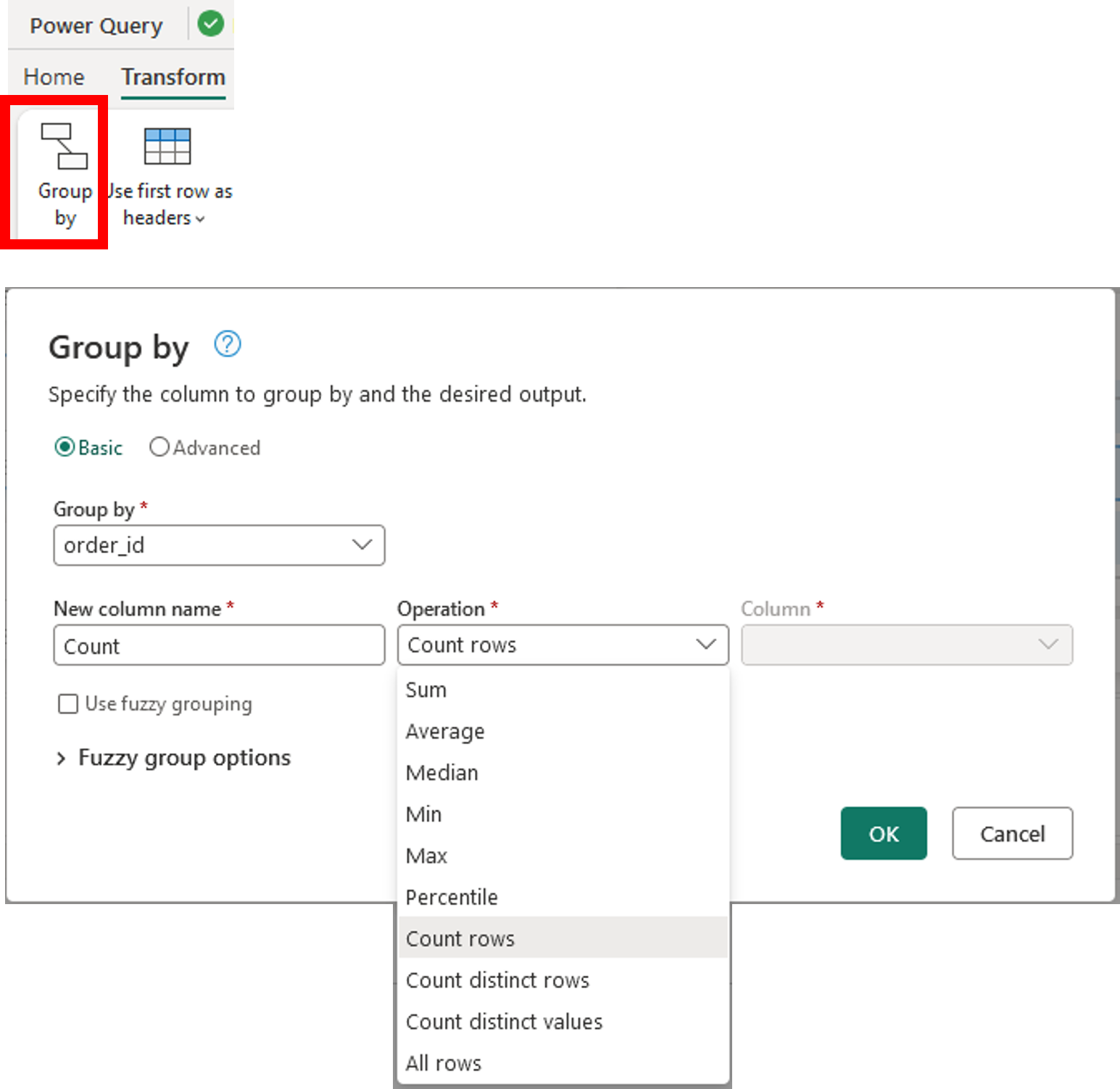

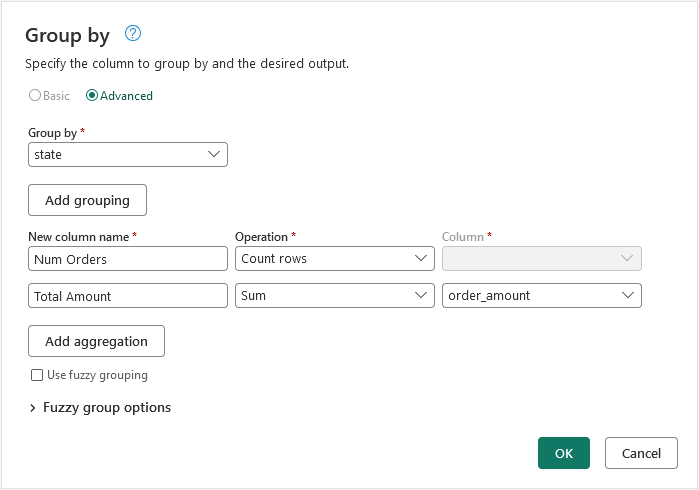

Aggregating data with Dataflows

- Group by transformation, with aggregations such as: Sum, Average, Median, Min, Max, Percentile, Count rows
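The Group by transformation can apply several aggregations to each group at once. Dataflows are built in Power Query, so the plain-Python sketch below is not Dataflows code. It only mimics the shape of the result on hypothetical rows. Percentile is left out because Power Query and Python's statistics module do not necessarily use the same interpolation method.

```python
from collections import defaultdict
from statistics import mean, median

# Hypothetical rows: (state, order_amount)
rows = [("CA", 100.0), ("NY", 50.0), ("CA", 25.0), ("CA", 40.0), ("NY", 5.0)]

# Group the amounts by state
groups = defaultdict(list)
for state, amount in rows:
    groups[state].append(amount)

# One output row per group, with several aggregations side by side
summary = {
    state: {
        "Sum": sum(a),
        "Average": mean(a),
        "Median": median(a),
        "Min": min(a),
        "Max": max(a),
        "Count rows": len(a),
    }
    for state, a in groups.items()
}
print(summary)
```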

Let's practice!
