Preparing for fine-tuning

Introduction to LLMs in Python

Jasmin Ludolf

Senior Data Science Content Developer, DataCamp

Pipelines and Auto classes

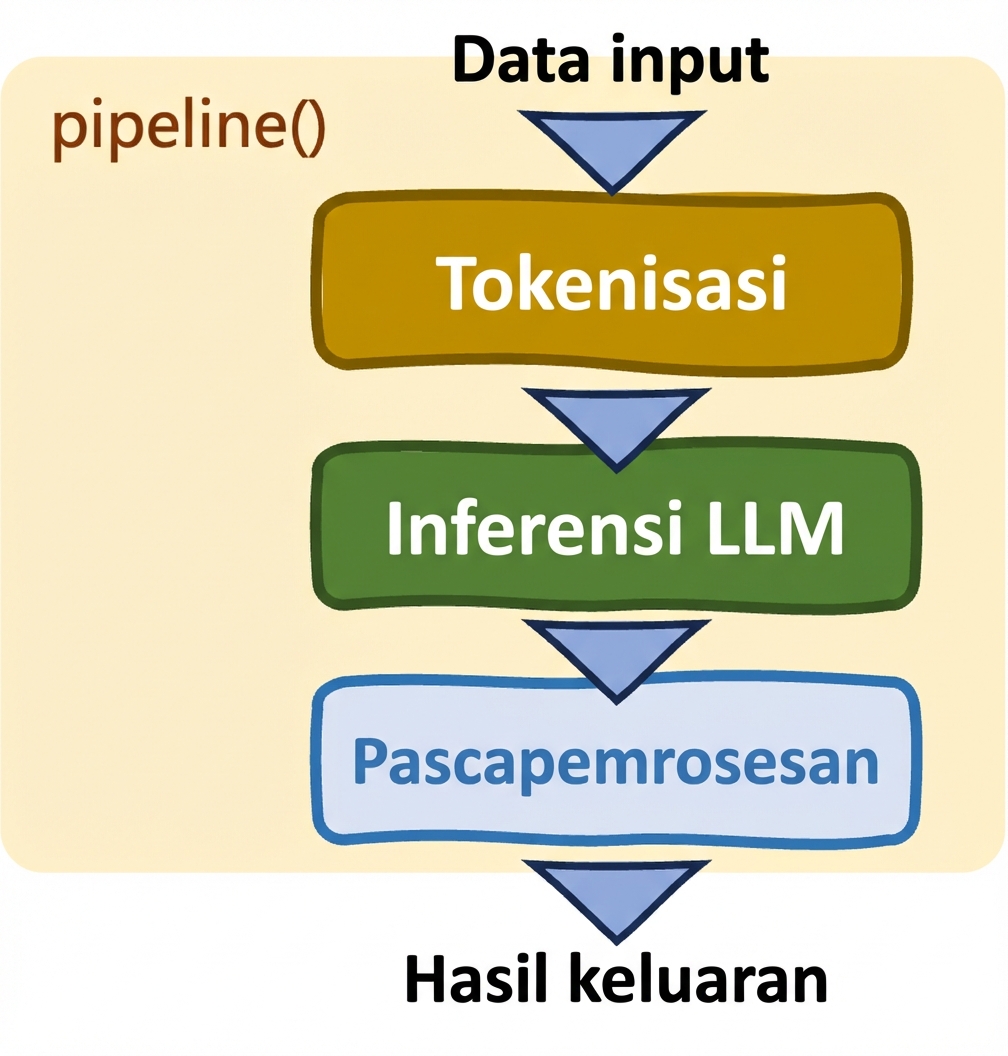

Pipelines: pipeline()

- Streamlines tasks

- Automatic model and tokenizer selection

- Limited control

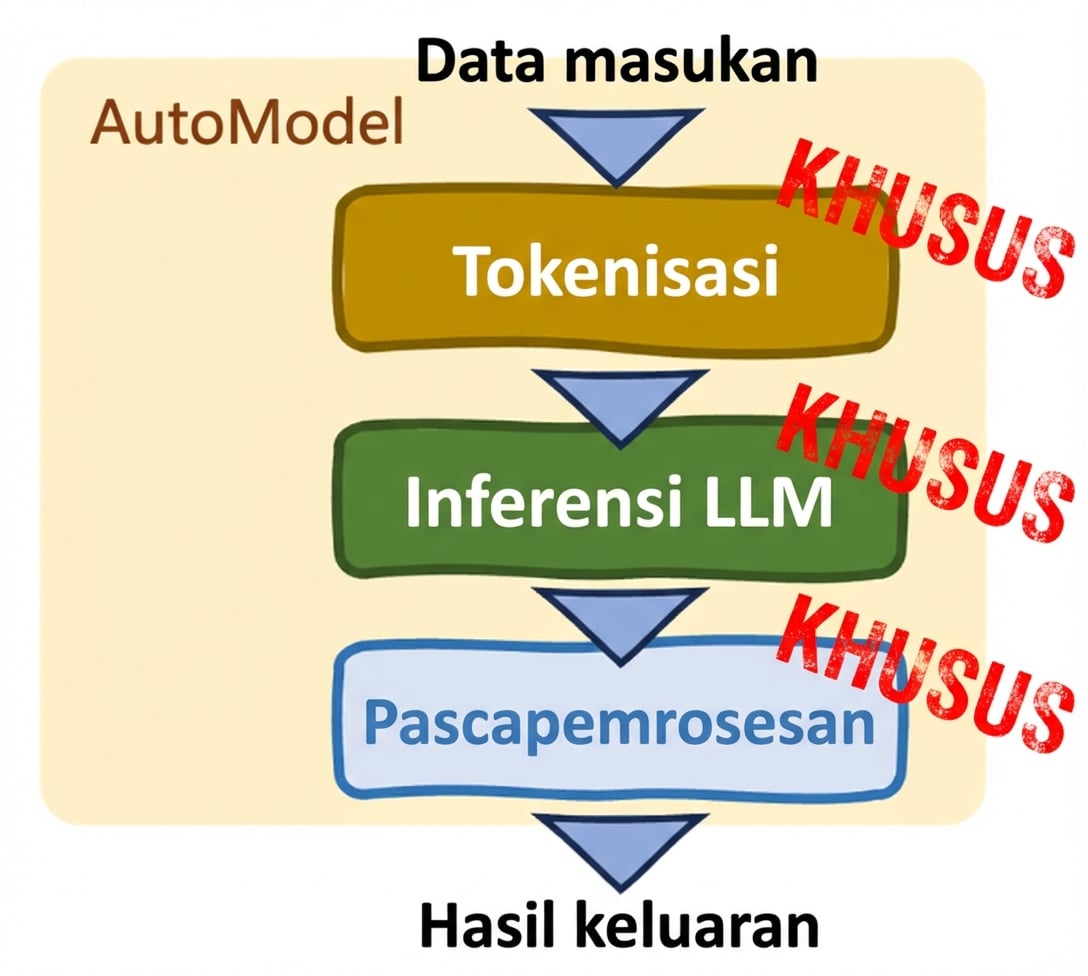

Auto classes (AutoModel class)

- Customization

- Manual adjustments

- Supports fine-tuning
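The trade-off between the two approaches can be sketched as follows. This is an illustrative sketch rather than code from the slides: the checkpoint name and both helper function names are assumptions chosen for the example, and calling either function requires `transformers` and `torch` and downloads the model from the Hugging Face Hub.

```python
# Checkpoint chosen for illustration only (an assumption, not from the slides).
CHECKPOINT = "distilbert-base-uncased-finetuned-sst-2-english"


def classify_with_pipeline(texts):
    """pipeline(): one call wires tokenizer, model and post-processing together."""
    from transformers import pipeline  # imported lazily; needs `transformers`

    classifier = pipeline("text-classification", model=CHECKPOINT)
    return [result["label"] for result in classifier(texts)]


def classify_with_auto_classes(texts):
    """Auto classes: each step is explicit, so each step can be customized."""
    import torch
    from transformers import AutoModelForSequenceClassification, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(CHECKPOINT)
    model = AutoModelForSequenceClassification.from_pretrained(CHECKPOINT)

    # Manual tokenization: padding and truncation are under our control.
    inputs = tokenizer(texts, return_tensors="pt", padding=True, truncation=True)

    # Manual forward pass and post-processing of the raw logits.
    with torch.no_grad():
        logits = model(**inputs).logits
    predicted_ids = logits.argmax(dim=-1).tolist()
    return [model.config.id2label[i] for i in predicted_ids]
```

Both helpers take a list of strings, for example `classify_with_pipeline(["A wonderful film."])`. The second version is longer, but every intermediate object (tokenizer, model, logits) is available for adjustment, which is what fine-tuning needs.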

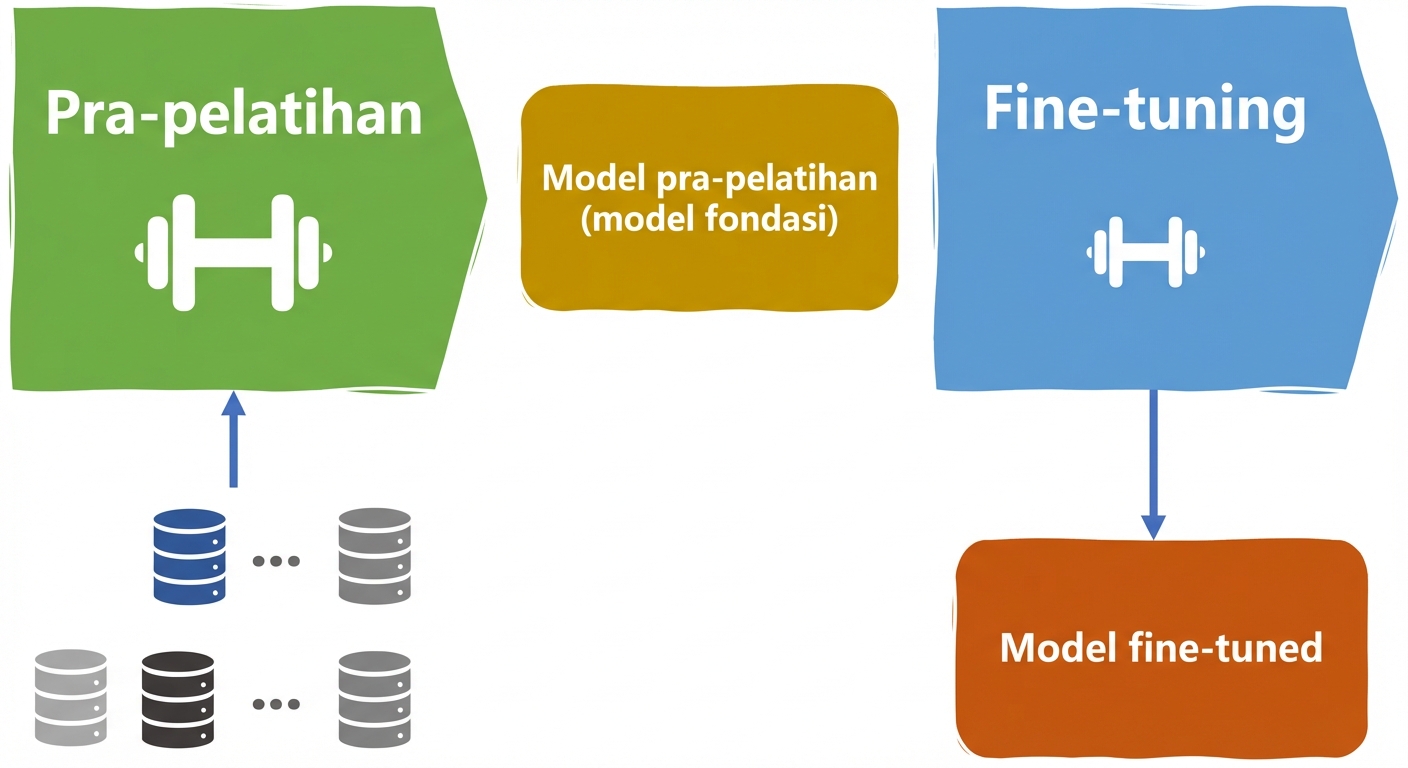

LLM lifecycle

Pre-training

- Broad data

- Learns general patterns

Fine-tuning

- Domain-specific data

- Specialized tasks

Loading a dataset for fine-tuning

    from datasets import load_dataset

    # Load the IMDb training split and keep the first of four shards
    train_data = load_dataset("imdb", split="train")
    train_data = train_data.shard(num_shards=4, index=0)

    # Do the same for the test split
    test_data = load_dataset("imdb", split="test")
    test_data = test_data.shard(num_shards=4, index=0)

- load_dataset(): loads a dataset from the Hugging Face Hub

- imdb: review classification

Auto classes

    from transformers import AutoModel, AutoTokenizer
    from transformers import AutoModelForSequenceClassification

    # Load a pre-trained model with a sequence classification head
    model = AutoModelForSequenceClassification.from_pretrained("bert-base-uncased")

    # Load the tokenizer that matches the model checkpoint
    tokenizer = AutoTokenizer.from_pretrained("bert-base-uncased")

Tokenization

    from transformers import AutoTokenizer, AutoModelForSequenceClassification
    from datasets import load_dataset

    train_data = load_dataset("imdb", split="train")
    train_data = train_data.shard(num_shards=4, index=0)
    test_data = load_dataset("imdb", split="test")
    test_data = test_data.shard(num_shards=4, index=0)

    model = AutoModelForSequenceClassification.from_pretrained("bert-base-uncased")
    tokenizer = AutoTokenizer.from_pretrained("bert-base-uncased")

    # Tokenize the data
    tokenized_training_data = tokenizer(train_data["text"], return_tensors="pt",
                                        padding=True, truncation=True, max_length=64)
    tokenized_test_data = tokenizer(test_data["text"], return_tensors="pt",
                                    padding=True, truncation=True, max_length=64)

Tokenization output

    print(tokenized_training_data)

    {'input_ids': tensor([[ 101, 1045, 12524, 1045, 2572, 8025, 1011, 3756,
             2013, 2026, 2678, 3573, 2138, 1997, 2035, 1996, 6704, 2008, 5129, 2009,
             2043, 2009, 2001, 2034, 2207, 1999, 3476, 1012, 1045, 2036, ...
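To make the effect of `padding=True`, `truncation=True` and `max_length` more concrete, here is a minimal pure-Python sketch of what these options do to lists of token IDs. It is a simplified illustration, not the tokenizer's actual implementation: `pad_and_truncate` is a hypothetical helper, the token IDs are toy values, and the sketch assumes each sequence ends in a `[SEP]` token that should survive truncation.

```python
def pad_and_truncate(batch_ids, max_length, pad_id=0):
    """Mimic padding=True, truncation=True, max_length=... on lists of token IDs."""

    def truncate(ids):
        # Keep the final token (assumed to be [SEP]) when cutting a long sequence.
        if len(ids) <= max_length:
            return ids
        return ids[:max_length - 1] + [ids[-1]]

    truncated = [truncate(ids) for ids in batch_ids]
    # padding=True pads every sequence to the longest one in the batch.
    target = max(len(ids) for ids in truncated)
    input_ids = [ids + [pad_id] * (target - len(ids)) for ids in truncated]
    # The attention mask is 1 for real tokens and 0 for padding.
    attention_mask = [[1] * len(ids) + [0] * (target - len(ids)) for ids in truncated]
    return {"input_ids": input_ids, "attention_mask": attention_mask}


# Toy token IDs (101 = [CLS], 102 = [SEP], 0 = [PAD] in bert-base-uncased).
batch = [[101, 1045, 2293, 2009, 102], [101, 2307, 102]]
encoded = pad_and_truncate(batch, max_length=4)
print(encoded["input_ids"])       # [[101, 1045, 2293, 102], [101, 2307, 102, 0]]
print(encoded["attention_mask"])  # [[1, 1, 1, 1], [1, 1, 1, 0]]
```

Because every row ends up with the same length, the result can be stacked into a rectangular tensor, which is why `return_tensors="pt"` is used together with padding and truncation.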

Tokenizing row by row

    def tokenize_function(text_data):
        # Tokenize the "text" column of a single row or of a batch of rows
        return tokenizer(text_data["text"], return_tensors="pt",
                         padding=True, truncation=True, max_length=64)

    # Tokenize in batches
    tokenized_in_batches = train_data.map(tokenize_function, batched=True)

    # Tokenize row by row
    tokenized_by_row = train_data.map(tokenize_function, batched=False)

    Dataset({
        features: ['text', 'label', 'input_ids', 'token_type_ids', 'attention_mask'],
        num_rows: 1563
    })
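The difference between `batched=True` and `batched=False` lies in what the mapped function receives: a dictionary of column lists in the first case, a single row dictionary in the second. The sketch below mimics this in plain Python. `toy_map` and `count_words` are hypothetical stand-ins written for this illustration, not part of the `datasets` library, and the batch size of 2 is chosen only to keep the example small (the real `Dataset.map` defaults to batches of 1000).

```python
rows = [{"text": "great film"}, {"text": "dull"}, {"text": "a true classic"}]


def count_words(example_or_batch):
    """Works on one row (str) or on a batch (list of str), like a tokenizer call."""
    text = example_or_batch["text"]
    if isinstance(text, list):
        return {"n_words": [len(t.split()) for t in text]}
    return {"n_words": len(text.split())}


def toy_map(rows, function, batched=False, batch_size=2):
    """Hypothetical stand-in for Dataset.map; returns new rows and the call count."""
    calls = 0
    out = []
    if batched:
        for start in range(0, len(rows), batch_size):
            chunk = rows[start:start + batch_size]
            # Batched mode: the function receives a dict of column -> list of values.
            batch = {key: [row[key] for row in chunk] for key in chunk[0]}
            result = function(batch)
            calls += 1
            for i, row in enumerate(chunk):
                out.append({**row, **{k: v[i] for k, v in result.items()}})
    else:
        for row in rows:
            # Row mode: the function receives a single row as a dict.
            result = function(row)
            calls += 1
            out.append({**row, **result})
    return out, calls


by_batch, batch_calls = toy_map(rows, count_words, batched=True)
by_row, row_calls = toy_map(rows, count_words, batched=False)
print(batch_calls, row_calls)  # 2 3
print(by_batch == by_row)      # True
```

In this toy the two modes add the same column, but batched mode calls the function fewer times, which is the main reason it tends to be faster for tokenization.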

Subword tokenization

- Common in modern tokenizers

- Words are split into meaningful sub-parts
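As a rough illustration of how a word can be split into sub-parts, the sketch below applies a greedy longest-match-first strategy in the style of WordPiece, the scheme used by BERT tokenizers, where `##` marks a piece that continues a word. The vocabulary is a toy one invented for this example, and `wordpiece_tokenize` is a hypothetical helper, not the `transformers` implementation.

```python
def wordpiece_tokenize(word, vocab, unk_token="[UNK]"):
    """Greedy longest-match-first split of one word into subword pieces."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        match = None
        # Try the longest remaining substring first, then shrink it.
        while end > start:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # marks a word-internal piece
            if candidate in vocab:
                match = candidate
                break
            end -= 1
        if match is None:
            return [unk_token]  # no piece fits: the whole word is unknown
        pieces.append(match)
        start = end
    return pieces


# Toy vocabulary, invented for this example (not the real BERT vocabulary).
toy_vocab = {"un", "token", "##believ", "##able", "##ization", "##s"}

print(wordpiece_tokenize("tokenization", toy_vocab))  # ['token', '##ization']
print(wordpiece_tokenize("unbelievable", toy_vocab))  # ['un', '##believ', '##able']
print(wordpiece_tokenize("xyz", toy_vocab))           # ['[UNK]']
```

Splitting this way lets a fixed vocabulary cover rare or unseen words by reusing pieces it already knows.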

Let's practice!
