Transformer encoder

Transformer Models with PyTorch

James Chapman

Curriculum Manager, DataCamp

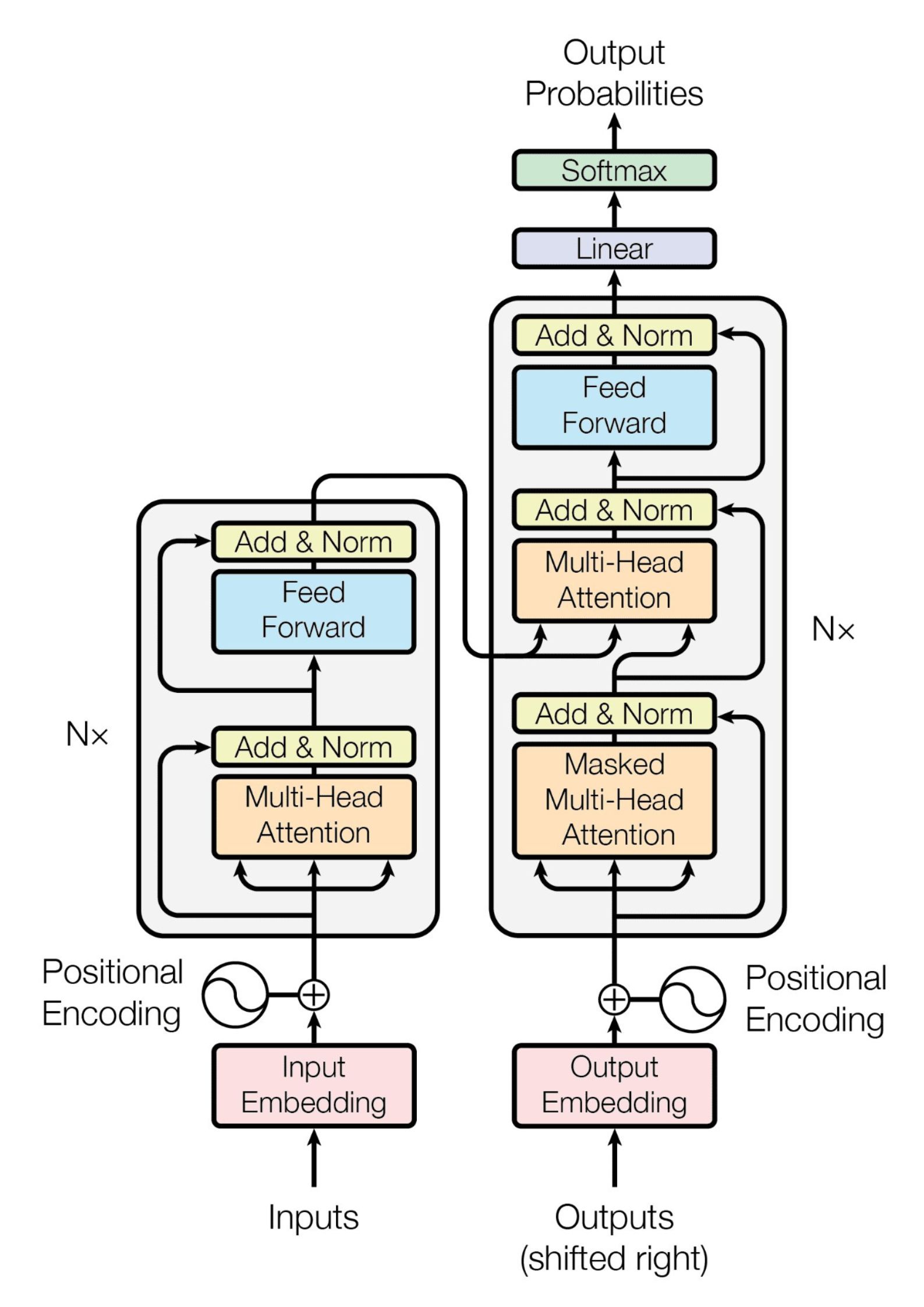

The original transformer

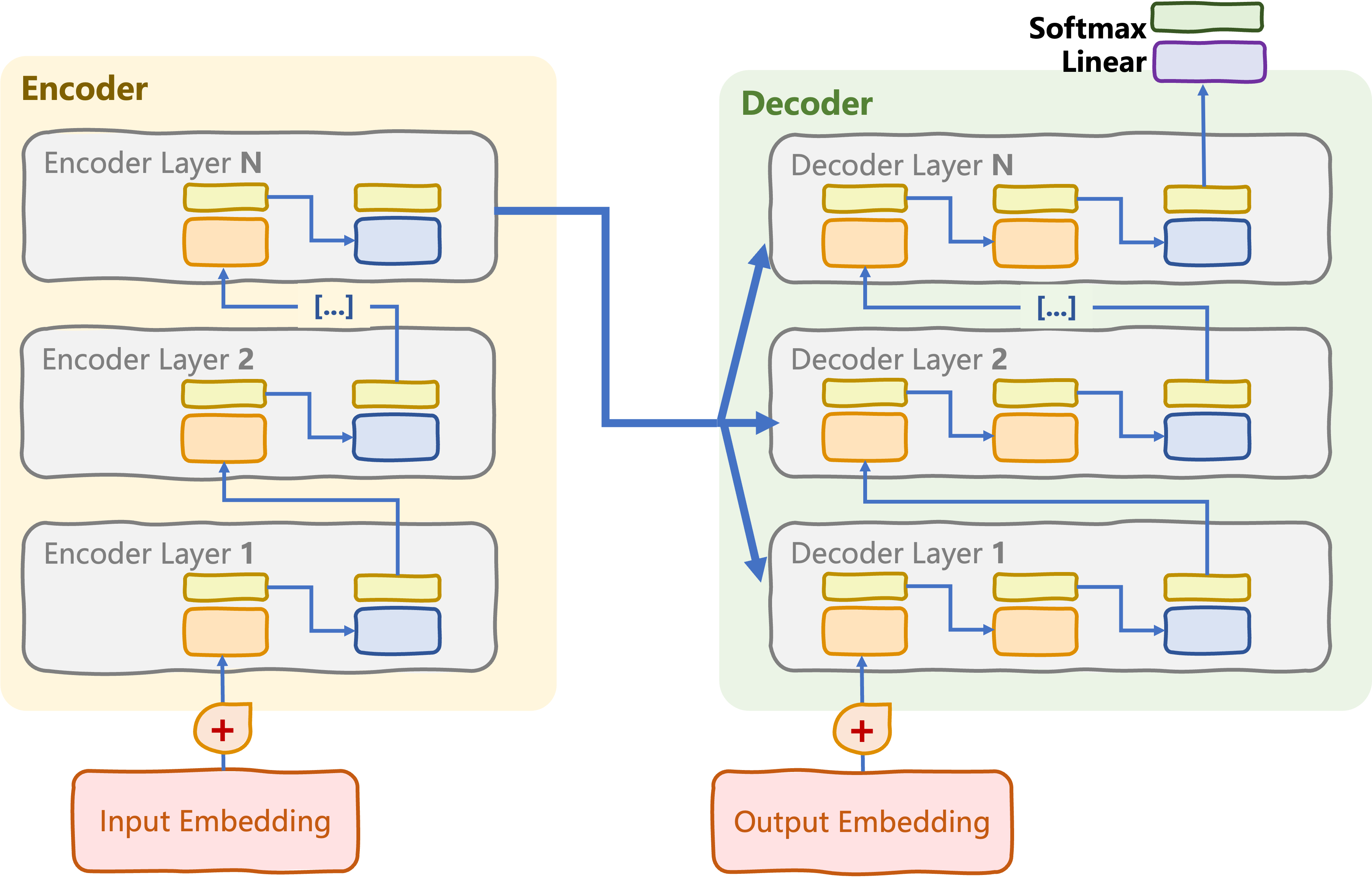

Encoder-only transformers

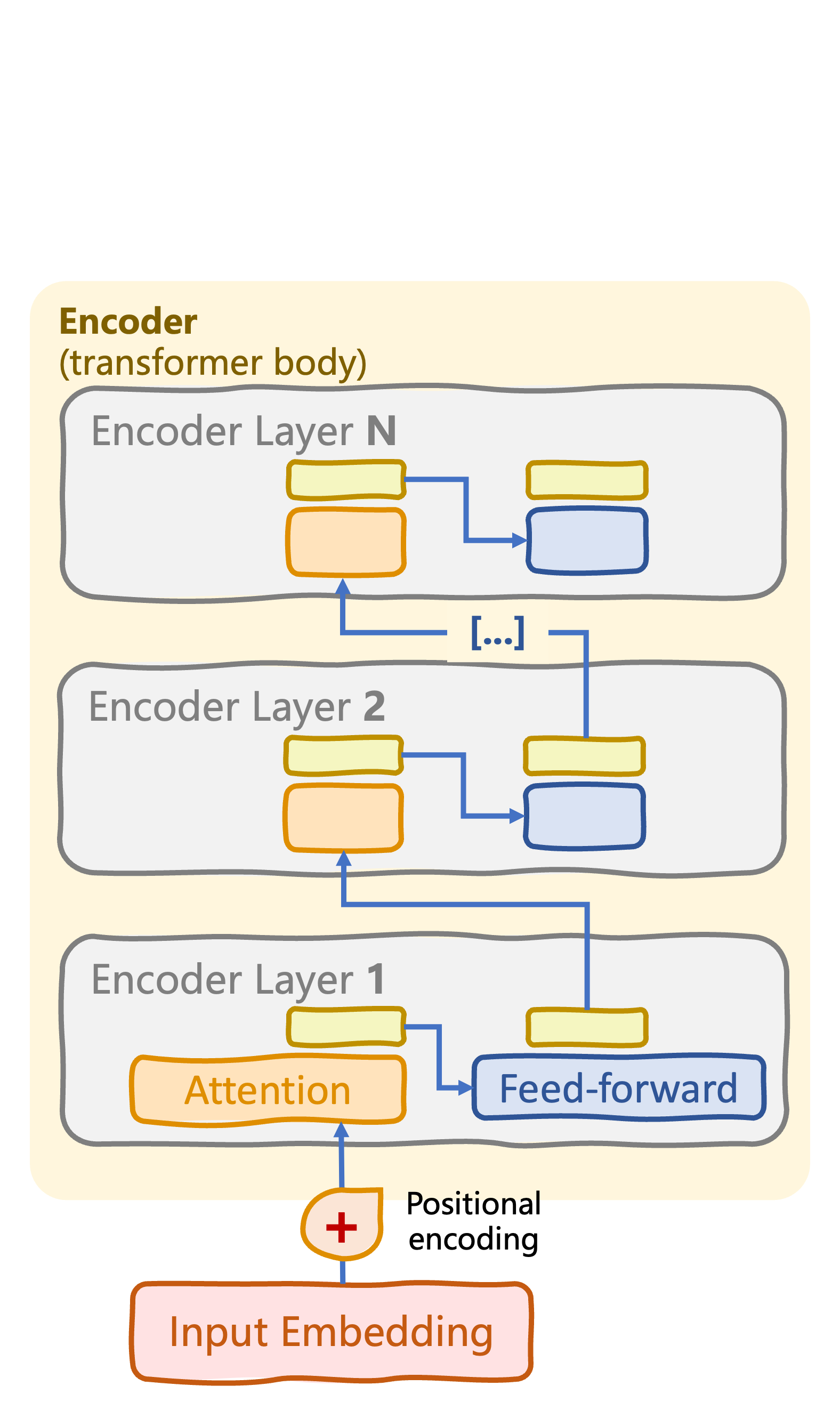

Transformer body: encoder stack with N encoder layers

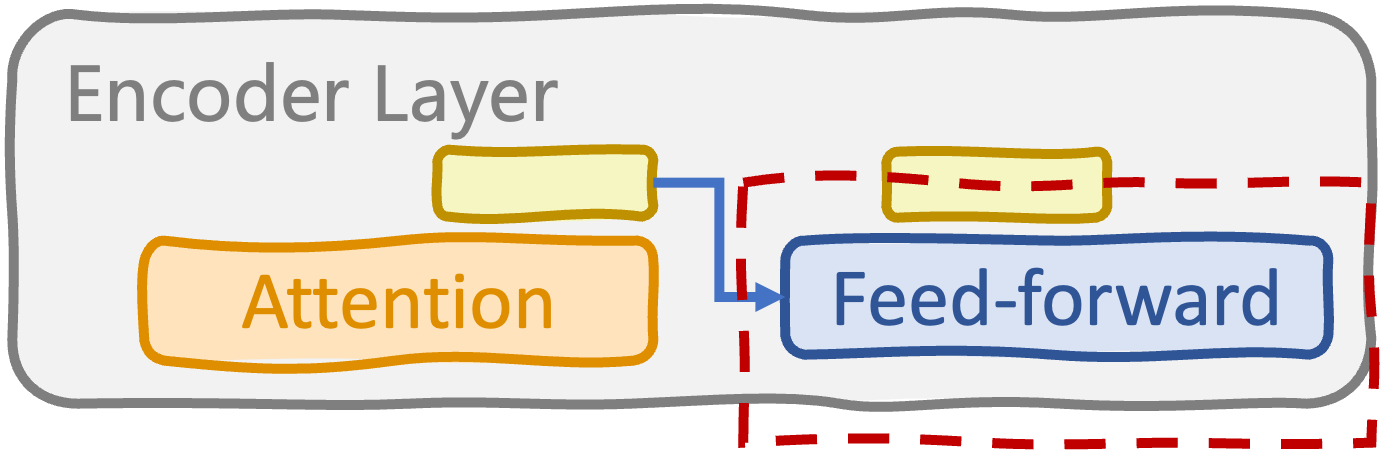

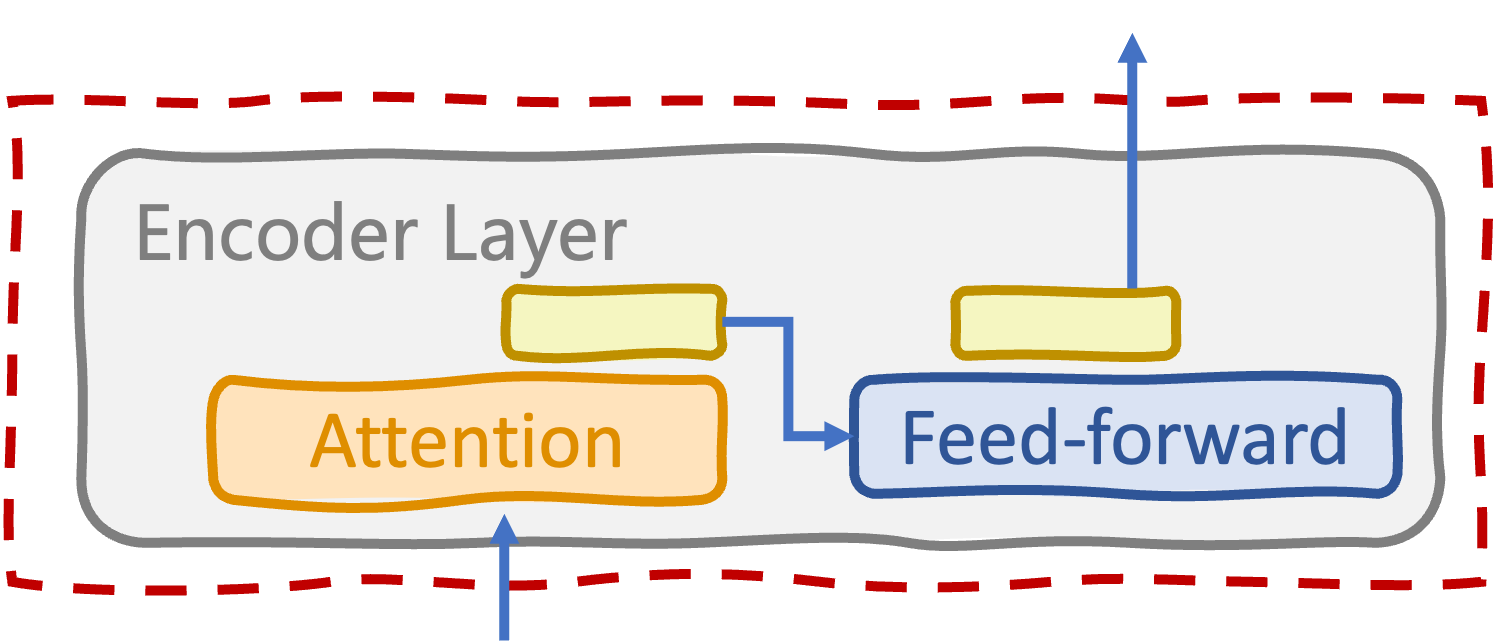

Encoder layer

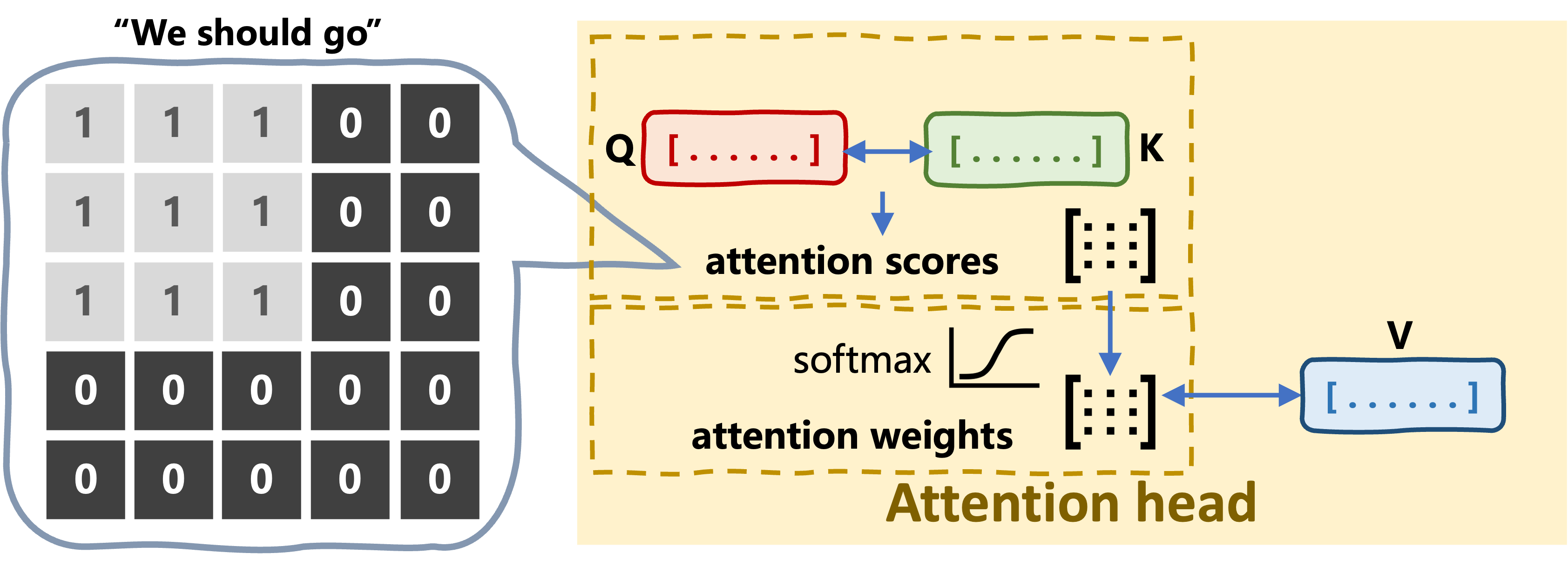

- Multi-head self-attention

- Feed-forward (sub)layers

- Layer normalization, dropout

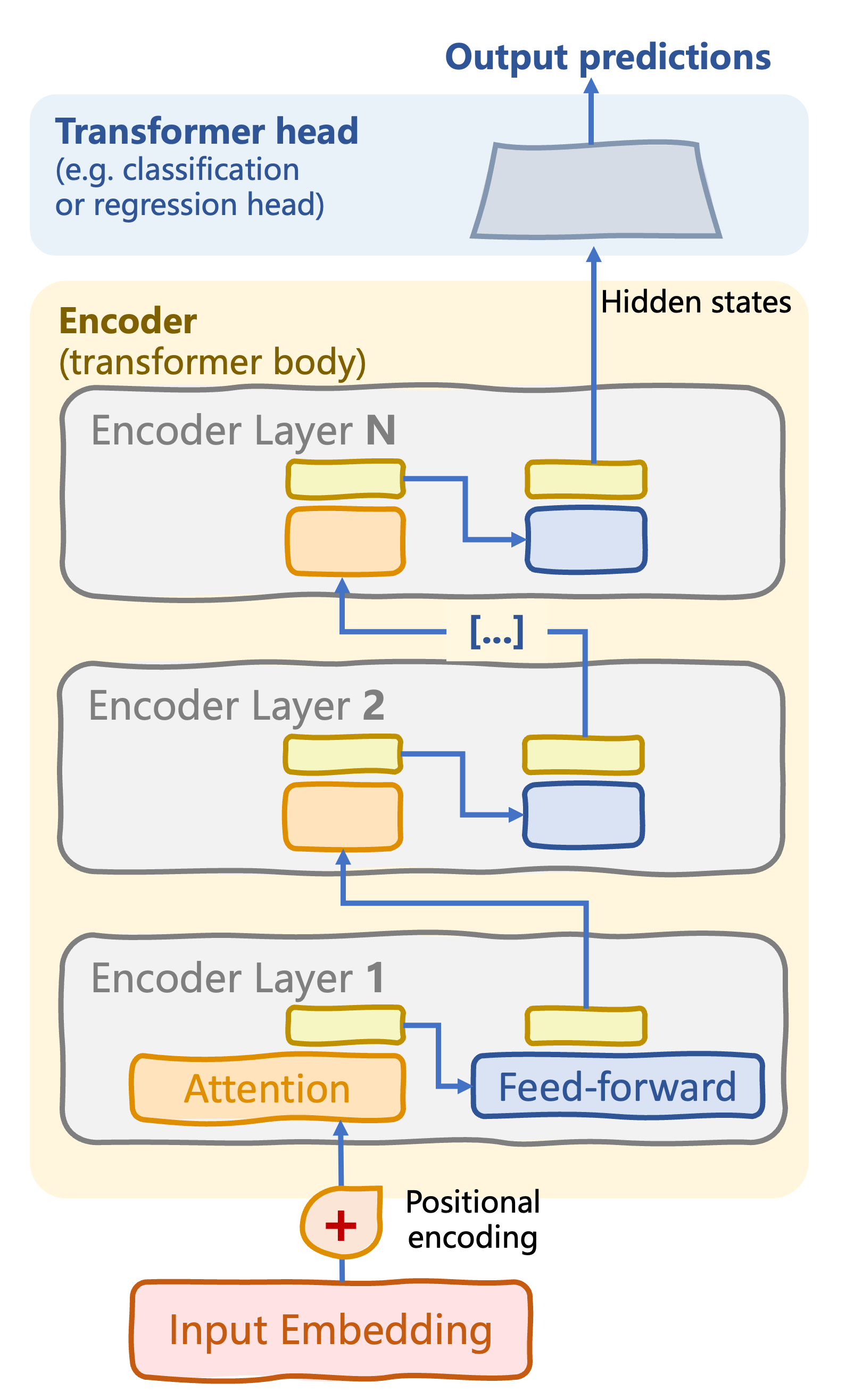

Transformer head: processes the encoded input to produce an output prediction

Supervised tasks: classification, regression

Feed-forward sublayer in the encoder layer

```python
import torch.nn as nn

class FeedForwardSubLayer(nn.Module):
    def __init__(self, d_model, d_ff):
        super().__init__()
        self.fc1 = nn.Linear(d_model, d_ff)
        self.fc2 = nn.Linear(d_ff, d_model)
        self.relu = nn.ReLU()

    def forward(self, x):
        # Expand to d_ff, apply ReLU, then project back to d_model
        return self.fc2(self.relu(self.fc1(x)))
```

- 2 x fully connected layers + ReLU activation

- d_ff: dimension between the linear layers

- forward(): processes the attention outputs to capture complex, non-linear patterns
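To make the role of d_ff concrete, here is a minimal sketch that runs the sublayer on a random tensor. The sizes (d_model = 8, d_ff = 32, a batch of 2 sequences of 5 tokens) are hypothetical values chosen only for illustration.

```python
import torch
import torch.nn as nn

class FeedForwardSubLayer(nn.Module):
    def __init__(self, d_model, d_ff):
        super().__init__()
        self.fc1 = nn.Linear(d_model, d_ff)
        self.fc2 = nn.Linear(d_ff, d_model)
        self.relu = nn.ReLU()

    def forward(self, x):
        return self.fc2(self.relu(self.fc1(x)))

torch.manual_seed(0)
d_model, d_ff = 8, 32            # hypothetical sizes for illustration
ff = FeedForwardSubLayer(d_model, d_ff)

x = torch.randn(2, 5, d_model)   # (batch, seq_len, d_model)
out = ff(x)

# The sublayer widens to d_ff internally but returns d_model features,
# so the output has the same shape as the input.
print(out.shape)
```

Because nn.Linear acts on the last dimension only, each token is transformed independently of the others; mixing information across tokens is left to the attention mechanism.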

Encoder layer

```python
class EncoderLayer(nn.Module):
    def __init__(self, d_model, num_heads, d_ff, dropout):
        super().__init__()
        # MultiHeadAttention is assumed to be defined elsewhere (not shown in this section)
        self.self_attn = MultiHeadAttention(d_model, num_heads)
        self.ff_sublayer = FeedForwardSubLayer(d_model, d_ff)
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)
        self.dropout = nn.Dropout(dropout)

    def forward(self, x, src_mask):
        # Self-attention, then residual connection + layer normalization
        attn_output = self.self_attn(x, x, x, src_mask)
        x = self.norm1(x + self.dropout(attn_output))
        # Feed-forward, then residual connection + layer normalization
        ff_output = self.ff_sublayer(x)
        x = self.norm2(x + self.dropout(ff_output))
        return x
```

- Multi-head self-attention

- Feed-forward sublayer

- Layer normalization and dropout

- forward(): the mask prevents padding tokens from being processed
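The layer above relies on a MultiHeadAttention class that is not shown in this section. As a runnable sketch of the same attention, add-and-norm, feed-forward, add-and-norm pattern, the version below swaps in PyTorch's built-in nn.MultiheadAttention as a stand-in. The class name EncoderLayerSketch, the sizes, and the mask convention (True marks padding, as key_padding_mask expects) are assumptions for illustration, not the course's implementation.

```python
import torch
import torch.nn as nn

class EncoderLayerSketch(nn.Module):
    """Hypothetical stand-in: same structure, built-in attention."""
    def __init__(self, d_model, num_heads, d_ff, dropout):
        super().__init__()
        self.self_attn = nn.MultiheadAttention(d_model, num_heads, batch_first=True)
        self.ff_sublayer = nn.Sequential(
            nn.Linear(d_model, d_ff), nn.ReLU(), nn.Linear(d_ff, d_model)
        )
        self.norm1 = nn.LayerNorm(d_model)
        self.norm2 = nn.LayerNorm(d_model)
        self.dropout = nn.Dropout(dropout)

    def forward(self, x, pad_mask):
        # pad_mask: (batch, seq_len), True where the token is padding
        attn_output, _ = self.self_attn(
            x, x, x, key_padding_mask=pad_mask, need_weights=False
        )
        x = self.norm1(x + self.dropout(attn_output))
        ff_output = self.ff_sublayer(x)
        return self.norm2(x + self.dropout(ff_output))

torch.manual_seed(0)
layer = EncoderLayerSketch(d_model=16, num_heads=4, d_ff=64, dropout=0.1).eval()

x = torch.randn(2, 5, 16)  # (batch, seq_len, d_model)
pad_mask = torch.tensor([[False, False, False, True, True],
                         [False, False, False, False, False]])
out = layer(x, pad_mask)
print(out.shape)  # same shape as x
```

Since the input and output shapes match, layers of this kind can be stacked N times to form the transformer body.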

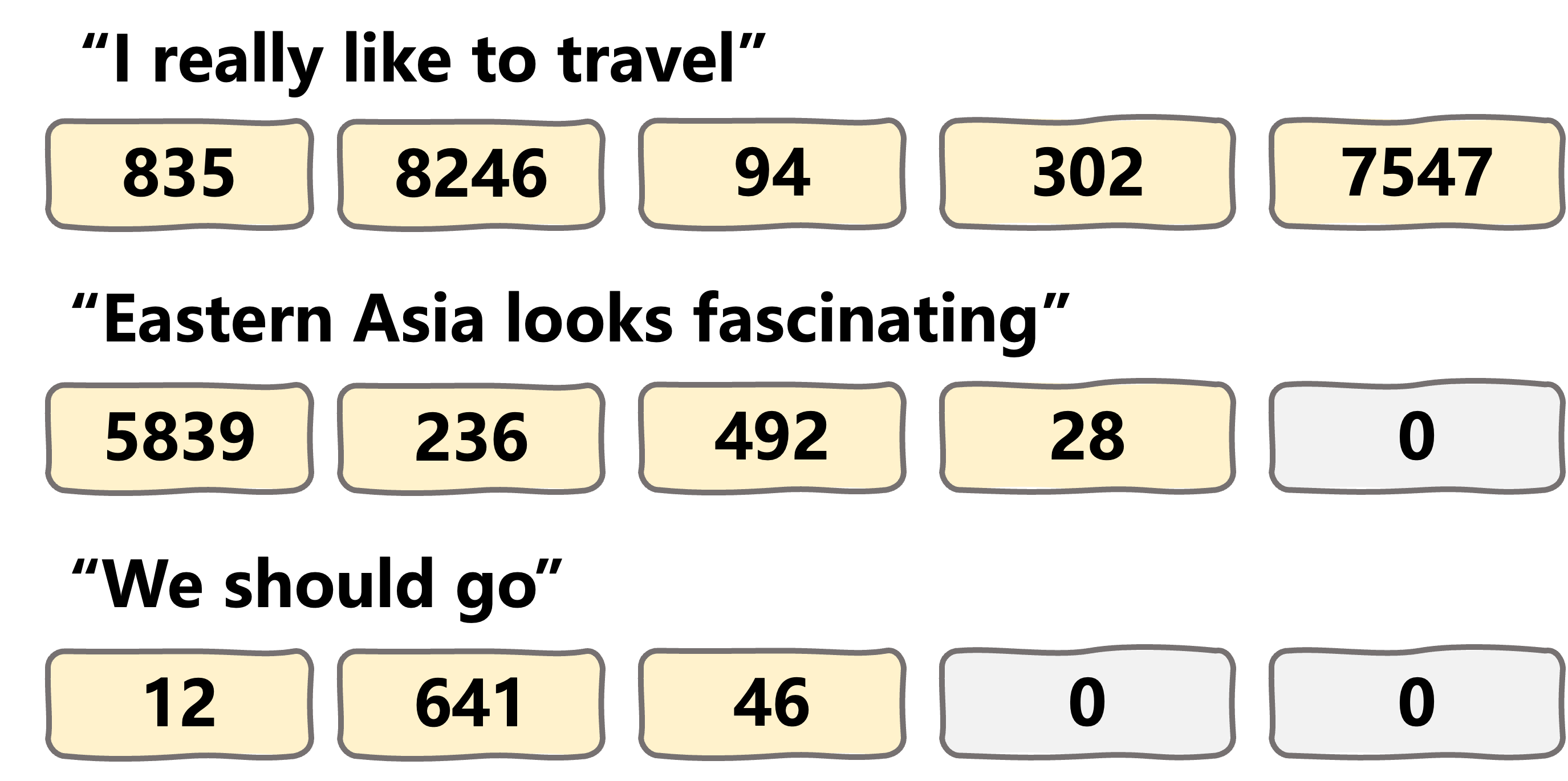

Masking in the attention process
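One common way to apply a padding mask is sketched below, under stated assumptions: the padding token ID is 0, the mask uses True for real tokens, and the attention scores are random stand-ins rather than real query-key products. How the course's MultiHeadAttention consumes src_mask is not shown in this section, so treat this as an illustration of the idea only.

```python
import torch
import torch.nn.functional as F

PAD_ID = 0  # assumption: padding tokens are encoded as 0

token_ids = torch.tensor([[5, 7, 2, 0, 0],    # last two positions are padding
                          [3, 9, 4, 6, 1]])   # no padding

# True for real tokens, False for padding.
# Shape (batch, 1, 1, seq_len) broadcasts over heads and query positions.
src_mask = (token_ids != PAD_ID).unsqueeze(1).unsqueeze(2)

torch.manual_seed(0)
scores = torch.randn(2, 1, 5, 5)  # stand-in scores: (batch, heads, query, key)

# Padded key positions get -inf, so softmax assigns them zero weight.
masked_scores = scores.masked_fill(~src_mask, float("-inf"))
weights = F.softmax(masked_scores, dim=-1)

print(weights[0, 0])  # columns 3 and 4 are all zeros for the padded sequence
```

Each row of weights still sums to 1, so attention is redistributed over the real tokens only.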

Encoder transformer body

```python
class TransformerEncoder(nn.Module):
    def __init__(self, vocab_size, d_model, num_layers, num_heads, d_ff,
                 dropout, max_seq_length):
        super().__init__()
        self.embedding = InputEmbeddings(vocab_size, d_model)
        self.positional_encoding = PositionalEncoding(d_model, max_seq_length)
        self.layers = nn.ModuleList(
            [EncoderLayer(d_model, num_heads, d_ff, dropout)
             for _ in range(num_layers)]
        )

    def forward(self, x, src_mask):
        x = self.embedding(x)
        x = self.positional_encoding(x)
        for layer in self.layers:
            x = layer(x, src_mask)
        return x
```

Encoder transformer head

```python
import torch.nn as nn
import torch.nn.functional as F

class ClassifierHead(nn.Module):
    def __init__(self, d_model, num_classes):
        super().__init__()
        self.fc = nn.Linear(d_model, num_classes)

    def forward(self, x):
        logits = self.fc(x)
        # Log-probabilities over the classes
        return F.log_softmax(logits, dim=-1)

class RegressionHead(nn.Module):
    def __init__(self, d_model, output_dim):
        super().__init__()
        self.fc = nn.Linear(d_model, output_dim)

    def forward(self, x):
        return self.fc(x)
```

Classification head

- Tasks: text classification, sentiment analysis, NER, extractive QA, etc.

- fc: fully connected linear layer

- Transforms encoder hidden states into probabilities over num_classes classes (the code returns them as log-probabilities via log_softmax)
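The sketch below shows one way the classification head might be used for sequence-level classification. The random tensor stands in for the encoder output, and mean pooling over tokens is an assumption made here for illustration; the slides do not say how token states are pooled. Sizes and labels are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class ClassifierHead(nn.Module):
    def __init__(self, d_model, num_classes):
        super().__init__()
        self.fc = nn.Linear(d_model, num_classes)

    def forward(self, x):
        logits = self.fc(x)
        return F.log_softmax(logits, dim=-1)

torch.manual_seed(0)
d_model, num_classes = 16, 3                 # hypothetical sizes

encoder_output = torch.randn(2, 5, d_model)  # stand-in for the encoder output
pooled = encoder_output.mean(dim=1)          # assumption: mean pooling over tokens

head = ClassifierHead(d_model, num_classes)
log_probs = head(pooled)                     # (batch, num_classes)
probs = log_probs.exp()                      # actual probabilities, rows sum to 1

labels = torch.tensor([0, 2])                # hypothetical class labels
loss = nn.NLLLoss()(log_probs, labels)       # NLLLoss expects log-probabilities
print(probs.sum(dim=-1), loss.item())
```

For token-level tasks such as NER, the head can be applied to encoder_output directly, which yields one distribution per token.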

Regression head

- Tasks: estimating text readability, language complexity, etc.

- output_dim is 1 when predicting a single numerical value
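A minimal sketch of the regression head with output_dim = 1 follows. The random tensor stands in for the encoder output, mean pooling is an assumption for illustration, and the target scores are made-up values, not real data.

```python
import torch
import torch.nn as nn

class RegressionHead(nn.Module):
    def __init__(self, d_model, output_dim):
        super().__init__()
        self.fc = nn.Linear(d_model, output_dim)

    def forward(self, x):
        return self.fc(x)

torch.manual_seed(0)
d_model = 16                                 # hypothetical size

encoder_output = torch.randn(2, 5, d_model)  # stand-in for the encoder output
pooled = encoder_output.mean(dim=1)          # assumption: mean pooling over tokens

head = RegressionHead(d_model, output_dim=1)
preds = head(pooled).squeeze(-1)             # (batch,), one number per sequence

targets = torch.tensor([3.5, 7.0])           # made-up scores for illustration
loss = nn.MSELoss()(preds, targets)
print(preds.shape, loss.item())
```

Unlike the classification head, no softmax is applied, so the outputs are unbounded real values.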

Let's practice!
