Hyperparameter optimization with Optuna

Deep Reinforcement Learning in Python

Timothée Carayol

Principal Machine Learning Engineer, Komment

What are hyperparameters

- DRL algorithms have many hyperparameters
- They can strongly affect performance
- Search complexity grows with the number of hyperparameters

| Examples |
|---|
| Discount rate |
| PPO: clipping epsilon, entropy bonus |
| Experience replay: buffer size, batch size |
| Decayed epsilon-greedy schedule |
| Fixed Q-targets: $\tau$ |
| Learning rate |
| Number of layers, nodes per layer... |
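To make the point about search complexity concrete, here is a minimal sketch that counts the configurations in an exhaustive grid. The candidate values below are hypothetical and chosen only for illustration; they are not recommendations from the course.

```python
from itertools import product

# Hypothetical candidate values for four hyperparameters (illustration only)
grid = {
    "discount_rate": [0.9, 0.95, 0.99],
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "batch_size": [8, 32, 128],
    "buffer_size": [10_000, 100_000],
}


def grid_size(search_space):
    """Number of configurations in a full grid: the product of the list lengths."""
    size = 1
    for values in search_space.values():
        size *= len(values)
    return size


# Enumerate every configuration explicitly to confirm the count
combinations = list(product(*grid.values()))
print(grid_size(grid), len(combinations))

# Adding one more hyperparameter with three values triples the grid
bigger_grid = dict(grid, clip_epsilon=[0.1, 0.2, 0.3])
print(grid_size(bigger_grid))
```

Each extra hyperparameter multiplies the number of configurations, and in DRL every configuration means at least one full training run.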

How to choose hyperparameter values

Objective: average cumulative reward

Hyperparameter search techniques:

- Manual trial and error
- Grid search
- Random search
- Dedicated algorithms
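As a rough illustration of the difference between grid search and random search, the sketch below runs both on a toy quadratic that stands in for a real training run. The function names, grid values, and seed are assumptions made for this example; in practice the score would come from training and evaluating an agent.

```python
import random


def toy_objective(x, y):
    """Stand-in score to minimize; a real objective would train an agent."""
    return (x - 2) ** 2 + 1.2 * (y + 3) ** 2


def grid_search(values):
    """Evaluate every (x, y) pair on a fixed grid and keep the best."""
    best_score, best_params = None, None
    for x in values:
        for y in values:
            score = toy_objective(x, y)
            if best_score is None or score < best_score:
                best_score, best_params = score, {"x": x, "y": y}
    return best_score, best_params


def random_search(n_trials, seed=0):
    """Sample (x, y) uniformly in [-10, 10] and keep the best."""
    rng = random.Random(seed)
    best_score, best_params = None, None
    for _ in range(n_trials):
        x, y = rng.uniform(-10, 10), rng.uniform(-10, 10)
        score = toy_objective(x, y)
        if best_score is None or score < best_score:
            best_score, best_params = score, {"x": x, "y": y}
    return best_score, best_params


grid_score, grid_params = grid_search([-10, -5, 0, 5, 10])  # 25 evaluations
rand_score, rand_params = random_search(n_trials=25)        # same budget
print("grid  :", grid_score, grid_params)
print("random:", rand_score, rand_params)
```

Both methods choose points without looking at earlier results. Dedicated algorithms such as the ones in Optuna use the history of past trials to decide where to sample next.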

Optuna workflow:

- Define an objective function
- Create an Optuna `study`
- Let Optuna run the trials

    import optuna

    def objective(trial):
        ...

    study = optuna.create_study()
    study.optimize(objective, n_trials=100)

    study.best_params

    {'learning_rate': 0.001292481, 'batch_size': 8}

Specifying the objective function

In the objective function:

- Declare the hyperparameters to search over
- Define the metric to optimize

Full flexibility in specifying hyperparameters:

- float
- integer
- categorical

    def objective(trial: optuna.trial.Trial):
        # Hyperparameters x and y between -10 and 10
        x = trial.suggest_float('x', -10, 10)
        y = trial.suggest_float('y', -10, 10)
        # Return the metric to be minimized
        return (x - 2) ** 2 + 1.2 * (y + 3) ** 2

The Optuna study

- Use sqlite to persist the study
- Samples `n_trials` trials with the default sampler (TPE)
  - Random at first
  - Then focuses on promising regions
- If `n_trials` is omitted: runs until interrupted
- The study can be loaded back from the database

    import optuna

    # Persist the study in a local SQLite database file
    study = optuna.create_study(
        storage="sqlite:///DRL.db",
        study_name="my_study",
    )
    study.optimize(objective, n_trials=100)

    # Later: reload the same study from the database
    loaded_study = optuna.load_study(
        study_name="my_study",
        storage="sqlite:///DRL.db",
    )

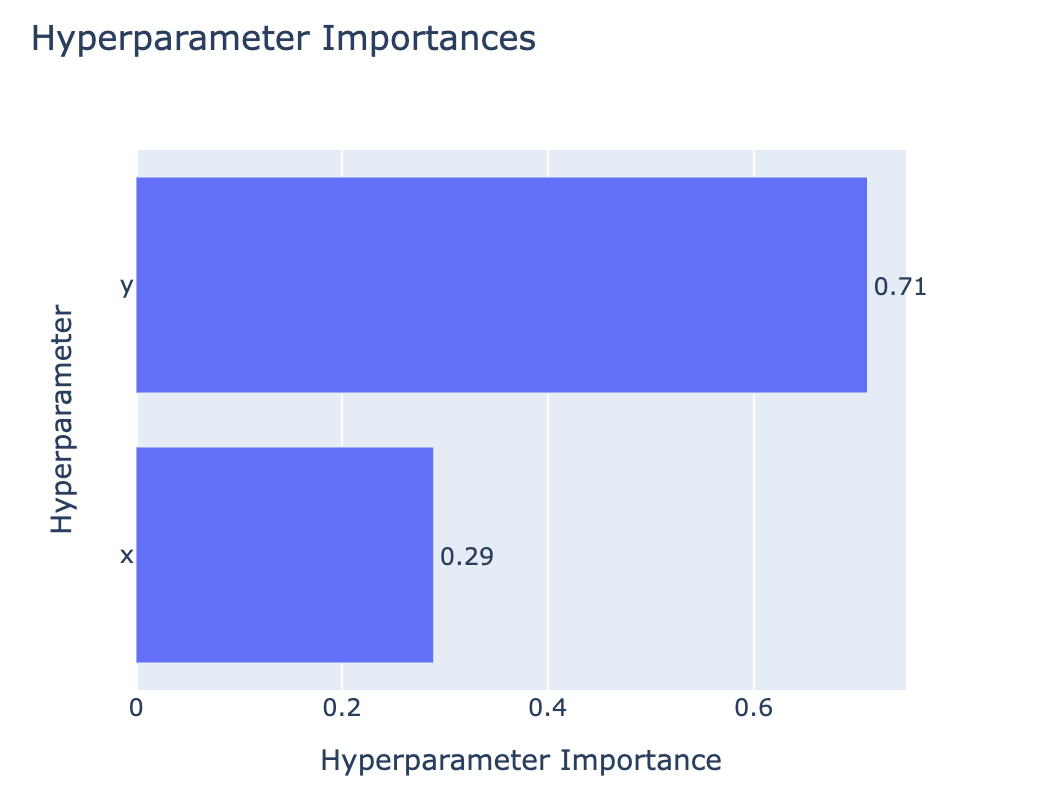

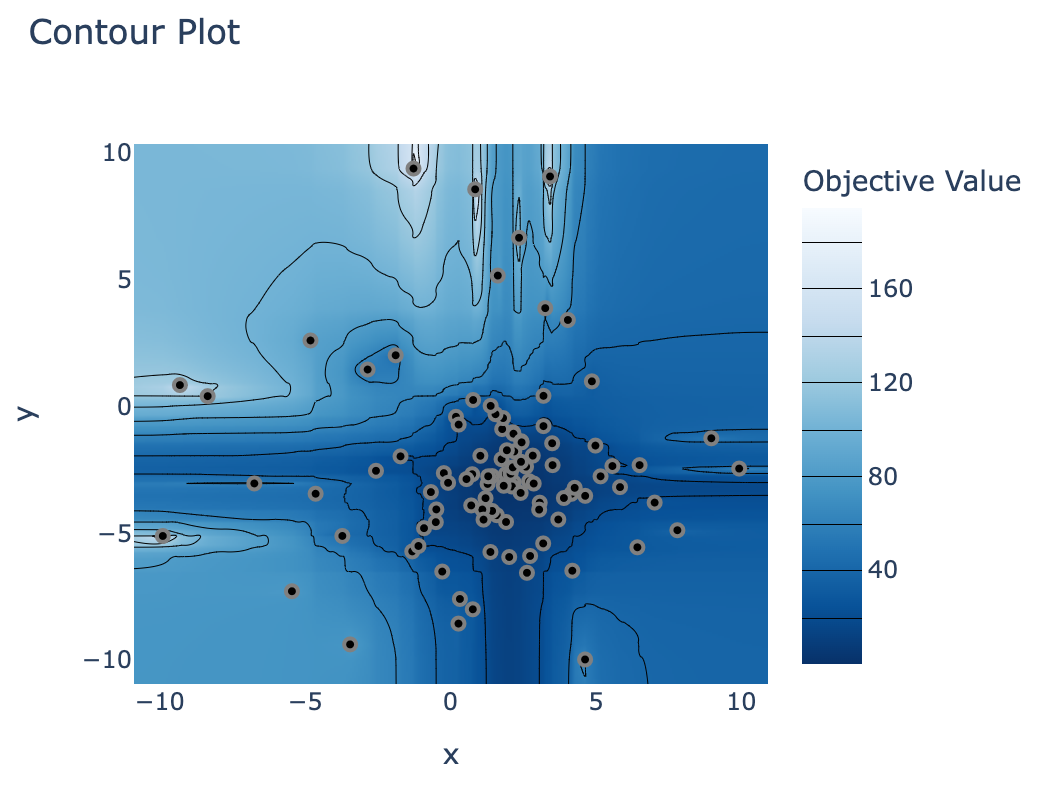

Exploring the study results

optuna.visualization.plot_param_importances(study)

optuna.visualization.plot_contour(study)

Let's practice!
