The basic DQN algorithm

Deep Reinforcement Learning in Python

Timothée Carayol

Principal Machine Learning Engineer, Komment

Basic DQN

- A first step towards the full DQN algorithm

- Features:

  - Generic DRL training loop

  - Q-network

  - Principles of Q-learning
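To make the "Q-network" item concrete, here is a minimal sketch of one in PyTorch. The class name `QNetwork`, the layer sizes, the learning rate, and the choice of 8 state features are assumptions for illustration; only the 4 actions match the Q-value example used later in this deck.

```python
import torch
import torch.nn as nn


class QNetwork(nn.Module):
    """Maps a state vector to one Q-value per action (illustrative sizes)."""

    def __init__(self, state_size, action_size, hidden_size=64):
        super().__init__()
        self.layers = nn.Sequential(
            nn.Linear(state_size, hidden_size),
            nn.ReLU(),
            nn.Linear(hidden_size, hidden_size),
            nn.ReLU(),
            nn.Linear(hidden_size, action_size),
        )

    def forward(self, state):
        # Accept lists or NumPy arrays as well as tensors
        x = torch.as_tensor(state, dtype=torch.float32)
        return self.layers(x)


# Assumed sizes: 8 state features, 4 actions
network = QNetwork(state_size=8, action_size=4)
optimizer = torch.optim.Adam(network.parameters(), lr=1e-4)

q_values = network([0.0] * 8)
print(q_values.shape)  # one Q-value per action
```

Converting the input inside `forward` is a design choice that lets the training loop pass environment observations straight to the network.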

```python
for episode in range(1000):
    state, info = env.reset()
    done = False
    while not done:
        # Action selection
        action = select_action(network, state)
        next_state, reward, terminated, truncated, _ = (
            env.step(action))
        done = terminated or truncated
        # Loss calculation
        loss = calculate_loss(network, state, action,
                              next_state, reward, done)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        state = next_state
```
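The loop above relies on `env`, `network`, `optimizer`, `select_action`, and `calculate_loss` being defined elsewhere. As a rough dry run, the sketch below wires it to a hypothetical `ToyEnv` that only mimics the `reset`/`step` return convention, with a single linear layer standing in for the Q-network. The environment, the discount factor, and the optimizer settings are all assumptions; the two helper functions are compact stand-ins for the ones defined in this deck.

```python
import torch
import torch.nn as nn

gamma = 0.99  # assumed discount factor


class ToyEnv:
    """Hypothetical environment: every episode is truncated after 5 steps."""

    def reset(self):
        self.t = 0
        return [0.0, 1.0], {}

    def step(self, action):
        self.t += 1
        reward = 1.0 if action == 1 else 0.0
        terminated, truncated = False, self.t >= 5
        return [self.t / 5, 1.0], reward, terminated, truncated, {}


def select_action(q_network, state):
    q_values = q_network(torch.tensor(state))
    return torch.argmax(q_values).item()


def calculate_loss(q_network, state, action, next_state, reward, done):
    current_q = q_network(torch.tensor(state))[action]
    next_q = q_network(torch.tensor(next_state)).max()
    target_q = reward + gamma * next_q * (1 - done)
    return nn.MSELoss()(current_q, target_q)


env = ToyEnv()
network = nn.Linear(2, 2)  # stand-in Q-network: 2 state features, 2 actions
optimizer = torch.optim.SGD(network.parameters(), lr=0.01)

total_steps = 0
for episode in range(3):
    state, info = env.reset()
    done = False
    while not done:
        action = select_action(network, state)
        next_state, reward, terminated, truncated, _ = env.step(action)
        done = terminated or truncated
        loss = calculate_loss(network, state, action, next_state, reward, done)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        state = next_state
        total_steps += 1

print(total_steps)  # 3 episodes x 5 steps = 15
```

The point is only to show the control flow: one gradient update per environment step, and an episode ends when either `terminated` or `truncated` is true.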

Action selection in the basic DQN

```python
def select_action(q_network, state):
    # Feed state to network to obtain Q-values
    q_values = q_network(state)
    # Obtain index of action with highest Q-value
    action = torch.argmax(q_values).item()
    return action
```

- Policy: select the action with the highest Q-value

- $a_t = \arg\max_a Q(s_t, a)$

- Here: action 2, with Q-value 0.12

```
Q-values: [-0.01, 0.08, 0.12, -0.07]
Selected action: 2, with Q-value 0.12
```
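The greedy choice can be checked directly on the Q-values from this example. The snippet below is a small sketch that skips the network and applies `torch.argmax` to a hand-written tensor.

```python
import torch

# Q-values taken from the example above, one entry per action
q_values = torch.tensor([-0.01, 0.08, 0.12, -0.07])

# Index of the largest Q-value is the greedy action
action = torch.argmax(q_values).item()
best_q = q_values[action].item()

print(f"Selected action: {action}, with Q-value {best_q:.2f}")
```

Note that this policy is purely greedy: it always exploits the current estimates and never explores.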

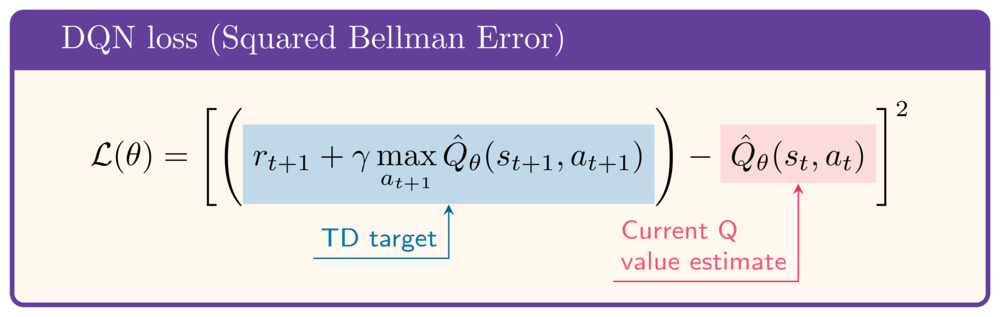

The basic DQN loss function

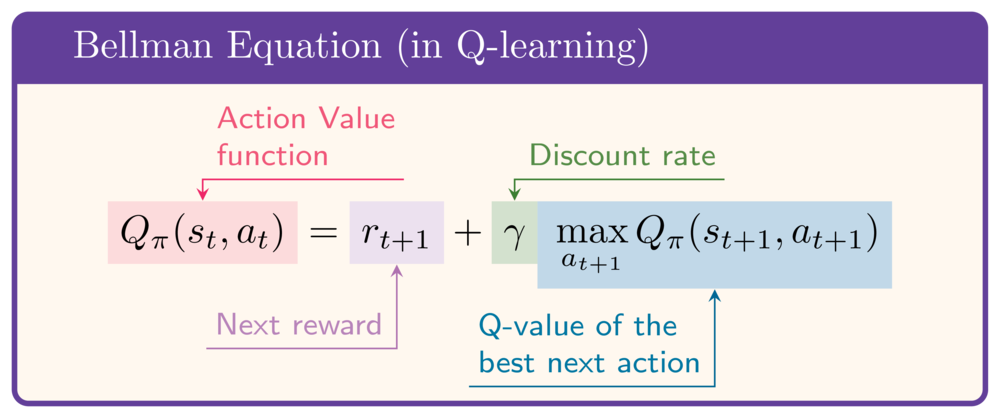

- The action-value function satisfies the Bellman equation

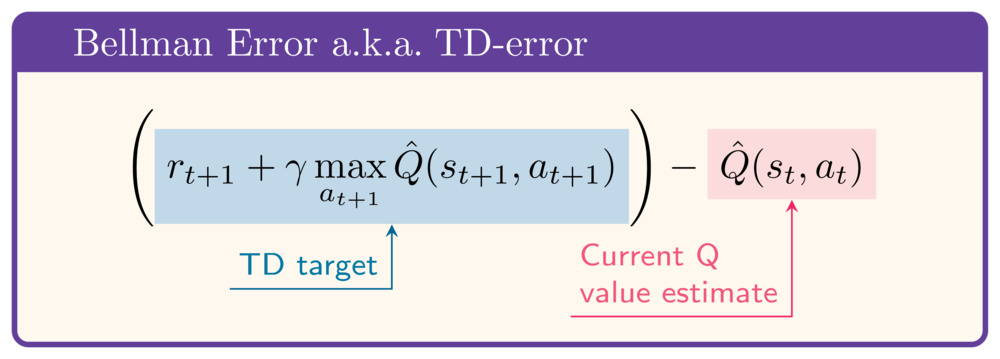

- Idea: minimize the difference between the two sides (the TD error, or Bellman error)

- Use the squared Bellman error as the loss.

The basic DQN loss function

```python
def calculate_loss(
        q_network, state, action, next_state, reward, done):
    q_values = q_network(state)
    current_state_q_value = q_values[action]
    next_state_q_value = q_network(next_state).max()
    target_q_value = reward + gamma * next_state_q_value * (1 - done)
    loss = nn.MSELoss()(current_state_q_value, target_q_value)
    return loss
```

- Current state Q-value:

$Q(s_t, a_t)$

- Next state Q-value:

$\max_a Q(s_{t+1}, a)$

- Target Q-value:

$r_{t+1} + \gamma \max_a Q(s_{t+1}, a)$

- DQN loss:

$$\left(Q(s_t, a_t) - \left(r_{t+1} + \gamma \max_a Q(s_{t+1}, a)\right)\right)^2$$
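A worked numeric instance of the loss may help. The values below are illustrative assumptions rather than figures from the deck (only the current Q-value 0.12 echoes the earlier example), and the discount factor 0.99 is likewise assumed.

```python
gamma = 0.99       # assumed discount factor
current_q = 0.12   # Q(s_t, a_t)
reward = -1.0      # r_{t+1}, illustrative
next_q_values = [0.05, 0.20, -0.10, 0.00]  # Q(s_{t+1}, a) per action, illustrative
done = False       # the episode continues, so the next state still counts

# Target: r_{t+1} + gamma * max_a Q(s_{t+1}, a), zeroed out on the final step
target_q = reward + gamma * max(next_q_values) * (1 - done)

# TD error and its square (the loss for this single transition)
td_error = current_q - target_q
loss = td_error ** 2

print(f"target={target_q:.3f}, td_error={td_error:.3f}, loss={loss:.4f}")
```

Here the target is $-1 + 0.99 \times 0.20 = -0.802$, the TD error is $0.12 - (-0.802) = 0.922$, and the loss is $0.922^2 \approx 0.8501$. With `done = True`, the factor `(1 - done)` drops the next-state term and the target reduces to the reward alone.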

Describing episodes

```python
describe_episode(episode, reward, episode_reward, step)
```

```
| Episode 1 | Duration: 84 steps | Return: -871.38 | Crashed |
| Episode 2 | Duration: 53 steps | Return: -452.68 | Crashed |
| Episode 3 | Duration: 57 steps | Return: -414.22 | Crashed |
| Episode 4 | Duration: 54 steps | Return: -475.09 | Crashed |
| Episode 5 | Duration: 67 steps | Return: -532.31 | Crashed |
| Episode 6 | Duration: 53 steps | Return: -407.00 | Crashed |
| Episode 7 | Duration: 52 steps | Return: -380.45 | Crashed |
| Episode 8 | Duration: 55 steps | Return: -380.75 | Crashed |
| Episode 9 | Duration: 88 steps | Return: -688.68 | Crashed |
| Episode 10 | Duration: 76 steps | Return: -338.06 | Crashed |
```
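The deck does not show how `describe_episode` is implemented. The sketch below is one possible way to produce lines in this format, under a hypothetical name `format_episode`. It assumes the final reward of an episode is exactly +100 on a successful landing and -100 on a crash; that convention and the "Landed" and "Other" labels are assumptions, not something the slides state.

```python
def format_episode(episode, reward, episode_reward, step):
    """Hypothetical helper: build a one-line summary of a finished episode."""
    # Assumption: the last reward encodes the outcome (+100 landed, -100 crashed)
    if reward == 100:
        outcome = "Landed"
    elif reward == -100:
        outcome = "Crashed"
    else:
        outcome = "Other"
    # The loop counter starts at 0, so add 1 for display
    return (f"| Episode {episode + 1} | Duration: {step} steps "
            f"| Return: {episode_reward:.2f} | {outcome} |")


print(format_episode(0, -100, -871.38, 84))
```

Called once per episode after the inner `while` loop exits, a helper like this gives a quick view of whether durations and returns improve over training.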

Let's practice!
