Exploring reward models

Reinforcement Learning from Human Feedback (RLHF)

Mina Parham

AI Engineer

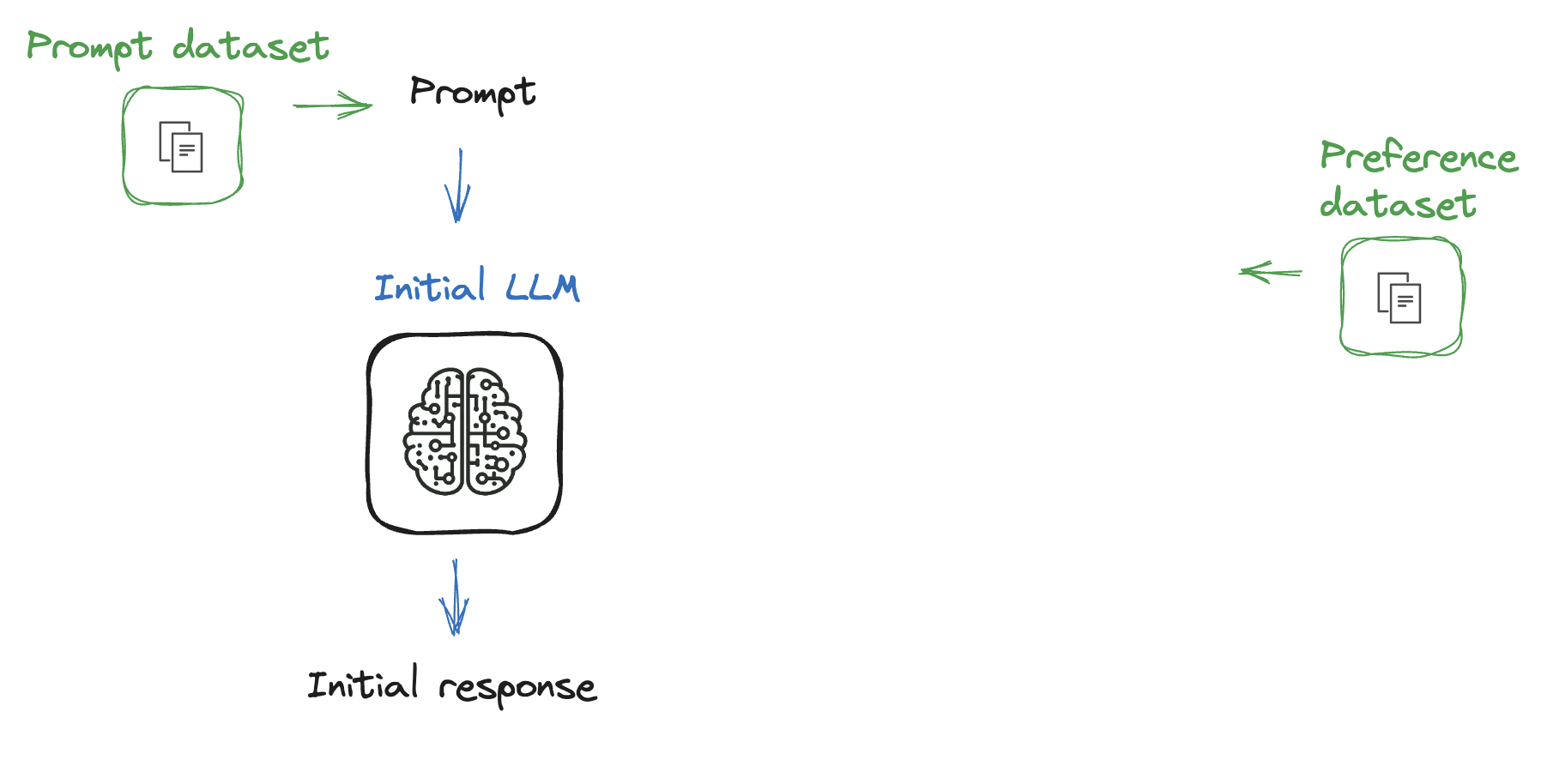

The process so far

What is a reward model?

- The model gives feedback to the agent

- The agent uses the model's evaluations to maximize its reward
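The two bullets above can be sketched as a toy loop: a scoring function stands in for the reward model, and the agent picks whichever candidate response scores highest. The names `toy_reward_model`, `agent_pick_best`, and the keyword heuristic are hypothetical and for illustration only; a real reward model is a trained neural network, not a word list.

```python
# Toy illustration only: a hand-written scorer stands in for a trained reward model.
POLITE_WORDS = {"please", "thanks", "happy", "glad"}  # hypothetical heuristic


def toy_reward_model(response: str) -> float:
    """Return a scalar score; higher means 'more preferred'."""
    cleaned = response.lower()
    for punctuation in ",.!?":
        cleaned = cleaned.replace(punctuation, " ")
    # Count how many words appear in the (made-up) list of preferred words.
    return float(sum(word in POLITE_WORDS for word in cleaned.split()))


def agent_pick_best(candidates: list[str]) -> str:
    """The agent consults the model's scores and keeps the highest-reward response."""
    return max(candidates, key=toy_reward_model)


candidates = [
    "No.",
    "Happy to help, thanks for asking!",
    "Figure it out yourself.",
]
best = agent_pick_best(candidates)
print(best)
```

In RLHF the agent is a language model being fine-tuned rather than a simple `max` over candidates, but the division of roles is the same: the reward model scores, and the agent adjusts its behavior toward higher scores.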

Using the reward trainer

from trl import RewardTrainer, RewardConfig
from transformers import AutoModelForSequenceClassification, AutoTokenizer
from datasets import load_dataset

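# Load the pre-trained model and tokenizer
model = AutoModelForSequenceClassification.from_pretrained("gpt2", num_labels=1)
tokenizer = AutoTokenizer.from_pretrained("gpt2")

# GPT-2 defines no padding token, which batched training needs; reuse the end-of-sequence token
tokenizer.pad_token = tokenizer.eos_token
model.config.pad_token_id = tokenizer.pad_token_id

# Load the dataset in the required format
dataset = load_dataset("path/to/dataset")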
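As a rough picture of what "the required format" means, the sketch below assumes the paired-preference layout with `chosen` and `rejected` text columns that the TRL documentation describes for reward modeling. The example texts and the `check_pairs` helper are hypothetical, and some TRL releases expect these pairs to be pre-tokenized, so check the version you have installed.

```python
# Hypothetical preference records; the texts are made up for illustration.
preference_pairs = [
    {
        "chosen": "Question: What is 2 + 2?\nAnswer: 4.",
        "rejected": "Question: What is 2 + 2?\nAnswer: 5.",
    },
    {
        "chosen": "Question: Name a primary color.\nAnswer: Blue.",
        "rejected": "Question: Name a primary color.\nAnswer: I don't know.",
    },
]

REQUIRED_COLUMNS = {"chosen", "rejected"}  # assumed column names


def check_pairs(rows: list[dict]) -> int:
    """Validate each row and return the number of rows checked.

    Raises ValueError if a row lacks a required column or holds a non-string value.
    """
    for index, row in enumerate(rows):
        missing = REQUIRED_COLUMNS - row.keys()
        if missing:
            raise ValueError(f"row {index} is missing columns: {sorted(missing)}")
        for column in REQUIRED_COLUMNS:
            if not isinstance(row[column], str):
                raise ValueError(f"row {index}, column {column!r} is not a string")
    return len(rows)


n_valid = check_pairs(preference_pairs)
print(f"{n_valid} preference pairs look well-formed")
```

Each record pairs a preferred response with a less preferred one for the same prompt, which is what lets the reward model learn a ranking rather than an absolute label.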

Training the reward model

# Define training arguments
training_args = RewardConfig(
    output_dir="path/to/output/dir",
    per_device_train_batch_size=8,
    per_device_eval_batch_size=8,
    num_train_epochs=3,
    learning_rate=1e-3,
)

Training the reward model

# Initialize the RewardTrainer
trainer = RewardTrainer(
    model=model,
    args=training_args,
    train_dataset=dataset["train"],
    eval_dataset=dataset["validation"],
    tokenizer=tokenizer,  # recent TRL releases rename this argument to processing_class
)

# Train the reward model
trainer.train()
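To make concrete what training optimizes: the TRL documentation describes the reward-modeling objective as a pairwise loss, the negative log-sigmoid of the score gap between the chosen and the rejected response. The sketch below computes that quantity in plain Python for single score pairs. The function name and the sample scores are illustrative assumptions; the actual trainer works on batched tensors.

```python
import math


def pairwise_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Compute -log(sigmoid(reward_chosen - reward_rejected)) in a stable form."""
    diff = reward_chosen - reward_rejected
    # -log(sigmoid(d)) equals log(1 + exp(-d)); branch so exp() never overflows.
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


# Made-up scores: the loss shrinks as the chosen response outscores the rejected one.
for chosen_score, rejected_score in [(0.0, 2.0), (0.0, 0.0), (2.0, 0.0)]:
    loss = pairwise_loss(chosen_score, rejected_score)
    print(f"chosen={chosen_score:+.1f} rejected={rejected_score:+.1f} loss={loss:.4f}")
```

Minimizing this loss pushes the model to assign higher scores to preferred responses, which is why the classification head above is created with `num_labels=1`: it outputs one scalar reward per sequence.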

Let's practice!