Efficient fine-tuning in RLHF

Reinforcement Learning from Human Feedback (RLHF)

Mina Parham

AI Engineer

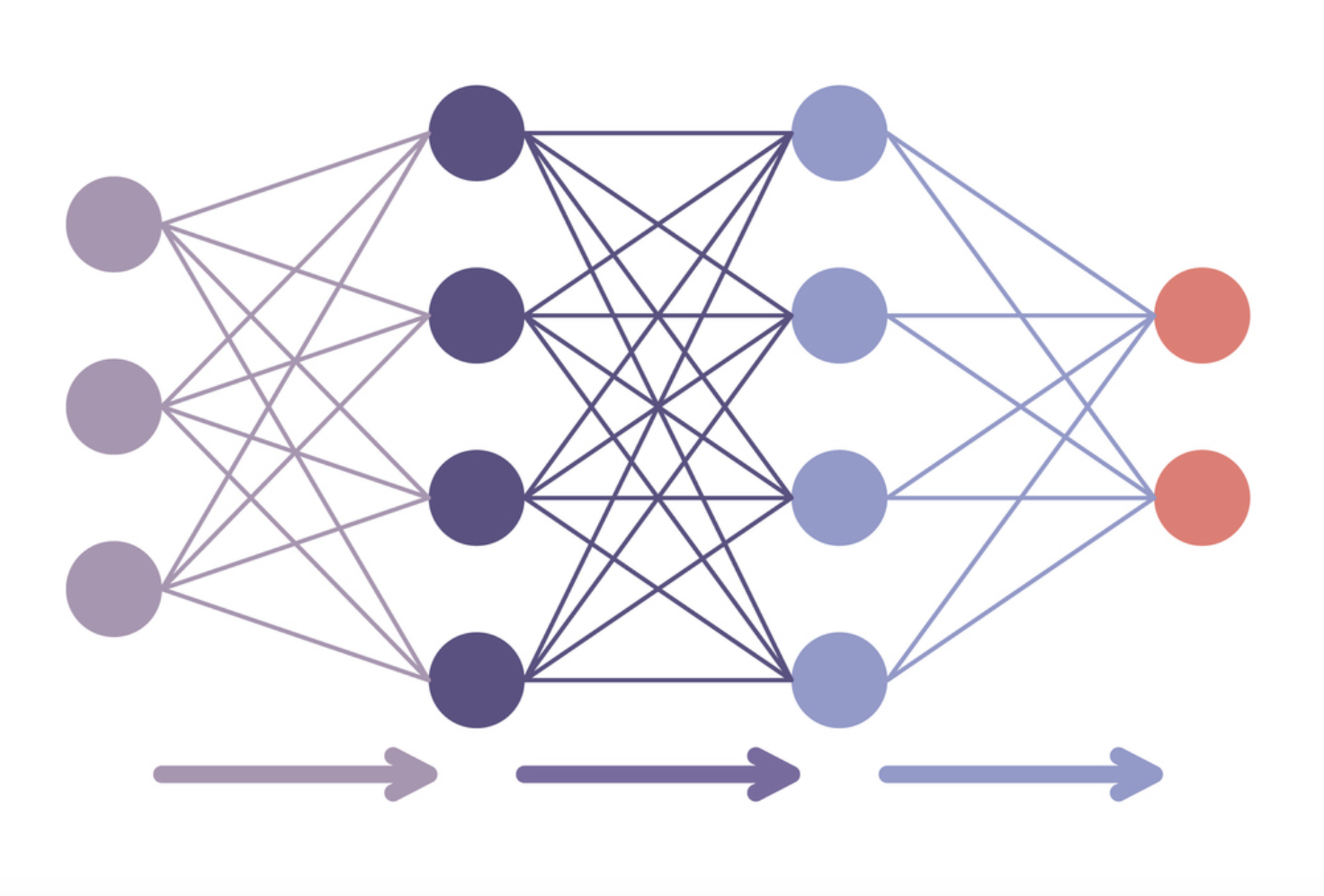

Parameter-efficient fine-tuning

- Fine-tuning the full model

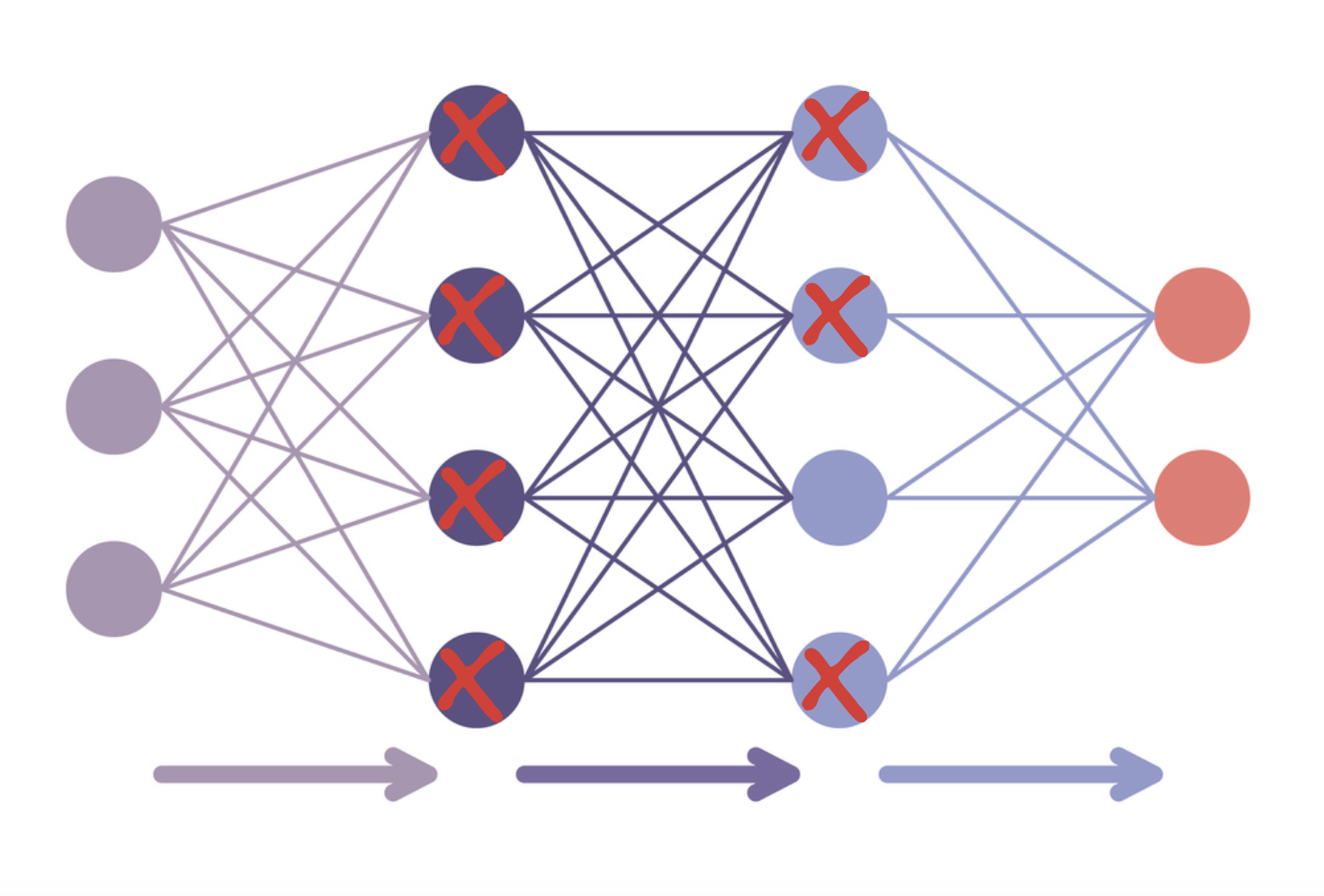

Parameter-efficient fine-tuning

- Fine-tuning with PEFT

- LoRA: trains small low-rank adapters on only a few layers while the base weights stay frozen

- Quantization: lowers the precision of the data types used to store the weights
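To make the LoRA bullet concrete, here is a minimal sketch in plain Python of why the approach is cheap. The layer size (4096 x 4096) and the rank (32) are hypothetical values chosen for illustration, not taken from any particular model.

```python
# Hypothetical layer size and rank, chosen only for illustration.
d_out, d_in, r = 4096, 4096, 32

# Full fine-tuning updates every entry of the weight matrix W (d_out x d_in).
full_params = d_out * d_in

# LoRA freezes W and trains two small matrices: B (d_out x r) and A (r x d_in).
# The effective weight becomes W + (lora_alpha / r) * B @ A.
lora_params = d_out * r + r * d_in

print(full_params)                # 16777216
print(lora_params)                # 262144
print(lora_params / full_params)  # 0.015625
```

Under these assumptions the adapter trains about 1.6% of the values that full fine-tuning would touch for this one layer.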

Step 1: load the active model in 8-bit precision

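from transformers import AutoModelForCausalLM
from peft import prepare_model_for_int8_training

pretrained_model = AutoModelForCausalLM.from_pretrained(
    model_name,
    load_in_8bit=True,  # Quantize the weights to 8-bit at load time
)
pretrained_model_8bit = prepare_model_for_int8_training(pretrained_model)  # Newer PEFT releases call this prepare_model_for_kbit_training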
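As a rough illustration of what "8-bit precision" means, the sketch below applies simple absmax quantization to a handful of toy weights in plain Python. This is a simplified stand-in: the 8-bit loader used above relies on a more elaborate scheme, and the helper names and weight values here are hypothetical.

```python
def quantize_absmax_int8(values):
    """Quantize floats to integers in [-127, 127] with one shared scale (absmax)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.5, -1.27, 0.003, 1.0]  # toy weights, not from a real model
codes, scale = quantize_absmax_int8(weights)
restored = dequantize(codes, scale)

print(codes)  # [50, -127, 0, 100]

# Rounding moves each weight by at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(max_error <= scale / 2)  # True

# Storage per weight: 4 bytes in float32 versus 1 byte in int8.
n_params = 1_000_000_000  # hypothetical 1B-parameter model
gb_float32 = n_params * 4 / 1e9
gb_int8 = n_params * 1 / 1e9
print(gb_float32, gb_int8)  # 4.0 1.0
```

The small weight 0.003 collapses to 0, which shows the trade-off: memory drops by a factor of four relative to float32, at the cost of some precision.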

Step 2: add extra trainable adapters using peft

from peft import LoraConfig, get_peft_model
from trl import AutoModelForCausalLMWithValueHead

lora_config = LoraConfig(
    r=32,  # Rank of the low-rank matrices
    lora_alpha=32,  # Scaling factor for the LoRA updates
    lora_dropout=0.1,  # Dropout rate for LoRA layers
    bias="lora_only",  # Only update bias terms for LoRA layers, others remain frozen
)
lora_model = get_peft_model(pretrained_model_8bit, lora_config)
model = AutoModelForCausalLMWithValueHead.from_pretrained(lora_model)  # Adds the value head PPO needs
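The roles of `r` and `lora_alpha` can be sketched with a toy forward pass in plain Python. The sizes are deliberately tiny (the slide uses r=32 and lora_alpha=32), dropout is left out, and the helper names are hypothetical. The zero initialization of `B` follows the usual LoRA setup.

```python
import random


def matvec(M, x):
    """Multiply matrix M (list of rows) by vector x."""
    return [sum(m * xi for m, xi in zip(row, x)) for row in M]


def lora_forward(W, A, B, x, r, lora_alpha):
    """y = W x + (lora_alpha / r) * B (A x); W is frozen, A and B are trainable."""
    scaling = lora_alpha / r
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    return [b + scaling * u for b, u in zip(base, update)]


random.seed(0)
d, r, lora_alpha = 4, 2, 2  # toy sizes; scaling = lora_alpha / r = 1.0
W = [[random.gauss(0, 1) for _ in range(d)] for _ in range(d)]  # frozen weights
A = [[random.gauss(0, 1) for _ in range(d)] for _ in range(r)]  # trainable, random init
B = [[0.0] * r for _ in range(d)]                               # trainable, zero init
x = [1.0, 2.0, 3.0, 4.0]

# With B at zero the adapter contributes nothing, so training starts from the base model.
same_as_base = lora_forward(W, A, B, x, r, lora_alpha) == matvec(W, x)
print(same_as_base)  # True
```

With r=32 and lora_alpha=32 as in the config above, the scaling factor is likewise 1.0.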

Step 3: use a single model for both the reference and active logits

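from trl import PPOTrainer

ppo_trainer = PPOTrainer(
    config,  # The PPOConfig for this run (not the LoRA config from Step 2)
    model,  # Our PPO model
    ref_model=None,  # No separate copy: with a PEFT model, reference logits come from the same model with adapters disabled
    tokenizer=tokenizer,
    dataset=dataset,
    data_collator=collator,
    optimizer=optimizer,
)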
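The idea behind `ref_model=None` can be sketched with a toy model in plain Python: the same object yields active logits with the adapter on and reference logits with it switched off. `ToyAdapterModel` and its numbers are hypothetical and only mimic the adapter-disabling pattern; this is not the trainer's actual implementation.

```python
import math
from contextlib import contextmanager


class ToyAdapterModel:
    """Hypothetical stand-in for a LoRA model: frozen base logits plus a trainable offset."""

    def __init__(self, base_logits, adapter_delta):
        self.base_logits = base_logits
        self.adapter_delta = adapter_delta
        self.adapter_enabled = True

    @contextmanager
    def disable_adapter(self):
        """Temporarily switch the adapter off, then restore it."""
        self.adapter_enabled = False
        try:
            yield
        finally:
            self.adapter_enabled = True

    def logits(self):
        if not self.adapter_enabled:
            return list(self.base_logits)
        return [b + d for b, d in zip(self.base_logits, self.adapter_delta)]


def log_softmax(logits):
    m = max(logits)
    log_z = m + math.log(sum(math.exp(l - m) for l in logits))
    return [l - log_z for l in logits]


model = ToyAdapterModel(base_logits=[2.0, 1.0, 0.0], adapter_delta=[0.0, 0.5, -0.5])

active_logp = log_softmax(model.logits())   # adapter on: the policy being trained
with model.disable_adapter():
    ref_logp = log_softmax(model.logits())  # adapter off: the frozen reference

# KL(active || reference): a measure of how far the trained policy has drifted.
kl = sum(math.exp(a) * (a - r) for a, r in zip(active_logp, ref_logp))
print(kl > 0)  # True
```

Because the base weights are frozen, only one copy of the large model has to sit in memory, which is the saving this step is after.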

Let's practice!
