Introduction to RLHF

Reinforcement Learning from Human Feedback (RLHF)

Mina Parham

AI Engineer

Welcome to the course!

- Instructor: Mina Parham

- AI Engineer

- Large Language Models (LLMs)

- Reinforcement Learning from Human Feedback (RLHF)

- Topic: Reinforcement Learning from Human Feedback (RLHF)

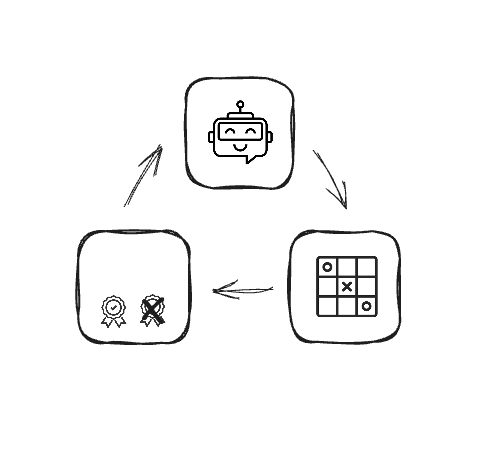

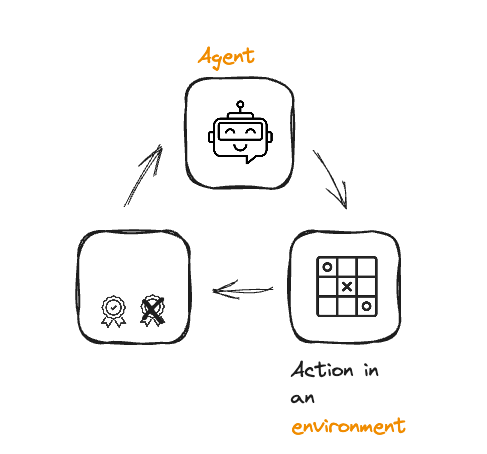

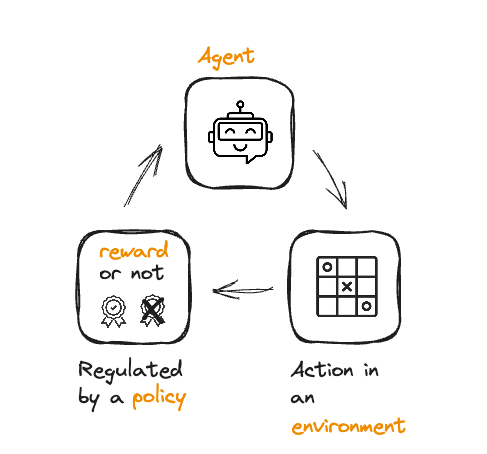

Review of reinforcement learning

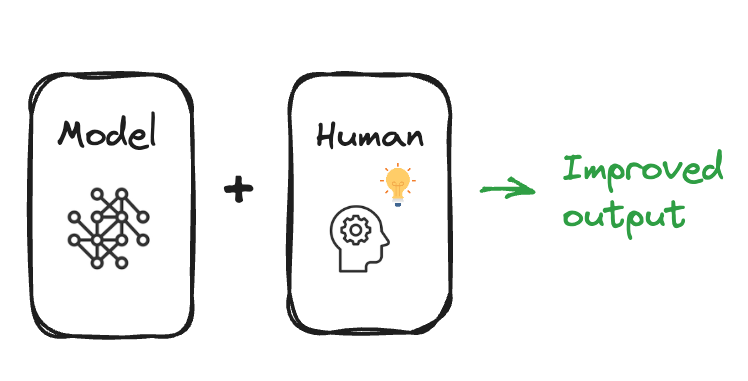

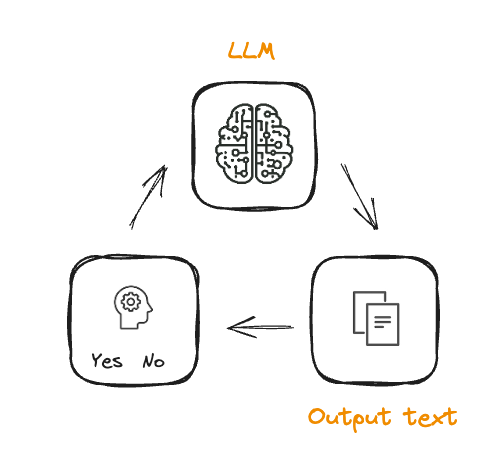

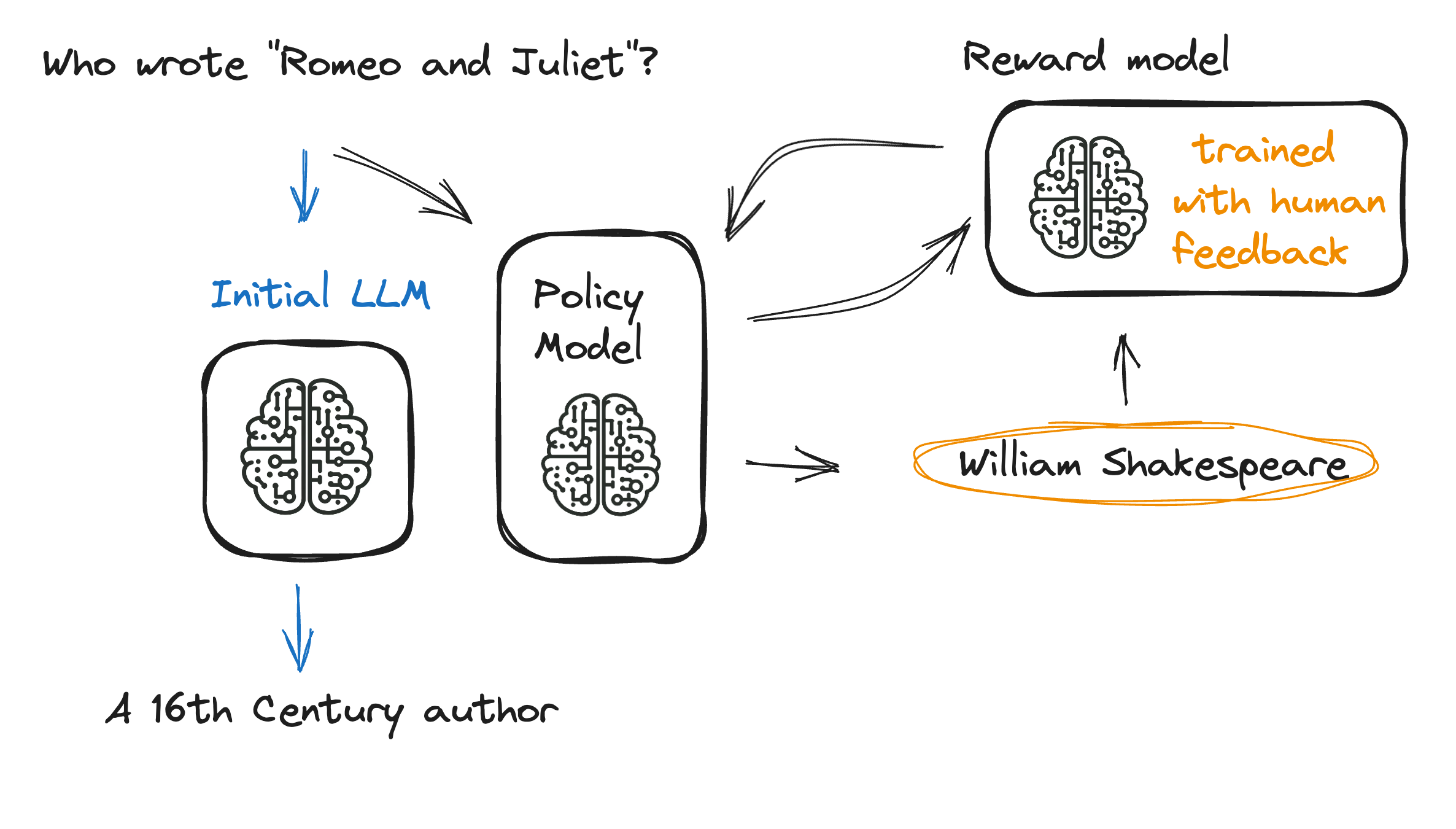
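As a refresher on the reinforcement learning loop, here is a minimal sketch in which an agent picks an action, the environment returns a reward, and the agent updates its estimates. The two-armed bandit environment, the function name `run_bandit`, and all parameter values are illustrative assumptions, not material from the course.

```python
import random

def run_bandit(reward_probs, steps=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy agent on a toy multi-armed bandit (hypothetical environment)."""
    rng = random.Random(seed)
    values = [0.0] * len(reward_probs)  # agent's running reward estimate per action
    counts = [0] * len(reward_probs)    # number of times each action was tried
    for _ in range(steps):
        # Policy: explore with probability epsilon, otherwise exploit the best estimate.
        if rng.random() < epsilon:
            action = rng.randrange(len(reward_probs))
        else:
            action = max(range(len(values)), key=values.__getitem__)
        # Environment: reward 1 with the action's hidden probability, else 0.
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        # Learning: move the estimate toward the observed reward (incremental mean).
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]
    return values

values = run_bandit([0.2, 0.8])
print(values)
```

After enough steps the estimate for the second action should be the higher one, so the agent ends up preferring the action that pays off more often.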

From RL to RLHF

- Training a reward model

- Aligning with human preferences
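To make the reward-model idea concrete, the sketch below shows one common way to learn from human preferences: a pairwise loss that is small when the preferred response scores higher than the rejected one. The slides do not say which loss the course uses, so this Bradley-Terry style formulation and the name `preference_loss` are assumptions for illustration only.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected))."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch to avoid overflow in exp.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Hypothetical reward scores for a preferred and a rejected response.
agrees = preference_loss(2.0, -1.0)     # model ranks the pair like the human did
disagrees = preference_loss(-1.0, 2.0)  # model ranks the pair the other way round
print(agrees, disagrees)
```

Minimizing this loss over many labeled pairs pushes the reward model to score human-preferred responses higher.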

LLM fine-tuning in RLHF

- Training the initial LLM

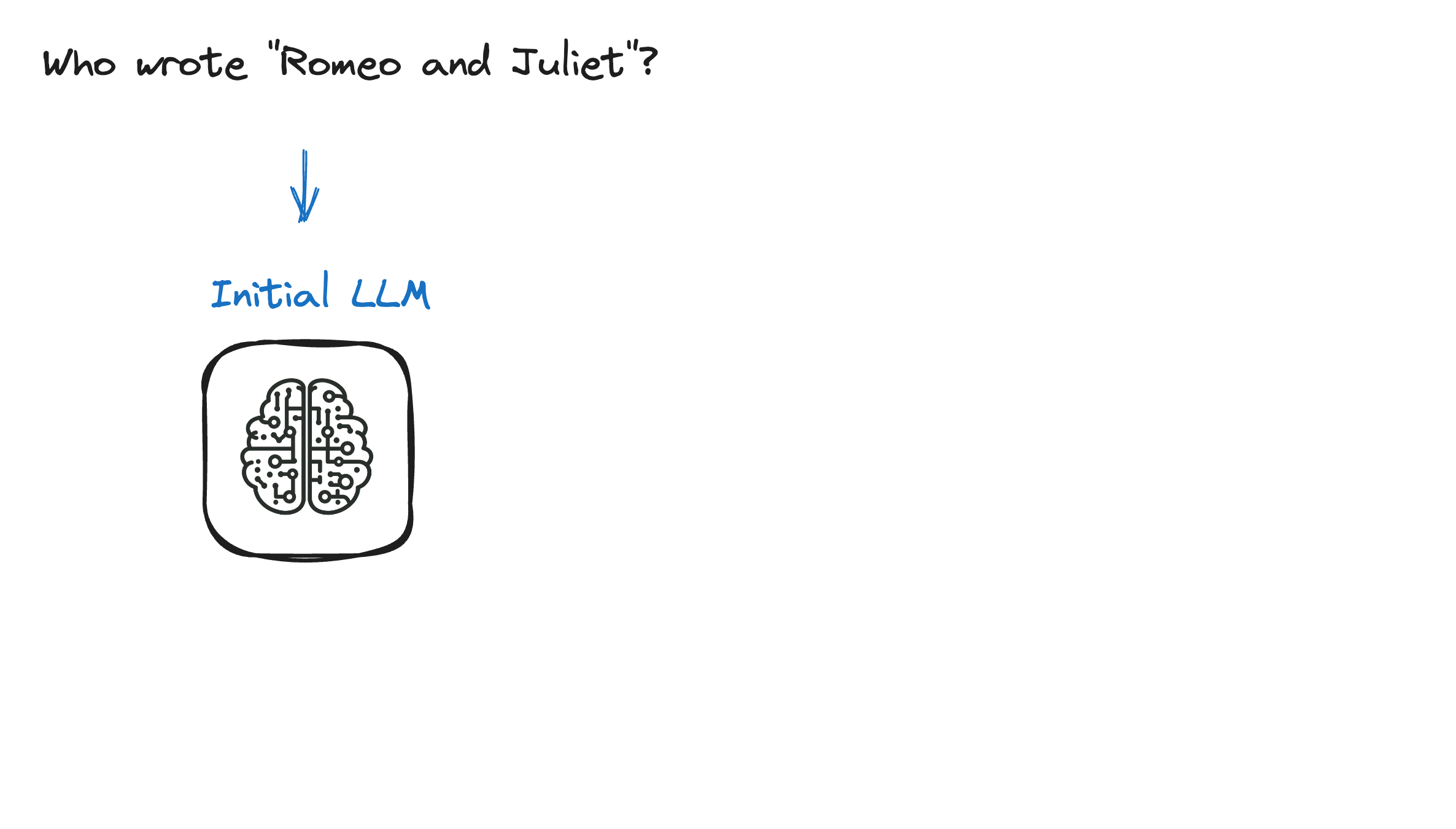

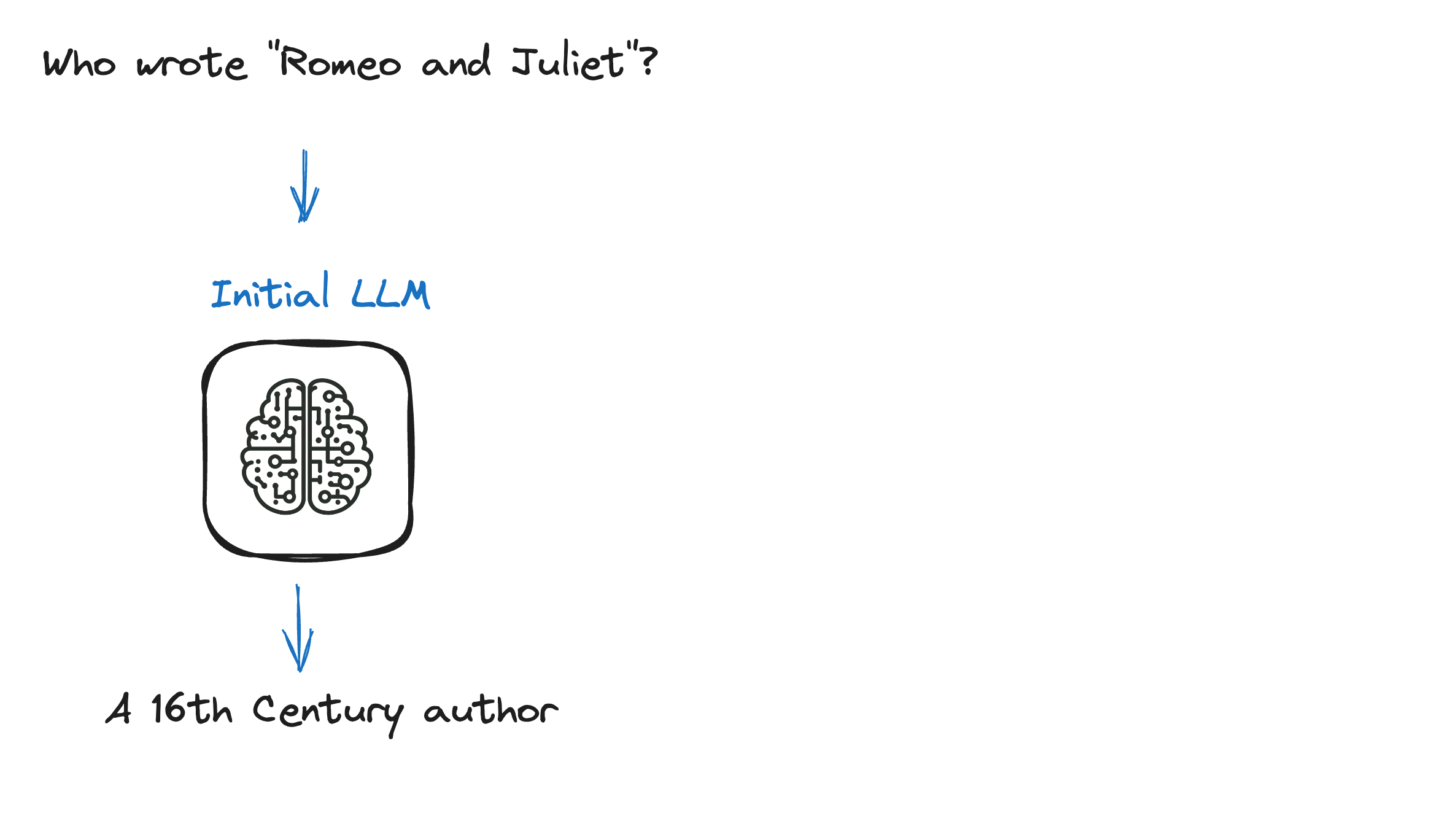

The complete RLHF process

Interacting with RLHF-tuned LLMs

- Pre-trained RLHF models on Hugging Face 🤗

    from transformers import pipeline

    text_generator = pipeline('text-generation', model='lvwerra/gpt2-imdb-pos-v2')

    # Provide a review prompt
    review_prompt = "This is definitely a"

    # Generate the continuation
    output = text_generator(review_prompt, max_length=50)

    # Print the generated text
    print(output[0]['generated_text'])

This is definitely a crucial improvement.

Interacting with RLHF-tuned LLMs

    from transformers import pipeline, AutoModelForSequenceClassification, AutoTokenizer

    # Instantiate the pre-trained model and tokenizer
    model = AutoModelForSequenceClassification.from_pretrained("lvwerra/distilbert-imdb")
    tokenizer = AutoTokenizer.from_pretrained("lvwerra/distilbert-imdb")

    # Use pipeline to create the sentiment analyzer
    sentiment_analyzer = pipeline('sentiment-analysis', model=model, tokenizer=tokenizer)

    # Pass the text to the sentiment analyzer and print the result
    sentiment = sentiment_analyzer("This is definitely a crucial improvement.")
    print(f"Sentiment Analysis Result: {sentiment}")

positive
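A sentiment result like the one above can be reduced to a single number, which is the kind of signal a reward function provides. The sketch below assumes the result has the usual `transformers` pipeline shape (a list of dicts with `label` and `score` keys) and that the labels are named `POSITIVE` and `NEGATIVE`; the helper name `sentiment_to_reward` and the example scores are made up for illustration.

```python
def sentiment_to_reward(result):
    """Map a sentiment-analysis result to a scalar in [-1, 1]."""
    top = result[0]                 # prediction for the first (only) input text
    label = top["label"].upper()    # assumed label names: POSITIVE / NEGATIVE
    score = float(top["score"])     # classifier confidence in that label
    # Positive sentiment yields a positive reward, anything else a negative one.
    return score if label == "POSITIVE" else -score

# Hand-written stand-in for pipeline output, so no model download is needed.
example = [{"label": "POSITIVE", "score": 0.99}]
print(sentiment_to_reward(example))
```

If the model reports different label names, the comparison in the last line of the function would need to be adjusted to match.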

Let's practice!
