Model metrics and adjustments

Reinforcement Learning from Human Feedback (RLHF)

Mina Parham

AI Engineer

Why use a reference model?

- Without one, the model's outputs can become meaningless

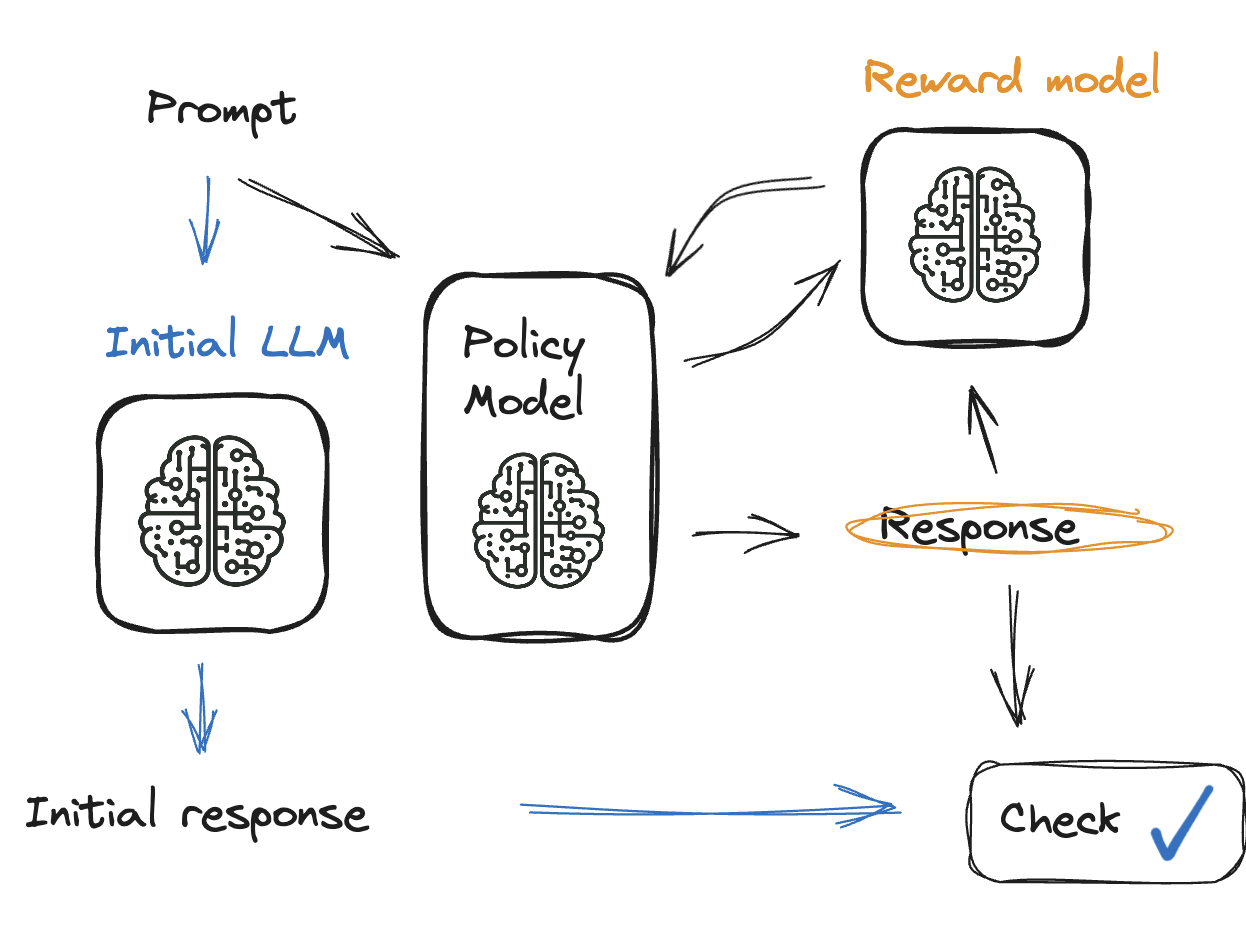

Checking the model output

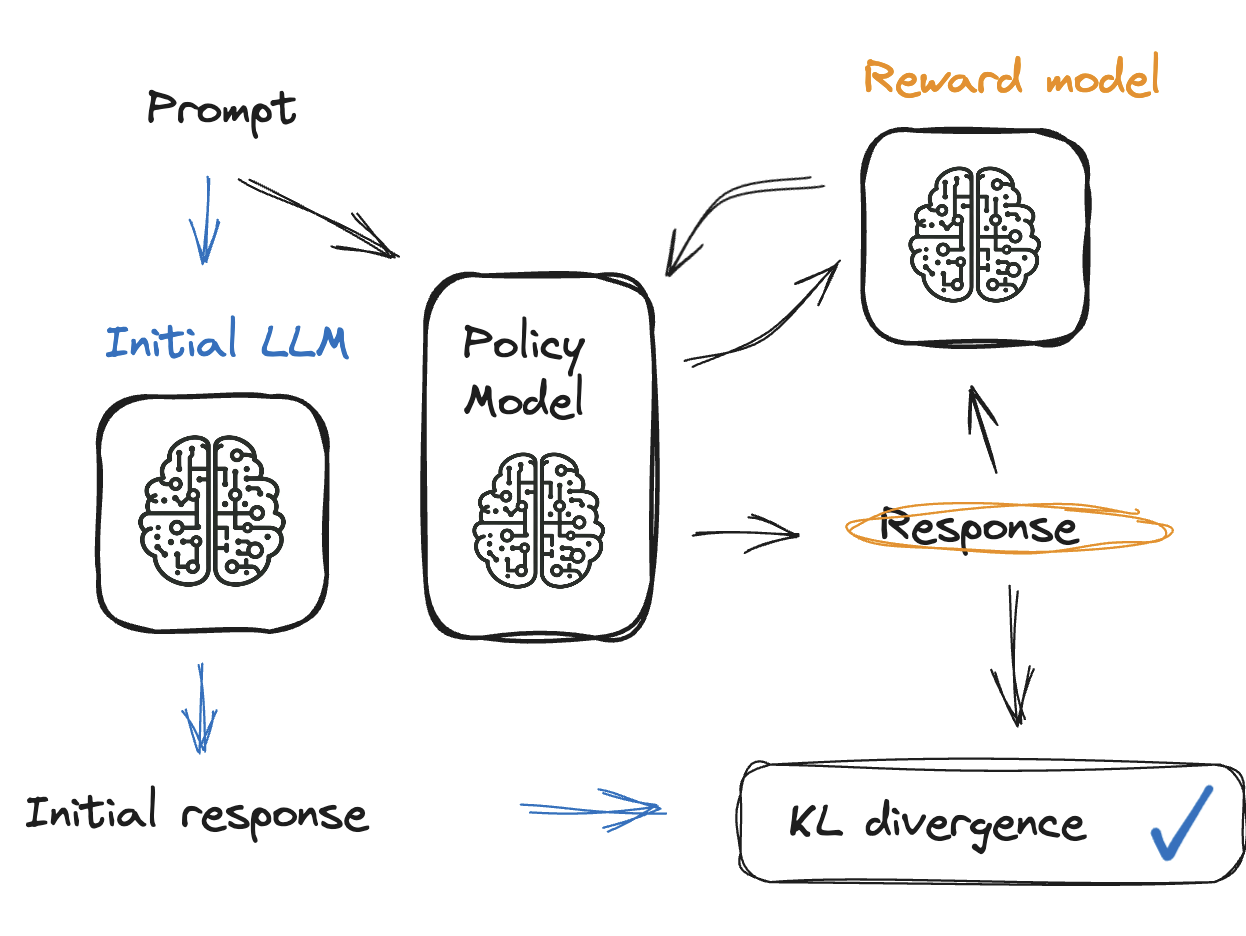

Solution: KL divergence

- Add a penalty to the reward signal

- The penalty corrects course when outputs become irrelevant

- KL divergence compares the current model with the reference model

- It is never negative; during training it should typically stay between 0 and 10
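As a rough illustration of the points above, the sketch below computes the KL divergence between a policy's next-token distribution and a reference distribution, then subtracts a scaled penalty from a reward. The helper names `kl_divergence` and `penalized_reward`, the coefficient `beta`, and the three-token distributions are all assumptions made for this example; they are not taken from the course or from any library.

```python
import numpy as np

def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same tokens."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    mask = p > 0  # terms where p is zero contribute nothing to the sum
    return float(np.sum(p[mask] * np.log(p[mask] / q[mask])))

def penalized_reward(reward, kl, beta=0.1):
    """Hypothetical helper: subtract a KL penalty scaled by beta."""
    return reward - beta * kl

# Illustrative next-token distributions (made-up numbers)
reference = [0.5, 0.3, 0.2]          # reference model
close_policy = [0.45, 0.35, 0.20]    # policy still close to the reference
drifted_policy = [0.02, 0.03, 0.95]  # policy that has drifted far away

kl_close = kl_divergence(close_policy, reference)
kl_drifted = kl_divergence(drifted_policy, reference)

print(f"KL (close policy):   {kl_close:.4f}")
print(f"KL (drifted policy): {kl_drifted:.4f}")
print(f"Penalized reward, close:   {penalized_reward(1.0, kl_close):.4f}")
print(f"Penalized reward, drifted: {penalized_reward(1.0, kl_drifted):.4f}")
```

The drifted policy receives the larger penalty, so for the same raw reward it ends up with the lower penalized reward. This is the course-correcting effect described in the bullets.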

Adjusting parameters

generation_kwargs = {
    "min_length": -1,  # don't ignore the EOS token
    "top_k": 0.0,  # no top-k sampling
    "top_p": 1.0,
    "do_sample": True,
    "pad_token_id": tokenizer.eos_token_id,
    "max_new_tokens": 32,
}

- These parameters are passed to the policy model
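To make the effect of `top_k` and `top_p` more concrete, here is a minimal NumPy sketch of top-k and nucleus (top-p) filtering over a toy distribution. The function `filter_probs` and the four-token distribution are hypothetical and written only for this illustration; this is not the generation library's implementation. It shows that `top_k=0` together with `top_p=1.0` leaves the distribution untouched, so sampling draws from the full vocabulary.

```python
import numpy as np

def filter_probs(probs, top_k=0, top_p=1.0):
    """Hypothetical helper: apply top-k and top-p filtering, then renormalize."""
    probs = np.asarray(probs, dtype=float)
    keep = np.ones_like(probs, dtype=bool)
    order = np.argsort(probs)[::-1]  # token indices, most likely first
    if top_k > 0:
        # drop everything after the k most likely tokens
        keep[order[int(top_k):]] = False
    if top_p < 1.0:
        cumulative = np.cumsum(probs[order])
        # keep the smallest prefix whose cumulative mass reaches top_p
        cutoff = int(np.searchsorted(cumulative, top_p)) + 1
        keep[order[cutoff:]] = False
    filtered = np.where(keep, probs, 0.0)
    return filtered / filtered.sum()

toy_probs = [0.5, 0.3, 0.15, 0.05]  # made-up next-token probabilities

unfiltered = filter_probs(toy_probs, top_k=0, top_p=1.0)  # settings from the slide
top2 = filter_probs(toy_probs, top_k=2)                   # only the 2 best tokens
nucleus = filter_probs(toy_probs, top_p=0.9)              # drops the least likely token

print(unfiltered)
print(top2)
print(nucleus)
```

With no filtering, low-probability tokens can still be sampled. That keeps exploration during training, while the KL penalty is what keeps the policy from drifting too far.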

Checking the reward model

Checking the output (reward)

reward_model_results.head()

| ID | Comment                                     | Sentiment | Reward |
|----|---------------------------------------------|-----------|--------|
| 1  | This event was lit! So much fun!            | Positive  | 0.9    |
| 2  | Terrible experience, never attending again. | Negative  | -0.8   |
| 3  | It was okay, nothing extraordinary.         | Neutral   | 0.2    |
| 4  | The event was poorly organized and chaotic. | Negative  | -0.85  |
| 5  | Had an amazing time with great people!      | Positive  | 0.95   |

Checking the reward model

- 👍 👎 Check extreme cases

extreme_positive = reward_model_results[reward_model_results['Reward'] >= 0.9]
extreme_negative = reward_model_results[reward_model_results['Reward'] <= -0.8]

- 🧘 Make sure the dataset is balanced

sentiment_distribution = reward_model_results['Sentiment'].value_counts()

- 📊 Normalize the reward model's outputs

from sklearn.preprocessing import MinMaxScaler
scaler = MinMaxScaler(feature_range=(-1, 1))
normalized_rewards = scaler.fit_transform(reward_model_results[['Reward']])
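The three checks can be tried end to end on the five rows shown in the table. The sketch below rebuilds `reward_model_results` by hand and, to stay pandas-only, replaces the scaler with a small hypothetical helper `min_max_scale` that applies the same min-max formula by hand. The helper and the `Reward_scaled` column name are assumptions for this example.

```python
import pandas as pd

# The five rows from the table above
reward_model_results = pd.DataFrame({
    "ID": [1, 2, 3, 4, 5],
    "Comment": [
        "This event was lit! So much fun!",
        "Terrible experience, never attending again.",
        "It was okay, nothing extraordinary.",
        "The event was poorly organized and chaotic.",
        "Had an amazing time with great people!",
    ],
    "Sentiment": ["Positive", "Negative", "Neutral", "Negative", "Positive"],
    "Reward": [0.9, -0.8, 0.2, -0.85, 0.95],
})

# 1. Extreme cases: do the strongest scores match the clearest comments?
extreme_positive = reward_model_results[reward_model_results["Reward"] >= 0.9]
extreme_negative = reward_model_results[reward_model_results["Reward"] <= -0.8]

# 2. Balance: how many examples does each sentiment class have?
sentiment_distribution = reward_model_results["Sentiment"].value_counts()

# 3. Normalization: rescale rewards linearly into [low, high]
def min_max_scale(values, low=-1.0, high=1.0):
    """Hypothetical helper applying the min-max formula by hand."""
    span = values.max() - values.min()
    return (values - values.min()) / span * (high - low) + low

reward_model_results["Reward_scaled"] = min_max_scale(reward_model_results["Reward"])

print(extreme_positive[["ID", "Reward"]])
print(extreme_negative[["ID", "Reward"]])
print(sentiment_distribution)
print(reward_model_results[["ID", "Reward", "Reward_scaled"]])
```

After scaling, the lowest reward maps to -1 and the highest to 1, with the others spread linearly in between.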

Let's practice!
