Integrating diverse feedback sources

Reinforcement Learning from Human Feedback (RLHF)

Mina Parham

AI Engineer

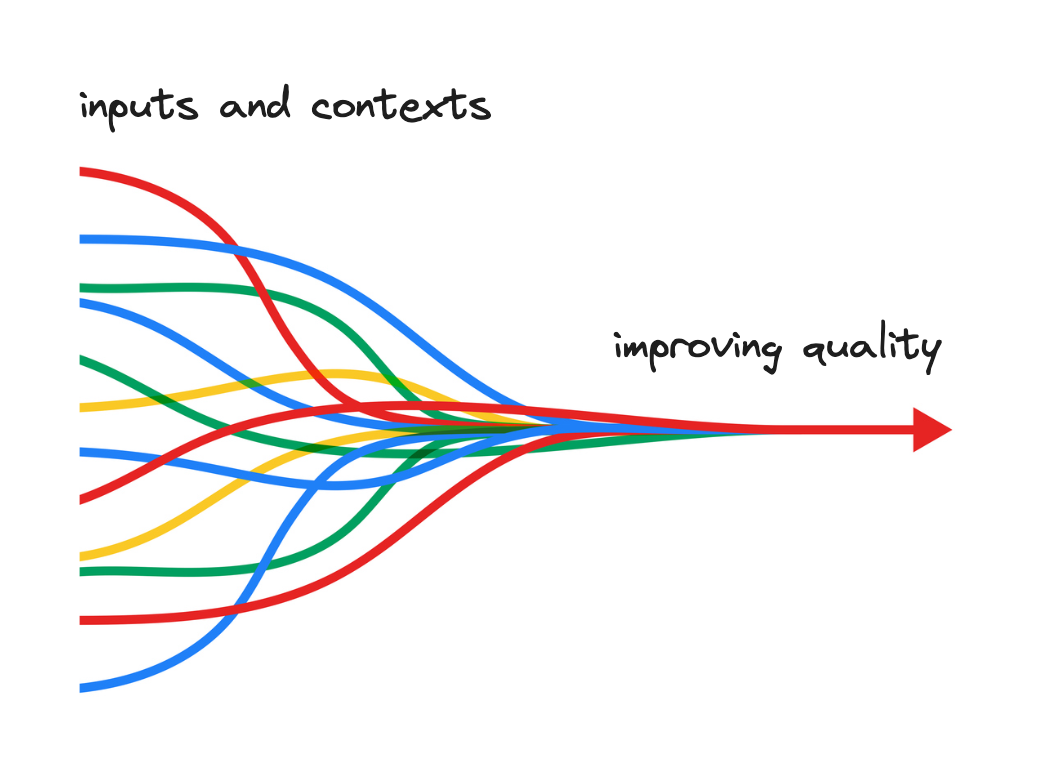

Better model generalization

- Represents diverse viewpoints and contexts

- Generalizes across preferences and values

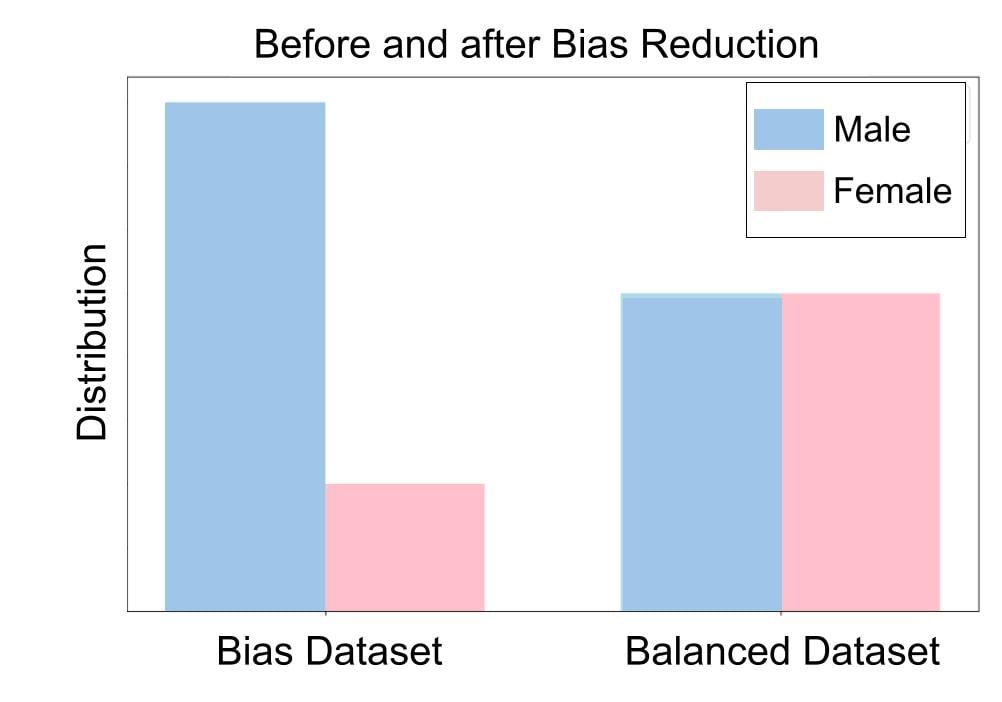

Reduced bias

- Reduces the influence of individual bias

- Produces more balanced and fair model outputs

Better alignment with human values

- Captures complex human preferences

- Represents different cultures and backgrounds

Better adaptability

- The model responds to a wider range of user needs and preferences

- Represents a variety of viewpoints

Improved robustness

- Resilient to varied input types

- Improved performance

Integrating preference data from multiple sources

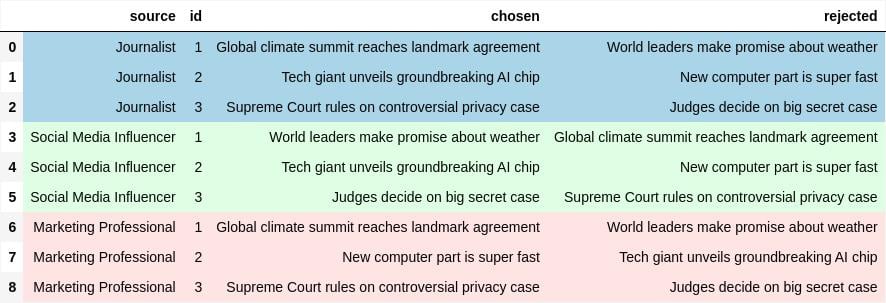

Preference data preference_df with the sources 'Journalist', 'Social Media Influencer', and 'Marketing Professional':
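The table itself is not reproduced in this text. As a minimal sketch, assuming the column names used by the code in this section (`id`, `source`, `chosen`, `rejected`) and invented response labels, a frame of this shape could be built as follows:

```python
import pandas as pd

# Hypothetical data: the ids and response labels are made up for illustration.
# Only the column names and the three source names come from the slides.
preference_df = pd.DataFrame({
    "id": [1, 1, 1, 2, 2, 2],
    "source": [
        "Journalist", "Social Media Influencer", "Marketing Professional",
    ] * 2,
    "chosen": [
        "response_a", "response_a", "response_b",
        "response_c", "response_d", "response_c",
    ],
    "rejected": [
        "response_b", "response_b", "response_a",
        "response_d", "response_c", "response_d",
    ],
})

print(preference_df)
```

Each row records which of two responses one source preferred for a given `id`, so every `id` appears once per source.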

Majority voting

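This example data is easy to integrate. First define a majority-vote function that returns the most common (chosen, rejected) pair in a group:

    from collections import Counter

    def majority_vote(df):
        # Count how often each (chosen, rejected) pair occurs in the group
        votes = Counter(zip(df['chosen'], df['rejected']))
        # Return the most frequent pair
        return max(votes, key=votes.get)

Then group by 'id' and apply it:

    df_majority = preference_df.groupby('id').apply(majority_vote)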
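To make the voting step concrete, here is a small self-contained sketch. The data frame `toy_df` and its response labels are hypothetical. The groups are looped over explicitly instead of using `groupby(...).apply(...)`, which is only an alternative way of calling the same function.

```python
from collections import Counter

import pandas as pd


def majority_vote(df):
    # Count each (chosen, rejected) pair within one id group.
    votes = Counter(zip(df["chosen"], df["rejected"]))
    # Return the most frequent pair; on a tie, the pair seen first wins.
    return max(votes, key=votes.get)


# Hypothetical data: three sources rate two prompts.
toy_df = pd.DataFrame({
    "id": [1, 1, 1, 2, 2, 2],
    "source": [
        "Journalist", "Social Media Influencer", "Marketing Professional",
    ] * 2,
    "chosen": ["response_a", "response_a", "response_b",
               "response_c", "response_d", "response_c"],
    "rejected": ["response_b", "response_b", "response_a",
                 "response_d", "response_c", "response_d"],
})

# One majority pair per id.
majority = {
    prompt_id: majority_vote(group)
    for prompt_id, group in toy_df.groupby("id")
}
print(majority)
```

For `id` 1, two of the three sources chose `response_a` over `response_b`, so that pair wins. With an even number of sources, ties are possible and are resolved here by order of appearance, which may not be what a real pipeline wants.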

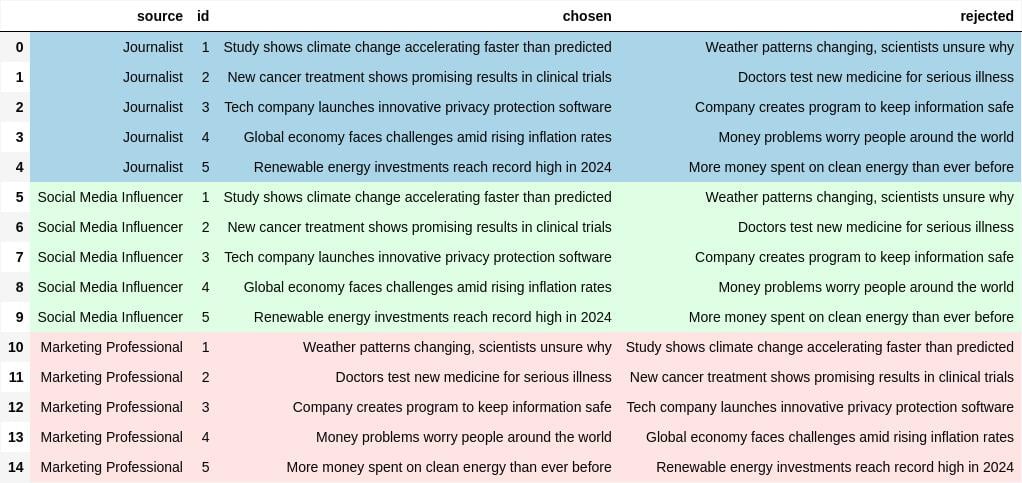

Unreliable preference data sources

Preference data preference_df2 with the same three experts:


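Iterate over the rows of preference_df2 to identify the unreliable source:

    # Majority (chosen, rejected) pair for each id
    df_majority = preference_df2.groupby('id').apply(majority_vote)
    # Start every source with zero disagreements
    disagreements = {source: 0 for source in preference_df2['source'].unique()}
    for _, row in preference_df2.iterrows():
        # Count a disagreement when the row's pair differs from the majority pair
        if (row['chosen'], row['rejected']) != df_majority[row['id']]:
            disagreements[row['source']] += 1
    # The source that disagrees with the majority most often
    detect_unreliable_source = max(disagreements, key=disagreements.get)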
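As a complement to the row loop, the sketch below computes a disagreement rate per source rather than a raw count. This variant is not from the slides. It assumes hypothetical data in which the 'Marketing Professional' always disagrees, and the names `majority_pairs`, `disagrees`, and `disagreement_rate` are introduced here for illustration.

```python
from collections import Counter

import pandas as pd


def majority_vote(df):
    # Most frequent (chosen, rejected) pair within one id group.
    votes = Counter(zip(df["chosen"], df["rejected"]))
    return max(votes, key=votes.get)


# Hypothetical data: one source disagrees with the other two on every id.
preference_df2 = pd.DataFrame({
    "id": [1, 1, 1, 2, 2, 2, 3, 3, 3],
    "source": [
        "Journalist", "Social Media Influencer", "Marketing Professional",
    ] * 3,
    "chosen": ["a", "a", "b", "c", "c", "d", "e", "e", "f"],
    "rejected": ["b", "b", "a", "d", "d", "c", "f", "f", "e"],
})

# Majority pair per id.
majority_pairs = {
    prompt_id: majority_vote(group)
    for prompt_id, group in preference_df2.groupby("id")
}

# Flag each row whose pair differs from the majority pair for its id.
row_pairs = zip(preference_df2["chosen"], preference_df2["rejected"])
disagrees = pd.Series(
    [pair != majority_pairs[prompt_id]
     for pair, prompt_id in zip(row_pairs, preference_df2["id"])],
    index=preference_df2.index,
)

# Share of rows per source that disagree with the majority.
disagreement_rate = disagrees.groupby(preference_df2["source"]).mean()
most_disagreeing_source = disagreement_rate.idxmax()

print(disagreement_rate)
print(most_disagreeing_source)
```

A rate is easier to compare than a count when sources have labeled different numbers of items. Frequent disagreement with the majority is only a signal that a source may be unreliable, so it is worth inspecting the flagged rows before dropping anything.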

Let's practice!
