From dataset to detection model

Fraud Detection in R

Sebastiaan Höppner

PhD researcher in Data Science at KU Leuven

Roadmap

- (1) Divide dataset into training set and test set

- (2) Choose a machine learning model

- (3) Apply SMOTE on training set to balance the class distribution

- (4) Train model on re-balanced training set

- (5) Test performance on (original) test set

Divide dataset into training & test set

- Split the dataset into a training set and a test set (e.g. 50/50, 75/25, ...)

- Make sure that both sets have identical class distribution (at first)

- Example: 50% training set and 50% test set

prop.table(table(train$Class))

0 1

0.98 0.02

prop.table(table(test$Class))

0 1

0.98 0.02
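A split that keeps the class distribution identical in both sets can be sketched in base R by sampling within each class separately. The data frame `toy` and the names `toy_train` and `toy_test` are hypothetical stand-ins, not the course dataset; this is one possible approach, not necessarily how `train` and `test` were created for these slides.

```r
set.seed(1)

# Hypothetical toy data: 1000 cases, 2% fraud (Class = 1)
toy = data.frame(Amount = round(runif(1000, 1, 500), 2),
                 Class  = factor(c(rep(0, 980), rep(1, 20))))

# Row indices grouped by class
idx_by_class = split(seq_len(nrow(toy)), toy$Class)

# Draw 50% of the rows within each class (stratified sampling)
train_idx = unlist(lapply(idx_by_class, function(idx) {
  idx[sample.int(length(idx), size = round(0.5 * length(idx)))]
}))

toy_train = toy[train_idx, ]
toy_test  = toy[-train_idx, ]

# Both sets keep the original 98% / 2% class distribution
prop.table(table(toy_train$Class))
prop.table(table(toy_test$Class))
```

Sampling per class avoids the situation where a purely random split leaves one set with noticeably fewer fraud cases than the other.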

Choose & train machine learning model

- Decision tree, artificial neural network, support vector machines, logistic regression, random forest, Naive Bayes, k-Nearest Neighbors, ...

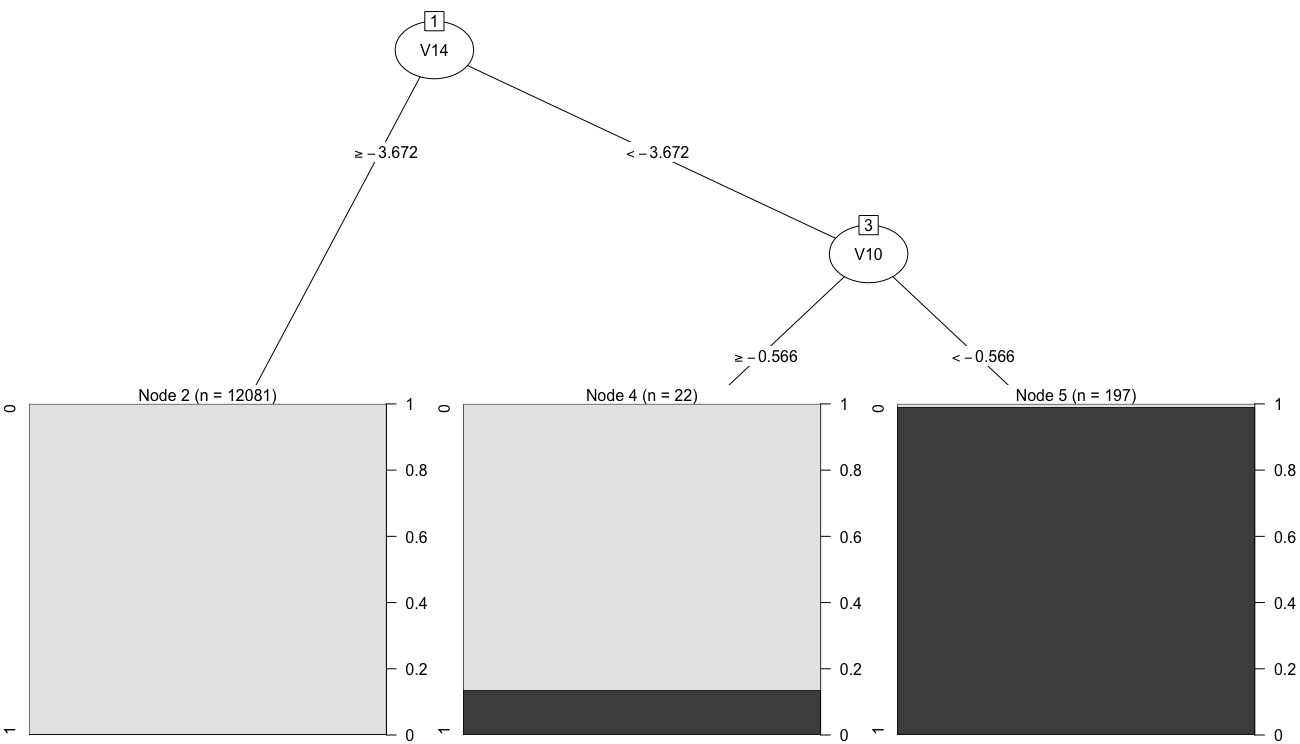

- Example: Classification And Regression Tree (CART) algorithm

- Function rpart in the rpart package

library(rpart)

model1 = rpart(Class ~ ., data = train)

library(partykit)

plot(as.party(model1))

## Predict fraud probability of test set
scores1 = predict(model1, newdata = test, type = "prob")[, 2]

## Predict class (fraud or not) of test set
predicted_class1 = factor(ifelse(scores1 > 0.5, 1, 0))

## Confusion matrix & accuracy
library(caret)
CM1 = confusionMatrix(data = predicted_class1, reference = test$Class)

          Reference
Prediction     0     1
         0 12046    55
         1     8   191

Accuracy : 0.994878

library(pROC)

auc(roc(response = test$Class, predictor = scores1)) ## Area Under ROC Curve (AUC)

Area under the ROC curve: 0.8938
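To make the AUC less of a black box: it equals the probability that a randomly chosen fraud case receives a higher score than a randomly chosen legitimate case. The sketch below computes it in base R with the rank-sum (Mann-Whitney) identity. The function `auc_by_rank` and the toy vectors are hypothetical illustrations and are not part of pROC.

```r
# AUC via the rank-sum (Mann-Whitney) identity; tied scores get average ranks
auc_by_rank = function(scores, labels) {
  labels = as.numeric(as.character(labels))   # accept factor or numeric 0/1
  n_pos = sum(labels == 1)                    # number of fraud cases
  n_neg = sum(labels == 0)                    # number of legitimate cases
  r = rank(scores)
  (sum(r[labels == 1]) - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)
}

# Hypothetical toy scores: one fraud case (0.35) scores below a legitimate case (0.40)
toy_scores = c(0.10, 0.40, 0.35, 0.80)
toy_labels = c(0, 0, 1, 1)

toy_auc = auc_by_rank(toy_scores, toy_labels)
toy_auc   # 0.75: 3 of the 4 fraud/legitimate pairs are ordered correctly
```

Because the AUC depends only on how the scores are ordered, it does not change with the 0.5 threshold used for the confusion matrix.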

Apply SMOTE on training set

library(smotefamily)
set.seed(123)

smote_result = SMOTE(X = train[, -17], target = train$Class, K = 5, dup_size = 10)
train_oversampled = smote_result$data
colnames(train_oversampled)[17] = "Class"

prop.table(table(train_oversampled$Class))

0 1

0.8166667 0.1833333
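The new class distribution can be checked by hand. The sketch below assumes that `dup_size = 10` adds ten synthetic cases per original fraud case while leaving the legitimate cases untouched, which is consistent with the proportions printed above. The variable names are illustrative only.

```r
p_fraud  = 0.02   # fraud share in the original training set (from the slide)
dup_size = 10     # synthetic cases generated per original fraud case (assumption)

fraud_after = p_fraud * (1 + dup_size)   # originals plus synthetic cases
legit_after = 1 - p_fraud                # majority class is not resampled

share_fraud_after = fraud_after / (fraud_after + legit_after)
share_fraud_after   # 0.22 / 1.20, about 0.1833
```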

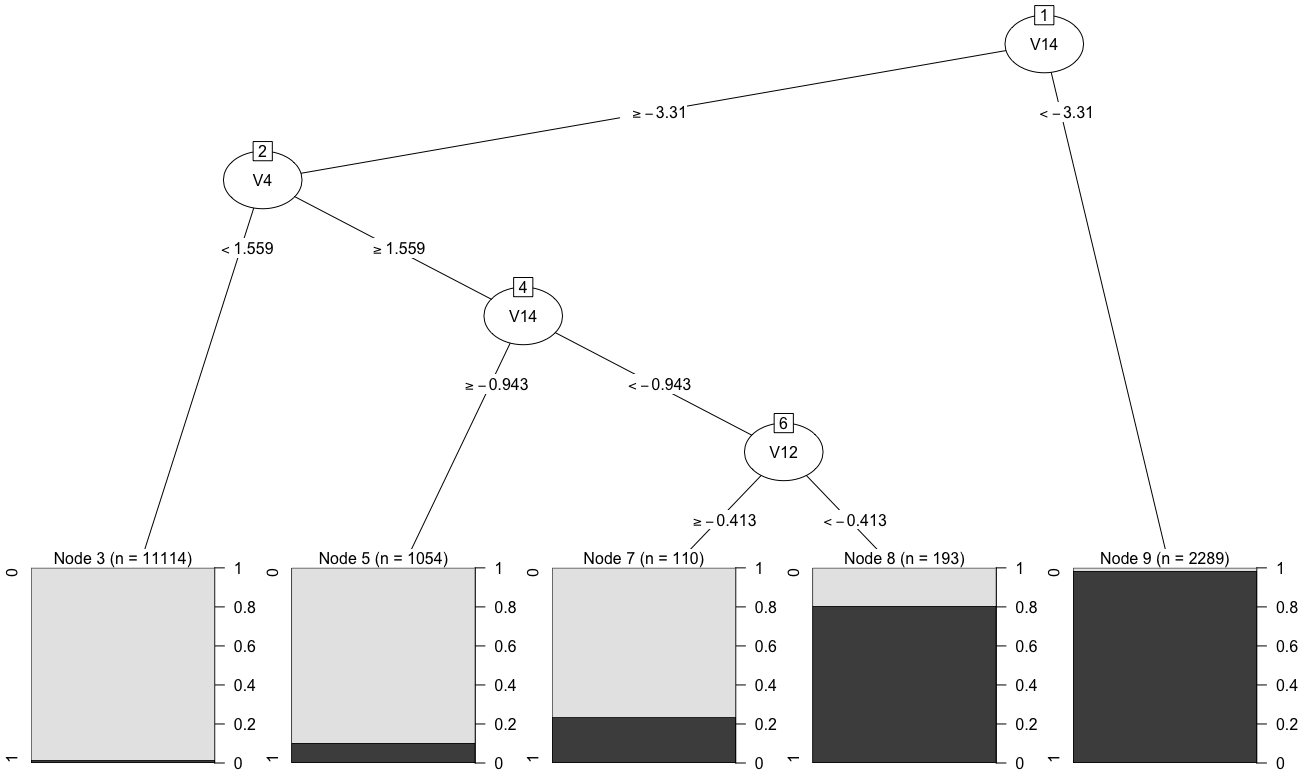
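What SMOTE does for a single fraud case can be sketched in a few lines: pick one of its K nearest fraud neighbours at random and place a synthetic case somewhere on the line segment between the two. The function `smote_one` and the matrix `fraud` are hypothetical and only illustrate the idea; they are not the smotefamily implementation.

```r
set.seed(123)

# Hypothetical fraud cases described by two numeric features
fraud = matrix(c(1.0, 2.0,
                 1.5, 2.5,
                 3.0, 1.0,
                 2.0, 2.0), ncol = 2, byrow = TRUE)

smote_one = function(X, i, K = 2) {
  # Euclidean distance from case i to every fraud case
  centre = matrix(X[i, ], nrow(X), ncol(X), byrow = TRUE)
  d = sqrt(rowSums((X - centre)^2))
  d[i] = Inf                            # exclude the case itself
  neighbours = order(d)[seq_len(K)]     # indices of the K nearest neighbours
  j = neighbours[sample.int(K, 1)]      # choose one neighbour at random
  u = runif(1)                          # random position on the segment
  X[i, ] + u * (X[j, ] - X[i, ])        # interpolated synthetic case
}

synthetic = smote_one(fraud, i = 1)
synthetic
```

Repeating this step for every fraud case, several times per case, produces the oversampled minority class.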

library(rpart)

model2 = rpart(Class ~ ., data = train_oversampled)

## Predict fraud probability of test set
scores2 = predict(model2, newdata = test, type = "prob")[, 2]

## Predict class (fraud or not) of test set
predicted_class2 = factor(ifelse(scores2 > 0.5, 1, 0))

## Confusion matrix & accuracy
library(caret)
CM2 = confusionMatrix(data = predicted_class2, reference = test$Class)

          Reference
Prediction     0     1
         0 11967    34
         1    87   212

Accuracy : 0.9901626

library(pROC)

auc(roc(response = test$Class, predictor = scores2)) ## Area Under ROC Curve (AUC)

Area under the curve: 0.9538
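Accuracy alone hides what changed between the two models. As a rough check, the counts from the two confusion matrices above can be turned into recall and precision. The data frame `counts` is just a convenient container for this illustration.

```r
# Confusion-matrix counts copied from the two outputs above
# TP = fraud predicted as fraud, FN = missed fraud,
# FP = false alarm, TN = legitimate predicted as legitimate
counts = data.frame(model = c("CART", "CART + SMOTE"),
                    TP = c(191, 212), FN = c(55, 34),
                    FP = c(8, 87),    TN = c(12046, 11967))

n_cases = rowSums(counts[, c("TP", "FN", "FP", "TN")])

counts$accuracy  = (counts$TP + counts$TN) / n_cases
counts$recall    = counts$TP / (counts$TP + counts$FN)   # share of fraud cases caught
counts$precision = counts$TP / (counts$TP + counts$FP)   # share of alarms that are fraud

print(counts[, c("model", "accuracy", "recall", "precision")], digits = 3)
```

With these counts, recall rises from about 0.78 to about 0.86 while precision falls from about 0.96 to about 0.71, even though accuracy barely moves. On a test set with 98% legitimate cases, predicting "not fraud" for every case would already give an accuracy of 0.98.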

Cost of deploying a detection model

- Take into account the different costs of fraud detection during the evaluation of an algorithm

- Costs are associated with

- misclassification errors (false positives & false negatives) and

- correct classifications (true positives & true negatives)

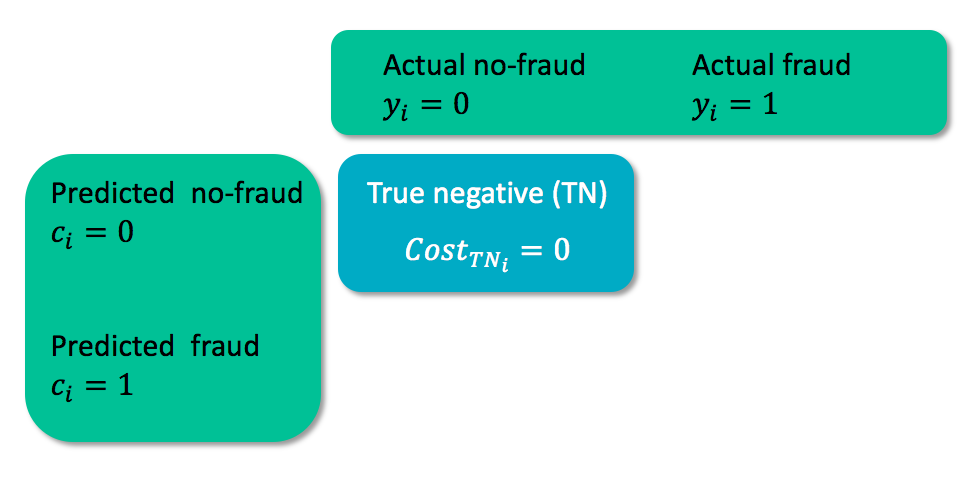

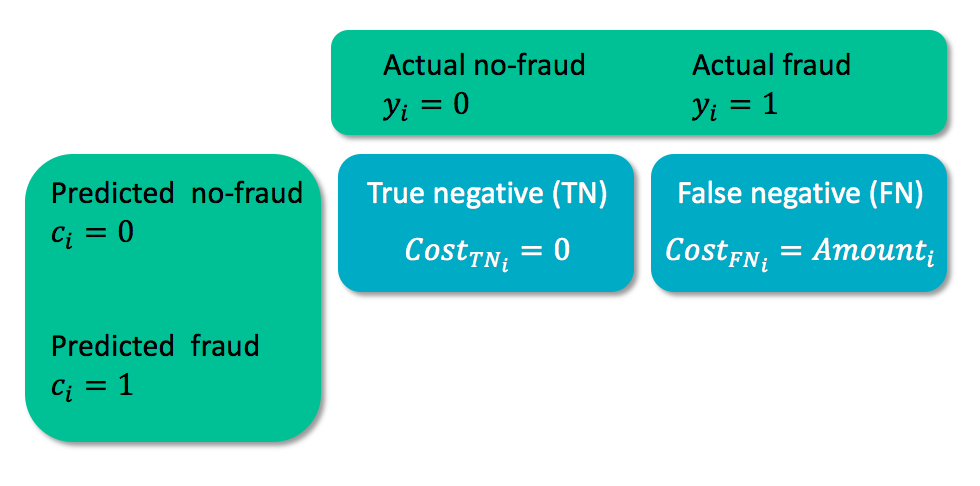

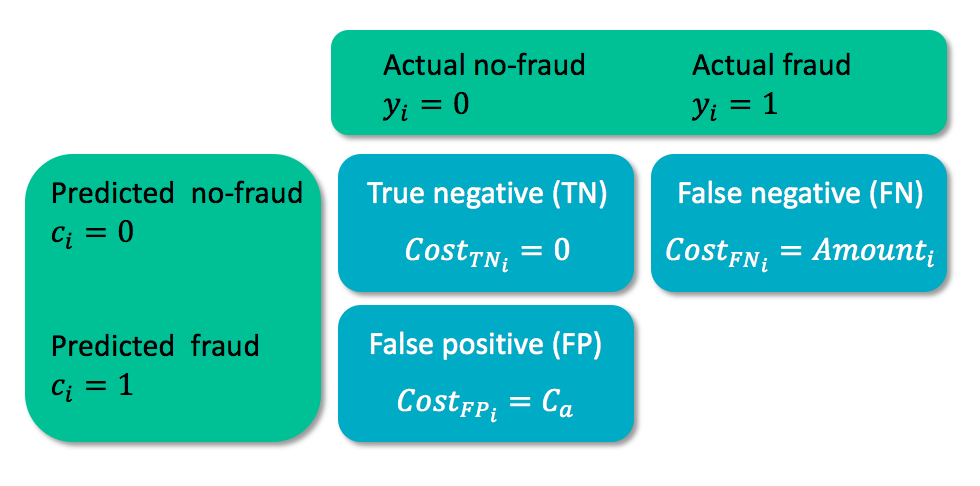

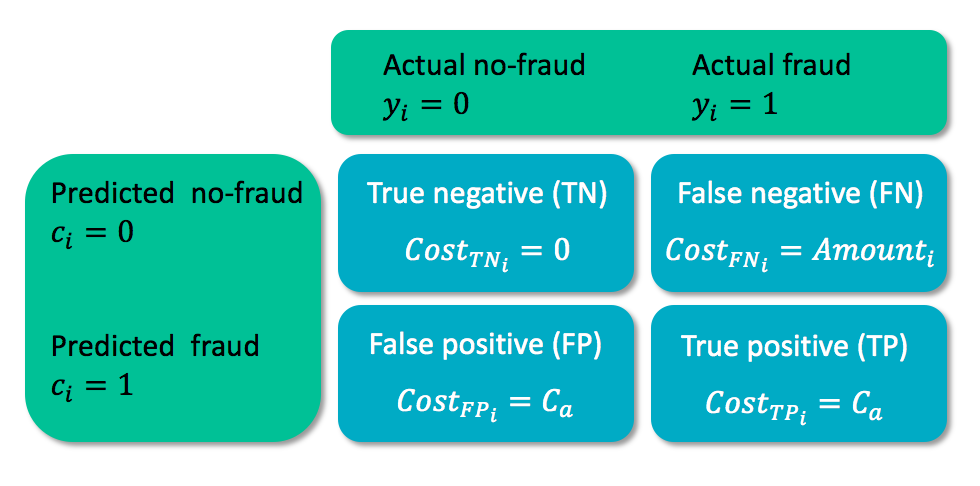

Cost matrix

- $y_i$ = true class of case $i$

- $c_i$ = predicted class for case $i$


- $C_a$ = cost for analyzing the case
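Reading the four possible outcomes off the cost measure $Cost(model)$ used in these slides gives the matrix below. It is a reconstruction from that formula, with $Amount_i$ the amount of case $i$ and class 1 meaning fraud, and may differ in layout from the original slide figure.

$$
\begin{array}{l|cc}
 & y_i = 0 \text{ (legitimate)} & y_i = 1 \text{ (fraud)} \\ \hline
c_i = 0 \text{ (predicted legitimate)} & 0 & Amount_i \\
c_i = 1 \text{ (predicted fraud)} & C_a & C_a
\end{array}
$$

Every flagged case costs $C_a$ because it has to be analyzed, whether or not it turns out to be fraud. A missed fraud case costs its full amount.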


Cost measure for a detection model

- Take into account the actual costs of each case:

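$$Cost(model)=\sum_{i=1}^{N}\left[y_i(1-c_i)\,Amount_i + c_i\,C_a\right]$$

- $y_i$ = true class of case $i$ (1 = fraud, 0 = not fraud)

- $c_i$ = predicted class for case $i$

- $Amount_i$ = amount of case $i$ (column Amount in the data)

- $C_a$ = cost for analyzing the case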

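cost_model = function(predicted.classes, true.classes, amounts, fixedcost) {
  ## Convert factors with levels "0"/"1" to numeric 0/1 before doing arithmetic
  predicted.classes = as.numeric(as.character(predicted.classes))
  true.classes = as.numeric(as.character(true.classes))
  ## Missed fraud costs its amount; every flagged case costs fixedcost
  cost = sum(true.classes * (1 - predicted.classes) * amounts +
               predicted.classes * fixedcost)
  return(cost)
}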
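A small worked example on four hypothetical cases shows how the cost measure adds up. The helper `cost_model_sketch` mirrors the formula above and, as an added assumption, converts factor inputs to 0/1 first, since arithmetic on R factors returns NA. All data values are made up for illustration.

```r
cost_model_sketch = function(predicted.classes, true.classes, amounts, fixedcost) {
  # as.character() first, so that factor levels "0"/"1" map to the numbers 0/1
  predicted = as.numeric(as.character(predicted.classes))
  truth     = as.numeric(as.character(true.classes))
  sum(truth * (1 - predicted) * amounts + predicted * fixedcost)
}

# Hypothetical toy cases
predicted = factor(c(1, 0, 0, 1))   # cases 1 and 4 are flagged as fraud
truth     = factor(c(1, 1, 0, 0))   # cases 1 and 2 are actually fraud
amounts   = c(100, 250, 40, 60)

# Case 1: caught fraud            -> 10 (analysis cost)
# Case 2: missed fraud            -> 250 (amount lost)
# Case 3: legitimate, not flagged -> 0
# Case 4: false alarm             -> 10 (analysis cost)
toy_cost = cost_model_sketch(predicted, truth, amounts, fixedcost = 10)
toy_cost   # 270
```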

True cost of fraud detection

## Total cost without using SMOTE:

cost_model(predicted_class1, test$Class, test$Amount, fixedcost = 10)

10061.8

## Total cost when using SMOTE:

cost_model(predicted_class2, test$Class, test$Amount, fixedcost = 10)

7431.93

- Using SMOTE decreases the total cost by about 26%!
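The 26% figure, and where the savings come from, can be reproduced from the numbers already shown in these slides. The split into analysis cost and missed-fraud amounts below follows from the cost measure (every flagged case costs `fixedcost`); the variable names are illustrative.

```r
cost_plain = 10061.8   # total cost without SMOTE (from the slide)
cost_smote = 7431.93   # total cost with SMOTE (from the slide)
fixedcost  = 10

relative_saving = (cost_plain - cost_smote) / cost_plain
relative_saving   # about 0.26

# Flagged cases = row "Prediction 1" of each confusion matrix
flagged_plain = 8 + 191
flagged_smote = 87 + 212

# Total cost = fixedcost * flagged cases + amounts of missed fraud
missed_plain = cost_plain - fixedcost * flagged_plain   # about 8071.8
missed_smote = cost_smote - fixedcost * flagged_smote   # about 4441.93
```

Under these numbers, the model trained on SMOTE data spends 1000 more on analyzing flagged cases but loses roughly 3630 less to missed fraud, which is where the net saving comes from.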

Let's practice!
